Good Robot has been on my mind lately. I keep coming back to the fact that I put a few months into it and didn’t finish it. But then I remember that on top of gameplay concerns I’ve also got a bunch of annoying technology problems to worry about and I lose my enthusiasm.

The core of the technology problems comes from [my usage of] GLSL – the OpenGL shader language. The rest of my code – talking to the filesystem, input devices, AI, and gameplay – is basically solid, but the shaders are a mess. On one machine robots would strobe, randomly rendering or not from one frame to the next. Another tester reported that walls didn’t render. Another had the robots and walls render fine, but powerups were invisible. Another person had strange slowdowns that should not have been happening on their given hardware.

The center of the problem is that I’m not very knowledgeable regarding GLSL. I’ve only gotten around to messing with it in the last couple of years, and I’ve only learned enough to accomplish the few simple things I need to do. This leaves a great big blind spot in my knowledge where problems can hide. It creates situations where I can do something the wrong way and have it work on my machine, and malfunction a dozen different ways elsewhere.

But to correct this problem I have to deal with another one: The GLSL resources are an absolute mess. The language has undergone sweeping changes at least twice since its inception. Programs that were once valid are now wrong, which means nearly all of the basic “hello world” tutorials are now broken to the point where they won’t even compile. The docs are filled with side-notes about what’s “new” in a version people stopped using five years ago. The simple stuff is out of date, and the advanced stuff is written with the assumption that you already know what you’re doing and you just need a few pointers on how things have changed. It’s amazing how many pages of documentation fail to explain how things actually work, but instead explain how things differ from how they used to work. It’s like giving someone directions based on scenery that no longer exists. “Drive until you get to the place that used to be a drugstore, turn left onto old main street, and turn left when you see the empty lot where the recycling center used to be.” You’ll probably be able to find the place eventually, but those layers of ambiguity and uncertainty are a major hindrance.

Further exacerbating the problem is that the updated and proper way of doing things is a lot more complex. The entire OpenGL matrix stack has been deprecated. In plain language, this means that the built-in systems for managing tables of numbers and performing the spatial transformations needed to take 3D scenery and draw it on a 2D screen are no longer supposed to be used. You’re supposed to write all of those systems yourself. Now, there are a lot of good reasons for that, but it’s also a massive undertaking when you’re just trying to get a handle on the basics. I’m sure there are open-source tools to do the job, but integrating those is a pain and it adds huge levels of complexity to what should otherwise be a simple and straightforward learning exercise. (I already have my own matrix code, although it’s not nearly as well-developed as it could be.) When you get a blank screen you have to wonder: “Am I still not doing this shader properly, or am I mis-applying this new matrix system?” You can fall back to using the old matrix stack, but only if you find the directive to do so and only if you can intuit how it works. (It’s not well documented and I have yet to see it appear in example code.)
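To give a sense of what "write all of those systems yourself" involves, here is a minimal sketch of the smallest useful slice of a hand-rolled matrix system. This is not the matrix code mentioned above; the names `Mat4`, `identity`, `multiply`, `translation`, and `perspective` are made up for illustration, and the perspective formula is the standard one that `gluPerspective` is documented to produce.

```cpp
#include <array>
#include <cmath>

// Column-major 4x4 matrix: element (row, col) lives at index col * 4 + row.
// This is the layout OpenGL reads when a matrix is uploaded with
// glUniformMatrix4fv and the transpose argument set to GL_FALSE.
using Mat4 = std::array<float, 16>;

Mat4 identity() {
    Mat4 m{};  // value-initialized, so every element starts at zero
    m[0] = m[5] = m[10] = m[15] = 1.0f;
    return m;
}

// Returns a * b. Applied to a vector, b takes effect first, then a.
Mat4 multiply(const Mat4& a, const Mat4& b) {
    Mat4 r{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                r[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
    return r;
}

// Stand-in for the old glTranslatef call.
Mat4 translation(float x, float y, float z) {
    Mat4 m = identity();
    m[12] = x;
    m[13] = y;
    m[14] = z;
    return m;
}

// Stand-in for gluPerspective; the field of view is in radians here.
Mat4 perspective(float fovY, float aspect, float zNear, float zFar) {
    const float f = 1.0f / std::tan(fovY / 2.0f);
    Mat4 m{};
    m[0] = f / aspect;
    m[5] = f;
    m[10] = (zFar + zNear) / (zNear - zFar);
    m[11] = -1.0f;
    m[14] = (2.0f * zFar * zNear) / (zNear - zFar);
    return m;
}
```

A typical use would be `multiply(perspective(...), translation(...))`, with the result handed to the vertex shader as a uniform. Even this much leaves out rotation, inversion, and a look-at helper, which is part of why the jump feels so steep.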

If you post some short shader code and ask for help you’re likely to get immediate dismissal: “The old matrix stack is deprecated! Don’t use it!” Which is like asking someone why your internal combustion engine doesn’t work and having them give you a hard time because it doesn’t have a gearbox, a catalytic converter, or a starter motor.

As if this wasn’t enough of an adventure, the OpenGL pages are somewhat capricious. In the process of working on this project the official docs would vanish for hours or days. You can download the docs as a PDF, but PDFs generally suck and Google can’t help you find what you’re looking for if they’re on your hard drive. And of course snarky RTFM forum posts all become that much less helpful as fallback documentation, since now they’re just insults with dead links.

Basically: Learning GLSL is a tough hill to climb, it keeps getting steeper, and the topography keeps changing without anyone updating the maps. The people who reached the top years ago don’t really have a concept of how convoluted the road has gotten and so they often give unhelpful advice.

But maybe I’ll have an easier time getting back to Good Robot if I force myself to do something sophisticated with shaders. Right now in Good Robot I’m just using them for simple stuff: I’m drawing basic squares and using the GPU (the graphics card, basically) to rotate them, scale them, and adjust the texture mapping so they draw from the proper section of the sprite sheet. That’s silly easy. My hope is that by doing a bunch of stuff that’s far more complex, I’ll actually get some depth to my GLSL knowledge and be able to spot the mistakes I’m making in Good Robot.
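For a sense of how simple the sprite-sheet part is, here is a rough sketch of the texture-coordinate arithmetic, written as plain C++ rather than shader code. It assumes the sheet is a uniform grid of equally sized cells, which may not match the real Good Robot sheet; `UvRect` and `spriteUv` are hypothetical names.

```cpp
// Texture-coordinate rectangle for one sprite, in the 0..1 range.
struct UvRect {
    float u0, v0, u1, v1;
};

// Hypothetical helper: the sheet is assumed to be split into
// columns x rows equal cells, with index counting left to right and
// then row by row, starting from the first row of the image data.
UvRect spriteUv(int index, int columns, int rows) {
    const int col = index % columns;
    const int row = index / columns;
    const float w = 1.0f / static_cast<float>(columns);
    const float h = 1.0f / static_cast<float>(rows);
    return {col * w, row * h, (col + 1) * w, (row + 1) * h};
}
```

Whether row 0 ends up at the top or the bottom of the sheet depends on how the image was loaded, so the `v` values may need flipping. The same arithmetic can live in a vertex shader, with the sprite index passed in per sprite.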

Goals:

- Aside from the shader work, I want to be really comfortable with what I’m doing. Which means doing something I’ve done before. Which means heightmap-based terrain. That’s easy, it’s familiar, and it looks interesting. (Enough.)

- I want to do something that lets me dump a bunch of difficult processing onto the graphics card. Since we’re working with terrain, I figure erosion is a good choice. I’ll make some real-time erosion code and have it run on the GPU.

- I want to explore how difficult it is to support Oculus Rift levels of performance. Most games run at 30fps. If the scene gets crowded or if the player stumbles into some scripted event, it’s generally acceptable (or tolerated) if the framerate dips down to 20 or so for a few seconds. But if you’re programming for VR, you need incredible performance. You need 60 frames per second. Worse, you need to render everything twice – once for each eye. If that’s not hard enough, it’s extremely uncomfortable to miss frames, so you can’t ever dip below 60fps unless you want to risk making people sick. So now the program has to render twice as much, twice as fast, with no margin for error.

I can’t afford (or justify) getting a Rift dev kit right now, but it should be simple to set up a test to see if my program can keep up with the Rift performance demands.

- I’m going to stop being so stubborn and just use the same dang coordinate system everyone else uses. More on this in another post.

So those are our goals. I’m calling this “Frontier Rebooted” just because I’m re-hashing a lot of the ideas from Project Frontier, although the code is going to be all new. We’re going to have hills and water and grass and trees like before, but they’ll all be made using new techniques with shaders.

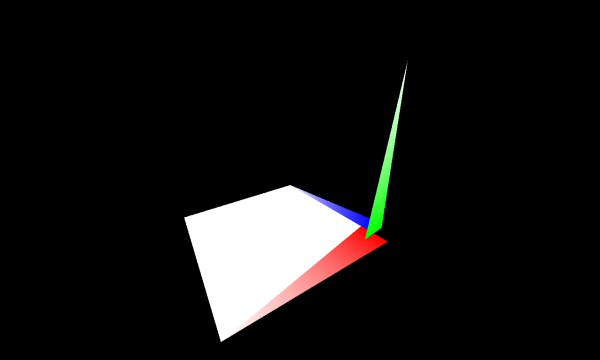

Step one: Grab the latest version of my various 3D tools (which come from the Good Robot codebase) and drop them into a new project. I set up an empty scene with a one-meter panel drawn at the origin. Just to make sure I’ve got the axes all pointing the right way, I build a literal axis arrow at the world origin. Red is X, green is Y, blue is Z.


Was that worth reading 1,500 words? I hope it was worth reading 1,500 words to see that. Next time we’ll actually accomplish something.


I’ve been going through this same process recently, with having to learn OpenGL in general on top of it (And learning OpenGL is even worse than GLSL- it has all the same problems of outdated stuff on the web, plus every tutorial uses some third party library to do some of the work, making starting from the very basics really hard).

From the sounds of it, you’re moving from using the fixed-pipeline style of rendering to the modern shader-based one. It definitely does put a lot more work on the programmer, in exchange for a ton more flexibility.

I too have been learning OpenGL and GLSL recently and given how easy it is to encounter old material on the internet I did run into a lot of the old fixed function pipeline stuff. This might depend on if you knew it before hand but I found it more confusing than doing it the more modern way. Doing it all yourself might not be easier, but I found it simpler.

I’m currently in a Computer Graphics class. My teacher basically limited us to using OpenGL 2.0 (a very outdated version) for this very reason. Going much above that would simply take too much time to learn how to use properly.

I gave up making 3d games entirely for these reasons. It made me feel like an idiot trying to figure this stuff out, discounted myself as stupid, and I went back to not making anything with code.

At least I know I’m not alone, now.

“I’m not smart enough” or “I’m stupid” is a bad reason to ever give up on anything. Everything is difficult, until it is easy.

“I could spend my time more effectively doing something else” is a good one.

“I could spend my time more effectively doing something else” can be a very low bar when the task at hand is sufficiently frustrating.

For example, if your honest expectation is that pressing further on graphics programming is going to be a painful slog that never results in you actually building anything that works, you might decide that it’s more rewarding (even in the ‘long term’) to spend that time tickling your pleasure centre by ingesting some nice empty calories.

True, but I leave that particular calculation up to the individual to determine.

At least it’s less discouraging than “I’m not smart enough.” I taught a middle-school dropout with poor math skills how to convert between binary, decimal, and hexadecimal in 2 hours.

And your point is?

I have had some wonderful times ingesting empty calories. Mmmm, chocolate.

Maybe so, but there are few things more discouraging than being unable to prove to yourself that you’re capable of doing some mental task. I now know that I’m not the problem; the problem is that the information base is held together with toothpicks and gum (not duct tape, that would actually have some substance).

But yes, eventually I felt like I was wasting my time because I have artistic obligations, and there are easier ways to make games, so long as those ways aren’t intended to result in a game with 3D graphics, and also not involving using OpenGL to make a 2D game.

I’m not gonna sit here and argue that it’s impossible. That’d be blind to the fact that there are those who do accomplish things with these systems. But the barrier for entry is obscene, it seems. It might be easier to learn how to play dwarf fortress over the phone.

I can’t help but wonder, from a business standpoint, how many work hours are lost just trying to get the misshapen puzzle pieces to fit together.

Well, if your inclinations lean more towards artistic talent than staring at endless lines of text, that’s still useful. Engineers tend to make terrible artists.

From what Shamus has said, I’d have to agree that the barriers to entry are rather obscene. It may provide more performance at the end of the day, but at what cost? The result is a system that you can only really learn if you’re a maniac who isn’t willing to ever admit defeat, which is just another reason why so many programmers are crazy.

That’s not efficient, that’s just elitist.

If you still want to learn the programming side, I would encourage you not to give up. For anything. If you think it’s just not worth the effort… well, nothing to be ashamed of. Emphasize the things you’re good at. If you can’t think of anything you’re good at, find something you want to be good at and practice it until you are.

It’s actually entirely worth it once you learn how. And it doesn’t take *that* long in the grand scheme of things- I learned it to a functional level in maybe 2-3 weeks.

It’s just incredibly frustrating because so often you find yourself in a place where making *any* progress is painfully hard because you’re trying to cobble together some sort of guide out of dozens of different sources on the internet. You can spend hours feeling like you’ve accomplished absolutely nothing at all, which makes it really hard to soldier on and continue.

Once you get it working, though, there’s no comparison to fixed-pipeline stuff. It’s not just better performance-wise; the fixed pipeline was done away with because it was too limiting. Shaders give you way more options.

Just because I’m an artist doesn’t mean I’m not also good at logical deduction. I programmed a working JPS algorithm, at least. :P

And I’m an Engineer who also has a lot of artistic talent.

There’s always 2D games. With technology these days and the massive amounts of memory, I have always wanted to create a huge 2D world, something procedurally generated to explore, perhaps using isometric graphics.

Diablo 2 was a great example of 2D graphics in a large environment. If you look closely, you will see the game doesn’t have any hills at all, it’s all flat terrain, yet it is so nicely done, you never really notice.

There’s plenty to do out there. Personally, I think 3D is overdone sometimes, or maybe it’s just the same old games, oh look, another WW2 shooter… I haven’t seen that for a while! ;)

Also, with all the handheld devices around these days, it has breathed new life into 2D games which has been quite refreshing to see.

With that said, I do like Frontier and am happy to see something on here again as I love the idea of this, and exploring it. I would like to see some ruins randomly placed in it, maybe some old roads etc… something to explore. I love exploring, not really much of a killer. ;)

Ugh, yes, I have the very same problem in one of my FLOSS projects, xoreos (a reimplementation of the BioWare 3D games; think ScummVM for Neverwinter Nights and later).

In short, my OpenGL knowledge is stuck somewhere in OpenGL 1.2, and that too only from old tutorials. The code I wrote works…somewhat. It’s slow, missing a lot of features, and my 3D engine code in general is a mess. I tried learning the recent OpenGL API, only to be bogged down in conflicting information. What I have gleaned, and some infodumps by nice individuals with knowledge, just taught me how much I don’t know. I basically gave up on trying to learn all that.

I have tried cramming the Ogre3D engine into it, with middling success. Again, it kinda works, but I have to work around several Ogre3D peculiarities, and I lack the knowledge to judge whether I made the right choices. In fact, I know I didn’t, because a screen full of text drops the FPS into single digits.

Right now, I’m kinda hoping I’ll find a person willing to work with me on xoreos, a person with OpenGL knowledge and some time to spare. Hope dies last, and all that.

EDIT: Okay, two links is already enough to trigger the spam moderation queue? :)

My approach has been to create a Renderer class that contains all of the actual OpenGL calls. It helps keep the messy OpenGL stuff in one place, while everything else in the engine can have the interfaces I want them to have. Every renderable object is basically just sent to the Renderer in an array each frame.

That’s what I did, too. Built a draw manager that was able to organize everything to suit all the features I wanted, then every frame, I send it any updated objects, and say “Update, draw.”
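The pattern described in the two comments above could be sketched roughly as follows. This is only an illustration of the idea, not either commenter's code: `Renderable`, `Renderer`, `submit`, and `drawFrame` are hypothetical names, and the actual OpenGL calls are left as a stub so the sketch stays self-contained.

```cpp
#include <cstddef>
#include <vector>

// Everything the rest of the engine needs to say about one drawable
// thing. The fields are placeholders chosen for this sketch.
struct Renderable {
    unsigned meshId;
    unsigned textureId;
    float x, y, z;
};

// The one class that would be allowed to talk to OpenGL.
class Renderer {
public:
    // Called by game code during the frame; no GL work happens here.
    void submit(const Renderable& item) { queue_.push_back(item); }

    // Called once per frame: draws everything queued, then empties the
    // queue. Returns how many items were drawn.
    std::size_t drawFrame() {
        std::size_t drawn = 0;
        for (const Renderable& item : queue_) {
            drawOne(item);
            ++drawn;
        }
        queue_.clear();
        return drawn;
    }

private:
    // In a real engine the bind and draw calls would live here.
    void drawOne(const Renderable&) {}

    std::vector<Renderable> queue_;
};
```

Because all submissions pass through one queue, the renderer is also a natural place to sort by shader or texture before drawing.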

It’s a much more rewarding system when you get a handle on it – allowing you to do fancier things like deferred shading to allow practically unlimited lights to render accurately.

I followed this tutorial a few months ago and it really helped to get to know everything and seems to be up to date.

I hadn’t seen that one before, it seems reasonable – but skip the first three tutorials!

They’re fixed-function pipeline, and won’t actually work on a lot of hardware as the fixed-function is only guaranteed to exist in OpenGL 2.x and older contexts.

– They made me think the whole thing was deprecated, but it quickly jumps up to OpenGL 3.3.

It is also very important to specify the exact OpenGL context you want – the various underlying toolkits offer ways to specify this, and you really must do as otherwise you get whatever’s default.

(And most tutorials don’t say how. Which sucks.)

– Minor hint, nVidia may be slightly slower in pure Core Profile, so develop in Core, release in Compatibility.

Yes, the OpenGL tutorials are awful, and most of the tutorials that pop up at the top of Google are deprecated!

The best tutorial site I’ve found so far is this one:

http://www.arcsynthesis.org/gltut/

My recommendation is to target pure OpenGL 3.3 Core Profile.

If you stick to the core profile and don’t use any extensions or anything marked as deprecated, it should run correctly on all hardware and reasonable drivers from the last few years.

Extensions are where the trouble starts, and mixing shaders with fixed-function (eg OpenGL 1.1) is asking for trouble. (OSX is particularly hairy, and has broken almost every time Apple released an update.)

You’ll need OpenGL 3.2 or newer if you want to do geometry shaders, which are a wonderful way to do sprites and procedural geometry, as you can offload almost everything to the GPU.

I’ve had a lot of fun with these!

I agree with all of the above, apart from the fact that I really should learn geometry shaders but am too thick to do so.

It’s fairly easy to get your hands on a set of matrix classes these days – I stuck the one I use together from bits of online tutorials and my own diseased imaginings, but I’m pretty sure one was included with, for example, the OpenGL Superbible.

Shamus, maybe you’d get more useful advice on GLSL if you posted about your particular problems here; I have personally found it easier to deal with GLSL’s quirks because it does at least do vector and matrix operations natively very much in the way that C doesn’t. However it’s often not clear from your column exactly which operations you’re having trouble with, and a basic lighting model isn’t more than a few lines in GLSL so between your commenters it should be pretty easy to get you moving in the right direction!

I don’t know anything about GLSL, OpenGL or shaders at all, and I have no intention of using them.

But it was absolutely worth reading 1500 words, because it’s still an interesting topic. Thanks.

I always love reading your programming posts, Shamus. Now, I ain’t a programmer, nor do I play one on TV, but it is still a lot of fun to be a spectator in watching you build something.

Now, what is meant by ‘deprecated’ in this context? I can guess a lot by context, but I’d like to know more precisely.

It means old and shouldn’t be used, but hasn’t been removed as not to break old code.

OK, that’s basically what I thought.

Still, that feels like handing a new mechanic at a shop a tool box and pointing to some of the tools and saying ‘Don’t use those tools, we only use those when fixing older cars.’

I think a better analogy is if you’re building a car, and you have a choice between part A and part B. While you can use part A, it’s no longer manufactured, so you won’t be able to replace it if it breaks.

It’s still not a perfect analogy, but it’s closer.

I would flip the analogy around – a deprecated car part is not used in new vehicles, but is still made for spares in older ones.

To modify your analogy, I think it’s more like saying “here’s your tools, these ones aren’t that useful any more but we keep them around because they’re required to work on older machines.”

It wouldn’t surprise me if it CAN be like that at some mechanic shops, though I can’t say. I know almost as much about cars as I do about programming.

Me too! – everything I know about motoring I learnt from reading car analogies on Twenty Sided… XD

Anyway, this post talks a little bit more (if only a little bit!) about deprecation:

http://www.shamusyoung.com/twentysidedtale/?p=20921

Or maybe it’s like how thousands of businesses have computers still running xp, 98 or hell, even MS-DOS just in case they need to use a piece of ancient hardware or software that refuses to talk to anything newer?

When a function is deprecated, that basically is warning you that it will eventually be removed entirely and no longer be available. It can also mean something better exists to do the same task. They leave the function in to give you a chance to learn the new way to do things while still having the old one available.

You can basically count on a deprecated function eventually no longer being available. It gives you some time to adjust anyhow.

Small typo in the paragraph before “Step one”: “We're going to have jills and water and grass and trees like before…”

As far as getting help, have you tried StackExchange? I don’t know if they have a community for OpenGL/GLSL, or how good it is if it exists, but it’s been a great resource for any math or programming topic I’ve needed help on.

The GameDev portion of Stack Exchange might be a good place to start. Especially since they’re less strict about the “good” questions rule than StackOverflow is. Like, you’ll get more questions which are less focused, but you’ll also get answers which are genuinely useful for people trying to figure stuff out. SO often has questions locked as “not useful”, or “too broad” or whatever, but which are exactly the same thing I was about to ask… ^^;

I had the same thought. Any time I run into a poorly documented language, feature set, API, or whatever, StackExchange/StackOverflow is where I look first. (Well, usually I google it and look for the stack exchange links, since google does a better job finding stuff)

This is almost always the most effective way forward, since the site is populated by people who are both

a) very knowledgeable in whatever niche you might need,

b) motivated to provide helpful advice, because helpful advice is what gets modded up and gives you “points”

Here’s a link to the top voted questions tagged GLSL in stackoverflow, one could probably learn a ton by just browsing through these questions and becoming familiar with the answers:

http://stackoverflow.com/questions/tagged/glsl?sort=votes&pageSize=15

I read all 1500 words with a tinge of guilt, and I don’t think I ever apologised for breaking everything, so let me officially apologise for breaking everything.

And if it turns out the problem is because I’m using three-year-old drivers, you have permission to slap me.

(Seriously, though, even though I haven’t done programming since leaving school, I still love these posts of yours. A lot of your complaints about documentation apply to the support/server side of things too.)

Documentation sucks for pretty much anything more complicated than a toaster. Software, hardware, textbooks…everything. :S

Relevant: http://xkcd.com/1343/

Making tools that accomplish a task in an obvious way is difficult. Documenting the in-obvious ways in which tasks can be accomplished with poorly designed tools is equally difficult.

It really comes down to how much effort you’re willing to invest up-front, and how much you’re willing to offload onto the user-base. If the tools are only going to be used by a few specialists, it might make sense to document them poorly and simply pass the knowledge along as needed, or require that the experts derive usage from first principles. Ideally, of course, you’d have elegant tools AND thorough documentation… but that gets really expensive. When you’re paying “free on the internet” it’s hard to take complaints about quality of both tools and documentation very seriously.

The paid tools I’ve used have had documentation that was barely better than the free stuff on the internet. (Many free projects are actually better.) So, where’s all that money going? XD

I totally agree that purchased goods carry an expectation of comprehensibility. That so many free tools are better than professional ones is a reversal for sure (and a happy one at that). I just think it’s a bit unfair to hold free tools to the same standards that we expect from ones for which the developers are being compensated.

My one complaint with the article: I wanted more words! :O I have really really missed your programming posts. So glad they are coming back!

Seconding! In a way, programming stories are like the ancient Heinlein mode of science fiction adventure: weird and unexpected problems, overcome by daring heroes with knowledge and ingenuity. :)

For a few years I’d been trying to learn OpenGL without success. What finally made modern OpenGL and GLSL actually click for me last year was restricting myself to OpenGL ES (for WebGL). It allowed me to focus on a much, much smaller API, a simpler shader language, and very little historical cruft. I could do searches for “OpenGL ES” specifically and get better results. I also found the WebGL specification to be a good, quick reference: except for GLSL, everything I needed was on that one page. There are also a couple of good OpenGL ES books out there.

I got an error here: “This page has moved”

So https://www.khronos.org/registry/webgl/specs/latest/

is not valid…

http://www.khronos.org/registry/webgl/specs/latest/1.0/

should be used instead.

And do note that the url is not the same as http://www.khronos.org/registry/webgl/specs/1.0/

Which has a higher revision but is actually an older standard (how the hell did they mess up that numbering this way?).

The truly great thing about standards is how badly documented they are.

I linked “/registry/webgl/specs/latest/” because I assumed that would always redirect to the latest version. Somehow I’m not surprised to see Khronos screw this up.

They’ve been a tad messy over the years yeah. The redirect works but threw an error/message here.

A better url would be http://www.khronos.org/registry/webgl

and then just click the “WebGL current draft specification” link.

The extension registry is also linked from this page.

Yes, this; so very much this. :-)

Focusing on webgl, which outright prevents the use of any of the older deprecated stuff because it’s built on ES, and also outright prevents the use of any extension unless you explicitly ask for it, turned out to be amazingly helpful when trying to port the original Frontier to a browser. (Not that I’ve gotten very far. But trees render, at least, and it’s doing order-independent transparency! Which I think I’ve talked about before, at length.)

Turns out the OpenGL thick client setup allows you to call any particular extension function that the GL library includes, whether you negotiate it or not, as long as you have a function prototype. (In one sense it doesn’t have a choice, since wglGetProcAddress / glxGetProcAddress have to work the same as the native GetProcAddress / dlsym functions, which look at the DLL’s / shared-object-file’s public symbol table. Doing the symbol lookup at program-link time instead of runtime has to work, but it means you can accidentally mess up extension requirements or whatever.) Since webgl doesn’t let you use a symbol until you’ve asked for the extension that contains it — the extensions are each registered in their own namespace so your code can’t just refer to them — it can’t run into the same issues.

Of course, after saying all that, it *does* mean moving from C++ to javascript, and from thick client code to a browser. So that might be another whole learning curve for you (==Shamus), which luckily I didn’t have to climb since I did it a few years before.

I wonder if it’s possible to do OpenGL ES in a windows program. Hmm. SDL might make it hard though…

GLFW3 seems to be able to open OpenGL ES contexts (I don’t use ES myself, but their “New features” page claim you can do it). For me, moving from SDL to GLFW3 was quite easy. Unfortunately, that means that I’ll need to look elsewhere for playing sounds.

I would be interested in reading about your order-independent transparency. Where have you written about it?

It is – that’s what ANGLE does under Windows

Qt5.x currently has two available builds on Windows desktop (for each compiler): ANGLE (default) and OpenGL.

In the ‘ANGLE’ build, you have the OpenGL ES API, and ANGLE translates this into DirectX.

While this probably comes at a performance cost, I haven’t tried it so couldn’t say whether it’s significant.

In the OpenGL build, you’ve got normal desktop OpenGL.

I’ve only been using the OpenGL builds so far, with OpenGL 3.3 or 4.x depending on the project.

This is very reassuring to hear as I have been wanting to use 3D graphics in Javascript programs and have in the past tried and failed to understand OpenGL. It is good to know that WebGL simplifies things somewhat, thanks for the link to the specification.

Shamus, among all the things I come to Twenty Sided for these programming articles are some of my favourite. More words spent on the intricacies of coding please!

Shamus can you update your Projects page to include the Good Robot stuff (and other stuff not on there)?

I think they’re all in ‘Programming’ rather than ‘Projects.’

That’s what I meant, and no they’re not.

You don’t see them via the link below – starting five or six posts down? (Perhaps after a page refresh?)

http://www.shamusyoung.com/twentysidedtale/?cat=66

(Please do not share that link with any clone troopers you may know.)

I think the above might refer to the page reached by clicking the big button that says “Programming” in the right column.

Reckon you might be right, there! Never even noticed that; d’oh.

Yes. That however (as Packbat notes) is not reachable via the Programming button.

I have no idea where you found your link, it’s not anywhere I can find, but thanks for it. It’ll make going back and reading those articles far easier than trying to go back blog post by blog post.

I fiddled with the sidebar some weeks ago and managed to remove the category selector. It’s been on my list of stuff to fix for a while now.

Cool. I await its return!

There are publishers out there that appear to specialize in cheap books that turn opaque documentations into actual how-to books. With the predictable reaction by some that: “LOL, this is just selling free docs to the clueless”, but, you know, whatever.

Sure enough, I can find at least one such book on GLSL from such a publisher.

http://www.packtpub.com/article/opengl-glsl-4-shaders-basics

Is it good? Accurate? Up to date? Couldn’t possibly tell you, but even if this specific one is not what you need, there must be other titles out there, and a few bucks may save you hours of headaches.

Boy do you hit the nail on the head about the OpenGL docs. I originally learnt OpenGL through uni when using 2.1, but for my projects now I like to use OpenGL 3.3. At first it was an absolute pain getting info on 3.3, which had completely overhauled all the function calls and of course the matrix stack. But finding a tutorial for 3.3 was impossible, all I kept getting was 2.1 or even immediate mode tutorials….

I eventually stumbled upon http://ogldev.atspace.co.uk/ which is a superb list of tutorials that go through how to code with 3.3 and has examples (Just skip the first three, they basically just explain how it “used to be done”).

On 3.3:

I love the new matrix stack; it vastly improves how you can set up your rendering pipeline. For my project I needed to do lighting calculations per vertex rather than per pixel, and 3.3 makes it really easy to do them in the vertex shader before the perspective matrix is applied.

It is also much cleaner both to send variables to the shaders and to send variables between shaders, and simply by looking at the names, you can tell which variables are inputs and which are outputs for a shader.
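For anyone who hasn’t seen 3.3-style shaders, here is a minimal sketch of the two points above: per-vertex lighting done before the projection is applied, and the `in` / `out` qualifiers that mark what flows into and out of each stage. This is not the code from my project; every name in it (`a_position`, `u_model_view`, `u_light_dir_view`, `v_diffuse` and so on) is made up for illustration, and the shaders are just stored as C++ strings so no OpenGL context is needed to read along.

```cpp
#include <string>

// Hypothetical GLSL 3.30 vertex shader doing per-vertex diffuse lighting.
// All identifiers are invented for this sketch.
const std::string kVertexShader = R"glsl(
#version 330 core

layout(location = 0) in vec3 a_position;  // per-vertex input from the application
layout(location = 1) in vec3 a_normal;

uniform mat4 u_model_view;      // object space -> view space
uniform mat4 u_projection;      // view space -> clip space
uniform vec3 u_light_dir_view;  // unit vector toward the light, in view space

out float v_diffuse;            // interpolated and handed to the fragment shader

void main() {
    // Lighting happens in view space, before the projection is applied.
    // Assumption: u_model_view has no non-uniform scale, so mat3() is
    // good enough for transforming normals.
    vec3 n = normalize(mat3(u_model_view) * a_normal);
    v_diffuse = max(dot(n, u_light_dir_view), 0.0);

    vec4 view_pos = u_model_view * vec4(a_position, 1.0);
    gl_Position = u_projection * view_pos;
}
)glsl";

// Matching fragment shader: its `in` pairs with the vertex shader's `out`
// by name and type.
const std::string kFragmentShader = R"glsl(
#version 330 core

in float v_diffuse;
uniform vec3 u_color;
out vec4 frag_color;    // user-declared output instead of the old gl_FragColor

void main() {
    frag_color = vec4(u_color * v_diffuse, 1.0);
}
)glsl";
```

Once you have a context, these strings are what you would hand to `glShaderSource`. The point is only that `in`, `out` and `uniform` tell you at a glance where each value comes from.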

I am so glad I decided to learn SFML instead of putzing around with all that OpenGL code. I actually got somewhere quickly without too much trouble. It’s a solid library.

I would have loved to use SFML, but it does (did?) not seem to be able to open sRGB contexts. How do you do your sRGB encoding? By hand, or does SFML do it now?

The words were absolutely worth reading; I love your programming posts as I get huge motivation hurdles of my own and your blog lets me experience the programming vicariously.

… I should really dive back into that last project of mine …

I always hate the ‘snark’ as you call it when you want to learn new things like these. A sad fact is that it is both boring and hard to write good documentation that can be read by someone who actually needs it, so it hardly ever gets done. This problem appears to be worse in open source projects, I assume because of the boring part.

My favourite example is all the Microsoft documentation on for instance C# (but it is universal for everything they do). The official pages can only be read if you have a degree in that language it seems and I’ve never actually met anyone that can use them effectively. It is like they go out of their way to make it look more complex than it is. Even as a reference in case you just want to remember how it works it doesn’t seem to work. Thousands (millions?) of lines of documentation that are useless to most people.

I understand it can get frustrating to see the same question over and over, but if people keep asking it over and over it is likely something is wrong with the documentation and not those people…

Oh so agree with this. I work in document solutions and my new job has had me shift from a purpose built WYSIWYG program with GUI to dabbling in C# instead. There are loads of web based resources for the beginner but the Microsoft library is an unwelcoming maze of self referencing jargon. I generally end up on StackOverflow sifting through the snark trying to find the one tongue-in-cheek ‘gag’ response which actually answers my ridiculously simple noob question.

I’ve found MS’s docs to be pretty good, actually.

They’re references, not tutorials. You aren’t supposed to be going to them to learn the language itself, they’re for learning the libraries.

I know this might be even less helpful than RTFM posts, but have you considered using Cg instead? I know nobody really uses it, unless he is programming for PS3, but it can output both DirectX and OpenGL shader programs, and the syntax is (obviously) quite similar to C and other derivatives.

So here’s what I did. Do some of the basic tutorials like NeHe at http://nehe.gamedev.net/ to understand all the concepts. Then throw away all that sample code and start again.

Download the OpenGL 3.3 language and shader reference manuals:

http://www.opengl.org/sdk/docs/man3/

https://www.opengl.org/registry/doc/GLSLangSpec.3.30.6.pdf

Now either use SDL to get everything initialized, or do it yourself (painful.)

As you write your new code, use the reference manuals to find the features you want, then Google for uses of those features, checking to make sure the uses are version 3.3. StackOverflow has lots of good stuff.

Because you are starting with the reference manuals, you won’t be using the wrong functions. But do make *very* sure when you Google that the examples are the right version.

You can switch to earlier versions (2.1 is close to WebGL) or later versions (4.0), and the principle is the same. Anchor yourself with reference manuals and then Google for working code.
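One cheap way to do the “right version” check on a snippet you found is to look at its `#version` line before you bother reading the rest. Here is a rough sketch in C++17; `glsl_version` and `targets_version` are hypothetical helper names, not part of any library, and the scan is deliberately naive (it ignores comments and the rarer `# version` spelling with a space after the hash).

```cpp
#include <optional>
#include <sstream>
#include <string>

// Returns the number from the first `#version N` directive in a GLSL
// source string, or std::nullopt if no such line is found.
// Hypothetical helper: a simple line scan, not a real preprocessor.
std::optional<int> glsl_version(const std::string& source) {
    std::istringstream lines(source);
    std::string line;
    while (std::getline(lines, line)) {
        std::istringstream tokens(line);
        std::string directive;
        int version = 0;
        if (tokens >> directive && directive == "#version" && tokens >> version) {
            return version;
        }
    }
    return std::nullopt;
}

// True only when the snippet explicitly declares the version you target,
// e.g. 330 for OpenGL 3.3 or 120 for OpenGL 2.1.
bool targets_version(const std::string& source, int wanted) {
    return glsl_version(source) == wanted;
}
```

A snippet with no `#version` line at all is treated by desktop GLSL as version 1.10, so a missing directive in a tutorial is itself a good hint that the code predates 3.3.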

OpenGL is a funny thing to write in. My experience a few semesters ago, writing a flow visualization, was that you REALLY need to test on multiple platforms. Some platforms (Windows/NVIDIA) are more lenient than others. I had what I thought was a working program because it ran on my primary development machine (my MacBook in Windows). Then I tried to test it in Linux and Mac on the same machine and got garbage out, ditto for my much more powerful AMD-based desktop.

I had to strip the GL-based stuff down to 1.2 level stuff to get it to work everywhere before the deadline. I skimmed the comments and bookmarked one of the tutorial sites listed — hopefully that will be helpful. I look forward to this series, no matter what happens.

Yay, new programming project! I’m always so interested by these. Every pedantic little detail is fascinating to me, don’t worry.

Also, I really enjoyed Frontier the first time around, so Frontier Rebooted should be interesting. Are you still planning to just leave it as a proof of concept sort of thing for the terrain, or are you going to do more on the “how to make this an actual game” side of things?

A game all about erosion would be kind of weird, but at least it hasn’t been done to death.

You have probably already come across this one, but I thought I’d still drop this one here: http://rastertek.com/tutgl40.html

It’s an OpenGL 4.0 tutorial series, covering some of the basics up to simple lighting. I have no clue how good it is, but his (her?) DirectX tutorials are pretty good.

How ubiquitous are OpenGL 4 machines these days? I know that my five-year-old laptop stops at 3.3. I’ll get a new laptop soon, but I am wondering if I should continue with 3.3 or if pretty much everybody can run 4.0 by now.

Whenever you have this kind of question, about the install base of different things, I strongly recommend you check Steam’s hardware survey. Here: http://store.steampowered.com/hwsurvey/

Annoyingly, they don’t say anything about OpenGL, but you can deduce that from the graphics card’s statistics.

After a bit of research, it would seem that OpenGL 3.2 is about the sweet spot for modern OpenGL and cross platform compatibility (has Apple updated their drivers yet?)

Thank you for the tip, this is very useful. Is there a way to convert a DirectX version into an OpenGL version? Like “DirectX 9 shader model 2” into “OpenGL 2.1”? I understand that I am violently mixing software and hardware here, but there may be such a correspondence table somewhere.

only 1500 words? was hoping for so many more :)

Absolutely adore your programming posts and I am glad you are back at it :)

I find it a little odd when I see people mention they are having trouble with certain things about this or that GL stuff because I never had much trouble getting into it. Then I remember my programming course is focused on how to make games and get you a job doing it, so graphical programming is rather important in my education, but it’s not something easy to locate online, nor is it something other fields seem to care about (also I tend to assume everyone knows more than I do about everything).

If you have any maths troubles I’d recommend looking into OpenGL Mathematics as I’ve found it solid to use, much better than my own maths library.

So maybe when Shamus is finished doing this he can write a book about how to do this stuff which is comprehensible and useful, and make $$$. I mean, the market is doubtless not that large, but to say the least it does not seem to be saturated.

Possible odd marketing trick: His loyal followers (us) could go around to all those places Google searches take you to, where people are asking questions about this stuff, and recommend Shamus’ excellent book. Then everyone looking for answers and going to look in those places will see that their prayers can be answered for a couple of bucks and a quick download from Amazon.

An idea for the title of the book!

The F***ing Manual

by Shamus Young

+1

That would be hilarious.

Oh, yes!

+1

I’m really pleased to see that you’re getting back to this Shamus. I’m planning to get into the GLSL myself when I get some free time again over the summer, but I know how tricky this stuff is having tinkered with it on mobile phones and Linux..!

My advice, if you’re serious about learning OpenGL and GLSL, invest in the OpenGL SuperBible (get the version for whichever OpenGL version you mean to target). It doesn’t assume you know anything, and walks you through the basics without referencing the old ways.

It also has a ton of example code, which you can also download for free if you want just examples of the shaders. You can get those here: http://www.openglsuperbible.com/example-code/

It goes from the most basic (drawing a single triangle) to some pretty advanced stuff, so even just reading the code should be helpful.

The Superbible seems to rely a lot on a library written by the authors themselves. I guess that’s fine(ish) when you program in C++, but I don’t (I code in Go these days). If their sb6 library abstracts too much, then the book won’t be that useful to me. Indeed, I don’t want to learn THEIR OpenGL, I want to learn the ‘real’ one. This is why I have not purchased it yet. Do you think that their sb6 library is light enough for people like me?

I used SB5, since that’s the one I bought a while ago. That said, they simply used the library to handle things after explaining why they did it that way, or to hide things until they can get around to explaining it.

Since you have the source to the library anyway, you can dive in and see how things work in as much detail as you want. You can even modify the library and see how it works.

For example, even though the examples in SB5 used GLUT, I never bothered with it and instead I used their library with SFML to handle the windowing. SB6 seems to have done away with GLUT and provides a framework for the application better suited to their purpose.

Take the simplest example I was able to find in the SB6 source bundle: singletri. If what you want is to learn GLSL, none of that is abstracted by the library. The shader code is right there for you to see (in this case, a vertex shader that creates a triangle and a fragment shader that colors it). For someone like Shamus, who says he’d like to see examples of modern shader code, these are perfect.

So, to answer your actual question: Do I think the library abstracts too much? The library abstracts all the boilerplate code to create a window and an OpenGL context, with an object oriented approach. That’s all the “not OpenGL” stuff you don’t care about.

At least for the 5th edition (I can’t speak about the 6th edition), the book explained how OpenGL is modeled, and why you need to take each step. I’ve found it very helpful to understand OpenGL (though I’m not an expert by any stretch, and I haven’t used anything more complex than textured triangles in my own work).

Thank you for taking the time to clarify this. I am relieved :). It will be nice to have an ‘exhaustive’ and consistent documentation other than the Reference Pages for once.

It was well worth reading the 1500 words. It’s not the end result I enjoy, it’s the journey getting there. Your thought processes and sense of humor I personally enjoy more than the end product usually.

Ooooh, how exciting! I loved reading the Project Frontier entries, they made me want to learn how to do programming. Don’t have time for that unfortunately, so I’ll just have to live vicariously through these posts.

Ok, this is OT and may even be considered a troll — but it is not intended as such.

In general, I probably wouldn’t play stuff (on a PC) that works at 30 FPS. I can easily see the difference between 30 and 60 FPS and 30 just isn’t smooth at all. 20 is borderline unplayable, imo.

In other words — why do you say that most games run at 30 FPS (on a PC)? I can’t truly remember the last game I’ve played that was intended to run at this FPS — possibly Atlantica Online — and it was annoying as hell.

I strongly believe that ‘the world’ has moved away from ’30 FPS is enough’ long ago. 60 is the new 30 :)

Sadly, the opposite seems to be the case in some places (read: Not PC). Time was, you could reasonably expect consoles to attain 60 (or 50) consistently, but I’ve read that these days, consoles are completely failing to keep up with new graphics technology, and many games choose to sacrifice 60fps in favour of “more shineys” at 30. And of course, if you complain about this, you get called a Nazi. (I’m not even kidding)

The reason that console players at least have the option now is because the complaining about it was so numerous and so vocal. So while there were many idiots that called people Nazis for it, they lost.