Mark normally posts on Sundays, but he seems to be on a roll this week. He has more on probabilistic systems, which I mentioned earlier. This led me to this bit from Nicholas Carr, which is one side of a debate on the merits of probabilistic systems.

Back already? Great.

As others have pointed out, one thing about these systems is that even if nobody is cheating, deciding what is “good enough” is a bit abstract: It depends on what you want to do with the emergent data, and what your standards are for usefulness. Everyone’s big problem seems to be with Wikipedia. It is often used as an example of a probabilistic system that doesn’t really deliver and (occasionally) used as an indictment of probabilistic systems in general. As far as probabilistic systems go, Wiki is really a poor example. I think it’s a stretch to lump it in with systems like Google and Technorati. So what makes Wikipedia so different?

Low fault tolerance

Let’s say I wrote some software that looks at common airplane approach vectors to major airports. Pilots can enter their current position, their destination, a few other variables, and my program will then come back with, “Based on what other pilots have done in similar circumstances, we suggest using the following approach…” Let’s assume I do a good job and my program makes the right choice nearly every time.

Well, we can stop right there. Nearly every time isn’t nearly good enough in this situation. I don’t care how much depth we give the dataset or how many variables we take into account. The whole system is useless.

On the other hand, let’s say you want a picture of Britney Spears for your desktop (humor me here) and Google comes back with a less-than-optimal result. Instead of giving you the “official” page run by some media company, it gives you a website maintained by a fan. Odds are, his site has what you want as well. Even if it doesn’t, he probably has a link that will point you to the goods.

The difference between these two situations is pretty stark. One is a waste of time, even with a 99% success rate, and the other works well enough even when it gets things “wrong”.
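To put rough numbers on that contrast, here is a minimal sketch that treats "usefulness" as expected loss per use: the chance of a wrong answer multiplied by what a wrong answer costs. The function name and every cost figure below are made up for illustration; only the 99% success rate comes from the example above.

```python
def expected_loss_per_use(success_rate: float, cost_of_failure: float) -> float:
    """Average loss per use: probability of a wrong answer times its cost."""
    return (1.0 - success_rate) * cost_of_failure


# Hypothetical costs in arbitrary units (assumptions, not measurements).
# A bad landing approach is catastrophic; a fan site instead of the
# official site costs you one extra click.
approach_advisor = expected_loss_per_use(success_rate=0.99, cost_of_failure=1_000_000)
image_search = expected_loss_per_use(success_rate=0.70, cost_of_failure=1)

print(f"approach advisor: {approach_advisor:.1f}")
print(f"image search:     {image_search:.1f}")
```

Even with a much worse hit rate, the image search comes out far ahead, because its failures are cheap. The success rate alone tells you very little until you know what a miss costs.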

And this is Wikipedia’s problem: Most people have a pretty low tolerance for error in an encyclopedia. If the info is wrong (or even suspect) then they have to look it up elsewhere, so why bother with Wiki at all? More to the point, if you have a low error tolerance, should you really be using probabilistic systems? Probably not.

Lack of Darwinism

As I understand Wikipedia, each subject has one entry. If I think the guy who wrote the entry for Article 153 of the Constitution of Malaysia got something wrong, I edit the original article. The next person to visit the page will see my version, not the original. People can review new changes or revert to old versions according to various rules, but at any time there is only one page for Article 153 of the Constitution of Malaysia, and the average visitor isn’t going to want to take part in the courtship between new data and old data.

This isn’t a good way to foster, uh, probabilism. For a healthy probabilistic system, my edit would need to create a new article that exists in parallel with the original. The two would be “ranked” according to (perhaps) the number of incoming references that favor one version over the other, and the number of times users clicked on “this item was helpful”. The two versions of the same subject would be allowed to compete for visitors, with better pages slowly knocking less useful pages down in the rankings. Thus, each visitor contributes to the system by helping to rank pages, often by simply using them and then going away. This means the data gets more useful even when nobody is editing the articles themselves.

(Note that I’m not suggesting it should work this way. There are many reasons why this might not be a good idea. I’m just saying this would give the system much stronger probabilistic properties.)
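The competing-versions idea can be sketched in a few lines. This is purely hypothetical: `ArticleVersion`, `rank_versions`, the weights, and all the counts are invented for illustration, and nothing here reflects how Wikipedia actually works.

```python
from dataclasses import dataclass


@dataclass
class ArticleVersion:
    """One of several parallel versions of the same subject (hypothetical)."""
    subject: str
    author: str
    incoming_references: int = 0
    helpful_clicks: int = 0

    def score(self, reference_weight: float = 2.0, click_weight: float = 1.0) -> float:
        # The weights are arbitrary assumptions; a real system would tune them.
        return (reference_weight * self.incoming_references
                + click_weight * self.helpful_clicks)


def rank_versions(versions):
    """Return versions ordered best-first by their current score."""
    return sorted(versions, key=lambda v: v.score(), reverse=True)


subject = "Article 153 of the Constitution of Malaysia"
original = ArticleVersion(subject, "original author", incoming_references=12, helpful_clicks=40)
rewrite = ArticleVersion(subject, "me", incoming_references=3, helpful_clicks=5)

# Visitors improve the ranking without editing anything: they read a
# version, click "this item was helpful", and go away.
for _ in range(70):
    rewrite.helpful_clicks += 1

ranked = rank_versions([original, rewrite])
print([v.author for v in ranked])
```

With these made-up numbers the rewrite starts well behind the original and overtakes it purely through reader feedback, which is the "Darwinism" the single-page model lacks.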

Detecting bad data

As I mentioned before, often Google will give you a less-than-optimal result, but things still work out. Often the “wrong” site will contain a link to the “right” one. Finding a Britney Spears fan site leads me to the official one. The same is not true for poor Wiki. When I get to the wrong page, I don’t know it’s wrong. If I did, I wouldn’t need to look it up. Even worse, finding the wrong birthday for Napoleon doesn’t lead me to the right one. It leads me to propagate bad data.

Help from the user

It is very, very rare that I ever need to check out page 2 of Google search results. Usually what I want is right there on page 1. However, often my goal is not the #1 result. So, Google is great at narrowing a search down to 10 or so likely contenders, but it has a really hard time picking the right one out of those 10. Since it lists all 10, and lets me choose, it doesn’t have to. That last level of value judgments – the most difficult – is left for the user.

By contrast, there is no way the user can really “help” Wiki, unless they jump in and write an article.
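The Google half of that comparison amounts to the gap between "right answer in the #1 slot" and "right answer somewhere on page 1". A small sketch, using invented positions rather than any real search data (`hit_rate_at_k` is a hypothetical helper):

```python
def hit_rate_at_k(target_positions, k):
    """Fraction of searches whose desired result appeared in the first k results."""
    hits = sum(1 for position in target_positions if position <= k)
    return hits / len(target_positions)


# Hypothetical 1-based positions of the page I actually wanted,
# across ten imaginary searches. These numbers are made up.
positions = [1, 3, 2, 1, 7, 4, 1, 12, 5, 2]

top_1 = hit_rate_at_k(positions, 1)    # the engine has to pick the winner itself
top_10 = hit_rate_at_k(positions, 10)  # the user picks from page 1

print(f"right at #1:     {top_1:.0%}")
print(f"right on page 1: {top_10:.0%}")
```

A system that shows ten candidates only has to get the target onto the list. A system that shows a single article has to be right in the top slot every time.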

I guess my point in all this is that Wiki, regardless of its usefulness, is a bit shabby when it comes to probabilistic properties.

Fable II

The plot of this game isn't just dumb, it's actively hostile to the player. This game hates you and thinks you are stupid.

Steam Summer Blues

This mess of dross, confusion, and terrible UI design is the storefront the big publishers couldn't beat? Amazing.

The Game That Ruined Me

Be careful what you learn with your muscle-memory, because it will be very hard to un-learn it.

My Music

Do you like electronic music? Do you like free stuff? Are you okay with amateur music from someone who's learning? Yes? Because that's what this is.

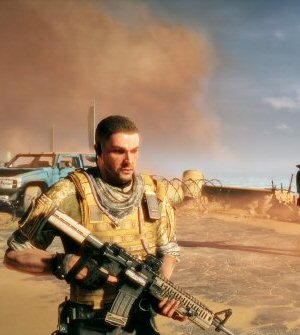

Spec Ops: The Line

A videogame that judges its audience, criticizes its genre, and hates its premise. How did this thing get made?

T w e n t y S i d e d

You make some excellent points, but I do think that Wikipedia is a great example of a probabilistic system… but you need to look at the entire system, not any individual entry. It’s true that many individual entries are probably not the emergent result of very many visitors, but the system as a whole certainly is…

Anywho, thanks for the links and the food for thought…

I’m hard-pressed to call any well-managed wiki a probabilistic system. There are still administrators and moderators sifting through commits, looking for bad information and correcting it. It begs the question, why allow these commits to hit the front and then be merely moderated after the fact? Regardless, there’s a big, human hand involved in Wikipedia, and many other major wikis, and as such, I don’t consider it a probabilistic system. As for poorly-managed wikis, they’re usually about as useful as an anonymous no-signup forum dedicated to the topic at hand, except that the threads have a certain, specific direction and posts can be redacted by anyone at any time (though they’re still in the history).

Or something.

tl;dr: Wiki != probabilistic. The manual, human involvement is far too large.