It turns out that the most capricious, buggy, unpredictable, and mysterious part of my program is the vertex shader. This is frustrating because it’s the thing I know the least about, the thing with the least documentation, and the thing that’s hardest to debug. Of course, these are also the reasons the problem exists in the first place.

The vertex shader malfunctions on about half of the testing machines, and it malfunctions in a different way in each place. There’s no pattern to these behaviors as far as I can tell. It works fine on one Linux machine, and malfunctions on another. Fine on one XP machine, not on the other. Works great on my Windows 7 machine, but goes crazy on another. Maybe I could nail something down if I started collecting data on driver versions and graphics card manufacturers, but that’s probably not a good way to spend my time. I mean, if I discover the problem is with laptops using ATI cards, that doesn’t help me solve the problem. I’m not John Carmack and I don’t have encyclopedic knowledge of all the various drivers and their quirks and exceptions. For me, knowing where the problem happens doesn’t put me any closer to the solution.

I need the game to run properly on all these machines, and the stuff I’m doing is so stone-age simple that there’s no reason for this chaos. It should just work. Barring that, I would hope the system could at least have the decency to fail predictably.

I’m sure all of these problems are either from bugs in my shader code or from holes in the documentation. But I can’t find the problem if I don’t understand it.

I’ve described how shaders work before. You might remember this diagram:


So at one point I had a bug in my shader where it was passing along the red, green, and blue values of the polygon, but NOT passing along the alpha value. The outcomes:

- My machine: Shader just silently forced all alpha values to 1, thus rendering everything. The only hint that anything was amiss was that smoke particles weren’t fading out the way I expected.

- User #1: Shader silently forced all alpha values to zero, thus not rendering anything.

- User #2: Alpha had a random value, resulting in sprites flickering on and off randomly like strobe lights.

- User #3: Shader apparently used one of the given color values for the alpha channel, so the brighter the color, the more opaque the polygon.

One bug, four divergent outcomes. I managed to track that one down, but I’m still getting random behavior.

Part of the problem is that there are a half dozen ways to do everything, and it’s never clear which is the “right” way. We’ve got different graphics cards, different drivers, and different versions of the OpenGL shader language. New cards add new ways of doing things, which results in new stuff being added to the language, which results in situations where sometimes things are REMOVED from the language, which results in Sparta.

I’ve been trying to read up on the subject, but it’s hard to make any headway. The shading language has gone through a few revisions, so old example code might not compile, or it might compile with warnings, or it might compile but malfunction. Tutorials seem to begin mid-stream instead of introducing first concepts and building up.

Actually, this is my biggest complaint. I really feel like the “Hello World” of vertex shaders should be a short example that simply reproduces the fixed-function pipeline (what you get when not using shaders) and is compatible with all versions of the shader language. I’ve googled around, and all I can find are non-working / incomplete examples, dead links, and jackasses asking “Why would you want to do that?”

Yes, there are books on the topic, but my shader program is so simple I really hate to purchase and read a friggin’ textbook just to shove some 2D quads around the screen. That’s like getting a medical degree so you can take aspirin.

Basically, I’m rendering thousands of squares. For convenience, each robot has a position, a rotation, and a size. Now, I can do math to turn these values into the corners of a square, but that’s a lot of trig to give to your CPU. When we have thousands of particles and each particle has 4 corners, we end up doing a lot of sine and cosine operations to figure out where all the squares belong.

This is what sprites look like if I draw boxes around them:

In my profiling, it looks like the vast majority of my time is spent generating these squares and shoveling them at the graphics hardware. It’s the only part of my program where I’ve made any effort to speed things up, and it’s still the slowest part of the game. AI and collision detection are a distant second and third. They’re so distant that it’s actually tough to tell which one is second and which one is third. The CPU usage of building sprites is so big and noisy that measuring the other two is like trying to figure out if your cell phone light is brighter than a candle when you’re standing in direct sunlight. (To be fair, my performance profiling tools are really rudimentary. I mean, my clock only has millisecond resolution, so I need to take a lot of measurements over many frames. Also, a LOT of CPU time goes unmeasured if it’s happening in things like audio-processing threads.)
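The "lots of measurements over many frames" part can be sketched like this. It's an illustration of the averaging idea only, not my real profiler: `SectionTimer` and its functions are hypothetical names, and the start and end values are assumed to come from whatever millisecond clock is available.

```c
#include <assert.h>

/* Hypothetical accumulator for one profiled section of the frame. */
typedef struct {
    unsigned long total_ms;  /* summed elapsed time in whole milliseconds */
    unsigned long frames;    /* number of frames sampled so far */
} SectionTimer;

void timer_reset(SectionTimer *t)
{
    t->total_ms = 0;
    t->frames = 0;
}

/* Record one frame's worth of time spent in the section. */
void timer_add_frame(SectionTimer *t, unsigned long start_ms, unsigned long end_ms)
{
    assert(end_ms >= start_ms);
    t->total_ms += end_ms - start_ms;
    t->frames++;
}

/* Average cost per frame. The result can be fractional even though
   every individual sample is a whole number of milliseconds. */
double timer_average_ms(const SectionTimer *t)
{
    if (t->frames == 0)
        return 0.0;
    return (double)t->total_ms / (double)t->frames;
}
```

A single sample of "1 ms" or "2 ms" says very little, but the average over a few hundred frames is enough to tell the big consumers from the small ones.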

With a shader, we can simply hand all of that annoying and CPU-sucking math off to the graphics card. The graphics card is barely working, and the CPU has lots of stuff to worry about already. Let’s just send it a simple 1×1 rectangle along with the position, scale, and rotation values and let the hardware do the math.

Imagine our CPU and graphics card are roommates. Our CPU is Alice. Alice is a healthy but underweight lady who has many jobs working as a sound engineer, musician, animator, file clerk, garbage collector, and analyst. Her roommate is AN ENTIRE FOOTBALL TEAM, who is currently unemployed except for some occasional one-man jobs that show up on the weekend.

Then a new job opening appears: Moving heavy appliances. Who should take the job? Alice, or FOOTBALL TEAM?

This shader is my attempt to pass the work off to FOOTBALL TEAM instead of just dumping more work on Alice, but it’s turning into a time-sucking circus of bugs and frustration. This one feature accounts for over half my bug reports for the last few builds I’ve done.

For the curious out there, this is the shader I’m working with:

```glsl
#version 120

#define TEX0 gl_TexCoord[0]

in float attrib_angle;
in float attrib_scale;
in vec3 attrib_position;
in vec3 attrib_atlas;

void main()
{
  vec4 vert;
  float texture_unit;
  float rad;
  vec2 rotate;
  //Color is pass-through.
  gl_FrontColor.rgba = gl_Color.rgba;
  //Atlas pos contains the column, row, and scale of our sprite TEXTURE.
  texture_unit = (1.0 / 32.0) * attrib_atlas.z; //Default grid is 32x32.
  TEX0.xy = (attrib_atlas.xy + gl_MultiTexCoord0.xy) * texture_unit;
  TEX0.y = 1-TEX0.y; //Because OpenGL thinks upside-down.
  //Finally, prepare the vertex for the frag shader
  vert = gl_Vertex;
  rad = radians (attrib_angle);
  rotate.x = sin (rad);
  rotate.y = cos (rad);
  vert.x = gl_Vertex.x * rotate.y - gl_Vertex.y * rotate.x;
  vert.y = gl_Vertex.x * rotate.x + gl_Vertex.y * rotate.y;
  vert.xy *= attrib_scale;
  vert.xyz += attrib_position;
  gl_Position = gl_ModelViewProjectionMatrix * vert;
}
```

Sigh. 32 lines of non-branching code. It’s just some simple math. It should not be this mysterious and difficult thing.
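One way to sanity-check math like this is to mirror it on the CPU and compare numbers. Below is a small C sketch of just the atlas texture-coordinate step, assuming the same 32×32 grid and the same flip of the vertical coordinate. `TexCoord` and `atlas_texcoord` are names made up for this example; nothing like this exists in the project.

```c
#include <assert.h>

typedef struct { float u, v; } TexCoord;

/* col, row:            the sprite's cell in the atlas
   cell_scale:          the atlas "scale" value (1 = a single cell)
   corner_u, corner_v:  0 or 1, the texture coordinate of the unit quad */
TexCoord atlas_texcoord(float col, float row, float cell_scale,
                        float corner_u, float corner_v)
{
    assert(cell_scale > 0.0f);
    const float grid = 32.0f;                         /* default grid is 32x32 */
    float texture_unit = (1.0f / grid) * cell_scale;  /* width of one sprite */
    TexCoord t;
    t.u = (col + corner_u) * texture_unit;
    t.v = 1.0f - (row + corner_v) * texture_unit;     /* flip vertically */
    return t;
}
```

If the values this produces disagree with what shows up on screen, at least that part of the shader can be ruled in or out.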

(For the curious, the bug I mentioned earlier was line 17, where it originally said:

```glsl
gl_FrontColor.rgb = gl_Color.rgb;
```

That’s pretty subtle, and I could have any number of bugs like that in my code, where undefined behavior will work fine on one machine and cause chaos on another.)

On one hand, using a shader is the right thing to do, performance-wise. On the other hand, this is a massive time-sink and the project would be much further along if I wasn’t wasting so much time on it. I have no way of knowing if the next round of changes will clear things up until I send it out to my testers, and when they report visual glitches I don’t know what to make of them because it’s all random.

I have features I could be writing and the playtester feedback would be much more useful if they could actually play the game.

So this is a frustrating spot to be in. Do I waste more time doing it right, or cut corners and have an inefficient game that requires a lot more horsepower than it should? I’ve taken such pains to make a lightweight, trim, and efficient little program. It would kill me to ruin all of that now. It would also kill me to spend more days on this crap.

I’ve actually re-written the shader this week. If it’s still wonky I’ll just disable it and re-visit this topic later in the project.

EDIT: My new shader failed on the first machine.

0:6(22): error: 'in' qualifier in declaration of 'attrib_angle' only valid for function parameters in GLSL 1.20

So it works fine on my machine and compiles with no warnings, but is an INVALID program on another. This error is worded in such a way that indicates it shouldn’t compile OR work ANYWHERE.

So that’s horrible.
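For what it's worth, one common workaround for this kind of mismatch is to choose the qualifier at load time based on the GLSL version. The C sketch below assumes, as I understand the GLSL specs, that `attribute` is the keyword for per-vertex inputs before version 1.30 and `in` is the keyword from 1.30 onward. The `ATTRIB` macro and both function names are invented for the example, and I haven't tried this against the problem machines.

```c
#include <assert.h>
#include <stdio.h>

/* Keyword for per-vertex inputs: "attribute" before GLSL 1.30,
   "in" from 1.30 on (an assumption based on the GLSL specs). */
const char *glsl_vertex_input_keyword(int glsl_version)
{
    return (glsl_version >= 130) ? "in" : "attribute";
}

/* Write a preamble such as "#version 120\n#define ATTRIB attribute\n"
   into buf. ATTRIB is a made-up macro name. Returns what snprintf
   returns: the length the full preamble needs, excluding the NUL. */
int glsl_write_preamble(char *buf, size_t buf_size, int glsl_version)
{
    assert(buf != 0 && buf_size > 0);
    return snprintf(buf, buf_size, "#version %d\n#define ATTRIB %s\n",
                    glsl_version, glsl_vertex_input_keyword(glsl_version));
}
```

The shader body would then declare its inputs as `ATTRIB float attrib_angle;` and leave out its own `#version` line, with the preamble and body handed to `glShaderSource` as two strings. This only papers over the one keyword. Built-ins like `gl_Vertex` have their own version rules that it doesn't touch.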

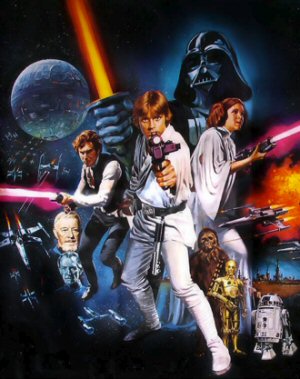

T w e n t y S i d e d


I don’t know OpenGL, sorry, but in HLSL this would be a pixel shader, not a vertex shader– is there a reason you’re using a vertex shader?

EDIT: it occurs to me I’m so ignorant of OpenGL I don’t even know if it has pixel shaders, so ignore me if this is a dumb question.

Could you explain why this would be a pixel shader and not a vertex shader?

Judging from his code and diagram, it looks exactly like Shamus is doing basic operations on vertices.

Wouldn’t this mean that it has to be a vertex shader?

Generally speaking, a vertex shader is for mapping a 2D image onto a 3D surface, while a pixel shader is for mapping a 2D image onto a 2D surface. (Or altering an existing 2D image in some way.)

Shamus is doing operations on vertices because he’s using a vertex shader. (Yeah, I know.) The point I’m making is he could omit the vertex shader entirely and do his sprite drawing in the pixel shader.

When I think about the problem more, maybe there’s absolutely no difference between the two in this use-case. I just remember from working with HLSL that generally speaking, pixel shaders seemed to be easier to get correct on the first try. And perhaps using a vertex shader makes, for example, having a correct z-order easier. I’m sure he has a good reason.

That is not even remotely correct. The Vertex Shader works on Vertex data (which is usually what the GPU gets from the CPU). You can edit the position, vertex normals, vertex colors and whichever attributes you want to give your vertices. (here you could have an optional Geometry Shader, that can create or remove vertices.) Then the Rasterizer interpolates these data over the polygons (using barycentric interpolation) and creates one fragment for each pixel on the screen (difference between pixel and fragment: a pixel has only rgb, a fragment has all the vertex data that the vertex shader produces). After that the Pixel Shader (or Fragment Shader) creates the image you see on screen.

It’s not a matter of vertex shader or pixel shader, you always have both. And what Shamus is doing (editing Vertex position and color) is exactly what you would do in a vertex shader.

A common use for the pixel shader would be to apply a texture to a polygon, or calculate lighting information.
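As a rough illustration of the interpolation step described above, here is a C sketch that blends three vertex colors using barycentric weights. It is a simplification: `Color` and `barycentric_color` are hypothetical names, and real rasterizers typically also apply perspective correction, which is left out here.

```c
#include <assert.h>

typedef struct { float r, g, b, a; } Color;

/* Blend the colors of a triangle's three vertices. The weights are the
   barycentric coordinates of the fragment and should sum to 1. */
Color barycentric_color(Color c0, Color c1, Color c2,
                        float w0, float w1, float w2)
{
    float sum = w0 + w1 + w2;
    assert(sum > 0.999f && sum < 1.001f);
    Color out;
    out.r = w0 * c0.r + w1 * c1.r + w2 * c2.r;
    out.g = w0 * c0.g + w1 * c1.g + w2 * c2.g;
    out.b = w0 * c0.b + w1 * c1.b + w2 * c2.b;
    out.a = w0 * c0.a + w1 * c1.a + w2 * c2.a;
    return out;
}
```

Every value the vertex shader writes gets blended this way, alpha included, which is why a vertex shader that never writes alpha leaves the fragment stage with nothing reliable to interpolate.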

Welp I feel dumb. Sorry all.

A vertex shader manipulates vertices’ positions. They’re often used for skeletal animation, or grass swaying in the breeze.

This is a pixel shader, or, as OpenGL calls them, a fragment shader. It modifies colors, not positions.

It operates on vertex data, not pixel data. It’s a vertex shader.

[edit] Actually, it looks like it might be creating the quads, which I think would make it a geometry shader.

Nope, it only modifies Vertex Data. It rotates, scales and moves a set of vertices and then applies the ModelViewProjection.

I was pretty sure someone else would have brought it up already if I was right, but it’s nice to get confirmation.

I remember having to switch between OpenGL and DirectX in an old game when I played it on a modern machine. One of them worked improperly all the time, having severe graphical glitches. The other worked perfectly… as long as you weren’t underwater, where it didn’t work at all, it just showed a black screen. I think the game was Half-Life, but I’m not sure.

“I'm not John Carmack and I don't have encyclopedic knowledge of all the various drivers and their quirks and exceptions.”

You’ll recall that Rage’s launch was marred by high-profile bugs, caused by issues with the shaders in AMD’s drivers. When John Carmack can’t figure this shit out, something’s wrong.

Actually the release problem was because AMD (Or nVidia? one of them) guaranteed they had fixed the bugs and would release a new version of the drivers to support Rage. But then for some reason or another, they didn’t. It took a week or two for the fixed driver to come out. In the meantime all the press and fanboys with those cards went ape-shit.

Wasn’t Carmack’s fault.

Actually, it looks like that error means that your tester is running a version of GLSL that is incompatible with the version you’re writing for.

So yea. You’re borked. No one wants to play forward-backward-compatible tag with something like shaders.

Yeah. Something like the “radians” function works in GLSL 2.0 but not in 1.2.

It’s kind of weird to use a function for a basic conversion. One degree always equals x radians. But that’s just me. I always try to restrict myself to multiplication and addition whenever possible. Even division sounds like bug-bait. Better badly rounded than sorry.

Once I was grading an OpenGL graphics class, and an assignment was a fairly simple refraction program. One of the student’s vertex shaders didn’t want to compile, and the error was some vague thing about reserved keywords or somesuch (it wouldn’t tell me what it was, just the line). You know, the “17:expected identifier got keyword” sort of helpfulness.

It turns out that there’s some GLSL keyword that’s reserved…

but only on AMD cards…

and only on Linux.

I don’t remember the keyword anymore, but it was a really silly and simple keyword that made sense in a refraction context. From my research, the keyword doesn’t DO anything. It doesn’t refer to any AMD-specific function, constant, or variable, nor is it used in any internal context. It’s kind of just reserved for the sake of being reserved. It doesn’t even have a legacy purpose like the old C “register” keyword.

I think shader bugs like that might drive me insane if I ever make anything commercially.

Might be the case, aye. OpenGL support in graphics drivers is notoriously worse than DirectX.

Kinda ironic, how going with the supposedly more compatible and open graphics language results in more compatibility problems. This seems to always be the hidden hurdle that no promoters of oss acknowledge.

“We *have* a standard, but most vendors just write to the proprietary thing that another vendor wants lock-in for.”

Nope, I’m pretty sure we OSS promoters acknowledge that one ;-)

In this case the “other vendor” is Microsoft, and the lock-in in question is for Windows. DirectX is much harder to port to Linux or Mac — it’s effectively a full rewrite of the graphics code, on purpose.

I know, I know, “who cares if the game runs on Linux or Mac?” That would be that lock-in again.

Aye, there’s the problem that I’m snarking at – this so-called standard for better compatibility sure has a ton of compatibility/versioning problems, eh?

The point I think X2Eliah was getting at is that it’s rather ironic: the open standard meant to provide compatibility *is* supported by graphics drivers, but the drivers don’t all implement it the same way. So, like HTML, which nominally works across all browsers, it doesn’t do the same thing in every driver.

In other words, some of the drivers are basically written like Internet Explorer, some like Firefox, some like Chrome…

So the idealised Write Once Run Anywhere of the open standard instead becomes Write Once Run Anywhere You Get The Same Expected Behavior You Were Hoping For.

WORAYGTSEBYWHF is probably not an ideal programming methodology, but reality doesn’t seem to care for ideal-ness.

This… seems like a really good idea, though now I’m scared to try it in case it kills everything.

I’ve got a very similar bottleneck, but I’m working on mobile, so things are even more critical. I’ve already committed to using shaders (can’t beat normal mapping as bling for nothing on 2D artwork!), so the idea of offloading the vertex transforms to the GPU seems pretty smart, especially when rendering is taking ~ 20ms on target hardware with a fairly modest (<200) number of sprites, and everything on two textures (the colour and the normal map). So I think I'll give this a shot, and see how it goes for me.

I feel like I've just taken one step onto thin ice.

“I've taken such pains to make a lightweight, trim, and efficient little program.”

I’ve been thinking about this…I wonder if you’ve fallen into the trap of premature optimization. Are you sure the CPU can’t handle all of this math? Do you actually NEED the performance increase of shaders to meet your performance goals? I would bet that you don’t–CPUs these days are pretty powerful.

Obviously I can’t answer that question for you. I suggest you experiment: rip out the GLSL code, find the oldest, crappiest laptop you can get your hands on, and see if it’s fast enough or not. Even if it isn’t, there’s a lot you can do to optimize the code running on the CPU. It’s tempting to want to do the thing that is best for performance, but if it’s not actually getting you real improvements then it’s a waste; you could be using that time working on neat features instead (and you’d have a lot more fun doing it).

This isn’t inherently a bad idea, and with his kind of game he could probably get away with it. But I daresay part of his reason for doing this project was so that he could make the game he wants to, and one of the specific requirements he set for himself from the start was that it would be heavily optimized.

Also Shamus, your code is fantastically commented. Not sure if you do this for our sake or your own but damn it’s good.

But that’s my point. Improving performance is meaningless if it doesn’t actually improve the user experience. If you’re just focusing on performance for its own sake, it’s just intellectual masturbation. Which is fine, if your goal is to practice optimizing things, but this isn’t like Shamus’s previous projects, which were largely intended as learning projects. This is an actual game that Shamus wants to be a marketable product.

And really, being “highly optimized” is not a binary thing. There are tradeoffs, and at some point you have to decide when the tradeoff isn’t worth it anymore.

To be clear: Unless I’m way off with my profiling (which is possible, but I trust it for now) then the sprite-building is the choke point of the program, and CPU is the only resource that matters right now. We’ve got TONS of memory and GPU power.

So, every improvement I make to this part of the program should lower the overall required system specs by a large factor.

Fair enough–as I said below, calling it premature optimization is probably the wrong phrase. It’s clear you’re doing decent profiling and targeting the actual bottlenecks in your program, which is great. I’m just saying that it might not be necessary to meet your performance goals, and that if that’s the case it’s worth ditching the shaders considering how much of a nightmare they’ve turned out to be.

I see this a lot and I don’t agree with it, or at least not as absolutely as it is stated. Optimizing code prematurely teaches the programmer something. It can be very helpful to set yourself some tasks of which you definitely don’t know yet how to do them and then try to optimize the hell out of it. It basically gives you the opportunity to learn and train. It is only inefficient if you’ll never use it again, but I think that a programmer will re-use anything in their toolbox.

This is an approach I take on any project I work on that is ‘extra’. My general job has nothing to do with programming, but my boss knows I can, so he gives me the space for some extra work I can do ‘If I have time’. The best outcome for both of us in my opinion is if I also use that time to learn something new. For instance, I’ve optimized the hell out of a database that will handle only 5 users or so and never concurrently. According to your theory that would be a waste of time. According to me, I’ve learned how to do it correctly from the start.

The point is moot anyway here, since it is clear it requires optimization according to Shamus himself, but I think this sentiment in general is a little bit too much corporate culture.

It’s funny. I actually see the “I’m not done until I’ve tuned it as much as possible” as the more common attitude in corporate environments.

This tends to flow from an expectation driven down (mostly by project managers) that what you’re trying to do is a series of tasks, and when a task is done by god you better be done with it, and if you have to come back to it later to work on it again it’s a sign you’ve done a bad job (and are a bad programmer). Which leads to the defensive reaction from the programmer to pre-tune and optimize everything before they take their hands off it (and estimate their work assuming that’s what they’ll do). Which generally winds up in a tension between the project manager’s “are you done yet? how about now?” default vs. the developer’s “you have no idea what I’m doing. I’ll let you know when I’m done.” gruff response.

While I’d agree there’s some value in exploring and learning new things, the defensive “optimize everything” is a death of 1000 cuts to any effort of any size. There are hundreds to thousands of potential optimizations in most apps. Few will actually make a difference, but every one has cost.

The challenge is, well, that the people who manage programmers often don’t understand programming. “Only optimize the things that need to be optimized” sounds good, but ignores that knowing what NEEDS optimization is hard. The real challenge is getting management to understand rework is OK and EXPECTED. And (as you point out) sometimes we invest some time in learning things we need later, and even if that slows us down a little right now it’s OK.

Knuth said that “premature optimization is the root of all evil.” Normally I’d balk at a bald assertion and appeal to authority. Donald Knuth’s authority has been established pretty well.

The problem with heavily (performance) optimized code is that it takes a long time to understand it, which means that it’s both bug-riddled and hard to maintain. In my experience, making it work before making it fast gets you to a working and fast version with less programmer time than trying to make it fast and make it work at the same time. The main reason for that is that it’s absurdly difficult to predict where in your program you will have performance problems. Profilers are tools for determining exactly that, and when Shamus talks about his profiler it’s because he’s used it to determine what’s bogging down the works. This is the sign that he knows what he’s doing and isn’t just sprinkling the inline keyword around and calling it faster.

Optimizing to learn is fine, if you’re just learning. (Of course, it’s useless without measurement.) If you’re writing code that has to be delivered in working order on a schedule, optimizing before you have something functional to test is a terrible plan.

“Normally I'd balk at a bald assertion and appeal to authority. Donald Knuth's authority has been established pretty well.”

Remember, the fallacy of appeal to authority is only when you’re appealing to something that isn’t actually an authority, usually because they may be an authority somewhere else. For example, taking financial advice from movie stars. When you’re appealing to an actual authority, as Knuth is in programming, you’re not committing a fallacy!

One of the reasons we say not to do premature optimization is you can’t know if it’s going to help without profiling. People frequently think they know where the bottlenecks are, but they’re wrong.

The issue with premature optimization is that optimization carries several costs.

The obvious one is time. Most projects are starved for time and are delivered behind schedule. So it’s important to allocate time properly. If you start optimizing before you actually profile the program, however (which is what “premature” means), you can’t be sure that you’re spending your time on something that will actually have any impact. Maybe you spent a week streamlining a search operation that only needs to search through a handful of items and wasn’t actually taking any time at all in the first place. So after a week, the program still performs as it did before, and you still need to optimize it (assuming performance was an issue in the first place and you couldn’t have better spent the time finishing features).

If every project had infinite time, then you could optimize to your heart’s content. Since they don’t, you need to choose what parts to optimize to get the most benefit from your time, and the only way to do that is to know where the performance bottleneck is, which means profiling.

Without profiling, you could even make things worse. Many optimizations are really trade-offs; speed up a program by caching results, which increases memory footprint. If your bottleneck was memory access, however, you’ll have decreased performance with this “optimization”, because the program has to shuffle more memory about, which was what it was spending all its time doing in the first place.

Another cost is maintainability. Optimized code can be more difficult to follow, since by definition it’s not the naive, direct way of doing something. This means the person that comes after you may introduce bugs without realizing it, because they invalidate some of the assumptions the optimized code made.

So not only should you check where you need to optimize performance by profiling, but you should also leave non-critical code alone. The weakest link between humans and computers is ALWAYS the human. Let the computer shoulder the heavy load and make things easy for the humans, even if it means less optimal code.

Another cost is portability. Optimized code is often less portable. If you stick assembly code into a function because you can save a couple operations compared to what the compiler produces, you might have issues when porting the code to a different architecture. Say, if you want to port the game from x86 (PCs) to ARM processors (smartphones).

Since optimization is expensive, given the costs I detail above, it is important to only use it where you need it. This doesn’t mean to not think about what the proper algorithms and data structures for the program should be before you start coding. But when going back over the code to speed it up, make sure to only muck around the bits that need it.

[rant]

I don’t want to sound like a jerk, but on what planet is 4 comments in a 32-line program, with many tersely-named variables, considered “fantastic commenting”?

I might be biased by writing in Prolog, Haskell, and Python in school (Python also in real life), but I’d have doubled the number of comments.

I’d also have thrown a lot more whitespace in.

I know that programming style is how many holy wars have been started, but I’m pretty sure humans can read things more easily with appropriate levels of whitespace.

HCI studies how well humans respond to different interface colours, spacings, etc.

(Time taken to find an element on the screen, etc.)

Obviously this isn’t an interface, per se, but you can use some of the same principles to make your code easier to read/maintain.

I mean, the polar opposite of good programming layout/style (however and by whomever it is defined) is code obfuscation.

[/rant]

I do love, however, that Shamus uses normal squiggle brackets, instead of Egyptian ones.

Man, I hate Egyptian brackets! :P

I also find the “Egyptian” braces style very hard to read. It is not so much about the opening brace being at the end of the line before, but that the braces are not at the same indentation. When I see a closing brace I expect to be able to use my eyes to scan upward in the same column to find the opening, and vice versa. Generally looking for something goes way better for me if my eyes only need to move vertically to find the item in question. That’s why it is vastly easier to find an entry in a list that is presented vertically than one that is displayed in one line, even worse if it is too long and has to wrap around at the end of the line.

A good example of bad UI design is the control panel in Windows 7. Items are sorted alphabetically, but horizontally, the list wraps around at the right border of the window.

Which means if you look for something starting with, say, the letter K, you can’t just scan for where the entries with K start and then go downward, no no no. You have to somehow find any entry starting with K, then scan the entire line right and left of the entry, and the lines above and below, also possibly in their entirety (I have so far failed to develop an efficient habit for even quickly finding at least one entry with the same letter, I just try random positions until I get something).

But it gets worse. Sometimes the item in question is the single item with the same letter at the start or end of a line that otherwise consists of entries that start differently. So I often overlook them and have to start over, sometimes wondering if I am still looking for the correct name.

I still don’t understand why Microsoft needed to remove all display options other than “large symbols” and “small symbols” in the first place. That’s like… it just makes no sense.

Even when optimization is required, I agree that there are a lot of optimizations you can do to speed up the CPU, especially if all the shader code does is perform 2D sprite rotations.

For example, off the top of my head (assuming rectangular sprites):

1. Find center of the sprite: C = (Cx,Cy)

2. Get unit vectors for rotated X and Y axes (for 0° X=(1,0) Y=(0,1))

3. H = SpriteHeight / 2 * Y

4. W = SpriteWidth / 2 * X

5. Corners (in clockwise order) are then:

– C + W + H

– C + W – H

– C – W – H

– C – W + H

In this algorithm, usually #2 is where you need trig functions, but:

– First, you only need 1 trig call (in C there is a sincos function to calculate both values at once), and the second unit vector can be determined from the first (to rotate 90° CW: (x,y)=>(y,-x); 90° CCW: (x,y)=>(-y,x) ).

– Second, you can pre-calculate the unit vectors (at say every degree) and store them in an array. You only need to round to the nearest value for display, not for your internal calculations.

By doing so, you have only a single array access and a handful of vector mults/adds/subs to determine the four corners.

Premature optimization is an unfortunate bystander in the path of software design politics.

Knuth’s original statement in full was “Programmers waste enormous amounts of time thinking about, or worrying about, the speed of noncritical parts of their programs, and these attempts at efficiency actually have a strong negative impact when debugging and maintenance are considered. We should forget about small efficiencies, say about 97% of the time: premature optimization is the root of all evil. Yet we should not pass up our opportunities in that critical 3%.”

His point was that when you’re throwing together your design you shouldn’t try to solve the hard optimization problems, but keep it simple – the inefficiencies will present themselves later and you’ll fix the important ones.

In Shamus’ case there’s no way this is premature optimization. This is right-on-time optimization of the most critical blocker in his application, and is very worthy of his time in producing quality software.

You can argue that it’s not a good use of his time for the product given the probable target audience, but that’s a business argument, not a software engineering one. “Premature optimization” this ain’t.

Fair enough, I’ll grant that it’s not premature optimization. It’s clear from the posts that Shamus is doing profiling and is identifying the areas that are bottlenecks in his program before attempting to optimize them. The issue then is whether it’s *unnecessary* optimization.

And I disagree that it’s not a software engineering issue. Engineering is about ensuring that your system conforms to your requirements, and managing the tradeoffs involved in implementing that system.

This is probably a stupid question (I’ve only done rudimentary shader stuff myself, and my understanding of OpenGL in general and OpenGL > 1.2 specifically is pretty wonky), but why are you using the “in” qualifier as opposed to straight up declaring the attributes as attributes with “attribute”?

EDIT: To clarify what I mean: I’ve never seen “in” used this way. My understanding was that you use “in” for function parameters like so:

void foo(in float bar) {

}

to denote how the parameter is used: “in”, “out” or “inout”.

While when declaring attributes/uniforms/varyings, you’d do this:

attribute float foo;

uniform mat4 mvp;

varying vec2 coords;

etc..

EDIT2: There’s also

http://qt-project.org/forums/viewthread/32537

and

http://lists.freedesktop.org/archives/mesa-commit/2011-January/027967.html

which suggest that using “in” like that is a GLSL 1.3 feature, while you declare your shaders to be version 1.2.

I was originally using “attribute”, but the compiler gave me a warning that “attribute” was deprecated and I should use in/out instead. Honestly, I was assuming that pleasing the compiler was the more “correct” thing to do. That may not be the case.

In GLSL 1.2, you use “attribute vec3 pt”. In later versions, you use “in vec3 pt”. So if you had written the first, but forgotten the “#version 120” line at top, you would get a compiler error from some versions of the driver, but not others. It should compile the old code as long as you have the correct version label.

This messed me up quite a bit at first, since browsing around the net, you get examples in different versions of the language, and they all look similar.

In fact, now that I think about it, some of the problems you are having on multiple machines may be due to this. If you are going to use “#version 120”, then use “attribute.” What you have now is a mix of version 120 and later (version 330). Some drivers have very permissive compilers. Others may be screwing up because of this.
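To make the difference concrete, here is a small C sketch that holds the same trivial vertex shader written in both styles, plus a crude check that flags a mismatch between the "#version" line and the qualifier style. The helper names are invented for this example, the check only looks at the start of each line, and it is deliberately stricter than a real compiler (GLSL 1.30 still accepts "attribute" and "varying" as deprecated keywords). Treat it as an illustration, not a substitute for the driver's compiler.

```c
#include <stdio.h>
#include <string.h>

/* GLSL 1.20 style: vertex inputs are "attribute", outputs are "varying". */
static const char *vs_120 =
    "#version 120\n"
    "attribute vec3 pt;\n"
    "varying vec4 color;\n"
    "void main() {\n"
    "    color = gl_Color;\n"
    "    gl_Position = gl_ModelViewProjectionMatrix * vec4(pt, 1.0);\n"
    "}\n";

/* GLSL 1.30 style: the same shader using "in" and "out". */
static const char *vs_130 =
    "#version 130\n"
    "in vec3 pt;\n"
    "out vec4 color;\n"
    "void main() {\n"
    "    color = gl_Color;\n"
    "    gl_Position = gl_ModelViewProjectionMatrix * vec4(pt, 1.0);\n"
    "}\n";

/* Returns the number after "#version", or 110 when the directive is
   missing (the GLSL spec treats such shaders as version 1.10). */
int glsl_declared_version(const char *src)
{
    int version = 110;
    const char *p = strstr(src, "#version");
    if (p != NULL)
        sscanf(p, "#version %d", &version);
    return version;
}

/* Crude test: does any line of the source begin with this keyword? */
static int has_line_starting_with(const char *src, const char *keyword)
{
    size_t n = strlen(keyword);
    const char *line = src;
    while (line != NULL && *line != '\0') {
        if (strncmp(line, keyword, n) == 0)
            return 1;
        line = strchr(line, '\n');
        if (line != NULL)
            line++;  /* step past the newline to the next line */
    }
    return 0;
}

/* Returns 1 when the qualifier style agrees with the declared version,
   0 when the two are mixed. Stricter than the spec for version >= 130. */
int glsl_style_matches_version(const char *src)
{
    int old_style = has_line_starting_with(src, "attribute ") ||
                    has_line_starting_with(src, "varying ");
    int new_style = has_line_starting_with(src, "in ") ||
                    has_line_starting_with(src, "out ");
    if (glsl_declared_version(src) < 130)
        return !new_style;
    return !old_style;
}
```

A check like this could run once at load time and log a warning before the source is handed to the driver, so a mixed-style shader is caught on the dev machine rather than on a tester's stricter compiler.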

Wait.

We can use “#version 120” to tell the compiler to compile for 1.2, but it will still throw deprecation warnings every time we use anything from Version 1.2?

“That explains everything.” -Kenstar

Using “#version 120” should cause it to be compiled as an OpenGL 2.1 shader. I’m guessing that Shamus copied some code without the version header, and got the “deprecated” error message because the compiler defaulted to a newer version when there’s no header.

Later, he adds the version declaration, creating a mix of styles. “in vec3” is now an error, but some compilers recognize both formats and don’t care. Others report it as an error (like the one he showed at the end of the blog.)

For the record: You were right.

I DID have the header there, but perhaps I bungled it or typed 130 or somesuch? I was commenting and un-commenting lines of code, trying different combinations to figure out what the compiler wanted.

I’ve tested it now and it seems to be working as expected.

Of course, I’m sure everything will go bananas when it reaches the testers, but at LEAST it’s working as advertised on the dev machine. :)

Yay crowdsourcing!

I do recommend Stack Overflow for that. You’ll learn something, the web is enriched by a problem + explanation + solution, and the problem gets fixed in the process. If it’s not an entirely idiotic question (“Please do my homework for me”), Stack Overflow is the way to go.

I wanted to recommend that as well. Consider posting a question on either Stack Overflow or Stack Exchange Code Review. I’m sure there are a lot of GPU/Shader gurus over there, and Stack Overflow is much more helpful than most forums in my experience.

Thirded.

> Stack Overflow is much more helpful than most forums

That’s like saying that rockets are just slightly faster than shoes.

I’d consider Stack Overflow an absolute must as a professional programmer, at the very least for searching on it, but also for asking and answering questions.

Why would anyone ever possibly want to go faster than shoes?

Hey Shamus – maybe I just missed it re-reading your post, but I don’t think you actually posted a description of what the current shader bug is, as opposed to the rgb/rgba one…?

Disclaimer: I have not messed around with OpenGL or any high-performance graphics stuff in a long while. I am not trying to solve your problem with the “Why are you using X? Just use Y.” approach. Just curious and wanting to help / discuss.

So, you’re storing robot orientation in radians, and then using sin/cos to rotate the quads to display them? No wonder the CPU version took the bulk of your rendering time. I don’t know if the GPU version of the trig functions will be much more efficient or not. It doesn’t strike me as something that lends itself well to a series of small parallel operations, but I could be wrong and nVidia/ATI could have circuitry dedicated to trig functions for all I know.

I had to dig around in the cobweb-encrusted parts of my brain for this, but can’t you use matrix multiplications to do the rotation, and pre-calculate the matrices you need for particular angles? Since you’re on a fixed FPS, I imagine most of the rotation you’re doing is for missiles that adjust themselves by, e.g. ±1° each frame, or turrets that track by ±3° each frame. You could work out the appropriate Rotation Matrix ahead of time, and applying it is just a series of multiplications rather than the expensive sin/cos calculation. I think that instead of storing an entity’s current orientation in radians, you’d store a vector… but my maths gets rusty around this point. Maybe you’d store a rotation matrix of accumulated rotations. Linear Algebra was my favourite of the mathsy subjects I did, but still, it’s been a while.

I’m neither well-versed in hardware nor experienced in OpenGL (so feel free to ignore me completely as you see fit), but it’s my understanding that GPUs are optimized to be massively parallel, at least in comparison to the CPU. This makes sense to me, as doing something like applying a pixel shader means running an extremely small program (limited to something like 32 or 64 total mathematical operations, I forget which one it is) 2,073,600 times for a 1920×1080 resolution. Doing this without allowing for as much parallel computation as possible would seem like it would take an incredibly long time: assuming that each shader allows for 32 operations and you’re targeting 60 frames per second, that’s 3,981,312,000 operations per second.

unsigned long long i; /* 3,981,312,000 does not fit in a 32-bit int */

for (i = 0; i < 3981312000ULL; i++)

{

printf("Oh god make it stop\n");

}

As for the trig operations, it would make sense for a GPU to have hardware dedicated to solving trig problems; they're the sort of thing that's very likely to come up in the course of graphics processing.

Again, I'm 90% talking out of my ass here, and this is WAY out of my area of expertise (largely because I don't have an area of expertise), but what I’ve gathered about graphics and graphics computing has led me to believe that this is the case.

As far as I can tell from mostly skimming your post, you are mostly correct.

The CPU generally has 1-4, sometimes 6 or 8 if you’re lucky/rich, cores. They’re usually clocked at between 1.3 and 4.2 (these days) GHz.

The GPU generally has 1-4 THOUSAND (or more) cores. They’re usually clocked at, last I recall, 300-600 MHz.

Each “core” on a processing unit can do one thing at a time. So single-thread throughput on a CPU is WAY faster than single-core throughput on a GPU. But if you’re doing single-core throughput on a GPU, you’re doing it wrong.

This is, for example, why the GPU was so much better at calculating normals for Project Octant. It’s actually a little slower for a given GPU core to calculate a single normal than it is for the CPU to calculate the same normal, but the CPU has to calculate the normals consecutively, meaning that calculation time is dependent on the number of normals to calculate. The GPU calculates all of the normal vectors at the same time, so even if it takes 3 or 4 times as long to do(which it might not even, since the GPU would be optimized for vector calculations), that’s OK because until you run out of cores, it doesn’t take any longer at all to calculate another normal vector.

This is the same sort of thing. The rotation of a given robot doesn’t really have an effect on the rotation of any other robot, so every single one can be calculated in a vacuum. Even if the GPU takes 4x as long to calculate the rotation of a SINGLE robot, due to other bottlenecks, the calculation of every robot past the first is done effectively for free.(there will never be more robots than GPU cores. There’s no hard limit on this, but it’s a safe assumption.)

Well, the GPU is (…or, *should* be) really really good at matrix multiplication, because each vector entry is independent, so it’s what I like to call trivially parallelizable. And if you’re multiplying two matrices together, rather than a matrix and a vector, then it’s trivially parallelizable across each entry in the output matrix, for a similar total time required to finish.

Each entry, though, requires a full dot product of two vectors, which I think needs to be serialized.

(Multiplying two NxN matrices requires O(N) multiplications and O(N) additions, for each of the N**2 output entries. So done sequentially, as on the CPU, it’s O(N**3), but since they’re all independent and the GPU has craptons of cores available, you can get it done in O(N) total time by doing each item in parallel. Multiplying an NxN matrix by a 1xN vector needs the same number of multiplications and additions over each of the N output entries, so is either O(N**2) on the CPU, or O(N) on the GPU.)
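For reference, the sequential CPU version looks something like this in C. It is a plain textbook triple loop written for this comment (the function name and the multiplication counter are just for illustration). The point is that the two outer loops visit N×N independent output entries, while the inner loop is the length-N dot product that has to be summed for each one.

```c
#include <stddef.h>

/* out = a * b for n x n matrices stored row-major.
   Returns the number of scalar multiplications performed (n * n * n). */
size_t matmul(const float *a, const float *b, float *out, size_t n)
{
    size_t mults = 0;
    for (size_t row = 0; row < n; row++) {
        for (size_t col = 0; col < n; col++) {
            /* Each (row, col) entry reads only a and b, never another
               output entry, so every entry could run on its own core. */
            float sum = 0.0f;
            for (size_t k = 0; k < n; k++) {
                sum += a[row * n + k] * b[k * n + col];
                mults++;
            }
            out[row * n + col] = sum;
        }
    }
    return mults;
}
```

For the 4×4 matrices typical in graphics that is 64 multiplies done one after another on the CPU, versus 16 independent dot products of length 4 if each output entry gets its own core.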

I have no idea how sin or cos operations compare to multiplications in terms of how long they take on a GPU, though. I would agree that it *seems* likely that precomputing the various rotation matrices might go faster, but it seems like that’s only true if all the angles are known, and there’s a way to pass a full matrix down to the graphics card with each point. The latter seems like a large possible issue; shader programs usually only take vectors; the matrices involved usually have to be uniform.

Alternately, I suppose it’d work to precompute all the rotation matrices and set up a few thousand uniforms, then pick the right one in the shader. That might be either a huge complicated if structure (which is bound to be bad) or *maybe* a matrix array index. But I don’t know if matrix arrays are even a thing in shader programs…

One simple change that might help if sine and cosine were a bottleneck would be to only recalculate them when the angle changes rather than every frame.

I’m not clear that optimizing the vertex shader would gain anything. He said he was heavily CPU limited, so the GPU is probably sitting idle anyway. GPUView is a very good tool for visualizing your CPU and GPU load, and also for identifying blocking operations between the processors. Depending on how you are sending data to the GPU, there may be places where the CPU is waiting. Those cases are easy to spot with GPUview.

To actually optimize the GPU code, I’d want a tool to access the HW counters on your target system. Each of the GPU vendors provides a tool, at least for D3D, I haven’t done OGL in a long time. But use GPUview first to make sure it matters.

Accumulating rotations is usually not a good idea, because you accumulate errors. Something that is very close to floating-point-epsilon after one matrix multiply is quite a bit larger after a few thousand.

Learning OpenGL / GLSL is an absurdity of a minefield, and almost none of the difficulty comes from the concepts or even the code itself. The problem is that there’s so much conflicting information because of the vast amount of changes. Is this deprecated, is this horribly slow, how will this subtle api change between versions ruin my life?

I have found a few useful resources though.

A quick reference for OpenGL 3.2. Marks stuff that’s deprecated with blue text.

http://www.opengl.org/sdk/docs/reference_card/opengl32-quick-reference-card.pdf

A list of OpenGL extensions and how many cards support them.

http://feedback.wildfiregames.com/report/opengl/

Similar to the quick reference, but more of a crash course.

http://github.prideout.net/modern-opengl-prezo/

The only thing that stands out to me as unusual is the use of GLSL built-in variables (gl_Vertex, gl_ModelViewProjectionMatrix, etc). I think that most cases can safely use version 3.X stuff (where X is a low number … I forget the exact version, but that’s the maximum version that Macs support). The common way to accomplish that now is calculating your m/v/p matrices on the CPU & then uploading them as uniforms.

You also might want to investigate compatibility vs core OpenGL contexts: http://www.opengl.org/wiki/Core_And_Compatibility_in_Contexts

Well I think the best first step is what you’ve done here: post the code.

Given your readerbase, chances are someone will have ideas and suggestions that you’d need years of experience to figure out yourself.

I’m reminded about a quote regarding number of eyeballs and bugs, but I can’t quite bring it to mind.

“Given enough eyeballs, all bugs are shallow.”

It’s ESR’s version of Linus’s Law. It’s why open-source *tends* to produce much less buggy code. Quoth wikipedia, “more formally, given a large enough beta-tester *and co-developer* base, almost every problem will be characterized quickly and the fix will be obvious to someone”. (But the emphasis there is mine; both beta testers and people with the source are required.) Luckily for this case, the source is posted right here. :-)

And see MichaelG’s comments above. Those sound pretty likely to be correct to me.

Good luck monetizing that code.

I am not sure what you are hinting at. That someone might use the code Shamus posted for their own commercial project? Or that code cannot be “monetized” at all once it has been posted in public? I find the former rather unlikely and so minor an issue if it were to happen as to be irrelevant, and the latter simply not true.

It’s X2Eliah — he’s been making statements that are obviously prejudiced against open-source for a *long* time on this blog.

Personally, I just ignore him on this.

Counterexample:

https://www.google.com/finance?q=NYSE:RHT

Also, Linus is employed full time working on Linux now.

I don’t know squat about OpenGL or programming shaders but from a portability standpoint the first thing that caught my eye are your hard coded constants. You might want to make sure that the data type is actually being inferred the same way in all environments. Like use the “f” suffix or maybe even go so far as explicitly casting them to floats or defining them as variables rather than inline constants.

This doesn’t matter in a graphics shader.

*Everything* is a float unless specifically declared otherwise.

It’s only very recently that integers even became possible on GPU hardware.

That is entirely not true. Shader programs knew about — and used — integer types since day one. To cite from the GLSL Common Mistakes page:

float myvalue = 0;

The above is not legal according to the GLSL specification 1.10, due to the inability to automatically convert from integers (numbers without decimals) to floats (numbers with decimals). Use 0.0 instead. With GLSL 1.20 and above, it is legal because it will be converted to a float.

(End quote)

Also note the subtle ways in which this transitions between an error and a warning depending on the declared shader profile and vendor (NVidia being more relaxed than AMD). As has been pointed out above, if Shamus (inadvertently) mixed syntax styles of three different profile versions and, worse, had the shader compiler guess which profile should be used by leaving out the #version pragma in some tries, this is indeed a recipe for a hair-pulling experience.

On a related note, I can highly recommend the Lighthouse3D GLSL Tutorial (GLSL 120, GL 2.1, deprecated) and GLSL Core Tutorials (if you aim for OpenGL 3.3+, current). Each comes with a “Hello World of Shaders” tutorial including downloadable source code (and a VS2010 project for the latter), too.

From your comments I gather that the CPU is the bottleneck. This leads me to the question if you render your sprites all individually, or are you creating one object consisting of all the sprites and render them all at the same time, like I mentioned way back in your first post about particles. If you create a new draw call for each sprite, you definitely have your bottleneck right there. The GPU is really good at working on a big set of data, due to parallelization (SIMD), but if you are giving it only two quads at a time, it is mostly idle.

What you should do is create a list of single vertices, each on the position of one sprite, with data on rotation, alpha, color, texture ID, etc. Give these vertices to your GPU in one single draw call, pass them through your vertex shader (nothing to do here) and then use a Geometry Shader to turn each Vertex into a Quad with the respective data at each of the 4 new vertices. I don’t have any experience with GLSL, only really used HLSL, so I can’t give you exact code, but if you think it could help, I can send you my HLSL SpriteRenderer and the Class with DirectX drawcall (which is probably even less useful).
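The geometry shader itself would be GLSL or HLSL, but the batching half of the idea can be sketched on the CPU side in C. To be clear, this swaps in a different technique from the one described above: instead of expanding points into quads on the GPU, it expands them on the CPU into one big vertex array, which still ends up as a single draw call. The `Sprite` and `Vertex` layouts and the function name are assumptions made for the example, and rotation, color, and texture ID are left out to keep it short.

```c
#include <stddef.h>

/* Hypothetical per-sprite data: a center position and an edge length. */
typedef struct { float x, y, size; } Sprite;

/* Hypothetical vertex layout: position plus texture coordinates. */
typedef struct { float x, y, u, v; } Vertex;

/* Expands every sprite into 4 vertices in one contiguous array.
   `out` must have room for 4 * count vertices.
   Returns the number of vertices written. */
size_t build_sprite_batch(const Sprite *sprites, size_t count, Vertex *out)
{
    /* Corner offsets in units of half the sprite size. */
    static const float corner[4][2] = {
        { -1.0f, -1.0f }, { 1.0f, -1.0f }, { 1.0f, 1.0f }, { -1.0f, 1.0f }
    };
    size_t n = 0;
    for (size_t i = 0; i < count; i++) {
        float half = sprites[i].size * 0.5f;
        for (int c = 0; c < 4; c++) {
            out[n].x = sprites[i].x + corner[c][0] * half;
            out[n].y = sprites[i].y + corner[c][1] * half;
            out[n].u = (corner[c][0] + 1.0f) * 0.5f;  /* map -1..1 to 0..1 */
            out[n].v = (corner[c][1] + 1.0f) * 0.5f;
            n++;
        }
    }
    return n;
}
```

The resulting array would be uploaded once per frame and drawn in one call, rather than issuing a draw call per sprite. All sprites in a batch need to share a texture (or a texture atlas) for this to work.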

I stumbled upon this early in my game development project as a good way to massively reduce drawing overhead.

IF you can organize everything by texture. I even developed a mesh-packing trick where I can pack a bunch of meshes into a single array such that the Drawable objects don’t need to know about their neighbors, and the Draw Manager doesn’t need to know about the Drawable objects.

I don’t remember specific numbers, but I think I was seeing something like a 50% reduction in total duty cycle even with toy-sized(like 20 cubes) datasets. Current iteration is capable of drawing several thousand cubes per frame, so long as I don’t arrange those cubes in such a way as to cause 100 layers of overdraw.(even that only bumped me up to 150% duty cycle at 1080p)

Are you leaving the switch in the ‘More Magic’ throw?

Nice.

For those, who don’t know, a story about magic.

Well okay I’m drifting this away, but I’m surprised NOBODY mentioned Alice is quite a naughty girl for living with an entire football team.

Just a note to say calling robotic monstrosities ‘Dave’ is pure win

What are you doing, Dave? Stop that, Dave. My mind is going. I can feel it.

I’m just an IT guy and not a professional programmer, but when my team is being frustrated with learning new tech tools because they are weird and buggy and complicated I always coach them to push through it because even though it might seem like a waste of time to spend a week learning how to do Thing X with Tool Y instead of just doing it the old fashioned way (which usually involves setting yourself a calendar reminder every day to go log in somewhere and do something manually) it’s almost always better to push through the pain and learn the correct tool for the job.

For example, we had some stuff that could be automated through powershell scripts, but nobody knew how to write them. So I tasked someone with learning and they hated it, but now they know how to do it. So I had a person spend a week learning a tool that saves 2-5 minutes per day of lost productivity. Not a great investment by itself. But now the next time this situation comes up it will take less time, and over time this investment pays dividends.

So basically I’m saying look at this as an investment for the long haul. Based on reading your blog for a number of years I don’t think this is going to be the one and only time you’ll ever want to use shaders. A few days spent on this now will pay for itself in the long term and you’ll be happy you made that investment in yourself.

So, I know this sounds about as fun as ranking every kind of bee sting on the planet from least painful to most by testing them on your face, but-

Have you considered trying to detect the version of OpenGL the user has and having different shaders for different versions?

That is actually common practice. You can write different versions of a shader and the program uses the first one that doesn’t fail. (keyword: fallback)
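The fallback pattern is simple enough to sketch in C. Everything named here is hypothetical: `CompileFn` stands in for whatever wrapper the program has around glCompileShader plus a glGetShaderiv(GL_COMPILE_STATUS) check, and `fake_compile_120_only` is a stub that pretends to be a driver which only accepts "#version 120", so the example can run without a GL context.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical callback: returns nonzero if the source compiled. */
typedef int (*CompileFn)(const char *source);

/* Tries each source in order, most preferred first.
   Returns the index of the first one that compiles, or -1 if none do. */
int pick_first_working(const char *const *sources, size_t count,
                       CompileFn try_compile)
{
    for (size_t i = 0; i < count; i++) {
        if (try_compile(sources[i]))
            return (int)i;
    }
    return -1;
}

/* Stub standing in for a real driver: accepts only "#version 120". */
int fake_compile_120_only(const char *source)
{
    return strstr(source, "#version 120") != NULL;
}
```

Ordering the list from newest to oldest means capable machines get the preferred shader and older drivers quietly fall through to one they understand. Logging which index was picked would also give useful data from the testers.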

Never done it myself, though, outside Unity.

Sorry to hear you’re having trouble, but if you learn enough to write that totally-basic shader tutorial you’re talking about I would be extremely happy. Pretty please?

I don’t know how to solve your shader issues (plus it looks like good old Michael has got you covered) but I just wanted to put in a request that the “draw boxes around all sprites” render mode be in the options menu. It looks awesome!

I enjoy poking around with the developer tools when games leave them in. It can be fun to see the how many triangles are being thrown at the GPU, turn on wireframe and watch the tessellation (and potentially the overdraw), or just have individual sprites in a particle effect show their bounding boxes and get a sense of how complex it is.

That said, it’s only amusing for a few minutes, so I certainly wouldn’t put work into offering. But if it’s already there, I’d certainly appreciate the option.

Yeah, it looks cool!

It sounds like you are profiling your code manually? There are some really good profilers out there. VS2012 has a very good profiler built in; I like it almost as much as VTune. I’m not sure it’s in the free version, but the pro version has a free trial you could use. VTune also has a free trial option. There is also Sleepy for free. I haven’t tried it yet, but it looks pretty cool.

A good profiler is so much more powerful than using timing code, it lets you really dig down into the places you are wasting time, and timer resolution isn’t as much of an issue. If you install the symbols for your OS, it will also let you see time spent in system calls, which can be a big help if you are waiting on locks or API calls.

I would totally agree with the comment above about using a rotation matrix (you could get all fancy and use a scale matrix as well but if you’re constraining proportions you don’t really need to). Not only will this actually work out simpler in code, you will also find that it uses the GPU more efficiently (GPUs prefer to multiply entire vectors rather than doing an operation on each component).

You’ll probably hate this idea, but you might also find that dropping all of the old bits of immediate mode and pre-3.1 OpenGL might actually make your life simpler. The new model adds some baggage in terms of making your own matrix library, but you can turn your shaders into a black box that Does Stuff without worrying about pushing and popping the matrix stack, and you get to throw out all of the built-in variables apart from gl_Position and just pass your own numbers about between shaders.

If you’re using a vertex array object for your billboards, you could also use an attribute array containing all of your locations and rotations to do instanced rendering so that, for example, you fill up a couple of buffers and draw all of e.g. your missiles with one draw call, which will save a whole lot of CPU. I think that this article is really handy for learning to do instancing.

Well, I had an exam earlier this week in precisely computer graphics, where writing shaders in GLSL was one of the hand-in exercises (version GLSL 1.5 (OpenGL 3)). Given that this was my first course in computer graphics it is possible that what I’m about to say is common knowledge, or that it might not really solve anything due to varying versions of GLSL or what have you, but nevertheless:

1. The “in” qualifier hasn’t always been part of the language; earlier versions used “varying” to mark variables passed along from the vertex shader to the fragment shader. Precisely which version made the switch I don’t know, but the version used might determine whether the user gets protests from the program. Furthermore, the predefined “gl_Vertex” is only present in older versions of GLSL (than the one I used).

2. Is any information intended to be passed on to the fragment shader? As in, as an “in” parameter in the fragment shader? If so, those should be listed with an “out” attribute at the top of the vertex shader. At least that was how we were taught; it looks like you use the TEX0 variable to carry information.

Apart from that I really don’t have much to say. Sorry if I wasted your time.

If you need a better timer, you should look (at least under Windows) at QueryPerformanceCounter and QueryPerformanceFrequency. You can get 3 million ticks a second on a 2007-era Core 2 Duo.
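A minimal sketch of such a timer in C. The Windows branch uses the two calls named above; the other branch, using clock_gettime with CLOCK_MONOTONIC, is an addition of this example for non-Windows machines and not something from the comment. The function name `now_seconds` is made up.

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 199309L  /* expose clock_gettime on POSIX systems */
#endif

#ifdef _WIN32
#include <windows.h>
#else
#include <time.h>
#endif

/* Seconds elapsed since an arbitrary fixed starting point.
   Only differences between two calls are meaningful. */
double now_seconds(void)
{
#ifdef _WIN32
    LARGE_INTEGER freq, count;
    QueryPerformanceFrequency(&freq);   /* ticks per second */
    QueryPerformanceCounter(&count);    /* current tick count */
    return (double)count.QuadPart / (double)freq.QuadPart;
#else
    struct timespec ts;
    clock_gettime(CLOCK_MONOTONIC, &ts);
    return (double)ts.tv_sec + (double)ts.tv_nsec / 1e9;
#endif
}
```

To time a section, call `now_seconds()` before and after it and subtract. In a real program the frequency only needs to be queried once and can be cached.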

“It turns out that the most capricious, buggy, unpredictable, and mysterious part of my program is the vertex shader. This is frustrating because it's the thing I know the least about, the thing with the least documentation, and the thing that's hardest to debug”

It’s been a long time since I double-checked all of your Good Robot posts, so forgive me if you already answered this, but… why did you decide to use it? Why, when you started this whole thing, did you decide to include in your arsenal of tools a shader that you and everyone else in the world have little knowledge of and that is the hardest to work with? Why not choose another one from the beginning if you knew this was going to happen? Why the hurry, rather than finding a better-documented or more reliable shader before starting?

Hey Shamus.

Just a heads up from a graphics guy, you are mixing GLSL versions, GLSL is not backwards compatible. At all.

Different drivers are more or less strict with specs, and might attempt to compile it anyway. Meaning that you get weird bugs and unexpected behavior etc..

If you are using shader version 1.2, you CAN’T use the ‘in’ and ‘out’ attributes for shaders. This was before unifying storage in GLSL.

Back then vertex shaders took vertex attributes, and output varying data to the fragment shader.

In version 1.3, they changed it all around and unified storage specifiers so all data flowed between stages using in/out attributes.

You should be very careful not to mix shader versions together, especially since you don’t seem to bother reading the language spec. (I can’t blame you it is very boring..) and prefer just to borrow quick code samples.

My advice is, pick shader version 1.5, and keep a quick reference open whenever doing shader development.

with a sufficient number of football players and detailed scripts for each one, they could simulate another Alice.

i wonder if the disparity between GPU/CPU optimization might someday be more simply solved by just simulating a CPU on the GPU.

Depends on whether vertex shading etc is harder to do than the simulation…

In my own game(s) I used a 3D rendering package (trueSpace) to create my sprites. They look great, none of this shader stuff required. I’m not sure of the advantages of a shader and polygons versus prerendered image(s) (with alpha etc).

Undefined behaviour is the worst, right? What you really want is a compiler that, when asked to do something undefined, would throw a wobbly rather than guess what to do. That’s annoying, sure, but better it fails at compile rather than at runtime, right?