And now it is time to point and laugh at all the silly things that dum-dum Shamus has done over the past week or so. Let’s start with noise. Remember the image I ended with last week?


There’s something wrong here. Don’t get me wrong, that’s a cool canyon and all. I’m not knocking the canyon. The problem is that there is another canyon right next to this one, running in parallel. Defying all odds, there’s yet another one, very similar, just beyond that one. And another. And…

Yeah. My noise-generating system is broken. It’s not spewing out duplicate data. These canyons are unique. But we’re seeing patterns when we shouldn’t.

Also, the format of the noise makes it really annoying to use. It’s supposedly giving me values between zero and one. As I’ve plugged these numbers into my world-building system, I’ve noticed that it’s all kind of homogeneous. The possible range might be between zero and one, but the actual values it gives me are very, very rarely lower than 0.4 or higher than 0.6. This isn’t really a bug. This is how value noise works, actually. It’s just that it’s not very convenient like this.

So far I’ve designed my world-building code around this clunky mess, constantly re-scaling the noise and fudging the numbers until things look the way I want. But this makes for messy code. If I want mountains that range from zero to fifty, I’ll end up with code that looks like (noise - 0.4) * 250. Those are what programmers call “magic numbers”. They’re unexplained values floating around in source, and another programmer (probably future-Shamus, programming from the cockpit of his flying car) will look at this and say, “If you want mountains fifty meters high, why are you multiplying by 250? And subtract point four? What’s that all about? What idiot wrote this crap!?!”
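One way to keep future-Shamus happy is to give those numbers names. This is a minimal Python sketch, not the project's actual code. The constant and function names are hypothetical, and it assumes the raw noise really does live between 0.4 and 0.6. It gives exactly the same heights as (noise - 0.4) * 250, but it says why.

```python
# Hypothetical constants: the band where this noise actually lives, and the target height.
NOISE_LOW = 0.4
NOISE_HIGH = 0.6
MAX_MOUNTAIN_HEIGHT = 50.0  # meters

def normalize_noise(raw: float) -> float:
    """Stretch the narrow band [NOISE_LOW, NOISE_HIGH] onto [0, 1]."""
    return (raw - NOISE_LOW) / (NOISE_HIGH - NOISE_LOW)

def mountain_height(raw: float) -> float:
    """Height in meters; numerically the same as (raw - 0.4) * 250."""
    return normalize_noise(raw) * MAX_MOUNTAIN_HEIGHT
```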

So let’s calibrate:


This is our noise spectrum. When we generate a page of noise values, we should see a little bit of everything. A bit of low red. A bit of high purple. Things can follow a bell curve, but we need to see the full spectrum. If, during the course of churning out the 262,144 pixels for one of the upcoming images, I don’t get a single purple pixel, then it’s so rare that it’s not worth having in the spectrum. If I actually needed something to be that rare, there are better ways to come up with it than sacrificing one-fifth of my noise spectrum.

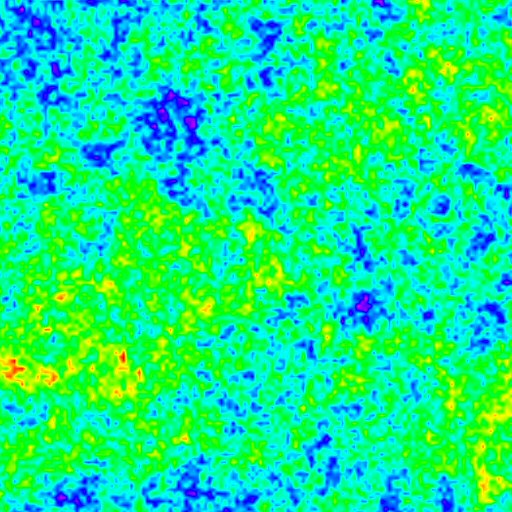
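Checking for an unused band is easy to automate. Here's a rough Python sketch. It assumes the noise comes back as floats in [0, 1] and that the spectrum is split into five equal bands (the five-way split is an assumption chosen to match the "one-fifth" figure above).

```python
def band_counts(values, bands=5):
    """Count how many values fall into each of `bands` equal slices of [0, 1]."""
    counts = [0] * bands
    for v in values:
        # min() puts v == 1.0 in the top band instead of one past the end
        counts[min(int(v * bands), bands - 1)] += 1
    return counts

def unused_bands(values, bands=5):
    """Indices of bands that never received a single value."""
    return [i for i, c in enumerate(band_counts(values, bands)) if c == 0]

# Noise squeezed into 0.45..0.55 only ever touches the middle band.
print(unused_bands([0.45, 0.5, 0.55]))  # [0, 1, 3, 4]
```

If you run it over all 262,144 values of a test page, any index it reports is a band that isn't earning its place in the spectrum.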

So let’s generate a page of noise and see what we get:


Wow. There are so many things wrong with this image.

- We’re using less than a fifth of the spectrum.

- Worse, it’s not even the middle of the spectrum!

- We can see a strong pattern of vertical lines.

- I don’t know if it will show up in the eventual blog-screenshots, but I can see a couple of pure blue pixels mixed in with the cyan values. These sudden shifts in values should not happen. The noise generator is for making smooth outputs. If I needed spikes, I’d generate them some other way. Random spikes like this will produce…

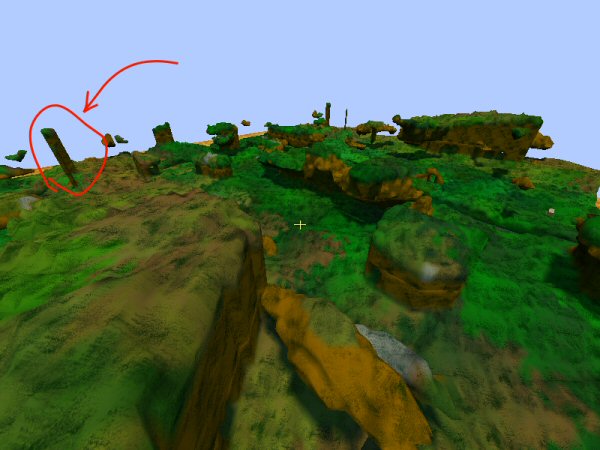


Yeah. Man, I’ve been seeing those telephone poles now and again. I wondered what was causing those.

(Half an hour of furious pondering, which non-programmers mistakenly refer to as “surfing the web and reading webcomics”.)

Ah! The input image. I’m using an image of pure noise – a 256×256 image of random color. It’s a PNG file. I’m just loading these data values and dumping them into a big ol’ bin of bits. Can you intuit the problem?

PNG files can have an alpha channel, and this one does. Each pixel is a byte of red, then a byte each for green and blue, then one for transparency. This image is opaque, which means the fourth value is always the same. (Max value.) I’m sure this problem appeared when I switched from Qt to Visual Studio and started using a different set of image-loading tools.
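Here's a small Python sketch of why that fourth byte matters. It assumes the loader hands back a flat buffer with four bytes per pixel in R, G, B, A order, and the function names are hypothetical. If you treat every byte as noise, every fourth value is pinned at 1.0 on an opaque image. That is the kind of regular pattern that shows up as stripes. Skipping the alpha byte leaves only the random channels.

```python
def naive_noise(pixels: bytes) -> list:
    """Buggy version: reads every byte, alpha included, as a noise value."""
    return [p / 255.0 for p in pixels]

def noise_from_rgba(pixels: bytes) -> list:
    """Read R, G, B as noise values in [0, 1] and skip the alpha byte.

    Assumes 4 bytes per pixel in R, G, B, A order.
    """
    if len(pixels) % 4 != 0:
        raise ValueError("expected 4 bytes per pixel")
    values = []
    for i in range(0, len(pixels), 4):
        # pixels[i + 3] is alpha: always 255 on an opaque image, so leave it out
        values.extend(p / 255.0 for p in pixels[i:i + 3])
    return values
```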

I could modify the image to have random opacity, but it’s probably safer to just drop that channel. Let’s see:


Much better. The stripes are gone and the noise is nearly centered. (It’s at 0.53, when a perfect average ought to come up with 0.5. Not sure if I care about that. That could be an artifact of the noise I’m using.)

I’m not so sure about the blue dots, which are where the noise system gives a value that doesn’t fit the gentle gradients it normally turns out. Why blue? Blue is not the bottom of the spectrum. The bad values all land at the same spot in the range; we’re not seeing incongruous purple or red dots, for example. I don’t see any pattern to their placement.

I’ll have to come back to this one later.

So now I just need to re-scale my noise to fill as much of the spectrum as I can. Here is what we’re doing:


Most of the spectrum is going to waste, which means that I’m groping around, trying to find the range where the “interesting” noise is taking place. Every time I ask for some noise I re-scale it, and then re-normalize it to the zero-to-one scale. It’s much neater and more sensible to do this at the source, so that I can just blindly query some noise and be sure that the numbers I’m getting will be useful. The only danger is this:


If I narrow the range too much, then occasionally a value will drift out of bounds. These values are probably very rare, but statistically inevitable. In fact, this is sort of the whole point of noise.

If I don’t tighten the range enough, then my values will be too flat and I’ll once again clutter up my code with scaling and endless tweaking. If I tighten it too much, I’ll end up with clamped values.

A clamped value might look like a mountain with a perfectly flat top. Or a cave with a flat bottom. It’s some point where the numbers drift off to the maximum value and just… stay there. Suddenly I’m not getting noise anymore. I’m just getting max value, over and over.
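Here's a minimal Python sketch of doing the calibration at the source, with hypothetical `low` and `high` tuning values. Anything outside the band is clamped, so two different raw values above `high` come out identical. That is exactly the flat mountaintop. The second function measures how often that happens for a given band, which makes the too-tight versus too-loose trade-off visible.

```python
def calibrate(raw: float, low: float, high: float) -> float:
    """Map raw noise from [low, high] onto [0, 1], clamping anything outside that band."""
    t = (raw - low) / (high - low)
    return min(max(t, 0.0), 1.0)  # every raw value above high becomes exactly 1.0

def clamped_fraction(samples, low: float, high: float) -> float:
    """Fraction of samples sitting on the 0.0 or 1.0 rail after calibration."""
    hits = sum(1 for s in samples if calibrate(s, low, high) in (0.0, 1.0))
    return hits / len(samples)
```

Tightening the band from 0.4–0.6 to 0.45–0.55 roughly doubles the clamped share for a sample like `[0.3, 0.42, 0.5, 0.58, 0.7]` (two of five versus four of five).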

I adjust the ranges a bit. Let’s see how it looks:


Lots of variety. If some of those red or purple spots result in a bit of flatness, we can probably play it off as part of the scenery. Much better to have the occasional freak spot than to have it bland everywhere.

Which leads us to our final problem:

On Friday I mentioned that it was taking crazy long to fill in the scene with data. It turns out that problem wasn’t so much a bug as a design flaw. Consider a grid of nodes:


Imagine we’re looking down from above, and the viewer is in A. Each letter represents a node – a 16x16x16 block of points. The order of the letters is the order in which nodes will be filled in. A, B, C, D, etc. This builds the stuff close to you first, and works outward in concentric circles. There are three stages to building a node:

- Generation – The noise system is used to create a bunch of points and decide what they’re made of. (Grass, dirt, what-have-you.)

- Lighting – The system looks at each block and figures out whether it’s in direct sunlight. Eventually this stage will probably include other light sources, so that underground areas can be lit.

- Mesh construction – We take the data from the previous two steps and turn it into a big pile of polygons.
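The build order can be sketched by sorting grid offsets by ring. This Python version is an illustration, not the project's code. It assumes rings are measured by Chebyshev distance (the larger of |dx| and |dz|), so on a grid the "concentric circles" are really squares. It also assumes that, within a ring, edge neighbors (B through E) come before corner neighbors (F through I).

```python
def build_order(radius: int):
    """Node offsets (dx, dz) around the viewer, nearest ring first.

    Ring k holds every node with max(|dx|, |dz|) == k. Within a ring,
    nodes closer in straight-line distance (edges) sort before corners.
    """
    cells = [(dx, dz)
             for dx in range(-radius, radius + 1)
             for dz in range(-radius, radius + 1)]
    return sorted(cells, key=lambda c: (max(abs(c[0]), abs(c[1])), c[0] ** 2 + c[1] ** 2))

print(build_order(1)[:5])  # the viewer's node first, then its four edge neighbors
```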

I had a bit of code in here that would cause new nodes to force their neighbors to update. When A is built, nothing in B exists yet. So it can’t make these blocks match up with whatever will eventually appear in B. When B does appear, there will be a gap or a seam or a bunch of extra polygons or whatever.

To correct for this, as soon as B is done building it would nudge A and tell it to update. So then A rebuilds, repeating step #3. Then C is created, and it nudges A, and A does step 3 again. Then D, and A is built again. Then E, then A again. Then it gets really, really stupid…

F is created, and it causes both B and C to be rebuilt. Oh, and because it shares a single corner cube with A, A must be built again.

The upshot is that in the process of filling in the nine nodes in the center, A will be rebuilt nine times.

Note that I couldn’t see this happening. I mean, if it rebuilds a node and the node looks exactly the same, then the process is invisible to me. This is a case of me just not thinking things through. I put in that bit of nudge code and moved on without considering the implications.

The fix for this was to just have it be a lot less stupid about when it builds meshes. A doesn’t do step #3 until the other 8 nodes have finished the first two steps. It means you wait a little bit longer up front, but in the long run it saves a ton of time.
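To see where the nine comes from, here's a toy Python simulation of the 3×3 patch. The coordinates are assumptions: A sits in the middle, B through E on the edges, and F through I on the corners, with F touching B and C as described above. The rule that every new node nudges all eight of its already-built neighbors is also assumed from the description. The function names are made up.

```python
from itertools import product

# Assumed layout in build order: A, then edges B-E, then corners F-I.
ORDER = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
         (1, 1), (-1, 1), (-1, -1), (1, -1)]

def neighbors(cell):
    """The 8 surrounding grid cells (edges and corners)."""
    x, z = cell
    return {(x + dx, z + dz) for dx, dz in product((-1, 0, 1), repeat=2) if (dx, dz) != (0, 0)}

def mesh_builds_nudge(order):
    """Old scheme: each new node builds its mesh, then nudges every already-built neighbor to rebuild."""
    builds = {cell: 0 for cell in order}
    done = set()
    for cell in order:
        builds[cell] += 1
        for n in neighbors(cell) & done:
            builds[n] += 1
        done.add(cell)
    return builds

def mesh_builds_deferred(order):
    """New scheme: a node builds its mesh once, only after all 8 neighbors finished steps 1 and 2."""
    have_data = set(order)  # assume generation and lighting are done for the whole patch
    return {cell: 1 if neighbors(cell) <= have_data else 0 for cell in order}
```

With this layout, `mesh_builds_nudge(ORDER)[(0, 0)]` comes out to 9, and the deferred version builds A once. The edge and corner nodes get 0 builds in the deferred version because they are still waiting on neighbors in the next ring out.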

Let’s crank up the draw distance:


Here we have a draw distance of 384 meters. It’s a bit bland because we’re only making these Swiss-cheese canyons.

I’d wanted to go for higher, but it seems sort of silly now. This feels pretty far. It took almost a minute to generate this. (Although over half of that time is spent filling the last few rows on the horizon. Every doubling of the view distance is quadruple the time cost.) That’s not too bad for rough, un-optimized code. I’m content that we don’t have any serious design flaws or obvious structural problems that would prevent us from creating scenery at an interactive rate. Moving at a full sprint, I can’t get anywhere near the edge of the terrain as it’s being built.
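The quadrupling falls out of simple area arithmetic. Here's a quick Python check. None of its numbers come from the post. It assumes one point per meter (so a 16-point node spans 16 meters), a square of node columns around the viewer, and a constant number of nodes per column.

```python
NODE_SIZE_M = 16  # assumption: 16 points per node edge, 1 meter per point

def node_columns(draw_distance_m: int) -> int:
    """Node columns covering a square that reaches draw_distance_m out in every direction."""
    per_side = 2 * draw_distance_m // NODE_SIZE_M
    return per_side * per_side

def outer_share(draw_distance_m: int, rings: int) -> float:
    """Fraction of all columns that sit in the outermost `rings` rings."""
    total = node_columns(draw_distance_m)
    inner = node_columns(draw_distance_m - rings * NODE_SIZE_M)
    return (total - inner) / total
```

Under these assumptions, 384 meters is 48 columns per side, 2304 in total. Doubling to 768 meters gives 9216, four times as many. The outermost 8 of the 24 rings hold about 56% of the columns, which fits with over half the time going to the last few rows.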

Let’s do one last test. Let me double the draw distance again. I’m also going to disable the fog, since I think it makes things look far away and kind of screws with your perception of how far you’re seeing.


That’s over half a kilometer. Took ages to generate the terrain, and I don’t think it really looks any more impressive. And on the ground, you can almost never see that far because there’s always something in the way. Maybe this would change if we had some mountains or other large-scale features to look at.

At any rate, the framerate is still over 40fps, even with this aggressive draw distance. Again, it’s too early to start patting myself on the back, but it does mean I don’t have any serious problems in what I’ve made so far.

I’m looking at this endless expanse of Swiss cheese and thinking the next step should be to add some variety.

Twenty Sided

That’s pretty interesting, but I don’t understand why you use an image as an input for noise.

A noise value is somewhere between 0 and 1 (including 0 and 1), right?

So why use the average of 3 color values? Won’t that always give you values clustered around 0.5? To get 0 you would need red, green and blue all to be 0, and to get 1 you would need them all to be 255.

(0+0+0) / 3 = 0 “black” and (255+255+255) /3 = 255 “white”

but an image rarely has full black or white pixels.

You should probably only stick to one color value like red for example.

Or maybe I’m not fully understanding what you’re doing… X)

The noise doesn’t look at the brightness, it looks at the spectrum. So (#0000FF) equals one while (#FF0000) equals zero. Or at least that’s how I’m understanding it.

I wasn’t averaging them, but treating each channel as a different bit of noise. But I was a little worried that things were going on with the color values that I wasn’t seeing, so in the end I switched to using red only and throwing away blue and green.

I see, but then again, maybe your red channel doesn’t have a lot of values around 0 or 255 meaning your initial image is too blurry.

I was actually wondering a similar thing. Reading about this project inspired me to start learning a bit about this procedural generation stuff, although I’m using 2D tiles instead of using noise values to modify a 3D set of points. To generate my noise, I did a bit of digging, found some code written for that purpose, and then just populated an array. The code I found is linked from the Wikipedia article for Simplex noise as one of its sources, and can be found here: http://staffwww.itn.liu.se/~stegu/aqsis/aqsis-newnoise/ (simplexnoise1234.h and simplexnoise1234.cpp).

It took a bit of experimenting with the inputs to the noise functions but I eventually got some nice fluffy looking clouds to use as a heightmap. Now I’m wondering if that wasn’t the best way to do my noise generation.

Noise can be generated easily, but the advantage of using an image as your noise source is that you can be sure you’re getting the same data every time you run the program.

(The advantage to saving your noise in an image instead of a text file or something is that you can visually inspect it. )
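For comparison, a seeded generator also gives the same data every run. Here's a Python sketch; the function name and seeds are arbitrary. It uses `random.Random.random()` because Python documents that method's sequence as stable for a given seed, while some of the other helpers may change between versions. A saved image sidesteps even that question, which is part of its appeal.

```python
import random

def noise_page(seed: int, width: int = 256, height: int = 256) -> list:
    """A width x height page of byte values (0-255) that is identical every run for a given seed."""
    rng = random.Random(seed)  # private generator: other code calling random.* can't disturb it
    return [int(rng.random() * 256) for _ in range(width * height)]
```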

I think I missed something. Why not just specify the distribution of the data and take random draws? Is the problem that the random draw might result in major shifts over a couple of pixels (the telephone poles)?

I can already hear future Shamus, in the cockpit of his flying car, go off on that idiot who thought a value average of 0.53 ‘wouldn’t be a problem’.

Interesting Swiss-cheese landscape you have there. But why is so much of it approximately the same height?

As far as I can tell, in last week’s post (http://www.shamusyoung.com/twentysidedtale/?p=15980#more-15980) he said he was generating terrain, and then taking stuff away with the noise to create tunnel-system things.

Yeah, that terrain looks very flat. I think your noise is still misbehaving.

^ I’m pretty sure he isn’t using the noise to generate terrain. He’s got a normal ‘rolling hills’ generator and then he uses the noise just to remove bits of it.

What he’s shown us of the noise generator so far doesn’t look like it would necessarily be very useful for generating actual terrain, because it doesn’t really differentiate between up and down. If you enlarged it, like the big shots he showed us, it would just look like really empty cave structures, because there are bits of roof. Terrain above ground has a lot more rules that we’re comfortable with, and I suspect that next week he’ll show us how he wants to generate it, and it will either involve doing something very clever to the noise, or no noise at all. Remember that in Frontier he had that erosion-generating thing going on.

EDIT: Also, this terrain is reminding me of that fake ICO sequel concept where you explored canyons with a hang glider, but it had no motor, so you always went down and had to climb back up for height. You could do fun things with it; there’s something very attractive about exploring canyons.

Looking great, I really enjoyed seeing how you tidied up so much in this one post. I bet it felt fantastic.

Where you moved A to being regenerated only once at the end of the process: I love it when I stumble back across hastily written code and make it really fast with a small tweak. The other day it took me only three minutes on some old code to cut a PHP page’s rendering time by 85%. Great times.

Are you being sarcastic or is it actually what you do when you need to think over a problem?

Nope, it is actually what he does while pondering: that, or playing a video game, or wandering around the house with a look on his face that tells us all he is THINKING and not to interrupt. The really funny thing is I work the same way, so both of us might be playing a game or surfing the web but in reality be working out some problem in our heads.

I tell that to my boss every time he sees me browsing the internet while working.

Unfortunately, he doesn’t work that way, and he is only soothed by my adequate production.

+1 for “background processing” a problem by distracting the shiny-seeking part of the brain with… well, shinies.

Subconsciousness is a wonderful thing, no matter what Freud said.

However, problems arise when the idea is hatched and it’s time to stop looking at the shinies…

Science backs up the idea that idle time is good for processing: http://www.nature.com/news/why-great-ideas-come-when-you-aren-t-trying-1.10678

I was being semi-serious. As Heather said, I often have flashes of understanding while web surfing, showering, before falling asleep, etc.

I hear they have a chamber for that now.

This seems normal to me. There are people for whom this is not the case?

People with one track minds :D I can and do solve problems whilst walking around/sleeping/washing etc but if I tried to do something more complex my attention would completely switch over to the new thing.

This is the main reason* I like to walk to work if I can. I have solved countless problems while walking to and from, and the walk is so much shorter when you can dive into a programming problem on the way. Driving however, I need to concentrate on, so it’s much more boring (I prefer programming over driving, even if it’s only in my head).

I also like to take a walk during a break if I’m working on something difficult, just to clear my head. My manager can’t seem to understand I need to surf the web to be able to work however :P

*of course it’s also cheaper, and healthier, plus it wakes me up in the morning.

For me, it depends. If I know I’m on the right track, then I just need to focus all my mental energy on the problem until it’s sorted out (which I usually do by drawing diagrams or pacing back and forth while staring into space, and muttering under my breath.) But, if I can tell that my current approach isn’t working, then I need to take a break and do something else until I think of something new to try.

I don’t know about Shamus, but for me the answer is… yes.

In fact, it’s what I’m doing right now!

Bill Watterson has a line in the Calvin and Hobbes 10th Anniversary Collection. I can’t find the exact quote (my brother has the book and the Internet is not being forthcoming). The gist of it is that his creative process consists mostly of sitting at his desk staring off into space – which to the layman looks remarkably like goofing off. Nicholas Meyer says something similar in his commentary on Wrath of Khan, that creativity requires taking some time to relax in a bath and stare at your toes.

My own inspiration tends to strike in the shower, but I keep playing videogames in the hopes that it will spread.

I do the same thing when trying to come up with a solution to a programming problem. Sitting there banging my head against it at a certain point becomes counterproductive.

Can’t count the number of times the solution to my problem has popped into my head while A) watching TV, B) playing a video game or C) sleeping. As long as the problem is rattling around in my head and my current task isn’t complex, I’m “thinking.” I do the same thing at work.

I’m doing it right now.

From above it looks like pictures of the top of a rainforest (treetops as far as you can see). I’m digging the look below, though; looking forward to you adding more variation.

Glad to see that I wasn’t the only one thinking that. Looks more like a giant forest of some sort at this point. At least from the top.

The issue with clamped values in your noise that you mentioned toward the end of the first section of this post is something that sound guys struggle with constantly. In sound engineering, the clamped values are called “clipping”. When it happens accidentally (like if an instrument suddenly makes a much louder sound than was expected and overdrives its channel) it creates a bit of distortion. When it’s done with excessive compression, which is essentially the same as your setting the value range too small, you get mushed-out attacks (beginnings of notes), which is especially noticeable on drums and other percussive sounds.

The latter is especially a problem on commercial recordings these days because sound engineers are under tremendous pressure to mix everything as loud as possible, on the misguided belief by the record labels that a song that’s louder and harder to ignore will sell better because it’s forcing itself into people’s consciousness or something like that.* They compel the mix engineer to mix everything as loud as possible, to the point that a lot of the peaks in the sound get chopped off as everything is crushed up against the dynamic ceiling. It’s painfully noticeable once you know what’s going on – listen especially to the drums in modern commercial rock songs. (Or better yet, don’t, if you want to enjoy the song.)

*If a radio station or somebody plays a bunch of different songs, they’ll all be at different volumes because they were mixed and mastered independent of each other, and a song that’s been compressed for high volume will generally be louder than a song mixed to preserve its dynamic range – to the annoyance of the listener who has to keep adjusting the volume at the beginning of each song.

The Loudness Wars in full effect.

I buy records sometimes, genuine vinyl, because often the CD version is horribly clipped, but the plastic version is not.

Metallica’s “Death Magnetic” album was a great example of this in action. Apparently, however, the version of the songs included in Guitar Hero was mastered differently, and didn’t feature the clipping. These graphs illustrate what you were talking about perfectly:

http://mastering-media.blogspot.com/2008/09/metallica-death-magnetic-sounds-better.html

Huh. That’s interesting to know.

I have an Apple MP3 player loaded with hard rock and metal. I manage to preserve my hearing however, by listening at the lowest audible level. Except that there is this one album that is so loud I jump a bit every time it plays, and have to fiddle around with volume controls constantly.

I was wondering why they did it that way.

Oh man, if you think that’s bad, try listening to a lot of indie/random-guy stuff from the internet (Song Fight, OCRemix, random people in home studios, etc.) – the levels will be all over the place. And I’ve found that the “Sound Check” function in the iPod music player, which is supposed to normalize the overall volume of your music, actually makes things WORSE more often than not.

It’s funny – I’ve been listening to music very loud through headphones (the better to hear sonic detail) for at least 20 years now, but when my hearing was checked last summer when I had an inner-ear problem, it turned out to still be very sensitive. I can still hear the “teenager noise” loud and clear, for instance (which is handy because electronic equipment that’s turned on but not doing anything frequently makes very-high-pitched background noise as well). So I guess “headphones + loud = deaf” is subject to a lot of individual variation.

Normalizing only works based on peaks. If a track is mixed very loud and another quiet but with high peaks (i.e. sharp, loud drums vs. loud everything) then they will still be of perceptually different values. The only way to get levels reasonably consistent is to compress all the music you play back, reducing audio quality in the process.

I would think that there would have to be some way of figuring out the average level of a track in terms of what actually registers psychoacoustically, rather than just going by peaks, and then just adjusting the overall volume. But what I know about sound and recording I’ve mostly picked up within a music context; I haven’t studied the science in any kind of depth.

There is: http://en.wikipedia.org/wiki/ReplayGain

From that article: “Sound Check is a proprietary Apple Inc. technology similar in function to ReplayGain.” And Sound Check is total shit. That makes me sad – either the concept is a dud, Apple has completely broken something rather than forking, obfuscating and polishing it like they usually do, or my ears or audio processing are so abnormal that no volume normalization technology will ever grant relief. :-(

Actually, clipping isn’t something people in audio production “struggle with constantly” unless they’re completely incompetent. Managing levels is very, very easy to do.

For the record, most radio stations put all their songs through insane amounts of compression and EQ anyway, so there’s literally no reason to “master for radio playback” at all.

And yet it continues to be mandated by the people with the money…

And yet you still hear it on professional and dedicated-amateur recordings all over the place. Maybe not as obviously as a blast of distortion on what’s supposed to be a clean track – it more often takes the form of popped consonants, muddy or artificial-sounding percussion transients (by accident or under orders), or simply an instrument that’s way too high in the mix. Managing levels certainly can be done right 99% of the time if you and the people you’re recording give a care, but I wouldn’t say that clipping only ever happens when someone is being “completely incompetent”.

Shamus, the central limit theorem may be relevant here. For your application, it basically says that averaging your noise data will tend to produce something with a bell-curve distribution of noise.
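Here's a quick Python illustration of that effect, using plain averaged random numbers rather than Shamus's actual value noise. Averaging more independent uniform values keeps the mean near 0.5 but shrinks the spread, roughly by 1/√n. That looks a lot like the squeeze toward the middle described in the post.

```python
import random
import statistics

def averaged_noise(samples: int, layers: int, seed: int = 0) -> list:
    """Average `layers` uniform [0, 1) values per sample, like summing noise octaves or channels."""
    rng = random.Random(seed)
    return [sum(rng.random() for _ in range(layers)) / layers for _ in range(samples)]

for layers in (1, 4, 16):
    values = averaged_noise(20000, layers)
    print(layers, round(statistics.mean(values), 3), round(statistics.pstdev(values), 3))
```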

That’s what I was thinking. Simple linear scaling is bound to produce clipping errors. You could do something cleverer with a cubic function or something, but then you’re starting to get kind of expensive.

Was anyone else getting a weird optical illusion from the green-and-yellow “why is my noise so limited” picture? While reading the text above and below the image (so that the image was not in the center of my field of view), it seemed like the image was “dancing” or swapping… like it was somehow flip-flopping while my eyes scanned back and forth across the words.

Anyone else notice that?

Instead of clamping the noise spectrum, don’t map it linearly. In your example you map 100% of your spectrum to the “interesting range” and hope that you don’t end up with problematic outliers too often. What about instead mapping, say, 90% of that spectrum to the interesting region? This allows you to preserve those outlier values without clipping while still making use of most of your spectrum. Obviously this makes the normalization more computationally intensive, but it would achieve a similar result with less risk of clipping. The exact mapping function will probably take some trial and error; the envelope of a sinc function would work well but is probably overkill in complexity.

You’re right. It would be better to re-map it so that the interesting stuff fills 90% of the spectrum, and scale the exceptional values into the 5% at the top and bottom.

And yeah, it’s more expensive, although given just how much it’s doing already this would be a small increase.
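Here's a piecewise-linear version of that idea, sketched in Python. The interesting band gets the middle 90% of the output, and the raw tails are squeezed linearly into the 5% margins at each end. The band limits and function name are hypothetical, and a smooth curve (cubic, logarithmic, or a sinc envelope) could replace the straight segments. Nothing here is clamped, so extreme values stay distinct rather than going flat.

```python
def soft_calibrate(raw: float, low: float, high: float, margin: float = 0.05) -> float:
    """Remap [low, high] -> [margin, 1 - margin] and squeeze the tails into the margins.

    Assumes raw noise lies in [0, 1] and 0 < low < high < 1.
    """
    if raw < low:
        return margin * raw / low                                  # [0, low) -> [0, margin)
    if raw > high:
        return 1 - margin + margin * (raw - high) / (1 - high)     # (high, 1] -> (1 - margin, 1]
    return margin + (1 - 2 * margin) * (raw - low) / (high - low)  # core band
```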

On reading the post, I immediately thought that it was an ideal case for logarithmic mapping or some variant thereof.

The statistician in me wants to map each point not to a value, but to a percentile: this point is ‘higher’ than 3% of the points, so it has a value of 3 (out of 100). It’s a few more steps in the middle of a program that’s already been written, and it invalidates every world created, but it has the upside that you can say ‘I want a cave system that is 77% dense’ and get it on the first try.

The problem with that is that to calculate percentiles, you need to operate on the entire dataset, and this dataset is literally infinite in size. You might be able to do something like bootstrapping, which doesn’t require the entire population, however.
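One way to approximate that without the whole (infinite) dataset is to sort a large sample of raw noise once and use it as an empirical lookup table. Here's a Python sketch with hypothetical names. How big the sample needs to be, and where to draw it from, are open questions.

```python
import bisect

def build_percentile_map(sample):
    """Sort a finite sample of raw noise once; it stands in for the infinite world."""
    return sorted(sample)

def to_percentile(raw: float, table) -> float:
    """Fraction of the sample lying below raw, in [0, 1] (an empirical CDF lookup)."""
    return bisect.bisect_left(table, raw) / len(table)

def threshold_for(fraction: float, table) -> float:
    """Raw value below which roughly `fraction` of the sample falls."""
    index = min(int(fraction * len(table)), len(table) - 1)
    return table[index]
```

`threshold_for(0.77, table)` gives a raw cutoff below which roughly 77% of the sampled points fall, which is the "77% dense" knob.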

There’s probably a good answer, but I was wondering why he didn’t just autoscale the values like I did when I was writing my own Simplex noise generator.

I suppose it has something to do with the difficulty of autoscaling across multiple chunks and could cause problems if several chunks are generated with very narrow values followed by one with much wider values(CLIPPING GALORE!). Perhaps the Green and Blue channels could be co-opted to help with this, since they’re apparently being ignored currently?

I’ve been reading this series from the start, but sadly some piece of information has fallen out of my head and I cannot find it (…er, rather, I don’t have time to find it… :-) )

So, I’m a bit confused: you mentioned that it took over a minute to create your final landscapes, and yet your framerate is still up at 40 fps? How is that?

It was rendering at 40fps, while it generated all that crap in the background.

So you have procedurally generated an ugly planet, a *bug* planet.

Starship Troopers: I see what you did there…

Two questions, Shamus:

First, is there any way to use clamping to chop off those dark blue pixels?

Second, have you considered faking a curved horizon to produce a limited view distance instead of using fog? I don’t know how hard this would be. Everyone seems to use fog, but I wonder if there’s another way.

Clamping works to better utilize the spectrum, but getting rid of the blue would require what in signal processing you’d call a low-pass filter (LPF). The problem with the dark blue is that the change in magnitude over distance (the frequency) is too large. An LPF allows through (passes) all of the frequencies below some threshold and removes everything above it. Basically, an LPF would put a clamp on the frequency domain and clip the values that are too high. In real terms, it would mean there is some maximum slope that everything in the world conforms to.

The downside to this is that an LPF is really computationally complex to implement. To do it right you’d need to do two Fourier Transforms of the image which even with the advanced FFT algorithms out there can be pretty complex.

Ah, I see. At first I was thinking the deep blue spikes were coming out of the less-deep blue areas, in which case you might think trimming them off would work. But on closer inspection they are not coming off of the darkest blue, so you couldn’t trim them away completely without flattening a good proportion of the other blue values in the process.

Low-pass filter => no need for a full-blown FFT back and forth; a little Gaussian filter will have an *equivalent* effect for a modest cost. No?

Yeah, a Gaussian filter would work, but the blurring effect might not be something you want on the rest of the image. If a moderate blur across the rest of the image is acceptable, then a Gaussian filter would work very well, but if the goal is simply to trim the high-frequency points down to a more reasonable size, an LPF would be the only way to go.

You could potentially apply the Gaussian only to the areas with too high a frequency, but at that point you have to parse across the image in two directions, and I’m not sure how much efficiency you save in that case.

I’m pretty sure a median filter would be better if you’re going after single pixels.
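For reference, here's a 3×3 median filter on a 2D grid of noise values, sketched in Python as a plain-list illustration rather than a fast implementation. A lone spike is replaced by the median of its neighborhood, while a smooth ramp in the interior passes through unchanged. A Gaussian blur, by contrast, would spread the spike into its neighbors instead of removing it.

```python
from statistics import median

def median3x3(grid):
    """3x3 median filter on a 2D list; edge pixels use only the neighbors that exist."""
    h, w = len(grid), len(grid[0])
    out = [row[:] for row in grid]  # leave the input grid untouched
    for y in range(h):
        for x in range(w):
            window = [grid[j][i]
                      for j in range(max(0, y - 1), min(h, y + 2))
                      for i in range(max(0, x - 1), min(w, x + 2))]
            out[y][x] = median(window)
    return out
```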

The combination of 2 mods in Minecraft allowed someone to have the distance cubes appear to be floating about all over the place through the use of shaders; specifically this.

That being said, the times where the cubes stretch into the sky very high are quite freaky, as opposed to normally where it sort of tilts side to side.

I’d seen videos of that mod, which is what got me thinking about it. If you can bend the horizon like that, surely you could bend it over the horizon.

It’s quite beautiful in a rather disturbing way… Everything is made out of jelly.

Are you using cubes or blobs at the moment?

I guess the blob approach will cause different problems, but at least it’s something that’ll really make this project differ from a “Minecraft-with-rounded-edges” clone… Instead of every cube being made out of 8 points in space, sharing corners etc. with other cubes, every point in space would just be made of matter or air or whatever, and look to 6 neighbouring points to form a shape. Using points like this could also improve some world-building ideas (imagine placing something like a wood plank on two adjacent points in space: instead of filling 2 entire cubes, you’d just have one wood plank between the two points).

Gahh, it’s hard to describe it, but I can clearly see it in front of me :D

Edit: the pictures at the bottom of the article kind of show what I see in my mind’s eye: http://local.wasp.uwa.edu.au/~pbourke/geometry/polygonise/

Each “corner” of a cube (or just each point in space) determines the shape of stuff

“The upshot is that in the process of filling in the nine nodes in the center, A will be rebuilt nine times.”

I do not think that means what you think it means. ; )

I’ve always understood (and used) “upshot” to mean, “the end result” or “the result of that previous stuff”. You’re the first person to suggest this is not how you understand it.

What does it mean to you?

Is (infrequent) clipping actually a problem here? I mean, it would be horribly noticeable in, say, a heightmap, but aren’t you using your noise to generate isosurfaces? That shouldn’t really care about clipping extrema.
