Today we’re finally going to start adding some features to make this thing look a bit more… What? You say you want another digression on noise filtering and interpolation techniques? You sure? Okay then!

(Nerd!)

If you remember from last time, we’re taking tables of random white noise and expanding them to create “interesting” patterns. Stuff like this:


Now, I really don’t want to have the next bunch of images be black & white scramble squares. That’s boring and it’s actually hard to see the effect I’m talking about. So I’ve altered my program to output example images that will use color-blending to make the gradients more clear. So the above image now looks like this:


Just remember we’re not really dealing with color data right now. We’re actually just generating heaps of numbers between zero (dark blue) and one (red) and this color-fade makes it easier to see what we’re doing. Okay? Great.

In the image above we’re looking at around 16×16 pixels, give or take the partials on the edges. We need to be able to expand this data and come up with values between these points.

In the previous entry, I put in a system based on cutting a quad (rectangle) into a pair of triangles and blending across it. Doing so yields this:


(Am I the only one who thinks that circle with a tail in the middle of the image looks kind of like the Mandelbrot set?)

I’ve been using this snippet of code for years. It wasn’t until I posted the last entry that I even questioned it. Someone asked why I wasn’t using bilinear filtering and I realized I didn’t have a good reason. There might be a good reason, but I hadn’t even considered the question. I’ve been using the quad-fade code for so long I just sort of take it for granted.

See, the quad code has two important features:

- It is fast. This is probably the fastest possible method that won’t create nasty seams.

- It breaks things into triangles.

The triangles thing is important in certain cases. Like, in collision detection. It’s handy if the player is standing on a square of terrain and I want to see if they’re ankle-deep, or floating above it, so that I can nudge them up or drop them down according to the long-understood rules of gravity and… walking up hills. If they’re walking on a triangle (and you are always walking on triangles in a 3D game) then I don’t want to treat the surface like it’s rounded. That will not look right.

So I used this code out of habit. I saw a blend-four-values problem and pulled out the hammer I always use on four-value problems. But is that the right choice to make here?

The quad blending begins with four values. Say you’ve got four pixels you want to blend together:


We take this square and divide it into a pair of triangles. We can do this by cutting from upper-right to lower-left, or from upper-left to lower-right. Whatever. It’s best to alternate your cuts in a checkerboard pattern to avoid ugly artifacts. (Alternating our cuts will lead to the “diamond” pattern you see in the earlier image.)


If the point we’re trying to generate falls in the lower triangle, then we blend between those three points. If it falls in the upper triangle, we use the corners of the other triangle. If I’m generating point X on the image above, then I create a value that is 1/4 cyan (upper left) and 3/4 orange (lower left) because we’re about 3/4 of the way down the image. Then I take that blended value and mix it half and half with dark blue (lower right) because we’re halfway across the image. It might sound confusing, but the code to accomplish this is actually a good bit smaller than this paragraph and only slightly less readable.


The downside of this is that the points aren’t given equal weight. The two points that form the right angles can’t affect the middle. The exact center is a blend of the two blues, without any weighting from the orange or green. (Please don’t correct me and explain that these are peach and avocado or whatever artists call these colors. I’m making polygons, not a salad. I’m an engineer, and the only color names I know are the ones from the Crayola 8-box. Doghouse Diaries and XKCD have already slain this horse and given it a good pummeling afterward. (Insert “pummel horse” joke here.) Let’s move on.)

Instead, let’s try bilinear filtering. (“bi” meaning two-way. Yes, just like the sex thing, you complete juvenile. “linear” meaning in a straight line. So, a couple of lines? Let’s try it.)


Bilinear filtering gives the values a more equitable distribution. To solve for the same x as before, we would blend halfway between the top two pixels for point A. Then we blend halfway between the bottom two for point B. Then we blend 75% of the way from A to B for x. Clear? I hope so, because I want to move on.

Oh, the same square with bilinear filtering looks like this:


Note that we needed to do three blending operations to get this value, as opposed to two blends for the cheapo blend I’ve been using. Now, a single blend is nothing. A few math operations. But remember what we’re doing with this data. We need to do three blends per pixel. But we’re doing this in three dimensions, so we need to generate two pixels and blend those together for a single octave of noise. And we generally want to combine about 8 octaves to make our noise interesting. So…

(3 blends * 2 pixels + 1 blend) * 8 octaves = 56 blend operations

That’s per cube. Oh, and most cubes need 2 bits of noise. (One to shape hills and one to dig caves, for example.) And a single 16-meter block of the world needs 4,096 cubes. And in a scene, even at the lowest possible visibility settings you’ve probably got around 400 such blocks rolling around in memory. (I think Notch calls them chunks. I’ve taken to calling them nodes.)

So even a crappy low-visibility scene needs to perform 183,500,800 blend operations. Also keep in mind that a single blend operation is…

    inline float Lerp (float n1, float n2, float delta)
    {
      return n1 * (1.0f - delta) + n2 * delta;
    }

Looks like a subtract, a couple of multiply operations, and an add. We need to do that one hundred and eighty-three million times. That will wipe the smug off the face of your quad-core 4GHz processor in a hurry. Your machine is going to work for this scenery, kiddo. So no, increasing the number of blends we need to do by 50% is not something to be done lightly. We need a really good reason before we start dumping more CPU juice on the problem. We need something important, like marginally better graphics or something.

Anyway. So this is what we have now:


And here is the same thing generated with bilinear filtering:


So… is it worth it? It’s hard to tell. Obviously it looks better in RAINBOW VIEW, but that’s not what the data is used for. In our program, the data is used to make caves. Does it make better caves?

True story bro: As of writing this paragraph, I have yet to try it. I honestly don’t know, 1,200 words into this post, if this is a complete waste of time or not. Let’s find out.


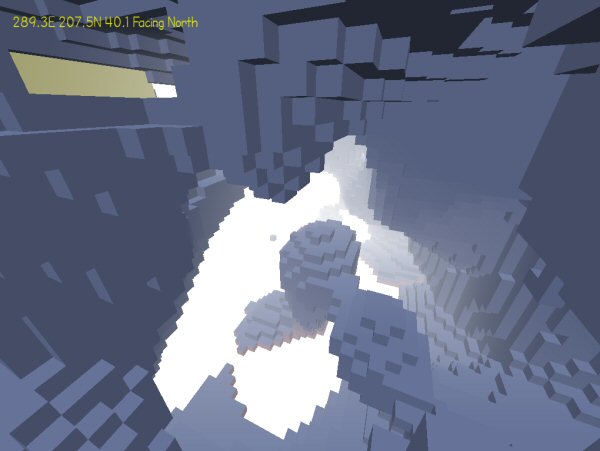

That didn’t take long. Okay, so I made a huge volume of noise caves with the threshold set at 0.5. (In English, it should be a roughly even 50-50 mix of air and solid… block stuff.) First, I take a screenshot of a nice bit of cave using the discount triangle-interpolation blending:


And now the exact same location, but generated with bilinear whatsits:


Is one of these really better than the other? It’s hard to say. What’s “better”? More visually interesting? More fun to explore? More realistic? Is the slow one really 50% slower?

This sounds like a decision to make later, when one of these variables matters. I’ve got both systems sitting side-by-side and can flip between them as I need. I guess for testing it makes sense to stick with the faster system anyway. If I stick with this project then eventually I’ll have to add a bunch of timers to keep track of where all the CPU is going, and that would be a good time to come back to this.

Also, in the process of writing the new filter, I managed to mess up and make this:


(I was blending between the wrong corners.) It’s useless, but kind of pretty in a strange way. I like it because it looks like one of those shuffle puzzles. I suppose it would be considered evil to present this as a shuffle puzzle and let someone drive themselves insane trying to get things to match up?

So that’s what we accomplished today… nothing! This riveting series will continue in the next entry unless there isn’t one.


Since the project is about oct trees, could you use the oct tree in the generation of the noise? Would that help cut down on the effort needed to generate it?

I really like this series (I enjoyed Project Frontier as well) and hope you continue.

Sounds like a cooking technique to me! Or perhaps an accounting one…

Oh, how the fingers dull in these wee hours of the morn, eh, Shamus? ;]

The topic itself is out of my non-programmer leagues, but so were most of the details of Project Frontier (save the lattice-building) – point is, even as a dunderhead, I’m still enjoying this series and happily following along.

It would be neat to see what kind of terrain that last mapping produces, though, even just on a lark… (Who knows, maybe it would look suitably alien or constructed?) Pictures, perhaps?

Oh, and heehee:

Eh, don’t worry. Not a morning person myself, either.

Of the two noise-generation in-world comparisons, I think I like the one using triangle-blending more.. The bilinear in that screen, at least, seems to produce sort of “globular” spaces and formations, things seem to approach to a sphere a lot. The result of triangle is a bit more sharp and horizontally flat – it just creates a more natural scene, I’d say.. It feels less artificial.

I was thinking exactly this, and came to comment to that effect, but you beat me to it. Smooth blends is an advantage when it comes to blending colors, but when you’re using it as a gradient for terrain building, regular shapes is not always good…

Thirded.

The occasional sharper corners from the triangle-interpolation blending look more realistic than all of the rounded edges from the bilinear filtering.

I am a bit of a student of landscapes, and both systems appeal to me. If we look around us, we can find lots of different landscapes, some more angular, and some more rounded. I guess it’s too much to ask to have both systems, but in different circumstances?

Shamus, why don’t you spend the first half of your day coding, and then the second half posting about what you coded?

I think he did; he only coded something entirely else than what he had planned.

Because he has other things to do. Eat. Sleep. Play with the kiddies. Write. (You know. Book and all.)

Besides, I kind of like his haphazard approach here. I guess it is kind of contradictory to the rules of programming, but I appreciate an honest coder more than a planning-ahead coder. And it’s always nice to hear about the ideas of a graphics programmer.

“Because he has other things to do. Eat. Sleep.”

Oh,thanks for reminding me.I should grab a lunch.

He didn’t sound like he was having a good week.

Missing SW, then bringing up ‘true/cool story bro’ memes, then a negative ending :(.

Still interesting and maybe someday I’ll actually understand a part of what he’s talking about but by far the best programming blog (for someone who will forever be a complete newb) I’ve found.

Hahahah, he spends most of his day coding or writing– whichever is the current bee in his bonnet, even when sick as he is now. He just occasionally stops, goes back and writes about what he already has finished.

Wasn’t all this noise generation intended for creating the terrain in the first place? As in, instead of delivering dozens of megabytes of premade terrain-data, just provide a seed and a decent algorithm which churns out a terrain at the start of the program? Like those ultra-small 3D games which fit into a few kilobytes but have you wait 5 minutes just to generate all the assets during startup.

Also, that little OCD-nitpicker-guy in my head now prefers bilinear filtering for the sole reason that it seems to be the theoretically better algorithm. Although that is the same guy who demands that all graphics should have ever only been raytraced, as everything else is just cheap, heh.

This is a very good point. Isn’t this noise sampling and filtering for generating terrain? Terrain that is then put into octrees for drawing and such? Why would it be necessary to repeatedly apply the filter? Or would it just take too long to generate?

Without the filter, the output would be impossibly “jagged”. It would just look like a random scatter of blocks. No cave would be larger than a couple of meters.

I just sent this to a friend with one square whited out, telling him it’s a shuffle puzzle, night before a test. I’m a bad person.

Is the purple robot made out of a Rubbermaid tote? Sure looks like it…

That’s Gypsy, the purple robot from “Mystery Science Theater 3000”. Her head is made from a Century Infant Love Seat, with an Eveready flashlight for the eye, and foam tubing for the lips, painted metallic purple.

If you’ve never seen any “Mystery Science Theater 3000” episodes, track down a few episodes, I’m sure you’ll get a laugh out of them. They basically mock really bad movies and do short comedy skits during the breaks.

Wasn’t that guy suggesting bicubic (or, since it’s 3d, tri-cubic, I guess) filtering? It’s way worse for performance, but if you want to disguise the sample points it’s far better. Also, doing this on the GPU with shader code can dispel any performance worries… assuming you can trust the graphics card to not cheat (so long as you don’t use texture sampling, I think you’re in the clear).

I wouldn’t mess with passing it off to the GPU. For one thing, it sounds like a monumental pain in the ass. Render to a texture using a shader that generates Perlin noise, and then get that texture back out, and then sample the pixels at whatever bit depth is popular on this screen… Gah.

Also, I imagine this applies only to later-gen cards. So people using integrated hardware wouldn’t benefit. I’d be speeding up things for people who already had an awesome framerate, and not helping the people who need it. Which means I’d need to ALSO maintain code for doing this on the CPU.

I’d also be worried that different implementations might produce different results. Can I be sure NVIDIA cards won’t use slightly different behavior than ATI? I can only imagine: People with different graphics cards would see slightly (or radically!) different scenery with the same seed data.

Still, I imagine if this was a AAA game this is exactly the sort of thing I’d have to do.

Not to sound snobby, but what kind of people are using integrated graphics hardware these days? Even most laptops from later than ’07 have graphics cards, not to mention desktops even. I mean, sure, you can keep cutting back things all the time to accommodate legacy issues, but you have to draw a line somewhere, otherwise where will you end up – a thing designed to run without a screen, a mouse and audio devices?

The other three computers in this house all run integrated hardware. Related: Minecraft even runs on them!

This is unrelated, but I’ve been playing Terraria recently and wonder what you thought of it. I’ve never played Minecraft, but you’ve said a lot about that and I’m curious how the two compare.

EDIT: I should add that I never really got into building fancy structures or making decorations just for the sake of it. I was focused more on upgrading equipment, beating bosses and so forth. How much of that does Minecraft have, compared to Terraria?

True fact: My laptop has integrated graphics (Intel HD).

On it I can play: Portal, TF2, KotOR, all 3 Mass Effects (in fact, 2 and 3 run better on it than the first does), Bioshock, Alpha Protocol, Oblivion, Deus Ex: HR, etc., and a horde of other less-intensive games. Really, it’s amazing what you can do without a video card when you don’t care about all that blindin’ bleeding-edge graphics shit.

(EDIT: Haven’t tried Minecraft (don’t terribly care to either), so I can’t comment on that, sorry.)

So either: integrated graphics are not as terrible as everyone believes, and it’s mainly gamers’ fetishism of ultra-ultra-detailed brown corridors that drives the idea; or MSI makes some bitchin’ good motherboards or however all that computery stuff works; or magic. Computer magic.

Hm. So.. why can’t that chip be used for what Shamus spoke about – letting the gpu part deal with the numbercrunching? I mean, the intel HD integrated gpus are still physical graphics chips, not emulations on a cpu thread – why can’t you offload the work to them?

In particular.. I was responding to this bit by Shamus – “Also, I imagine this applies only to later-gen cards. So people using integrated hardware wouldn’t benefit.”. Tbh I’m not sure why it wouldn’t apply to integrated GPU users? If it’s bound by generation, then the problem isn’t integrated/dedicated, but old/new, which brings me back to an earlier point – how much legacy support do you have to provide before it gets ridiculous?

Different grades of cards have different levels of functionality. Different levels of complexity for shader models and such. It’s not just that some cards are faster, but some cards do stuff that others don’t. This is why you’ll open up advanced graphics options in a AAA game and see some stuff greyed out on a low-end machine: This card doesn’t do that thing.

Like, maybe a super-card from NVIDIA has a really great tool that makes (say) depth of field super-easy with a special little bit of functionality. It’s also available on ATI cards, but it’s done differently, so if you want hardware-driven DoF you’ll need to write two entirely different sets of shaders, one for each company.

Then you’ll have older cards that don’t have that special tool. The cards still have the raw power to do DoF, but you kind of have to do it manually. So there’s another shader path to write for two year old cards.

Then there’s integrated cards that don’t have the functionality OR the juice to pull it off. You’ll have to write another shader path that leaves out DoF entirely. So now you’ve got four different shaders you had to write.

And then there’s some knockoff copycat card that incorrectly TELLS your game it can do X, but can’t, or does it slowly, and now you’ve got people bitching about your buggy game and you don’t know what shaders are failing or why and it’s impossible to test even a fraction of the chipsets.

It doesn’t help this mess that NVIDIA and ATI are trying to out-perform each other and at the same time cock-block each other, and both will propose features or roll them out, or copy the functionality of a rival, or deliberately make their own system work different.

This is why indie devs tend to stay away from the hardware unless they’re doing very straightforward stuff.

I have no idea how thorny hardware-driven Perlin noise would be.

Heck, I thought the shaders I wrote for Project Frontier were pretty basic, but they’ve got all kinds of artifacts and purple textures and other crazy problems. (Which magically don’t show up on any machine available to me.) And that’s just one shader doing something simple. Perlin noise would be a far more sophisticated undertaking.

So yes, the power is there, but getting at it can be hard if you want your stuff to run on most machines.

Hm, interesting. Can the “x card supports Shader Model 3” thing be used for general capability certification, though? I’m not really aware of any other statistic that tells what particular functionality scope a graphics card has – the supported SM, I thought, was an encapsulation of most of the non-company-specific features you could rely on.

It’s just that if I recall correctly, most gpus in today’s use, and indeed most integrated cards, support up to SM3 – if the functionality you’re thinking of is dependent on that, then maybe it’s not so infeasible?

(To be honest, a big issue here is that I don’t actually know what kind of functionality Shader Model version specifies, and how big of a deal it really is – obviously it won’t describe memory structure or architecture of the card, but.. the function calls, stuff like that? idk.)

But, yeah, I do recall several cases & articles of feature cross-wonkiness between amd’s and nvidia’s products… And yeah, doing it “directly”, by interfacing with the lowest-level stuff you can access on the gpu, that’s definitely a no-go – that kind of stuff, iirc, changed all the time with new revisions and architectures..

All of this “offload to the GPU” talk set my programmer spidey-sense tingling. The thought centered around one red flag in particular: Don’t performance tune too early.

“Rule 1. You can’t tell where a program is going to spend its time. Bottlenecks occur in surprising places, so don’t try to second guess and put in a speed hack until you’ve proven that’s where the bottleneck is.

Rule 2. Measure. Don’t tune for speed until you’ve measured, and even then don’t unless one part of the code overwhelms the rest.”

Article here: http://www.faqs.org/docs/artu/ch01s06.html

This rule shows up frequently, and with good reason – if you’re performance tuning without ever running, you’re just stabbing in the dark. I would avoid this like a plague-ridden cyborg zombie until you can prove that there actually is a performance issue. It’s stuff like this that can send you down programming rabbit holes of despair.

I wonder how long it will all take to settle down? Remember, CPUs were in a similar situation at one point, before everyone settled on x86 (and subsequently x64).

How well a game runs on an integrated video card is very dependent on how that game is programmed (as Shamus already pointed out). It’s possible those games are designed to make good use of your CPU, before passing things off to the GPU. Something else might be programmed to be highly integrated into the GPU, making the experience highly graphics card dependent.

My laptop has both an integrated(Intel HD3200) and a dedicated(ATI Radeon HD5450) video card, so I’ve actually had a chance to look at this sort of stuff.

Intel HD cards are generally reasonably powerful, but wholly insufficient for any sort of real gaming. Notably, they tend to lack vertex shader support, which isn’t an issue for 2D graphics(watching HD videos or playing Flash games or emulated SNES games), but since vertex shaders actually pre-date pixel shaders by a couple of years, you’re going to get into all sorts of trouble with 3D games. I haven’t tried on my latest one, but for the most part, any Valve game using the Source engine doesn’t run on an Intel graphics card, because they require vertex shader support. At best, it usually crashes halfway through the first 3D content load.

Wings 3D also doesn’t function properly under a combination of Windows Vista or later, and an Intel graphics card. It has to do with how the Aero display system handles OpenGL support.

Minecraft and Eve Online do both run on an Intel card, but Eve refuses to display texture resolutions above the lowest mipmap level, and Minecraft runs at about 1/4 the framerate that it does when the Radeon card is activated(I need to turn off fancy graphics and reduce the viewing range to “Short” to get above 30FPS, which the Radeon can do with Fancy graphics enabled and rendering distance between Normal and Far)

Additionally, I have a friend whose first experience with Moonbase Alpha was playing it after I had forgotten to switch from low-power to high-power graphics cards, and the game chugged so badly he’s refused to ever touch it again, even after my insistence that the problem wouldn’t happen again, so the Intel HD cards have actually damaged certain game sales and experiences. (There’s no way this is an isolated incident.)

So yes, there is a purpose to a dedicated graphics card. The frustrating part for me is how many laptop computers have an amazing CPU powered with an Intel GPU. I do too much stuff that requires an ATI or nVidia GPU, and they’re getting harder to find.

Usually when people say integrated chips they’re thinking of the cheap stuff from back in ’05, with the huge differences in performance.

They’re not referring to the new Intel HD 3000 or AMD Fusion lines, which are supposed to substitute for a dedicated card. (Though they’re still weaker than the high end stuff, obviously, much like how a high end laptop still ends up weaker than a high end desktop.)

I want AAA games designed for click-speaker and Morse key!

Well, maybe not. But designing an indie-ish game for low-spec hardware may well be a good choice. If you’re relying on a GPU, you get into the whole “we need to test this on the N most popular GPUs out there, to make sure it does the right thing”.

A lot more than you'd think, and if the software is to reach a large audience you have to consider this. With the 2013 Intel integrated GPUs and AMD's Trinity, onboard graphics are getting much faster than the <$100 GPUs that most retail PCs ship with (and that nVidia relies on for revenue). Most desktop replacement notebooks come with 'dedicated' GPUs, but most of those are so badly butchered compared to the desktop GPU they're based on that it's not worth the cost in battery life. The majority of notebooks ship with nothing but integrated GPUs.

I think the rise of 'decent' quality integrated GPUs will be a real shot in the arm for the PC games market. It rose on the back of largely software rendered titles (Doom, Quake), reached maturity with the largely affordable PowerVR (RIP) and 3Dfx offerings, and then ate itself alive by all but demanding $400-$500 GPUs.

It's Yet Another Library, but have you considered OpenCL or DirectCompute for accelerating intensive math like this? You could get most of the speed benefits of the GPU without having to deal with the variable output of different cards and drivers. Of course, only recent GPUs (dedicated and integrated) offer support, so you're shrinking the size of the 'market' even further. Not to mention the challenge of wrapping your head around a whole other compute model.

people with budgets who don’t play FPSs on their PCs, mostly, i would guess.

So the vast majority of the population then?

I imagine anyone who actually plays PC games as a hobby (“real” PC games, not Farmsville, Freecell, or Bejeweled) will at least have a cheapo video card in their computer.

I work in a computer shop: most computers we work on still have integrated graphics; desktop or laptop. It’s definitely not uncommon.

Yeah. I last bought a laptop in 2009 (so after the “2007” threshold Eliah has asserted) and Dell definitely treated adding a graphics card as a nonstandard, costly upgrade, one I was not in a fiscally reasonable position to take at the time.

And I just checked Amazon’s top-selling laptops list. (Which may be apropos of nothing, who knows. That’s definitely going to be a particular subculture and not representative of the market on the whole. On the other hand, I would suspect if anything the results would slightly favor more tech-savvy people.) The top seven or eight sellers all came with integrated graphics as the standard option.

Yeah, but… well… I mean…

Well, Dell is terrible.

They’re better than HP *shudder*.

Wait, scratch that, they’re the same level of evil. I just remembered that the newer Dell laptops don’t have a compartment for the hard drive that you can remove from the bottom… You have to take the entire computer apart (on some, including the MOTHERBOARD) to get the hard drive out.

You have to remember we’re not talking “Computer Users”, we’re talking “PC Gamers” here. “Computer Users” is an irrelevant demographic when we’re talking about what sort of hardware should be expected for a game.

Of course, GPU offloading would only be useful if this was a performance problem… which I don’t think it would be. But in case this is something you would look at later (taking into account I’m more of a Direct3D guy):

I believe render-to-texture is fairly invariant, so long as you avoid texture sampling, especially multisampling and mipmapping, though perhaps least-significant bits of transcendentals like sin(), log() might not be equivalent?

As for ease: well, you do have to figure out render to texture (http://www.opengl.org/wiki/Framebuffer_Objects), but then it should just be glReadPixels(). Near as I can tell, OpenGL 3+ supports renderbuffers with pretty random formats: GL_R8UI for single channel unsigned byte is probably a good choice? But for 2 (as supported by SDL) you’re probably limited to 3 or 4 channel 32-bit float buffers.

Otherwise, you could use OpenCL (or OpenACC, AMP, etc…) to absolutely guarantee avoiding variable results, and that (according to Wikipedia) can use GPUs from about Radeon HD 5x00 or nVidia GTX 2x0 series, but can fall back to x86 SSE2 using the AMD or IBM runtimes, or SSE4.1 or AVX with the Intel one, so there’s less of a worry about needing certain hardware or multiple implementations. (Strangely the nVidia runtime doesn’t do fallback? But you could probably dynamically load the right .dll if you sniff the hardware type.) Gory details here: http://www.khronos.org/conformance/adopters/conformant-products/

This is what OpenCL is supposed to solve.

HO HAI ^^ For the current Shamus usage, *proper* linear interpolation is good enough. Summed at various scale, cubic or linear will give the same puffy looking texture. Rotating the layer (golden angle, damn it !) will remove most of the annoying artifacts. Which only a gfx/coder nerd would spot anyway.

But if you want what I believe to be a good reference (subjective blabla), here you go

Noise & cubic interpolation & 3d

So thats what you were doing instead of spoiler warning.You know,you could turn this into a show:Will it blend,colour edition.

Also,nice intro.

Rainbow view sort of hurts my eyes.

So does this mean that we can solve all of our terrain variation issues by overlaying different 2D images across the entire surfaces? i.e. red allows a wide range of values (mountainous regions) and blue allows a very short range of values (plains regions)

Great post as usual, but your writing voice is a bit different today Shamus. That “bi” joke was almost a bit two much for me. I almost rolled (snake) eyes at that point.

Two things:

1) when bicubic is good

If you are using just the one layer, and interpolating linearly, and using it for something that will be directly presented to human eyes – example: elevation data – it will be painfully obvious where the edges between your grid’s rectangles are (each rectangle will have roughly smoothly rolling ground, then the edges will be visible as edges/corners where the ground suddenly changes direction), because the human visual system is very good at spotting abrupt changes to the second (I think) derivative (AKA nasty seams).

On the other hand (bi-)cubic interpolation looks at the adjacent squares and uses a cubic polynomial specially constructed so that the interpolated value is smooth to the second (third? I don’t really remember which, but it’s enough for humans) degree even on those edges.

2) speed-memory tradeoff

Let’s have a rectangle:

AB

CD

for each point P between ABDC, you can do what you are doing (always blend all four at once) and not have to remember anything

or you can split it in two steps

– interpolate (and remember) all points on the line AB, and all points on the line CD (varying just x on each line)

– for each P on all vertical lines between those two, interpolate between the two points on AB and CD with the same x as P (varying just y)

This is less computing per point (and scales much better with dimension), but requires more memory for precomputed values – which is not a bad thing if you are already holding the value of every point, but a bit of an obstacle if that’s exactly what you want to avoid.

But if you ration your nodes properly, you will only *need* to remember edge values for one node at a time.
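A minimal sketch of the two-step version, assuming a square block whose first and last samples sit exactly on the corners A, B, C, D. The name `FillQuad` and the row-major layout are my own choices for illustration, not anything from the post.

```cpp
#include <cstddef>
#include <vector>

inline float Lerp(float n1, float n2, float delta)
{
    return n1 + (n2 - n1) * delta;
}

// Fills a size x size block (row-major) from the corner values
//   a b
//   c d
// Step 1: blend along the top edge (a to b) and the bottom edge (c to d),
//         once per column, and remember the results.
// Step 2: for every point, blend vertically between the two remembered
//         edge values that share its x.
std::vector<float> FillQuad(float a, float b, float c, float d, std::size_t size)
{
    std::vector<float> top(size), bottom(size), out(size * size);
    float last = (size > 1) ? float(size - 1) : 1.0f;
    for (std::size_t x = 0; x < size; ++x) {
        float tx = float(x) / last;
        top[x] = Lerp(a, b, tx);
        bottom[x] = Lerp(c, d, tx);
    }
    for (std::size_t y = 0; y < size; ++y) {
        float ty = float(y) / last;
        for (std::size_t x = 0; x < size; ++x) {
            out[y * size + x] = Lerp(top[x], bottom[x], ty);
        }
    }
    return out;
}
```

For a 16×16 block this comes to 32 edge blends plus 256 vertical blends, against 768 if every point does all three blends itself. The extra memory is just the two edge rows, which fits the point about only remembering edge values for one node at a time.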

♪Why are there so many

Blogs about rainbows?

And what’s on the author’s mind?

Rainbows are spectra

But only of values

And rainbows have nothing to hide.

So we’ve been told

And some choose to believe it.

I know they’re wrong, wait and see.

Someday we’ll find it,

The rainbow collection

The readers, the dreamers, and me.♪

Poorly rewritten, but that’s what you’ll get from a no-talent hack in 5 minutes.

I liked it!

And now the chorus again in code:

Collection rainbow = new RainbowList(readers, dreamers, me);

I enjoyed the more informal conversational tone of this post. It seems that your style became a lot more formal while you were writing “Witch Watch”. I’m glad the chattiness is back.

Oh, and also, cool stuff about noise and filtering. Thanks!

I don’t know how you do it, Shamus, but you make reading about programming fun, even to the utterly code-illiterate like myself.

If I'm generating point X on the image above, then I create a value that is 1/4 cyan (upper left) and 3/4 orange (lower left) because we're about 3/4 of the way down the image. Then I take that blended value and mix it half and half with dark blue (lower right) because we're halfway across the image.

I don’t get it. Shouldn’t that mean that the final pixel is 1/8 cyan, 3/8 orange, and 1/2 dark blue? With the specific colors you used, that blend would have a hex code of about 626876, which is a medium grey that’s very slightly bluish. But point x on your first blended image there is 0376cb, a definite blue.

And a hypothetical point y very close to the middle of the image would be 1/4 cyan, 1/4 orange, 1/2 dark blue. With the formula you describe, the orange should be affecting the center just as much as the cyan is.

Am I missing something important here?
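For what it’s worth, the arithmetic in the question can be checked with a few lines. This sketch only works out the corner weights implied by the two-step blend as the quoted text describes it (vertical blend first, then across); the struct and function names are hypothetical, and no actual colors are assumed.

```cpp
struct CornerWeights {
    float upperLeft;   // cyan in the example
    float lowerLeft;   // orange in the example
    float lowerRight;  // dark blue in the example
};

// Weights implied by: blend upper-left with lower-left by `down`,
// then blend that result with lower-right by `across`.
inline CornerWeights TwoStepWeights(float down, float across)
{
    CornerWeights w;
    w.upperLeft = (1.0f - down) * (1.0f - across);
    w.lowerLeft = down * (1.0f - across);
    w.lowerRight = across;
    return w;
}
```

Calling `TwoStepWeights(0.75f, 0.5f)` gives 1/8, 3/8 and 1/2, and `TwoStepWeights(0.5f, 0.5f)` gives 1/4, 1/4 and 1/2, which matches the numbers in the question.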

Yeah, I think the text description is a little off. The resultant color is (like you said) 0377CB and the orange has FF in the red channel, so the orange is much less than 3/8 represented. Blending only the cyan 1/3 and the blue 2/3 we get “0371C8” which is really close to what is shown.

I ramped up the Red channel on the image “octant5_8.png”. Here’s the result. You can see that the red from the orange (and the green) doesn’t reach anywhere near the central diagonal. Something fishy going on for sure. The visual result looks more like the diagonal corners (Cyan and DarkBlue) are blended first, and then other corner (Orange) only gets blended in when the position is over 1/3 from the diagonal.

So, yeah, the text and the image don’t match. You’re not crazy.

That is not what their ex said.

I love these programming articles.

.Net apps may pay the bills, but this is the sort of stuff that I like to learn about with the little spare time that I have.

I am always up for more digressions on filtering techniques!

I was hoping for a view of the scene from your messed up slide-puzzle map, could’ve made some very interesting shapes.

Yes, I think it’d be interesting to see as well.

Shamus, could you still put up some images?

I wish I really had any idea what was going on in this article, I just got misty eyed through the entire thing picking up on the odd thing here and there. Ah damn I don’t understand code…

I agree, Shamus’ writing voice is definitely in the I-am-or-have-recently-been-sick-th person. Sorry Shamus, hope you are feeling better…

Looks like a subtract, a couple of multiply operations, and an add.

Come on, you can do better than this!

inline float Lerp (float n1, float n2, float delta)
{
    return n1 + (n2 - n1) * delta;
}

There, with only one multiplication.
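A side-by-side sketch of the two forms. The two-multiply version is my guess at what "a subtract, a couple of multiply operations, and an add" looks like, not a copy of the code in the post, and both function names are made up for the comparison.

```cpp
// Assumed shape of the original: one subtract, two multiplies, one add.
inline float LerpTwoMul(float n1, float n2, float delta)
{
    return n1 * (1.0f - delta) + n2 * delta;
}

// The rearranged form from the comment: one subtract, one multiply, one add.
inline float LerpOneMul(float n1, float n2, float delta)
{
    return n1 + (n2 - n1) * delta;
}
```

Both give 3 for `n1 = 2`, `n2 = 6`, `delta = 0.25`. One caveat: with floats the one-multiply form is not guaranteed to land exactly on `n2` when `delta` is 1, because `n2 - n1` can round. For noise values between zero and one that is unlikely to matter.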

“But we're doing this in three dimensions, so we need to generate two pixels and blend those together for a single octave of noise.”

Maybe it’s an artifact of how this is only easy to explain in two dimensions, but why is that the case?

It seems like most of the intermediate values would only need to be calculated once, not once per pixel that they are used in. You need to blend all the Pixels A ONCE, then all the Pixels B ONCE, then all the Pixels C (z axis, perpendicular to the screen in the metaphor) ONCE. Then do all the Pixels X once, and blend X and C for the final field.

Your 16x16x16 node would need 16 blends each for A, B and C, then 256 (all iterations of A and B) for X, and 4096 for the final data, or 4400 overall blends per octave per node.

Alternately, blend A and C to form a ‘top’ plane, then B and D (with C:D::A:B) to form a ‘bottom’ plane, then blend the appropriate points in the top and bottom plane to get the field. Using 8 starting values at the vertices of a cube with base IJKL and corresponding top MNOP: Blend IJ and LK 16 times each to form lines A and B which contain 16 points each. Blend MN and PO similarly to form lines C and D. Blend each of the 16 points in A with the corresponding point in B 16 times to form plane alpha, and likewise with C and D to form plane beta. To generate the pixel values, blend the appropriate point in alpha with the corresponding point in beta, weighted according to the relative ‘height’: 16 blends each for A,B,C,D; 256 blends each for alpha and beta, and 4096 blends for the pixel values gives 4672 per node per octave.

Times 8 octaves, two bits, and 400 nodes generated at the same moment gives 29,900,800 blends, or about 16% of what you calculated.
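The tallies above can be reproduced with a small counting sketch. It only counts blends and does no interpolation; the function names are invented, and the `passes` parameter stands in for the "two bits" factor, whose exact meaning I am taking from the post on trust.

```cpp
// First scheme: three lines of n points, one plane of n*n, then n*n*n values.
inline long long FirstSchemeBlends(long long n)
{
    return 3 * n + n * n + n * n * n;
}

// Second scheme: four edge lines (A, B, C, D) of n points each,
// two planes (alpha, beta) of n*n points each, then n*n*n final values.
inline long long CachedBlendsPerNode(long long n)
{
    return 4 * n + 2 * n * n + n * n * n;
}

// Per-node count scaled by octaves, the assumed "two bits" factor, and nodes.
inline long long TotalBlends(long long n, long long octaves,
                             long long passes, long long nodes)
{
    return CachedBlendsPerNode(n) * octaves * passes * nodes;
}
```

`FirstSchemeBlends(16)` is 4400, `CachedBlendsPerNode(16)` is 4672, and `TotalBlends(16, 8, 2, 400)` is 29,900,800, so the numbers in the comment hold up.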

If the reason that quad blending and bilinear filtering don’t produce nasty seams is that the values for the edge of one node are based only on the corners that share that side, then this should share that property.

Just realized that my algorithm is probably identical to yours except for storing intermediate values that need to be used multiple times, rather than calculating them fresh every time.