|

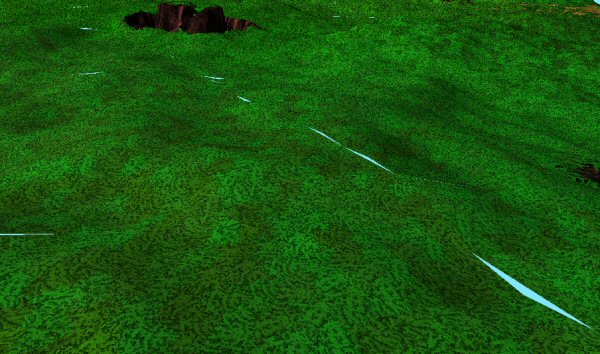

My project. Looking nice enough so far. But it has a problem. The problem is this:

|

Those are triangles.

|

A LOT of triangles.

See how sections of terrain are different colors? Each of those is a block of terrain, which is a grid of 32 x 32 squares. Each square is a pair of triangles. So, each terrain is 2,048 triangles. There are 729 of them in play right now, which gives us our grand total of 1,492,992 – one and a half million polygons.

If I go way up in the air and look down, I see this:

|

That seems like a ton of terrain data, and it is. Yet from the center to the edge is only 416 meters. Not even half a kilometer! Yeah. Filling in the world out to the horizon is tough. It takes a lot of data. Most games don’t even go this far. I think Minecraft lets you see about 256 meters. I think Oblivion, Fallout 3, and New Vegas stop well short of half a kilometer. (Okay, FUEL had a massive view distance, but that was made by a real team of guys, so sue me.)

Now, my graphics card can handle this. In fact, it’s not even really trying. But throwing polygons at the graphics card like this is wasteful. Horribly, horribly wasteful. It’s not affecting my machine, but if this was a low-end machine, I’d really be slowing down.

I don’t actually want to work on this right now. The real bottleneck is on the CPU side, where my machine struggles to churn out the pages of data needed to fill this terrain. By contrast, actually rendering it is no big deal. That’s all handled by the GPU, which, as far as I can tell, is bored right now. Having said that, I can’t leave things like this. I need to make sure the terrain system works right before I move on, which means taking some basic steps to reduce polygon count. I don’t want to be one of those developers who writes inefficient engines and expects you to buy new graphics hardware to make up for it.

This step is going to mean mucking about in the guts of my terrain engine, and it’s easier to do that now than later. Now the thing is fresh in my mind. Later I will be foggy on the details, and I’ll have a bunch of other systems built on top of it.

For polygon optimization, I’m going to go back to Peter Lindstrom and his magic method of polygon reduction, which I used way back in 2006, during my original terrain project. Rather than make you go back and re-read that series, I’ll reprint the relevant steps here:

| To use a quad tree, our grid must be a perfect square. It has to have the same width and height, and they must be a power of 2. In the terrain I’ve been using, the width and height is 32, which is 25. For our example here, let’s assume we’re working with an 8×8 grid, which is 23. |  |

| The main grid is divided into 4 quadrants. So, we have this sub-grid of 2 x 2. Then… |  |

|

…each of those squares is, in turn, divided into 4 quadrants. This yields a sub-grid of 4 x 4, which in turn can be divided further, and so on.

Anyway, this gives us a way to look at the grid at different levels of detail. |

|

| My program starts at the largest blocks on the 2 x 2 grid. Let’s say it examines the four blocks in question and determines that the lower-left one is a bit hilly, and needs to be divided further. The other three quadrants are flat enough that they can be left alone. So, we divide the lower-left block. |  |

| Within that block, we have 4 smaller blocks, and of those 4, we determine that the upper-right has some detail that needs to be preserved, but the other 3 are again simple enough that they can be left alone. Once again, we divide the one block into 4 smaller ones. |  |

| As you mark individual squares for use, you’re actually flagging points on the grid for use. When you do so, there’s this chain of other points you mark as used. You step up to the next largest spot on the grid, and flag a point as in-use. That point, in turn, reaches up to the NEXT larger square on the quad tree and flags a point for use, and so on, until you’re talking about the corners of the entire grid itself. |  |

This is a really complicated system. The math behind it is trivial, but the rules controlling the points are confusing. Then turning those points into triangles is even worse. It took me a couple of days to figure it out the first time, and I don’t remember any of it five years later.

But!

In my least entry on project Hex, I mentioned the importance of comments. Check out the comments I left in my old terrain project:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 | /*-----------------------------------------------------------------------------

North N

*-------* *---+---* *---*---* *---+---*

|\ | |\ /| |\Nl|Nr/| | | |

| \ Sup | | \ / | | \ | / | | A | B |

| \ | | \ / | |Wr\|/El| | | |

| \ | West+ * +East W*---*---*E *---+---*

| \ | | / \ | |Wl/|\Er| | | |

| Inf \ | | / \ | | / | \ | | C | D |

| \| |/ \| |/Sr|Sl\| | | |

*-------* *---+---* *---*---* *---*---*

South S

Figure a Figure b Figure c Figure d

This takes a single quadtree block and decides how to divide it for rendering.

If the center point in not included in the mesh (or if there IS no center

because we are at the lowest level of the tree), then the block is simply

cut into two triangles. (Figure a)

If the center point is active, but none of the edges, the block is cut into

four triangles. (Fig. b) If the edges are active, then the block is cut

into a combination of smaller triangles (Fig. c) and sub-blocks (Fig. d).

-----------------------------------------------------------------------------*/ |

Thank you, thank you, Shamus of 2006. Your ten minutes writing those comments just saved me a day and a half. There were a lot of wonderful notes in that thing, which allowed me to take the Lindstrom system and drop it into this Frontier project in just a couple of hours.

Note to self: You should be putting more comments in THIS project.

So the polygon reduction is gone, and we are left with a NEW problem. This problem:

|

Gaps. Removing polygons naturally changes the shape of the terrain. These changes cause the squares to no longer line up with each other. There are now gaps, where you can see between the blocks of terrain. Here is an example:

|

There’s a terrain right under us. (Pink) And other in front of us. (Yellow) At A, you can see that Pink needed a point that yellow didn’t, and at B you can see there was a point that yellow needed, but not pink. The way to reconcile this is to “stitch” them together.

Sigh. And this is why I didn’t want to have to do this step. I HATE stitching. It’s not hard or complex, but it feels… inelegant. See, programmers talk about encapsulation. That’s the idea that you make a bit of code that does one thing, it does it well, and once it’s done you can treat it like a black box and not worry about how the insides work. The overall structure of the program should be as simple as possible, even if the individual parts are dauntingly complex. Each system should be connected to as few other systems as possible.

Up until now, terrains have been a lovely black box. I just make a terrain, tell it where it goes, and give it a few milliseconds between frames. The terrain block handles everything else. Now my terrain blocks have to interact with each other. Each one has to know about its four neighbors (if they exist!) and be able to negotiate with them to make sure their edges match. Which edge points is it using? Is that neighbor actually done building? Has it changed its edges recently, perhaps in response to some OTHER terrain block? Terrains are no longer just something you can create and forget about. Now you have to create them, and then help them find each other.

Now, this isn’t a horrible thing. It’s actually kind of trivial and not worth the two paragraphs I’m spending complaining about it. But when I have to add inter-connected stuff like this it makes me uneasy. It usually means I need to re-think my design. Not always, though. Some problems are hard, and there’s nothing you can do but suck it up and deal with the complexity.

|

118,353 polygons. Less than a tenth of what it was before. And it looks exactly the same.

Well, we fixed something that wasn’t a problem and added a bit of complexity that I dislike, but the important thing is that it’s built right. Somewhere there is a crappy laptop that will really appreciate the effort.

Next time we’ll add something cool.

Batman v. Superman Wasn't All Bad

It's not a good movie, but it was made with good intentions and if you look closely you can find a few interesting ideas.

Please Help I Can’t Stop Playing Cities: Skylines

What makes this borderline indie title so much better than the AAA juggernauts that came before?

Another PC Golden Age?

Is it real? Is PC gaming returning to its former glory? Sort of. It's complicated.

Shamus Plays LOTRO

As someone who loves Tolkein lore and despises silly MMO quests, this game left me deeply conflicted.

The Best of 2013

My picks for what was important, awesome, or worth talking about in 2013.

T w e n t y S i d e d

T w e n t y S i d e d

Performance is ALWAYS cool.

My crappy laptop thanks you.

As does mine. I was really hoping that I would be able to experience the end product. Thankyou so much for thinking about people like us!

I don’t use my crappy laptop much anymore, but I was stuck with it for several years before I got my new monster, and it thanks you too.

If the end product has LAN support, this effort will not be wasted on me and my friends.

We always need to dig out the crappy laptops to get everyone into the game.

So does mine :)

My crappy home desktop thanks you.

My crappy laptop also thanks you.

It’s two years old and runs Portal 2 just fine on almost-full-settings with a little tweaking, but it can barely handle some of the less efficient engines such as Singularity and Metro 2033 on minimum settings. Heck, the framerate I get when I try to play Twin Sector makes me wonder if they’ve done any optimizing at all. (This is the second biggest reason why Valve is dominating the PC market: the Source engine may be “old” tech, but it runs on practically anything and still looks great.

Also Blizzard and Mojang.)

As does mine.

I wonder about something – This paging and switching of terrain data – Is this going to happen whilst in-game? That is to say, is this engine going to drop in the entire level or do you plan to stream it? I wonder if some of this growing complexity could be better handled by generating the world, and generating the polygons from this data in one sweep before the play even gets in-game.

Sometimes I wonder that long removed bottlenecks like lack of storage are clouding some engine designs like this – You could pre-calculate all of this as a single giant ‘page’ of data with much more simplicity, at the expense of a much larger data storage requirement. After that’s done it would be trivial to cut the terrain up into pages to stream to the player in-game.

One other note in regards to the CPU doing so much work – Have you considered offloading the work to the GPU? You could rewrite your terrain gen code in Nvidia’s CUDA (Or OpenCUDA for an open source version) framework and utilize the hundreds of cores available to your average GPU these days. In fact I really think you should – Churn all the data on the GPU, generate the optimized polys with the same data at extreme speeds, and then drop it back in to your engine running on the CPU. THATS what you call a black box piece of code hah.

You should do it for nothing else other than the fact that no one has really done it to the extent you plan to before. I think it could be a real showcase.

The problem is that by doing so, it would ensure the game requires a far more resource hog of a graphics card, once again removing the crappy laptop form the equation.

Another problem with doing this is that CUDA only runs on nVidia cards. There’s OpenCL, but I haven’t heard as much good stuff about that.

Way too complex. Only worth it if you really need silly amounts of number crunching, such as cryptography or physics simulation. Writing special code for the GPU is also quite expensive in person-hours. It’s a last resort.

Miguel Cepero over at Proc World is actually doing just that, in OpenCL. He’s reticent to release his code for other people to test because although he’s optimized the parallelization to work well on his machine, it is highly likely to have different bottlenecks on different GPU/CPU combos. One of his early posts actually talks about how he expected to get a much bigger boost in speed when using OpenCL, but that you have to write the code differently and so that there isn’t any real interconnectedness going on.

Shamus’ post today is something that would really play hell with parallel processing. It requires a lot of interdependent data access and doesn’t really lend itself to being done in parallel. The GPU has a TON of processing power, but to use it effectively you need to front-load your data and run the same bit of code millions of times over. Otherwise, you’re probably better off leaving it on the CPU.

Aside from the strict technical difficulties this would add for Shamus, I don’t recall Shamus discussing any OpenCL or CUDA experience. So, he might have to LEARN a new API with very different limitations and then STILL have to implement this on the CPU to see if he’s actually gaining anything by using the massively parallel processing GPU.

Disclaimer: My coding experience is small and most of this information comes second-hand or is conjecture.

You could run all regions in parallel though. They are completely disconnected. Not that I’d recommend that, as outlined above. But it would be incredibly easy to implement the multicore things from C++x0. Take a look at those, Shamus. Quite a few neat features there.

You could do the culling for each grid section in parallel, but you can’t stitch them all in parallel, at least that’s the impression I get from other sources.

The thing about multicore parallelism right now – wouldn’t that technically bump up the spec sheet to require multiple cores?

Not exactly. If you design and optimize for a certain number of cores (CPU or GPU) in parallel, you’ll get better performance on systems of that type or better, probably. However, even though it won’t _require_ that number of cores and will work on a lesser number, you’ve made it less efficient for systems that are lower-end. It’s a lot of work, and then your payoff only works for people with better machines, the people who actually _need_ the optimizations won’t benefit at all, the code will actually execute _slower_ than before for them.

Work-stealing threadpools are not necessarily any slower than a loop on uniproc machines, and they deal well with unbalanced workloads. C++11’s library doesn’t support them, but you could use either Intel TBB or the VC++ specific (and builtin in VS10!) PPL, both of which explicitly support working with C++11 lambdas.

And doing general purpose processing on the GPU is not for mortals, nor does it currently give any benefits in a lot of the cases you’d think it would: nVidia’s CUDA implementation of H.264 encoding is slower than the CPU only x264 library, for example.

I sit corrected. The extra ticks for juggling a few CPU threads is really minor — I don’t know what I was thinking.

No, sorry I wasn’t clear: starting a thread is super expensive (which is why they are pooled), and swapping them out is also fairly expensive (Id guess around the same order of cost as running Shamus’ simplify on a terrain). What I was trying to say was good implementations of threadpools will avoid swapping out threads for you.

Nice work Shamus. One trick to avoid seams and still process each terrain cell in isolation is to create “skirts” at the cell borders. The skirt is perpendicular to the terrain and shares the same UV as the border. This works well for heightmap-based terrains like yours. For best results you need to have a little bit of overbite from one cell into the next. You will get some Z-buffer fighting, but since the texturing will match down to the pixel it cannot be seen.

Instead of CUDA it might be possible to translate the problem to some kind of image processing problem that could be expressed in OpenGL. That’s what I am thinking of in a similar project.

As as example of how this might work, you could represent each parallel input as a pixel, use a pixel shader to encode the logic, and render the texture to an output surface. I don’t know much about OpenGL, so there might be better ways.

That’s pretty much how “GPGPU” was done before CUDA, OpenCL and DirectCompute were built explicitly to make it easier.

Wow. That’s some quality comment there, Shamus. I think you now owe your 2006 incarnation some major favors.

Though I’ll be honest, that comment didn’t make your system any easier to understand for me (the images a paragraph earlier helped, though). But as long as it makes things easier for you, it’s time well spent.

Now go write some comments. Maybe turn that into Project Frontier #6: Comments A-Go-Go.

I had to do an exaggerated sigh, because you explicitly write that you do neither need nor like it, but you still implement it*. By the way the simple and properly encapsulated solution is to just never optimize any borders of regions, and keep all those vertices. Not quite as efficient, but incredibly simple and fast.

*Knuth: Premature optimization is the root of all evil.

This is the second time I had to type this today.

It’s true you want to avoid premature optimization, because you don’t know where the final bottlenecks will be. But I KNEW I’d be doing this sooner or later. This really is part of building the system right. Better to do it now than to attempt it when I’ve forgotten how it all works.

Okay, you do have a point there. If you are absolutely sure that this point will become a bottleneck, you might as well do it now while it is still simple. And with 1.5 million triangles, I am not one to argue much. Even on current 2011 hardware, that is an issue. In ten years time, I wouldn’t bother any more. ;)

Yeah, I have to disagree with the Knuth quote: _Unnecessary_ optimisation may be the root of all evil, but its never to early to build something right that you’re going to eventually have to build anyways.

Hm, actually, I think it’s pretty accurate, in that “premature” doesn’t necessarily mean “before everything else is done”. Everything required for this bit is done, and as mentioned, it is effectively necessary, so it’s not premature at all; the quote doesn’t apply here, really. It’s an accurate quote, but not a relevant one, I suppose.

Point. If you take “premature” to mean “before you know if its really a bottleneck”, then as Kyte points out below its a known bottleneck, and optimising it isn’t premature.

That’s ’cause nobody quotes the full Knuth quote.

We should forget about small efficiencies, say about 97% of the time: premature optimization is the root of all evil. Yet we should not pass up our opportunities in that critical 3%. A good programmer will not be lulled into complacency by such reasoning, he will be wise to look carefully at the critical code; but only after that code has been identified. [etc]

Since Shamus managed to cull 90% of the polycount with this, I’d say it counts as that critical 3%. (Plus, it’s a well-known bottleneck, so it’s not like we’re optimizing something without knowing about the benefit)

i think somehow while reading that, i missed something

how exactly did you get less polys?

i probally just misread something, but if i were to think about it and guess based on what i did read

before you had every section of the grid converted into a seperate polygon reguardless of weather it needed to be seperate or not

and now only the bits that are significatnly different are converted leaveing spaces that have only a few large polygons and other spaces that have lots of tiny polygons

is that at all correct?

Not the ones which are signigicantly different are changed, those are kept.

In the example 3/4th of the grid are turned into larger polygons, because “the other 3 are again simple enough that they can be left alone”.

The simple explanation is changing it to use fewer, larger triangles.

Look at the first diagram for the quad-tree, and consider that to draw each individual square takes two triangles. Then, look at the last diagram, where there are fewer, larger triangles.

Its all about making the polygon larger without sacrificing too much fine (small) detail.

The size of the individual polygon is essentially irrelevant to GPU performance, so making many small ones is slower than a few huge ones.

I have a question about quad-trees. I understand how they work but because each square is broken into triangles anyway isn’t there some form of quad-tree that instead of using squares just starts with triangles?

Mainly your world is not one big triangle. Even if he represented his world as a single flat plane, it would reduce to two triangles not one.

Yes but it would end up being two triangles anyway. I messed around with some graph paper and I figure if you could do it you would get a better optimization, though I will say it would probably be at the point of splitting hairs and not actually noticeable barring extreme size or detail. I think I will play with the idea some more, if anything its an interesting distraction to think about.

Skipping over coding, mechanics and the other ‘under-the-hood’ stuff: the simplest answer is people are able to think in square spaces easier than in triangles (generally speaking).

If you want a square (or cube) divided into 8 parts of similar/identical shape and equal size, thats pretty easy. Now, try to visualize that with a triangle. It can be done, absolutely, but will it take more time?

From thought to implementation to design, time is always valuable. The simplest way to fully achieve all goals is the best.

Gotta love the comments, especially when dealing with black box code. In one of my few ever attempts at coding (everyone’s first visual basic calculator) I had decided to enjoy a couple of drinks that night, as a distraction from the boredom. Always a bad idea to code while drunk.

Anyway, when I woke up the next morning, the program was complete. I didn’t quite remember finishing, but it worked and stuff. So I shrugged and handed it in as an assignment it. Got a half decent mark, as well as a big red smash of text across my code.

Which ended up forming the words ‘WTF’. Apparently I had managed to invert the code, which meant it shouldn’t have never come anywhere near computing successful calculations. Except that it worked. Perfectly in fact, to a greater number of decimal places then anyone else managed.

No one else actually managed to work out how either, which lead to the swearing on my marking sheet. It literally shouldn’t of worked, except via some drunken knowledge I can no longer access. And because I was drunk at the time, the only comment on the entire thing was ‘Guess how I did this sober Me’

Well, there IS the Balmmer Peak:

http://imgs.xkcd.com/comics/ballmer_peak.png

Hasn’t ever worked for me, unfortunatley.

I seem to do a lot of things better when I’m drunk. Once again xkcd saves the day with explanations.

That’s an awesome story. :-)

Sounds like a good candidate for The Daily WTF, especially if you can back it up with source code or something.

Did you keep the source code?

It’d be interesting to see what you managed.

As would I!

I’m currently looking for it. It was a couple of years ago during my days of

tortureeducation and I went on a note burning spree after education.I’d be interested in knowing some metrics about this project. How many LOC? How many classes? How many (if any) tests?

Me too. But Visual Studio doesn’t offer metrics in the free edition. :)

It’s 260k bytes of code, though!

Get cppcheck. Free, tiny, can do things like that.

Got it. It… doesn’t?

http://sourceforge.net/projects/cppcheck/

I looked at every option in every menu. Nothing about metrics at all. Is that the right program?

Nope, sorry. I got confused. Cppcheck is good for finding troublesome code (uninitialized variables, for example), but apparently, it doesn’t have LoC. Not sure what I used, probably a linux tool…

Sloccount. Linux. :(

wc -l *.cpp *.h

Too simple. Not sufficiently arcane.

cat $(find ./ -name ‘*.cpp’ -or -name ‘*.h’) | wc -l

EDIT: Wtf? ‘, ` and ´ are different characters with different meanings in every context.

EDIT2: Wtf? (vertical apostrophe), (single quotemark open) and (single quotemark close) are different characters with different meanings in every context.

Just use ‘ (vertical apostrophe, looks very strange with this font) for string delimiters. The other two are mostly for à and é, and some word processing tools have prettier versions of ‘ used for quoting.

Is it possible to detect the edges of each block of terrain, then draw all vertices along the edges?

I see two ways of doing this:

1) Optimizing from vertex 1 to 30 instead of 0 to 31. This will cause problems with the nice division of powers of two into smaller powers of two.

2) Actively detect and temporarily store the edge vertices, optimize like before, then put the old edge vertices back, and finally stretch the textures over it. This will increase the generation time and needs some good memory handling.

I’m not an experienced programmer by any stretch of the imagination, so there’s probably a good reason why you’re not doing this (assuming you thought of the possibility to begin with). Anyone care to spill the beans on the details here?

It is a lot easier, actually. A region knows its own borders, and can therefore easily treat them differently.

Assuming all the terrain data is generated from a predictable source (table look ups of predefined noise / elevation data, or similar) AND assuming each terrain block has access to look this data up; the simple option is to modify the optimization routine to take into account a larger terrain sample (overlapping the edge of other terrain).

If a block is 8 by 8, then the optimization routine may be calculated using a 12 by 12 sample size (two extra quads on all edges). There may still be some level of error with the 12×12 sample, so perhaps a 16×16 sample would prove more reliable.

You have a slight overhead, but the end result is that each edge of the terrain blocks should match up.

The overhead only needs to be considered when generating the optimized mesh (which shouldn’t happen frequently for a single terrain block). However the overhead may be to much to consider this “simple” solution.

That doesn’t work, at all. Because then you’d end up with triangles that cross the boundaries between regions, and I am pretty sure that most systems based on regions won’t be able to handle it.

I may not have made it clear, but I was suggesting the optimization routine takes extra data into consideration, but would not generate extra polygons. You’re still only generating a mesh that fits an 8×8 grid.

Like I said, if the data comes from a predictable source (which it should if you want it to be repeatable), then you should have access to all the data required to make this work.

So, those polygons which would end up intersecting the edge would need to be cut down, which would introduce more work on the edges, but less reliance on other regional entities.

But you may be right. This may not work on this data. I’m just taking an educated stab at it based on my own experience with this sort of thing.

I can definitely see your concerns, and agree it may end up being more trouble than it’s worth.

Edit: Also, I’ve spent about as much time thinking this through as it took to type up… so you know… That’s not usually a good sign of rigor or anything.

Actually, I highly doubt that the region class has access to the function that generates the terrain. That would be very close coupling, and quite bad software architecture. The region class should have access to at most the code that generates a single region with given borders, and probably not even that.

Not really what I was talking about, but that’s fine. Let’s assume you don’t understand what I’m talking about because it’s late here and I don’t make any sense. ;-)

Also, 12 is not a power of 2.. so, you know. That part was wrong too.

You’d be stuck sampling 16×16 quads if you wanted to try taking it down this route.

Whilst this might be simple, it’s still not a great idea (like I was trying to stress in my original and subsequent posts).

I should never post after midnight. Sleep.

I think the biggest problem here is the the texture blending across region borders. I don’t know the details of how Shamus has set up the textures though, but I imagine the blending algorithm from part 3 needs to have information of where the terrain block boundaries are.

Anyway, Mike’s solution doesn’t solve the encapsulation issue. The whole problem was that you needed information of the 4 surrounding blocks to optimize the one you were on, and that problem persists with the optimize-a-larger-area solution.

Yep.

Keeping all the verts along the edge of a page may look like an easy solution, but I’m pretty sure it wouldn’t work at all. At least not with a quadtree, since you simplify the edges in the first step. You prevent that, you prevent the whole thing.

My old man tries to tell me that I just don’t know how to apply myself, and if I really tried I could have been doing real programming.

Then I read stuff like this and remember I hate anything more complex than simple multiplication, subtraction, division, addition, etc.

Which is why I find it so much more fun to do front-end web development. I wish I had actually switched to a more graphic-oriented major rather than learn in IT, but when working with HTML, CSS and JavaScript I can actually visualize the page elements in my head and what is going on. I don’t know how in the Hell you could take all that math and figure out everything you just did.

This is also why I’m trying to make time to learn Game Maker: so I can skip all the programming and get to the level design and story-telling aspect of game development…after doing some logic via a WYSIWYG and making graphics, of course…

I need a team.

Speaking of GameMaker…

While it is interesting to see experienced people do things the right way it could also be interesting to see an amatuer badly design a game. This is pretty embarrassing, but its me admitting some of my mistakes. earlier posts link to a zipped version of the in progress GameMaker game I’m making.

Shamus, maybe you should look at tesselation?

This should allow DX11 (and DX10.1 as well I think) to improve the polygon rendering.

So those one hundred thousand polygons would look like a million more.

And the GPU can autoscale this as well I believe.

Shamus is using OpenGL which doesn’t support tesselation unless he’s opening a version 4 context (?). Also, as he’s aiming this for lower-end machines and OpenGL compliance lags a little for video cards, OpenGL 4 isn’t available on low-end cards.

He COULD implement tesselation on his own or from someone else’s OpenCL implementation. The Kronos group didn’t add tesselation to the OpenGL 3 standard when MS added it to DX 11 because they thought the OpenCL implementation was fine, I guess. So Kronos added it to OpenGL 4 after a massive outcry.

SDL doesn’t let you get a context better than 2, I believe.

As far as I know, DX11 tessellation is more complex than just running a subdivider over the input mesh to get an output mesh, it culls to the viewport and other stuff I could never get a grip on. I’m not sure how much of that you could do in a compute shader, but it probably wouldn’t be as efficient anyway.

Oh crap, SDL only supports OGL 2.1?

I might run into problems shortly when I try to write some code. SDL 1.3 is supposedly coming into beta this summer and advertises that it supports OGL 3+. I may have to build my stuff with SDL 1.3beta…

Shamus: either you mixed up 2×2 and 4×4, or I’m not awake enough to understand that section.

“It's hard or complex, but it feels… inelegant.”

I suspect you forgot a “not” there.

I assume that your stitching method is not concerned about texture-stretching? Or do the gaps generated by the game at that point are not so large as to cause visible texture-issues?

Incidentally, how do you handle texture mapping so that a single square texture works on a bumpy hill of many triangles? A planar map of crushed-down tris? or stretching the map over the tris? Or just a planar ‘push it downwards until face is met’ thing?

I’m not sure how far back you want to go in terms of GPUs, but I have access to two laptops: one with an Intel 965GMA and one with a Radeon X300. If you want to do some testing on low-end systems to make sure that the engine performs well, let me know.

This is probably a dumb question, but when you’re using your quad tree funtion to segment the squares up, why not do two passes – the first segmenting it the way you are now, the second, having each square look at it’s neighbours and decide if the segments need to be adjusted to take into account the gaps that would otherwise be created in the terrain?

I’m thinking that you would expect to end up with marginally more triangles (since you’re now adding triangles to the squares that would otherwise not be needed except to better match their neighbours), but still end up with everything being black-box rather than trying to stitch it all together afterwards.

I’ve always wondered how weird graphical glitches like that appear – now I know.

Though I seem to remember you having the same problem last time, or something similar – could you not just make sure it’s solved once, then copy it over as well? On second thought, perhaps this is the only time you’ve had to solve it…

Last time, the terrain was one giant fixed block. (It has the whole map in memory at once, like an RTS.) Now it’s many blocks. (So I can make them on demand.) So, the earlier version never faced the seams issue.

Ah, so you have to do real-time stitching then…That’s got to be annoying…

If you don’t want to stitch, why do it?

Couldn’t you apply your full everything-is-a-triangle method to the immediate surroundings (like an 8×8 box) and apply the polygon reducing Shamus2006 code to everything farther away? The ugly gaps will still exist but will be too far away to see and therefore won’t be ugly.

Note that I’m not a programmer so there’s probably a good reason not to do this.

The ugly gaps could still be visible. Is the background (skybox) is a light source, or has highly reflective/glowy/bright colour, then even from a distance, it will shine through, especially if there is no edge anti-aliasing and the pixels inbetween the edges get render-tracing to the skybox. You can’t ensure that the gap *will* be smaller than 0.5 pixel so that it doesn’t get rendered.. And so at times, the thing *will* shine through, causing severe case of ugliness.

Now, what Shamus could do is apply LOD-optimization so that the further you get, the fewer subtris everything is subdivided into. That could lead to less render-weight, but more calculations all in all, which actually is bad in this particular case.

Actually, it doesn’t matter how small the gap is, pixels are sampled at points.

And Shamus will probably need to do some sort of LOD if he starts filling out this world with actual things. For terrain, this should be roughly applying what he is doing now 2-5 times with different tolerances for merging and a max split depth, and storing the resulting meshes, possibly along with the full mesh. Then the complicated bit is as you move around, checking if you need to release or push a bigger version up to the card for terrains – but you will need that logic for everything else anyway.

Heh. My lagtop (technically, it’s a netbook) almost has a heart attack playing Minecraft, on lowest graphical settings with the fog on max. I can almost walk without skipping frames if I stare at the ground…

Back in alpha before all the fancy lighting and such, it sort of ran on my netbook (I had to turn it off to keep the poor thing from over heating after about an hour), but now there’s no chance. I wish he would add in a *looks really crappy* mode that would use as little CPU as possible, even if it looked terrible.

Playing with the fog turned all the way up is actually pretty scary … minding your own business .. BOOM! no chance to see them coming :D

This reminds me of the XKCD comic Good Code. You are definitely going down the Do Things Right section.

The unfortunate part about doing things right is that you have days (or weeks) like this where you fix problems that aren’t necessarily problems yet but that will probably be problems in the future.

Glad to see that you’re spending time on making things work from the beginning.

I’d argue that “The requirements have changed -> Throw it ALL out and start over” part is something I disagree with (Either the requirements don’t change on a personal project, or you can re-use what you have done already), but yeah, that sounds right.

On the other hand, “Fast, kludgy code” does get the “almost done” section of the code line.

Which could be the argument between “Duke Nukem Forever” vs. “Crysis (1)”. (Er, at least, on the assumption that DNF had “good code” that is. At least presumably DNF will run on older computers from 1999. Anyone know if that’s true?)

Duke Nukem Forever had the “The requirements have changed” stage, whereas Crysis had the “Almost done and can barely meet the requirements, but it’s a mess of kludgy and spaghetti code that holds the machine to full lock when it runs. But it’s good enough.”

Oh requirements change plenty in a personal project. Remember, Shamus started out with project Hex, an RTS world and now moved onto project frontier.

In personal experience, I will start coding something, get distracted or realize what I’m doing isn’t working and change directions a lot. Of course the goal of my personal projects is to get experience in programming, so the end goal doesn’t matter much to me

I don’t know – for me, the I try to keep the design requirements as close to the original idea I had as possible, perhaps adding new requirements, but not actually changing the previous ones, and try and bend languages to my will.

For example, one of the ones I’m working on has to do with timed keyboard input – the user can type whatever they want for a specified amount of time, then WHAM! The game takes said input, then parses what it can, and runs off of that.

Turns out, that’s…really difficult (Read: Couldn’t quite get there) with Python, but I found it worked in Flash. So yes, I do need to re-code it, but not the actual requirements themselves.

But I suppose that’s personal choice though. Hence, personal project. :p

DNF already doesn’t run well on gaming rig from last year… I cannot imagine how slow it would be on older machines. But seeing as how it is based on Unreal 3 tech, better don’t even try. And I don’t want to imagine the loading times on a 5400rpm disk.

What exactly are you doing when you move around in the terrain? You have a lot of low-res chunks at a distance. When you move closer, do you recalculate the resolution of each chunk? Are you doing this in the background?

And I assume at the top level, you still have fixed size chunks? You are just generating them at lower resolution in the distance, right?

No, right now the terrain is a uniform level of detail, so moving around doesn’t change things.

Which begs the question: Are you going to introduce distance-dependent LOD?

Not being a graphics programmer, I was just thinking: Could you maybe produce the elevation data as a texture (RGB: High-byte, Low-Byte, one byte free for whatever you like), put that into the graphics card and have a vertex shader convert it into geometry? If yes, then shouldn’t there be a way to use mipmapping to reduce the resolution of terrain (as well as the textures) that is farther away? All on the graphics card?

Or would that introduce problems with hardware-specific stuff, and nVidia/ATI incompatibilities and ugly hacks? Or some other problem I don’t even know about?

I want to know how the program decides when to subdivide the quad tree. It doesn’t seem right, looking at the screenshot.

Yay screenshot speculation!

I’m guessing you have lower level of detail: At distance, and where there is higher roughness. It looks like some parts should be subdivided more, and some should be less. I guess the important part is that you can have a variable level of detail. How the level of detail is decided is easy to change at this point.

Of course, it would be best if the level of detail is also increased on edges with a high normal incidence and a high z-gap behind them. That way the silhouette is high quality, which is very important to perceived detail level. Otherwise the mountain ridges keep jumping around as they increase in detail as you get closer.

Generally you would determine a plane and test your data set for deviation from the plane. If the deviation is too great then that node subdivides. If it’s within the expected amount then it doesn’t.

A faster and less detailed way would be to just find your highest and lowest corners and only subdivide if it contains data that goes above or below those points. I don’t know which he used, but I’d bet on the second one since he’s going for quick and easy so far.

Actually going back and looking at it again, it seems like he’s testing the point at the dead center of a given square. So if that point lies too far off the slope between top-right/bottom-left, or top-left/bottom-right, it creates a point in the center and subdivides.

Shamus, as a programmer myself I find these posts absolutely intriguing. Sadly, I am not good at graphics in any way, so that part is like magic to me, heh, but the stuff about creating terrain data, processing it like in your Lindstrom algorithm, is really the kind of stuff that really draws my interest. That said, I’d love to know your data structures. Two-dimensional non-jagged array of terrain blocks? Array of the blocks themselves or pointers? How do you look up a specific block during the stitching, is there a function that receives two coordinates and returns a pointer to the terrain block at that position? Does each block know its coordinate in the array? Or is it more a linked-list approach, just two-dimensional?

Sadly, all the large amounts of data I have to handle at my job sit in a database, and all the really interesting data handling is done by creating another friggin’ SQL view and have the database engine do the crunching.

I’d love to do stuff like this (my dream: a galaxy simulator which goes right down to indivial planets, moons, and larger asteroids with physically correct simulation), but my main gripe is that I have no clue where to start in order to get something on the screen. My job is about databases and webservices (gladly, however, not the imho overhyped cloud, heh), the only output towards human beings I do is log files, and sometimes a bit of Wt, but nothing fancy, and especially nothing out of a large chunk of raw data. Heh.

Your dream sounds like Spore. Only I don’t see anything about DRM in that dream, so…maybe it’s not Spore.

Spore’s galaxy was too big, but only because your entire galactic civilization only has one spaceship at a time. Do you want it to fight a war or do you want it to pick up the spice from the outlying colonies or explore to the galactic core? Pick only one…

This.

This is why the last stage was the least fun…

Something along the lines of Universe Sandbox?

I’ve tried out the demo and while it is nice, it is more about specific simulations set up by hand and less about procedural generation. And I’d like to make my own project, possibly just for the sake of making it. *g*

I get the urge to make your own thing even if something similar exists… that’s why I have a towering stack of programming books that I should use at some point…

Well, if it’s any consolation, you know it can be done. :p

But yes, I understand where you’re coming from, and I support that. I was just saying that Spore’s done it, in case you wanted to see how they did theirs.

World.cpp has this big table of pointers. Mostly empty. As you move around the world, it fills in the table with terrain blocks. (The table itself corresponds to world coords. The pointer at [0][0] it the northwest corner of the map. It’s easy to look up the index for any area of the world.

When a terrain block needs to stitch, it calls something in World.cpp to look up its neighbor. Then it queries the neighbor directly.

There’s a couple of lines of code that gradually walk this grid, looking for terrain blocks that are far away from the camera and deleting them.

I’ve never seen anyone keep a great big table of pointers like this. I suppose it’s wasteful, since I have a table of thousands of pointers. In my professional work, we always used hash tables. I think the others would argue that a huge, mostly-empty table is wasteful. Which it is. But… they’re *pointers*. The whole table is under a megabyte, and not even a hash table can touch it for lookup speed.

If the world gets REALLY big, I’ll have to abandon this cheapo system and build something more robust. But for now it’s small and fast and easy.

Hash tables are good for lookup of sparse keys: for example words in english. They have (asymptotically) constant time lookup and insert (asymptotically is fancy-pants Computer Science talk for “most of the time” basically – it can take longer, *much* longer even, for an individual operation, but N operations will take k*N time).

However, your data here has perfectly dense keys, so a plain table is the best lookup strategy possible without considering machine-level issues like cache lines. I would recommend ensuring your array is flat: eg not “Terrain* terrains[][];”, but “Terrain* terrains[N][N];” or “Terrain* terrains[]” if you want to allocate dynamically – this means lookup is one memory load, rather than two, which matters if you are touching a large amount in an inner loop (like you probably are).

Other considerations:

For rendering purposes, you might find some algorithms easier if you moved to a quad or oct-tree storage, which leads to very simple loops to say things like “give me each terrain in view in order from front to back”.

Controlling the allocation of your terrain blocks so that blocks that are accessed close to each other are stored contiguously can speed things up and lower memory usage. This is premature optimization before you can profile it, though.

A galaxy simulator that seamlessly goes down to single planets? You mean, like this:

http://www.infinity-universe.com

All procedural, Shamus will love this :).

I just wanted to say, thank you for these coding series — it’s really interesting to see how someone does it. I loved the procedural city.

And I loved the aside that your 5-years-ago comments were useful now. It gives a real feeling of satisfaction :)

(And I know it’s redundtant, but FWIW simplifying the polygons now seems correct to me.)

So…uh…of topic, but Spoiler Warning’s not here, and the Escapist is down…attacked by LulzSec. And we wanted our videos! [/priorities]

And I know you’ve covered Anonymous as a group already, but any chance you’d do an article on LulzSec themselves? I don’t know if they’d let you do it as an Escapist article (Given LulzSec’s attack), but it’d still be interesting to see your perspective on the group, and how they manage things like this.

I dunno. Somewhat trying to lull work.

I’d love to see this made into a procedurally generated adventure game or RPG. Drop in a few generated dungeons, maybe some Diablo style loot, etc. Give some additional mysteries to do while exploring.

Maybe I’m underthinking this: why are you splitting the terrain based on squares when you have to render triangles? Start with a world based on tessellating equilateral triangles, then break each triangle down into four congruent triangles when needed.

Possibly just because octrees are a solved problem.

I’d say because he can generate a height map fairly easily and that is basically a grid, or squares. Also, there are already known solutions to his problem as done.

Well, in this case, Regions are easily to block off in squares, so that might be why as well.

Once you start breaking them down, it’s harder to associate them with a certain region, I’d guess – here, he can say “Any triangle within this square section is put to this texture.”

That would be 2. Each square has 2 triangles, and they really should be able to have different textures.

It might be easier to use this method if the initial heightmap wasn’t optimized for Cartesian coordinates, but the project hex code was already heavily into nonCartesian geometry.

I also don’t fully understand the stitching issue; is it so ugly to add a vertical triangle to that discontinuity? You already know everywhere it will happen (every edge on every block of terrain)

In terrainqt6.gif, it looks like the mid-bottom-left sqaure is sub-divided into 3 or 4 triangles (Not quite sure how to interpet the full vs. dotted lines, but I think it’s 4), presumably for more detail on each triangle.

Also, all you’d do is take the 2-D cartesian grid and drop it onto the height map to determine textures. Or at least, from a high-level process, that is.

As for adding a vertical trangle to each edge, that’d be adding 4 polygons per block – and therefore 2 per edge -…and most won’t see the sight of day.

“Well, we fixed something that wasn't a problem and added a bit of complexity that I dislike, but the important thing is that it's built right.”

Goddamn right sir! Nothing and I mean NOTHING stops a computer problem before it starts than good optimization!

Actually, when you want to bump up the view distance to, say, several miles, even your own machine will thank you.

Seriously, you should think of this not as “now dinky machines can handle this small area” but as “now a really powerful machine can handle 10X as much terrain as it could before – MAKE BIGGER!!!!!!1!!!1!!one!!!”

Or at least, “Make scalable to machine’s capability”, but that doesn’t lend itself to as many exclamation points.

You’re saying each terrain would need to know about its neighbours in order to stitch correctly but I don’t see why,

I’d imagine you’d just have a low priority background thread running that would run a function (initially created with the square root of a max number of terrains (so 5 for a 5×5 grid)) that each second or so would get the player position, determine grid position, free any terrains too far from the player and load any that were now needed, for each new terrain get all available neighbours (the function knows about them not the terrains themselves) and run the stitch function on them.

No waiting for terrains to load, no wondering when to delete old terrains, stitching handled without issue.

Cool stuff next time? Is it trees? Ice or glaciers? Rivers? Clouds?

Oh noes! Spoiler Warning was posted and then it disappeared when I reloaded to see if there were comments…

Yeah. Whoopsie. It was supposed to go up tomorrow morning. It was only up for a min.

Mwhahaha, watched and commented on it.

It seems to me like the simplest solution that requires no communication between regions is to require additional points on the border to stay on the exact same line between the two adjacent border points for the last level of subdivision.

From your example, that would mean point A would have been moved up slightly to stay exactly between the points immediately its left and right.

Since the problem only shows up when there is a detail gradient crossing the region border, smoothing that transition in level seems worth the slight loss in border detail.

In the past you released source to other side projects like this. I like that, but I dislike the whole “wait until it’s done” part – because I think little could be MORE educational to a wanna-be-graphics-dev like myself than seeing the _differences_ between the code at each step – instead of looking at the final result and try to figure out which of your posts describes each part of the code.

Though I think I read you’re describing things you did a while ago, so I’m just wishing aloud here.

Dear god, wordpress knows all!

My massively over powered desktop laughs derisively at your optimizations, before pondering what it will do with all its spare cycles.

Really excited by this project though, will be following closely :)

Ps I also think that whilst premature optimization is a Bad Thing, this seems one of those cases where it is more of a design decision than an optimization per se. Cutting your triangle count by a factor of ten (at the expense of complexity including the stitching) seems like design. Going further and hand rolling your own assembly library to do the math and unroll the loops – then you have gone too far.

Great work. Love the views you posted too.

As for the issue with the polygon reduction misalignment…

Notice your non-unions do not exhibit this issue. You are modifying the borders as if they are individual polygons, not like your mid-land polygons, which “know” they are splitting… You can do one of two things.

You can force non-splitting of the border-edges, demanding they only split on in-land sides for those triangles… (Split or merge)

Or…

You can duplicate the border from one edge, to the other. If one side splits/merges, that butting-edge becomes forced to split/merge also.

It is only the edges you need to focus on, which is a rather fast calculation, since they are all on a similar X or Y alignment. This is still stitching, without actually “stitching” manually, It can be done “on the fly”, and even accommodate for real-time editing. Again, since they are all specific XY cords. (At an intersection, that is only 8 edges to match, in real-time. If the LOD increases beyond your pre-matched distant edges.)

I recently tried thca pre roll and I’m absolutely impressed with the quality. The effects were slick calming and scrupulously what I was hoping for. The make of options also allowed me to upon something perfect in the direction of both relaxing evenings and productive days. Definitely recommend for anyone seeking wonderful results

Considering that I am still using a bargain bin laptop that is literally older than this article, I thank you for keeping performance in mind.