Here we are at the end of this series. I was hoping we’d get a public beta of Jai before the end of the year and I could end this by experimenting with a new language, but it looks like that didn’t pan out.

This last vexation isn’t remotely the most serious problem, but it’s the one that annoys me the most[1].

Of all the things that suck the joy out of writing code, I think that managing project files is pretty high on the list. This is sort of linked to the problem where integrating libraries in C++ is a madhouse and a chore. If using someone else’s code was easier, then the headaches of setting up project files would be less of an issue. But these two problems exacerbate each other and force you to spend time putting all the files in the right places and referring to them in the right order.

Disclaimer: This is one of those rare instances where Linux seems to be more user-friendly and turnkey than Windows[2]. It’s been years since I did any programming on Linux, but I remember using a package manager that could find the required files, download them, and put them in the right place. There seem to be some basic rules about how to share source files. On Windows, this entire process is done manually and there doesn’t seem to be a coherent set of rules. Every Windows-based library has its own logic regarding how the files should be structured on your hard drive and how they should be added to your project.

In any case, this means there’s an annoying amount of sorting and accounting the programmer has to do in order to get everything working right, all of which is complicated by the archaic C system of header files. It’s not that these problems are too difficult. If you can write code to do memory management then these tasks shouldn’t be challenging for you. The problem is that these sorts of busywork tasks are something that – in a sane world – would be offloaded to the computer. (Assuming they needed to be done at all.) Computers are very good at large-scale sorting problems, and our programmer has way more important stuff they could be thinking about.

Vexation #7: Fiddling With Project Files is Not Programming

You need some way to tell the compiler (or your development tools) what files are part of your project. C compilers are terminal programs[3] and they don’t automatically know what files they need to be compiling or where to find the various libraries you’re using. There are a lot of options for compiling. Is this the debug version? What character set are we using? What libraries do we need to pull in? Is this 32 or 64 bit? What sorts of optimizations should the compiler apply to your code?

In the old days the programmer would have to type a bunch of inscrutable crap into the terminal window. For example, this is what you’d have to type into DOS to compile a modern program:

D:\Projects\Source\BugHunt\Debug\BugHunt.exe" /MANIFEST /NXCOMPAT /PDB:"D:\Projects\Source\BugHunt\Debug\BugHunt.pdb" /DYNAMICBASE "kernel32.lib" "user32.lib" "gdi32.lib" "winspool.lib" "comdlg32.lib" "advapi32.lib" "shell32.lib" "ole32.lib" "oleaut32.lib" "uuid.lib" "odbc32.lib" "odbccp32.lib" /DEBUG /MACHINE:X86 /INCREMENTAL /PGD:"D:\Projects\Source\BugHunt\Debug\BugHunt.pgd" /SUBSYSTEM:WINDOWS /MANIFESTUAC:"level='asInvoker' uiAccess='false'" /ManifestFile:"Debug\BugHunt.exe.intermediate.manifest" /ERRORREPORT:PROMPT /NOLOGO /LIBPATH:"E:\SDK\boost_1_55_0\stage\lib" /LIBPATH:"F:\SDK\gl\lib\" /TLBID:1

Nobody actually does this, of course. The IDE handles it for us. I’m just trying to show that behind that sleek interface is an architecture that dates back to the 1970s.

Very few programmers pay attention to this stuff. We set all the compile options using a friendly interface and let the development environment figure out what esoteric options are needed to get the compiler to do what we want.

If you’re programming on Windows then you’re probably using Visual Studio and you’ll do things one way, but if you’re on Linux you’ll do things a different way. The language is supposedly platform-independent, but the system that holds the project together isn’t. Like header files, this is understandable due to the age of the language. It’s not that the language was designed poorly, it’s just that it was designed a very long time ago for machines that are very different from what we use today.

So we have our C++ code, then we have some project file that’s not part of the C++ language but is needed so the editor knows what files we want to compile, and we’ve got these huge command-line arguments that nobody understands[4]. Which means that your project requires 3 different sets of information in 3 different formats:

- The source code itself, which consists of source files and header files.

- Some sort of file explaining what source files are part of the project and where they can be found.

- The compile options to tell the compiler how to behave.

If you’re using Visual Studio, then the last two items are stored in the proprietary project file. Just to keep things interesting, Microsoft likes to fiddle with the format of the project files from one version to the next, creating a one-way conversion to the latest edition of Visual Studio. Which means that if one person upgrades, everyone on the project needs to upgrade. This also means it’s a pain in the ass for other people – like Linux programmers – to make use of this file. This should all sound familiar to people who remember Microsoft Word and its ever-changing .docx format.

For years the structure of Visual Studio projects was basically folk knowledge. There wasn’t any official documentation. It wasn’t something you could learn in college. It was this whole body of specialized knowledge that you were just expected to know somehow. If you had a problem communicating with the compiler, you had to post on a forum somewhere and hope someone else out there had already fallen into this trap, found their way out, and posted their escape route to the internet. In the early aughts, I lost a lot of time to weird little mysteries when trying to do special builds or deal with oddly structured libraries. I don’t run into those problems anymore, but I don’t know if it’s because Visual Studio got better or because my work is simpler.

In any case, the answer seems obvious: Don’t use Microsoft’s tools. That should keep you out of trouble, right?

However, the tools are otherwise really good. They’re also very common in the games industry where so much of the development process takes place on Windows machines. Based on the GDC talks I’ve watched, Visual Studio seems to be pretty standard in AAA development.

None of this is the fault of the language, of course. But it’s still a dumb headache that developers have to deal with. It might even be a contributing factor to why Linux gaming is still in such sad shape. Moving your project off of Visual Studio and getting it to compile on Linux has got to be a huge pain in the ass.

We Can Do Better. I Think.

As I’ve said before, my experience with modern languages is pretty limited. Still, every language I’ve tried has been better than the C family when it comes to project files.

Like I keep saying, I don’t want this series to sound like a commercial for Jai. The language isn’t out yet, it’s all unproven, I haven’t used it, etc etc. Having said that, I really like the way Jai supposedly handles project files. In Jai, all three kinds of data – source code, compile options, and included files – are expressed in the same file. Essentially, your source code is the project file, and the compile options are specified in your code. You communicate with the compiler using the same language you’re writing the program in.

You invoke the compiler by pointing it at a single file. Something like this:

jai main.jai

This calls the compiler and tells it to compile the file main.jai. That file will tell the compiler what other files are part of the project, where they are, and what compile options we want to use. The compiler will then process all of the files in one go, building up a complete understanding of all the functions. You don’t need to make header files to tell it how to find things, because it can just figure it out on its own.

This means that compiling the program will be exactly the same on Windows, Linux, Mac, or wherever else you’re trying to work.

I love this idea so much. Your project structure isn’t hidden in a bunch of specialized files that are tied to your development environment. It’s something the programmer can read and understand.

This is the kind of convenience that you usually only get in higher-level languages. The assumption is usually that if you want an easy compile, then you must also want garbage collection, copious error checking, and lots of other convenience features that hinder your ability to write really fast code. If you want direct memory management and freewheeling data access, then you must also want a really fiddly and obtuse system for compiling it.

Along those lines, Jai offers another feature that’s usually associated with higher-level languages…

Introspection

Introspection is a pretty strange concept, but it involves a program being aware of the code used to generate it. Let’s return to our old friend the space marine:

class SpaceMarine {
public:
    bool dead;
    char name[8];
    Vector3 position;
    float stubble;
    short bullets;
    short kills;
    int dead_wives;
    int hitpoints; //Don't forget the hitpoints this time, Shamus
    bool cigar;
    int mana; //I really think the designers need to get on the same page here.
};

That’s the class definition. That block of code describes a SpaceMarine, what its fields are called, and what kinds of data they store. If you want to use a SpaceMarine and maybe give him some bullets, you would create an instance of it in code like so:

//Please don't give me a hard time about encapsulation. I'm trying to illustrate something here.
//I'm not going to ship this code, I promise.
SpaceMarine shepard;
shepard.bullets = 10;

When we’re looking at the code we can clearly see the name of the class (SpaceMarine) and the name of the variable (shepard) and we can see what all the internal parts of a SpaceMarine are called. The important thing to remember is that the compiler can see this, but not the compiled program.

Remember that once a program is compiled, it gets turned into stuff like this:

mov eax,4
mov ebx,1
int 80h
mov eax,1
mov ebx,0
int 80h

The various commands boil down to mechanical instructions like “Move program execution to this instruction. Now copy stuff from this memory address to that other one. Now take the value in this address, add some number onto it, and copy it back to the first location. Now move execution to this other memory address and do whatever instructions are stored there.” It’s all numbers. There’s no way for the program to know that the stuff stored in memory address 1D4573 was originally a variable called “shepard”. All of those handy human-readable names are lost when the program is compiled.

But!

It is possible to retain that information. Doing things this way costs extra memory and processor cycles, so not all languages support it. This sort of thing is called introspection.

Actually, sometimes it’s called reflection.

Actually, sometimes it’s called RTTI for “Run Time Type Information”, but only in C++[5]. Sort of. RTTI isn’t really the same thing, but it is related. It’s complicated.

I’m not going to try and untangle these terms, since people seem to disagree on their frequently-overlapping definitions. For now let’s just stick to talking about introspection and ignore the other two terms. We can poke our head into that wasp nest someday in the future if we’re feeling brave and there’s something to be gained in the effort.
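To make the RTTI half of that a little more concrete, here is a minimal sketch of what C++ RTTI can do. The `Actor` and `Zombie` classes and both helper functions are made up for this example. The program can ask what type an object is while it runs, but it still can't ask what that object's fields are called, which is roughly why RTTI is a cousin of introspection rather than the same thing.

```cpp
#include <cassert>
#include <typeinfo>

// Hypothetical class hierarchy for illustration. RTTI only works on
// polymorphic types, so the base class needs at least one virtual function.
struct Actor {
    virtual ~Actor() = default;
};

struct SpaceMarine : Actor {
    short bullets = 0;
};

struct Zombie : Actor {};

// Returns true if `actor` is really a SpaceMarine at run time.
bool is_space_marine(const Actor& actor) {
    return typeid(actor) == typeid(SpaceMarine);
}

// Gives bullets to `actor` only if it turns out to be a SpaceMarine.
// dynamic_cast returns nullptr when the object is some other type.
bool give_bullets(Actor* actor, short count) {
    assert(count >= 0);  // negative ammo makes no sense
    if (auto* marine = dynamic_cast<SpaceMarine*>(actor)) {
        marine->bullets = count;
        return true;
    }
    return false;
}
```

Note that nothing here lets the program discover that a SpaceMarine has a field named "bullets". The name only exists in the source code.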

I never have a use for introspection when making production code, but I’ve found it immensely useful for debugging tools and meta-analysis. The classic command console in the old Quake and Half-Life games is a great example of using introspection. The user can type in text, and the program can use that text to manipulate or display variables while the program is running.
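C++ won't hand you a variable by name, so a console like that needs a lookup table that somebody wrote by hand. Here is a rough sketch of the idea. The `Console` class and its methods are hypothetical names invented for this example, and this is not a claim about how Quake or Half-Life actually implement their consoles.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical console: maps a human-readable name to the address of a
// variable, because the compiled program has no idea what its variables
// were called in the source.
class Console {
public:
    // Remember that `name` refers to `variable`. The caller must keep
    // `variable` alive for as long as the console is in use.
    void register_int(const std::string& name, int& variable) {
        assert(!name.empty());
        vars_[name] = &variable;
    }

    // Set a registered variable by name. Returns false if the name is unknown.
    bool set(const std::string& name, int value) {
        auto it = vars_.find(name);
        if (it == vars_.end()) return false;
        *it->second = value;
        return true;
    }

    // Read a registered variable by name. Returns false if the name is unknown.
    bool get(const std::string& name, int& out) const {
        auto it = vars_.find(name);
        if (it == vars_.end()) return false;
        out = *it->second;
        return true;
    }

private:
    std::map<std::string, int*> vars_;
};
```

With this in place, `console.register_int("hitpoints", shepard.hitpoints)` followed by `console.set("hitpoints", 100)` does what typing a command into the console would. The catch is that every variable has to be registered manually. A language with introspection could build that table for you.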

There are many other uses for introspection, but they don’t usually crop up on my projects.

At any rate, introspection is generally associated with higher level languages like C# and Java. C++ doesn’t do introspection at all. Neither does Rust. The D language seems to partly support it, but I think I’d need to know the language myself to follow the conversation.

Jai supposedly has introspection, and apparently it’s even seen as one of the main pillars of the language. It’s interesting to see so many high-level programming ideas being put into an ostensibly low-level language. Jai is making some very revolutionary promises. I don’t have the expertise to predict anything, but I am extremely eager to see how it all turns out.

Wrapping Up

I finished writing this series in late summer. At the time, I was really hoping I could end this series – or begin a new one – with some actual coding in Jai and see how true / ridiculous / misguided / prescient my various claims were. But it didn’t work out that way. Drat the luck.

I have no idea if Jai will be any good, but I really am on board with the idea that a programming language designed for games could be immensely helpful. I realize we’d still be left with the chicken/egg problem and I don’t know how you overcome that. A New Thing needs to be a lot better than the Old Thing to overcome the cost of switching. Are the gains big enough to break through the C++ hegemony? I don’t know. It certainly wouldn’t happen overnight. It would probably start with indies, and expand to larger and larger projects.

Or maybe we’re doomed to use the clunky and vexing C++ to make our AAA games for another 40 years.

I guess we’ll see. Thanks for reading.

Footnotes:

[1] Or maybe it’s second, after header files.

[2] Despite the efforts of Microsoft to “idiot-proof” their OS, there are surprisingly a lot of these.

[3] This just means that they run in a text-based context like a DOS window.

[4] Statistically speaking, anyway.

[5] C++ author Bjarne Stroustrup did not include run-time type information in the original version of his language, because he thought this mechanism was often misused. This is a really strange reason, given how the rest of C++ lacks anything in the realm of safety features. Like, he was willing to include buzzsaws in his language in the form of memory pointers, but he thought the paring knife of RTTI was too risky? I don’t know. He’s the author of one of the most successful languages on the planet and all I’ve made is this silly blog, but it sounds funny to me.

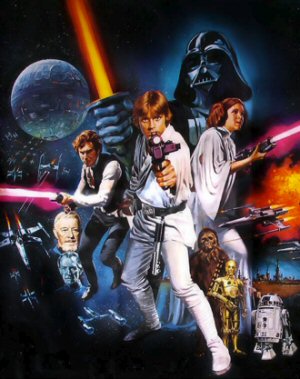

If Star Wars Was Made in 2006?

Imagine if the original Star Wars hadn't appeared in the 1970's, but instead was pitched to studios in 2006. How would that turn out?

Overused Words in Game Titles

I scoured the Steam database to figure out what words were the most commonly used in game titles.

The Loot Lottery

What makes the gameplay of Borderlands so addictive for some, and what does that have to do with slot machines?

Hardware Review

So what happens when a SOFTWARE engineer tries to review hardware? This. This happens.

Fable II

The plot of this game isn't just dumb, it's actively hostile to the player. This game hates you and thinks you are stupid.

T w e n t y S i d e d

Typolice:

In the first footnote:

Either “Or” or “Actually” should be removed.

Regarding the series, I generally liked it, but for some reason I wasn’t as interested in it when compared to your other articles about programming. I really liked the first 4-5 parts, but I felt a bit lost after that. I guess it’s probably because I’m still sporadically learning how to program, and I’m still a beginner, so I haven’t encountered/learned about some of the things you’re talking about.

Still, looking forward to your next long-form series.

These vexations are very specific to C++. If you’re learning Python or Java or whatever other high-level language that exists out there you practically do not run into the things Shamus described here.

Yeah, it’s probably that. I’ve dabbled a bit in R, but after finding it’s not the best language to start with, I started learning Python.

Personally, I think that people should start with a lower-level language. It’s harder to do more complex things, but it also teaches you a lot more about what the computer is actually doing when you write code.

I think that one of the reasons we have such non-performant code floating around today is that too many programmers just accept the inner workings of their language as magic, and don’t consider what those function calls are actually doing. new SomeObject[10000] is just one line of code after all.

I disagree, both with the idea that people should start with a lower-level language, and that it’s a primary cause of “non-performant code”.

I think a lot of people needlessly get turned off of programming just because they tried to start with the “Dark Souls of Programming Languages”. Being new to programming can be frustrating in any language, and dealing with C++ Vexations generally just makes things worse. I don’t see any other field that thinks “throw them into the deep end” is the best way to initiate neophytes. It identifies top talent: anyone who survives such an initiation is probably a pretty solid programmer, but it unnecessarily weeds out a lot of people who might have made good programmers with a gentler introduction.

—

I also don’t think it’s either the cause (or a solution) to widespread performance issues.

Certainly I think it’s useful for the vast majority of programmers to have some understanding of the lower level concepts of how the computer actually works: but I don’t think actually learning a low-level language is necessary for that, and certainly it doesn’t have to be the first thing a programmer learns.

And like Shamus said in the beginning of this series: everything is context-dependent. Learning how to write or optimize C++ isn’t actually that useful of a skill for trying to write performant code in a higher level language: the language functions differently and usually has different use-cases, so it requires different techniques to optimize. A website with poor performance isn’t going to be optimized by aligning memory or unrolling loops.

The main cause for performance issues I think is just an issue of incentives and priorities. It takes time to do optimization right[1], and there’s only so many hours in the day, and optimization usually falls at the end of a programmer’s priority list, behind fixing bugs and new feature work. (I find this is true both of individual programmers and of management) Until performance issues start causing acute pain for users, they generally won’t get prioritized, so programs are generally as non-performant as they can get away with.

[1] If you aren’t measuring before and after optimizing, you’re doing it wrong. A lot of off-the-cuff “I think this is an optimization” decisions actually turn out to be optimizing the wrong thing, or can actually make performance worse.

I second this. Back in the day people would code in assembly. It is much more performant. Higher level languages were invented for a reason. In most use cases developer time is more costly than computer time. So easy to read is a much more important requirement than performance.

Even in applications that have to be performant the code mostly follows the 10/90 rule. 90% of the computation is done by 10% of the code. This 10% can be written by the performance-nerds in your organization. Almost certainly there is already a library written by experts in the field that will be much faster than what you can come up with.

I’d rather have beginner programmers focus on using the correct datatypes for their type of problem, getting to know common algorithms and write readable code.

So much performance can be won by using a proper algorithm. These differences are much bigger than the difference between a high and low-level language.

The cause of performance issues is doing unnecessary work – the slowest lines of (micro) code are the ones that don’t need to be executed but are anyway.

So, the main reason for slow applications is using an inappropriate algorithm – one that does a lot of unnecessary things.

That tends to happen when the details of which algorithm is being used are hidden from the programmer.

For example, consider the C++ “std::list”.

It doesn’t have an at(n) or operator [n] to access the ‘n’th item in the list.

This is because std::list is a linked list, so reading the last item means walking the entire list.

It’s trivial to write that algorithm, but if you need it, you’re probably using the wrong container for your task.

So the language doesn’t provide it, acting as a not-so-subtle hint that maybe you should change your algorithm.
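That trivial algorithm might look like the sketch below. The `nth` helper is a hypothetical name, not part of the standard library. `std::next` does the actual walking, one link at a time.

```cpp
#include <cassert>
#include <cstddef>
#include <iterator>
#include <list>

// Hypothetical helper: returns the n-th element of a linked list.
// std::list has no operator[], so this has to walk n links from the front,
// which makes it O(n) rather than the O(1) you get from std::vector.
template <typename T>
const T& nth(const std::list<T>& items, std::size_t n) {
    assert(n < items.size());  // walking past the end is undefined behavior
    return *std::next(items.begin(), static_cast<std::ptrdiff_t>(n));
}
```

Calling `nth` inside a loop over the whole list is how an innocent-looking lookup turns into quadratic work.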

Many “higher-level” programming languages and libraries have forgotten this.

For example, Javascript and Python have a nice “function chaining” syntax.

Seems lovely – except chaining three functions onto a list iterates the list three times, instead of just once!

The language has hidden the algorithm, and thus the cost.

O(n^2) is of course the sweet spot of bad algorithms, because it’s fast enough not to notice during testing so tends to blow up in production.

The deep-level optimisations should be done last – there’s no point in making list iteration really fast if you don’t need to iterate over the list in the first place!

This is one of the places where C/C++ utterly blows everything out of the water due to the language design* and the tooling.

Windows and Linux both have absolutely awesome performance measurement tooling that you can run on the release binary, but it all relies on the binaries following a C/C++ ABI.

The tooling is primarily a matter of history, but any new language claiming to be for performance must be able to use that tooling.

Anything using a “JIT” or interpreter can’t ever access the existing tooling (C#, Javascript, Python), simply because of basic architecture. So those languages are limited to whatever their runtime is able to provide.

* If it’s broke, you do have the power to fix it. The cost of such power is that if it’s not broken, you also have the power to break it.

Iterating over a list multiple times is still O(N). It’s when you need to do things like pairwise comparisons or rearrange the list that linked lists can start turning unnecessarily quadratic.
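A quick sketch of why the chained version is still linear. The `chained` and `fused` functions and the `visits` counter are invented for illustration, and the filter/double/sum pipeline is an arbitrary stand-in for three chained calls.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Three separate passes, the way a chained filter/map/sum would run.
// `visits` counts how many elements were touched in total.
int chained(const std::vector<int>& input, std::size_t& visits) {
    std::vector<int> evens;
    for (int x : input) {            // pass 1: keep the even numbers
        ++visits;
        if (x % 2 == 0) evens.push_back(x);
    }
    std::vector<int> doubled;
    for (int x : evens) {            // pass 2: double each one
        ++visits;
        doubled.push_back(x * 2);
    }
    assert(doubled.size() == evens.size());  // mapping never changes the count
    int sum = 0;
    for (int x : doubled) {          // pass 3: add them up
        ++visits;
        sum += x;
    }
    return sum;
}

// The same work fused into a single pass.
int fused(const std::vector<int>& input, std::size_t& visits) {
    int sum = 0;
    for (int x : input) {
        ++visits;
        if (x % 2 == 0) sum += x * 2;
    }
    return sum;
}
```

For N inputs the chained version touches at most 3N elements and the fused version touches N. That is a constant factor (plus some temporary allocations), not a change in how the cost grows.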

As someone who does a lot of Javascript, this is the exact “optimization” I was thinking of. I’ve seen developers say “we can’t use this functional chaining syntax because it’s ‘not optimized’”, but in the vast majority of cases, it’s a complete non-optimization.

Again, context matters, and in the context of a C++ program, optimizing loops is often everything – hence why memory alignment and loop unrolling are classic optimization techniques. That makes sense when you’re crunching lots of data as efficiently as possible. Javascript is almost never used for such a use case. The vast majority of loops are looping over – at most – a few hundred items. Even if the algorithm were n^2, (it’s not), it doesn’t matter when n is small.

Plus, interpreted languages nowadays do on-the-fly optimization when code gets “hot”. So if you can “trivially” optimize code by rewriting it slightly, there’s a decent chance the optimizer can do it too.

—

Your point about the danger of O(n^2), and worse, algorithms is correct – outside of cases where you’re crunching a lot of data, the computational complexity of the algorithm is the most important thing. And C++ doesn’t have any advantage over high-level languages there, as far as I know.

Manual memory management and algorithm design are entirely tangential, as far as I can tell. An O(n) algorithm in C++ is an O(n) algorithm in Javascript, and an O(n^2) algorithm in Javascript is still an O(n^2) algorithm in C++.

I think it would be more clear to say “The compiler can see this, but not the compiled program”.

Also, I think one of the reasons that C++ lacks reflection is probably because of how much more flexible it is with its typing. In C++, you can take any block of memory and decide to treat it as a SpaceMarine. It might crash your program, but you can do it. C#/Java don’t allow for those kinds of shenanigans.

Reflection is also kind of a crappy man’s OO. Anything you’re doing with reflection is something that would be better done with polymorphism, but sometimes you wind up working with code where you’re stuck with a class hierarchy that you didn’t design and where you need to do a lot of casting to sort things out.

Yes, that’s a far better wording. Fixed.

Out of curiosity – I do a fair amount of casting variables in C# for my Unity projects, and I’ve never run into a case where the compiler won’t allow me to treat one type as another. Since I jumped to Unity right from scripting tools like GMS2’s proprietary language, my experience with C++ isn’t nearly as deep as it could be. This series has been really interesting to evaluate the differences.

Is there an easy example out there of an assignment you could do in C++, but not C#?

Sure.

SpaceMarine* spaceMarine;

int number;

spaceMarine = reinterpret_cast<SpaceMarine*>(&number);

spaceMarine->bullets = 10;

There, you’ve now cast your integer as a SpaceMarine. This will compile and run. If you’re lucky, it could even do so without crashing your program. If you’re not lucky, then you’ll overwrite the function’s return address on the stack and your program will crash when it tries to load instructions from memory that it doesn’t have access to.

If you’re wondering why this sort of thing is ever useful, then consider reading a bunch of SpaceMarines from a file. In C#, you have to read the file to an array of bytes, create an Array of type SpaceMarine, then explicitly create each SpaceMarine in that array by casting each set of bytes to some value (int, double, char) and then assigning it to the appropriate SpaceMarine field, one at a time. This makes re-constructing an array of structs from a file slow and difficult.

In C++, you can just say “Hey, see that array of bytes? Just treat that as an array of SpaceMarine” and you’re good to go.

I wouldn’t say that reading data from a file into some data structures is difficult. It just takes some time to write the function that does the mapping. It’s error-prone if you’re saving/loading binary data, so it’s more work to verify what you’re doing, though.

His point isn’t that it’s difficult to write a function that turns the raw bytes from a file into a struct, just that it’s less performant due to the extra copy. reinterpret_cast just tells the compiler to interpret the same chunk of memory as if it were a different struct so there’s literally no overhead.

Except you can’t do that in C++, that’s a type aliasing violation with no defined behaviour.

It might indeed run “correctly” on an implementation that doesn’t check, but if C++ had generalized RTTI it *would* error out because there is no SpaceMarine object anywhere in that code.
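For what it's worth, one way to rebuild structs from raw bytes without the aliasing problem is to copy the bytes into real objects with `std::memcpy`. The sketch below assumes a trimmed-down, hypothetical `SpaceMarine` with only plain-data fields, a made-up `marines_from_bytes` function, and bytes that were written by the same build on the same platform. It costs one bulk copy rather than a field-by-field conversion.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <type_traits>
#include <vector>

// Hypothetical, trimmed-down SpaceMarine with only plain-data fields.
struct SpaceMarine {
    short bullets;
    short kills;
    int hitpoints;
};

// memcpy-based loading is only valid for trivially copyable types.
static_assert(std::is_trivially_copyable<SpaceMarine>::value,
              "SpaceMarine must be plain data to be rebuilt from bytes");

// Rebuilds SpaceMarines from a raw byte buffer, e.g. one read from a file.
// Assumes layout, padding, and endianness match whatever wrote the bytes.
std::vector<SpaceMarine> marines_from_bytes(const std::vector<unsigned char>& bytes) {
    assert(bytes.size() % sizeof(SpaceMarine) == 0);
    std::vector<SpaceMarine> marines(bytes.size() / sizeof(SpaceMarine));
    if (!bytes.empty()) {
        std::memcpy(marines.data(), bytes.data(), bytes.size());
    }
    return marines;
}
```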

char *buffer = new char[1024];

SpaceMarine *marine = reinterpret_cast<SpaceMarine*>(buffer);

Or even something fairly ridiculous like:

int n = 42;

float *f = reinterpret_cast<float*>(&n);

Note that that doesn’t convert the integer to a float. It’s treating the exact bits of the integer representation of 42 as if it was a float, which will probably have nonsense results.

Really the only way C# can do something similar is with explicit offsets in structs, which simulates a union, but that is limited to value types.

You say that… but I actually used that once. I was writing an OpenGL shader, and I wanted to be able to pass an integer to the shader as part of the vertex data. Of course, the rest of the vertex data is an array of floats, so what I did was use the reinterpret_cast to get the float value that matched the bit-by-bit value of the integer, then put that float value in the array.

Several of the very, very fast algorithms for estimating calculations rely on converting the bitwise-representation of floats to integers and back.

I say “estimating” because they give inaccurate results – however, the result is close enough for a lot of purposes.

Eg Quake would not have existed without the fast inverse square root, which relies on this trick.
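For reference, the commonly published form of that trick looks roughly like this. The sketch moves the bits with `std::memcpy` instead of a pointer cast, assumes a 32-bit IEEE 754 `float`, and uses a function name made up for this example. The constant is the widely quoted one, and the last line is a single Newton-Raphson refinement step.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Approximates 1 / sqrt(number) by treating the float's bits as an integer.
float fast_inverse_sqrt(float number) {
    static_assert(sizeof(float) == sizeof(std::uint32_t),
                  "this sketch assumes a 32-bit float");
    assert(number > 0.0f);  // the trick is only meaningful for positive input

    // Copy the float's bit pattern into an integer. No numeric conversion.
    std::uint32_t bits;
    std::memcpy(&bits, &number, sizeof bits);

    // Integer arithmetic on the bit pattern yields a rough first guess.
    bits = 0x5f3759dfu - (bits >> 1);

    // Copy the adjusted bits back into a float.
    float guess;
    std::memcpy(&guess, &bits, sizeof guess);

    // One Newton-Raphson step tightens the estimate.
    return guess * (1.5f - 0.5f * number * guess * guess);
}
```

The result is an estimate, as noted above. For an input of 4 it lands close to 0.5 but not exactly on it.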

What’s the difference between “There is a special main.jai file which contains a listing of project files and how to build them” and “There is a special .csproj file which contains a listing of project files and how to build them?” I can only think of a couple and none of them seem useful.

One advantage might be that you can also put *code* in your main.jai file, but why would you do that? It makes sense to have a separation between compile options and program logic. The first one is something you ideally set up once at the start of your project and then never think about again. Program logic is something you change constantly. It would be very rare that you need to work on both together.

Maybe the intent is that you can have *any* .jai file in your project contain compiler information, but that seems like it would make a lot more work for the compiler – it now has to check every file to learn the dependency graph before it can start compiling anything – and possibly send us back to the bad old days of #include guards depending on how it’s implemented.

Possibly there’s some advantage to having the project listing written in the same language as the code, but of all my issues with MSBuild, having to write in XML isn’t one of them.

The difference is that one is part of the language spec, while the other is specific to Visual Studio.

In addition to what Bloodsquirrel said above, I’d add:

* The .csproj is in its own format. (Is it in XML these days? I didn’t know that. Last I looked, it was just a non-standard text file.) The coder isn’t taught to use this, it’s not part of the language spec, and the IDE actively discourages you from messing with it. (I don’t know if it’s still true, but in the aughts I remember that you had to close Visual Studio if you wanted to mess with the file because it would clobber your changes.)

* In effect, this is a “missing feature” in the C* languages. Every project needs a way to organize the files, and header files, and set compile options. Since it’s not part of the language, it means everyone will roll their own and we’ll have competing specs. This leaves room for Microsoft-style “standardization takeovers” where a large company fills a need with a proprietary solution.

* The programmer will be familiar with this main file, with what’s in it, and with how it works. They can SEE their project without having the options obfuscated behind a dozen dialogs.

* Sharing projects becomes easy. Do we have the same IDE? Do we have the same VERSION of the IDE? Sharing a VS2019 project with someone using VS2015 is asking for trouble, and having multiple simultaneous installs sucks. With the project info as part of the language spec, we can all use our own favorite IDE without worrying about conflicts.

As of 2017 (and probably earlier, but that’s what I’ve checked), Visual Studio lets you edit the project file very easily – it’s in the context menu, no need to leave the IDE. I’ve done it before when the GUI failed me. The MSBuild documentation is not very good, but I’ve never had any of the issues you mention like getting changes overwritten for no reason.

The rest of your issues seem exclusive to C/C++, which is caught in the double trap of not having a good system for including files in a project and being too old and entrenched to fix that in the spec. I suppose writing “C++, but without 40 years of legacy code preventing changes” is a worthy project, but my point is this isn’t a new innovation, it’s the same thing most modern languages do. Saying “your source code is the project file” sounds really cool until you notice that in practice, you still want to keep your actual source code separate from the file that defines the project.

You usually have to unload the project file while the solution is open, or open it for editing without first loading the project/solution. And yes, Shamus, they are currently just big old XML files.

As Shamus said, the main reason the “Jai code is the project file” approach matters is that it decouples the tool you’re using from your source code. I can hand my Jai project to someone on Linux using Vim, or to someone using a bizarre proprietary code editor on their phone, and it will work fine with their applications and compiler because it’s part of the baseline expectation for the language. The same is not true of the existing tools: they all tend to rely on their own special project files or try to duplicate some other tool’s, so while the code is transferable, porting the project between tools or even versions of the same tool is a pain in the ass.

“just big old XML files.”

Is there any other kind?

:)

There are small XML files that are only 4 or so gigs.

It’s possible you want to have a look at V, even if it’s just to skim the page and go “Huh, that could be interesting.”

https://vlang.io/

I’ve been using it for the past couple of weeks to do code puzzles, and from the amount of weird raw C errors and missing features I wouldn’t recommend using it quite yet, but what it’s promising to be one day is pretty Jai-aligned.

I’ve been able to program the same project using it on Windows, Mac, Linux, and on my phone, so definitely pretty portable.

Since .csproj was brought up, it’s interesting to note that in .NET Core (the cross-platform, open source version of .NET) the .csproj format is standardized, used by the included CLI tools (not linked to a particular IDE), and designed to be edited manually.

For example, all .cs files in the project’s directory are assumed to be part of the source, unless explicitly excluded in the .csproj. This means that often the project file doesn’t need to be much more complicated than this:

    <Project Sdk="Microsoft.NET.Sdk">
      <PropertyGroup>
        <OutputType>Exe</OutputType>
        <TargetFramework>netcoreapp3.0</TargetFramework>
      </PropertyGroup>
    </Project>

And since .NET Core is integrated with NuGet, referencing libraries in the project file is also incredibly simple and they’ll automatically be downloaded when you run “dotnet build”.

None of this is true for C++, unfortunately.

The problems aren’t even limited to C++. I was a Delphi developer for quite some time and it was plagued by many of the same problems, without even the handy excuses. It was an essentially Windows-only language with one IDE from one manufacturer. And still you would get compatibility problems if you opened your project in the wrong version. Add to that that every IDE had its own compiler version and you couldn’t tell it to use an older one. For years I had two IDEs on my machine because we did development and fixes for newer software versions on the new IDE, but we had to support the older versions on the old IDE.

I was going to say that project management is probably weird and inconvenient no matter what language you’re using or what OS you’re running, but upon further reflection that might just be my inexperience talking. These days, I program exclusively in Java. From what I’ve seen, different Java IDEs will by default impose different sorts of structure on your project. NetBeans, for example, assumes that you always want your source files in the `src` directory and your class files in the `class` directory. (It seems reasonable enough, but a more experienced programmer than I could probably think of cases where that’d be undesirable.) IntelliJ IDEA has a similar scheme that’s just different enough to be confusing when you switch from one IDE to the other. In full-featured IDEs, adding libraries to a project is, I believe, straightforward enough; there are menu options and wizards for that. The thing is, I don’t really need an IDE for that sort of thing. I know how to do almost all of that on the command line if I wanted to. To replicate what NetBeans is doing, I just need to pass the right directories to the compiler when I compile a file, where “classdir” is the directory for the class files for this project and “libdir1” and “libdir2” are the directories for the class files for the libraries I’m using. In practice, of course, I’m not going to have short punchy directory names like that, but you get the idea. What’s more, this works exactly the same in Linux as it does in Windows, apart from the differences in the way in which the two OSs specify paths.
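The exact command did not survive in the comment above, so the following is only a guess at its general shape, built from javac's standard `-d` (output directory) and `-cp` (classpath) options. `Main.java` is a hypothetical file name. The sketch also shows the one OS difference mentioned: the classpath separator, which Python exposes as `os.pathsep` (`:` on Linux, `;` on Windows).

```python
import os

def javac_command(source_file, classdir, libdirs, sep=os.pathsep):
    """Build the argument list for compiling one file with javac.

    Compiled classes go to `classdir`; `classdir` and every entry in
    `libdirs` are placed on the classpath, joined with `sep`.
    """
    classpath = sep.join([classdir, *libdirs])
    return ["javac", "-d", classdir, "-cp", classpath, source_file]

# Linux-style separator passed explicitly so the result is the same everywhere.
cmd = javac_command("Main.java", "classdir", ["libdir1", "libdir2"], sep=":")
```

Leaving `sep` at its default picks the right separator for whichever OS runs the script, and the resulting list can be handed to `subprocess.run` as is.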

Nevertheless, project management is still kind of terrifying to me, because not only are there different IDEs, there are different build systems, which I believe are analogous to `make` for `gcc` or whatever proprietary thing Visual Studio is doing. Do I use Ant, Maven, Gradle, or something else? Do I even need a build system? For the scale and type of projects I work on, I’m fairly sure that the answer is almost always no, but for professional development I expect the answer is always yes. What little Gradle I’ve picked up has all been due to working with libGDX, where it’s useful for cross-platform development purposes. I have to admit, producing a functioning program for both Android and Linux/Windows from a single set of source files is rather magical.

I would think it reasonable to always use a build system; they’re just so convenient. I also weep for the future, as Gradle seems to be taking over from Maven as the standard. Whenever your build is so complicated that it requires another programming language to set up, something has gone terribly, terribly wrong.

The thing that bugs me about build systems is that, while the various Java IDEs all seem to support all the various build systems, they do not necessarily support the latest versions of all the various build systems. The reason I stepped back from libGDX for a while is that the last time I tried to get a libGDX project going in IntelliJ there was some kind of Gradle incompatibility that was well beyond my ability to handle. The truth, however, is that I am an amateur and all build systems are voodoo to me. Gradle is not unique in that regard.

Node / JS seem to be pretty good for installing libraries, and having a single, well-documented file for specifying where your source is, where the libs are, and what flags to pass to the compiler. Python pretty much just has a few good defaults for where it finds files (local dir, parent dir, system path…?) which you can override with normal code and maybe some command-line flags. Golang has a very well-specified tech stack for finding libs, source, etc. So yeah…not all languages suffer from the project-management headaches that C* does.
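For the Python part, the search locations live in the ordinary list `sys.path`: the script's own directory comes first, then any `PYTHONPATH` entries, then the installation defaults (the parent directory is not searched unless you add it). Overriding it "with normal code" can look like the sketch below. The module name `spacemarine_lib` and its contents are made up for the example.

```python
import importlib
import sys
import tempfile
from pathlib import Path

def import_from(directory, module_name):
    """Import `module_name` from `directory` by putting that directory first on sys.path."""
    sys.path.insert(0, str(directory))
    try:
        return importlib.import_module(module_name)
    finally:
        # Restore the search path so the override does not leak into later imports.
        sys.path.remove(str(directory))

with tempfile.TemporaryDirectory() as tmp:
    # Create a throwaway library in a directory Python would not normally search.
    Path(tmp, "spacemarine_lib.py").write_text("ARMOR = 40\n")
    lib = import_from(tmp, "spacemarine_lib")
    armor = lib.ARMOR
```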

And then, of course, there’s Perl, which set the standard for good library management with CPAN.

Anytime I see the words “dependency hell” I think of autotools and smile. Automatic dependency tracking is too convenient to reinvent.

Typo. “Run Time Type Information” doesn’t have the closing quotation marks.

Typo. Missing “and” between the words “introspection” and “ignore” (perhaps it would be better to also add a comma after the word “introspection”?).

Ahhh, the good old days.

Who were you, DenverCoder9?

WHAT DID YOU SEE!?!?

Hi, I’m going to argue about this:

> Nobody actually does this, of course. The IDE handles it for us.

A lot of people (me, for example) live inside the console and actually do this all the time. Just not with C++ (we use Makefiles for that).

> an architecture that dates back to the 1970s.

The fact that terminal interfaces date back to the 70s is not a concern. If anything, it should be a plus: it is a tried and tested, “boring” technology that just works.

The problem is that terminal-based UIs are still UIs. It takes time and effort to design them well, especially for complex tools like compilers. C++ compilers don’t tend to offer a great user experience on the terminal, perhaps because there’s often an implied assumption that you will use an IDE on top.

Other languages are much more pleasant to use from the terminal. Rust is a particularly salient example for me.

In my experience a lot of it comes from choosing some reasonable defaults. In Rust there is one place to put dependencies (your Cargo.toml file) and one place to put your source code (the src folder). This means that if you add 10 files and several folders to src, your compilation step will still be `cargo run`.

If you need to interact with C/C++ from your Rust project, however, there might be some ugliness involved :) .

+1 for boring tools that work. I’m so uninterested in chasing trendy new languages that haven’t proven themselves. (Trendy languages that have proven themselves are allowed. :)

Pish posh, unproven untrendy languages are clearly where it’s at.

Assume I’m on a Linux machine, but I want to create a build for Windows? How would that work?

Assume I want to be able to create both debug builds with symbols and stripped (pre-)release builds. How would that work?

Assume I want to have more targets than just one, for example unit tests. How would that work?

Assume I have a CI pipeline that tests the build for every commit with different configurations and setups. How would that work?

Assume I have auto-generated files that are included in the build, for example using a parser-generator like yacc or shader compilation or UI middleware that’s based on Flash. How would that work?

Assume I have release/package automation. How would that work?

I am by NO MEANS an expert, and all I’ve done is watch the livestream on the topic, but it would go something like this:

You use if () statements. Sort of like the #ifdef preprocessor stuff in C*, but it’s just regular Jai code. So, if debug build, load these files, if release build, load these other files. I think this means it would be easier to have a lot of different builds. One if statement for Xbox / Playstation / PC. Another if statement for debug / release. In Visual Studio, that means creating and maintaining six builds, and if you want to change something about the debug version then you need to change all three debug builds. Supposedly in Jai, you just add the change inside your if (debug) {}.
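I can't show real Jai, so here is the same idea sketched in Python. The file names, the `DEBUG` define, and the option keys are all invented for illustration. What it shows is that two independent if-blocks cover all six platform/profile combinations, and a debug-only change is made in exactly one place.

```python
def build_options(platform, debug):
    """Compose compiler settings from two independent choices."""
    options = {"files": ["game.jai"], "defines": [], "optimize": True, "symbols": False}

    # One branch per platform.
    if platform == "xbox":
        options["files"].append("platform_xbox.jai")
    elif platform == "playstation":
        options["files"].append("platform_playstation.jai")
    elif platform == "pc":
        options["files"].append("platform_pc.jai")
    else:
        raise ValueError(f"unknown platform: {platform}")

    # One branch for debug vs. release; a change here reaches every platform.
    if debug:
        options["defines"].append("DEBUG")
        options["optimize"] = False
        options["symbols"] = True

    return options

# Six builds fall out of three platforms times two profiles.
all_builds = {
    (p, d): build_options(p, d)
    for p in ("xbox", "playstation", "pc")
    for d in (True, False)
}
```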

It sounds cool. In theory. Of course, a lot of things sound cool in theory.

Yeah, that’s basically what CMake does, then. Or SCons. Or the GNU autotools. Not really new or revolutionary.

Except to codify that with the language itself. Which is mostly a disadvantage, if you’re then mixing the actual project code in. This makes it harder to maintain and change, especially later and especially if it’s a collaboration project. Separation of concerns.

It also stands and falls with the degree of flexibility it allows. In the open source space, there’s a disagreement there with CMake vs autotools, where the autotools allow more flexibility for outsiders to do things the project maintainer didn’t think about. On the flipside, the autotools are more arcane and difficult to understand at first.

I imagine the simplest approach would be to have a separate main build file for each target:

-> jai build-debug.jai

-> jai build-release.jai

As things grow in complexity you’d probably want to pass in flags somewhere and then have your code handle it.

Probably something like:

-> jai build.jai target=linux profile=production

Then your jai script would literally say something like:

    if (string_is_equal(cmd.target, "linux")) {
        compiler.options.target = "linux";
    }
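The flag-handling half of that can be sketched in Python as well. The `key=value` form comes from the command line above; the default values and the rule that unknown keys are rejected are my own assumptions.

```python
def parse_build_args(argv):
    """Turn ['target=linux', 'profile=production'] into a settings dict."""
    settings = {"target": "windows", "profile": "debug"}  # assumed defaults
    for arg in argv:
        key, sep, value = arg.partition("=")
        # Reject anything that is not key=value or that names an unknown setting.
        if not sep or key not in settings:
            raise ValueError(f"unrecognised build argument: {arg!r}")
        settings[key] = value
    return settings

settings = parse_build_args(["target=linux", "profile=production"])
```

Failing loudly on a misspelled flag is a deliberate choice here: a build script that silently ignores `traget=linux` would produce the wrong binary without any warning.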

As for running extra commands your build might need, I would expect jai to provide compile-time access to files/processes (you can do just about everything at compile time, even require you to beat a space-invaders game for compilation to succeed). So you could use jai as your build system for these circumstances.

Otherwise you’d use whatever build system you currently have in place for shader/etc compilation, and just the jai part would be replacing your current makefile call with a jai call.

Basically the theory is that with Jai you should be able to do everything you currently need a makefile/sln/whatever you currently use with C, without needing to learn an extra language.

The trouble is, instead of “needing to learn an extra language”, you end up having to “learn a team-specific or even project-specific” style and set of assumptions, that will be regularly broken as people find that the team-specific idioms can’t easily do the thing they need to do and the deadline is fast approaching…

Worse, if there’s no difference between a ‘project’ and ‘source’ file, they will quickly intermingle – this source file that is mostly “space marine” actually changes the compiler settings.

Re-usable libraries require subprojects that are able to transparently refer to some global project settings (chosen versions of the 3rd party libraries, where each internal and 3rd party library is installed on this machine) and can have overrides placed upon them by the master project (disable feature Y) without changing any of their files.

Makefiles can do this, but they bury these settings in a potentially huge tree of build scripts, with no way for a UI to list them unless the writer of the makefile explicitly writes documentation or a ‘dry-run’ build target to list them.

Such (self)documentation is one of the first things dropped as deadlines approach, leaving you diving through the makefiles trying to find which settings are needed to do Z.

Visual Studio used to be pretty terrible at this.

Since VS2015 it has got a lot better with the advent of “*.props” files and the MSBuild build system (replacing nmake).

CMake is very good at this.

Libraries define options and settings, complete with defaults, can check the options are valid, search for libraries “I need Qt 5.9 or newer and Unreal Engine v3” and the cmake UI (GUI or command-line) automatically lists out the options, filters ‘advanced’ and ‘normal’, groups them together etc.

The Jai architecture immediately falls into the same traps that makefiles do, for exactly the same reason, while also adding the probability that people will add “real code” to the “project spec” source file because “it was easier”.

I don’t really think you’ll find people mixing code and build settings any more than you currently do with things like toggling optimisation settings around functions when debugging things or adding linker dependencies with things like #pragma comment lib.

In my last job the tech director and I had to reverse-engineer the Unity build system in order to make a solution we could quickly iterate on console with. It took us a few weeks to come up with something that worked and that involved creating our own MSBuild plugin to generate platform-specific project files using the output of other build steps – it was a whole mess. If we were able to write it all in the language we used every day things would have gone much faster.

CMake I’m only really starting with, it seems to have become the de-facto standard for cpp libraries which is nice (I don’t love some of its include path handling but the result is functional and it’s easy enough to integrate libraries other folks have made).

It’s still much messier to work with than a language based solution would be though.

I would imagine most of the main build files would be like a CMakeLists file importing whatever file your main() is in, except further dependencies would mostly get added during compilation as everything you use would be adding all their dependencies instead of having one main list somewhere.

Less overhead to adding any file, but you could argue it’s beneficial to have a single list of all the things somewhere (be it a vcxproj or a CMakeLists file). With the compiler metaprogram stuff in Jai though I’m sure it’d be easy to log out all the files you’re compiling with.

In short, I think project files make sense for C++ because of its compilation model where every file is a whole separate process, but once every build is a full rebuild, I don’t think having such a wide divide between source and project is helpful.

Shamus, would you consider some speculation about what’s gone wrong with Bethesda’s quality control? I ask after yet another weird, improbable bug in ’76 that seems to involve completely different objects breaking each other’s data.

That’s not that weird. All it takes is some bad pointer arithmetic.

To be clear, I would love to see Shamus look at some of Bethesda’s hilarious bugs, then analyze what the basic issue might be and how it was missed. It’s speculative of course but would be a fun way to explain common problems and good troubleshooting.

Bethesda has quality control?

Off topic, but have you seen the announcement for a time limited demo of System Shock? I figured people here might be interested.

I’d just like to point out that “Visual Studio Code” is an awesome editor for programming, be it C or PHP or JavaScript.

Likewise “Build Tools for Visual Studio 2019” is pretty awesome.

“Code” editor is available under the “Visual Studio Code” download here https://visualstudio.microsoft.com/downloads/

and the build tools under “Tools for Visual Studio 2019”

80% of your list is fixed in rust ;D

(and you can use rust now, maybe you should try it instead)

http://arewegameyet.com/

Should probably also link the book.

Also, here’s rust introspection (as an add-on crate)

https://github.com/vityafx/introspection

YMMV since it’s a procedural macro, it’s more like RTTI than Reflection I guess?

Looks like the beginning of (what I assume is) the MSBuild call to build BugHunt got clobbered.

Ah, package managers. Yet another reason I switched to Linux and couldn’t switch back now. Sure I had to learn how to type sudo apt-get update && sudo apt-get upgrade into the terminal, but the ability to automatically apply any available updates to any of my installed programs at any time of my own choosing (without ever needing to restart my computer), and to easily add new ones to the list with a few keystrokes is downright magical after the mess of “go to a website, find the download link for the installer, run the installer, now do that again for all your programs you want to keep updated every time there’s a new update out for them” I used to have to put up with.

On another note, for someone like me who doesn’t know a lower-level language than Python, that was a very interesting explanation about why it might be harder to make a Linux version of a game than simply “make a Linux executable”. If Jai can help make it simpler to port games cross-platform (like Vulkan supposedly should do), then I’m all for it.

We know from reverse-engineering that Bethesda uses it. Oblivion, Skyrim, and their editor tools, at least, are compiled using MSVC conventions re: virtual function tables, name mangling, and probably other stuff I don’t know how to spot yet.

You’re missing a closing quotation mark here, between “there” and “It’s.”

While they probably do use the Visual Studio IDE, you can’t tell that from the binaries.

The toolchain doesn’t say anything about which IDE – if any – was used.

These days, if you’re building a binary for Windows then you will almost always use an MSVC toolchain because it’s the one that’s best optimised for that platform and has by far the best debugging support on Windows.

(In the past there were a lot of other choices, but they have almost all died. I suspect the main thing that killed them was MS finally realising that standards compliance is a good thing!)

Just like gcc (or perhaps clang) will be your first choice for Linux, and the Apple-flavoured clang for macOS.

Yes, there are other options, but they are niche – eg on Windows the main other options are Intel compilers (sometimes better performance on Intel CPUs but always worse on everything else), mingw and clang (work but generally slower).

This is changing.

Please see VCPKG on Windows (and now on Linux), or even CONAN for a more expansive crossplatform solution.

But it is undeniable other languages handle package management much better.

Actually, the .VCXPROJ files have been mostly stable since Visual Studio 2012. I have a script that simultaneously generates project files for the same project for every VS since VS2008, and lately, the only change is the compiler identifier itself (I just did it for 2012 vs. 2019, and only two values change, “4.0” becomes “15.0” and “v110” becomes “v142”, in the .VCXPROJ XML file).
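A minimal sketch of that two-value swap, assuming the values in question are the `ToolsVersion` attribute on the `<Project>` element and the `<PlatformToolset>` element (my reading; the comment does not name them). The sample text is a trimmed stand-in, not a complete project file, and this is not the commenter's actual script.

```python
import re

def retarget_vcxproj(text, tools_version, platform_toolset):
    """Return `text` with the ToolsVersion attribute and PlatformToolset elements replaced."""
    text = re.sub(r'ToolsVersion="[^"]*"', f'ToolsVersion="{tools_version}"', text)
    text = re.sub(
        r"<PlatformToolset>[^<]*</PlatformToolset>",
        f"<PlatformToolset>{platform_toolset}</PlatformToolset>",
        text,
    )
    return text

# Trimmed, illustrative VS2012-style fragment.
vs2012 = (
    '<Project DefaultTargets="Build" ToolsVersion="4.0">\n'
    "  <PropertyGroup>\n"
    "    <PlatformToolset>v110</PlatformToolset>\n"
    "  </PropertyGroup>\n"
    "</Project>\n"
)
vs2019 = retarget_vcxproj(vs2012, "15.0", "v142")
```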

So, you can upgrade/downgrade a project to suit your IDE version. But if the underlying compiler is not available, you will also have to upgrade/downgrade your code anyway. FWIW, at work, I use VS2019 to code in a VS2017 codebase, and it works, out of the box, as it did when using VS2017 to code in the VS2013 codebase.

Now, it’s true the language itself doesn’t specify its project file. It’s good as anyone can offer a better solution. But it’s bad because now, we have countless “better solutions”, even if it seems to boil down to CMake, these days.

Actually, it was the contrary.

The point was to have a language that was mostly compatible with C, to make the transition to C++ easier. Which meant you had to start from C, and then keep most C features, both good and bad.

And C already had memory pointers. So the memory pointers **remained**. Same with the C casts. And the macros. And the freaking compilation toolchain everyone complains about, and no one seems to find a standard way of correcting (because, yes, C++ toolchain was, and mostly still is, initially, C’s).

You can have native introspection, or you can have a low-level language, but not both.

A perfect compiler would produce an executable from high-level code that was better than the top human could produce in low-level code, and that is why compiler implementation is the hardest problem in computer science- and very underserved, given that programmers regularly have problems merely invoking the compiler.

I _love_ introspection in Python, and it’s amazing for writing more efficient (stuff done per line, not per CPU cycle) and safer code.

Example:

Most space marines in your game have no lasers but there’s a small elite force which has. You could make a subclass “EliteMarine” which has the additional marine.laser.charge and marine.laser.power properties to keep track of the laser’s state. But maybe if one of these gets killed, someone else can pick up the laser, and now you have regular marines with lasers? You could deal with that by giving _every_ marine the .laser.xxx properties, along with “marine.has_laser” to track whether they have one, but most marines would never even use that!

Or, with introspection, you could (in pseudo Python code) just do something like:

    def shoot(marine, target):
        '''tells a marine to shoot at a target coordinate vector with their primary weapon'''
        if hasattr(marine, 'laser'):
            marine.laser.fire(target)
        else:
            marine.gun.fire(target)

(edit: dangit, how do I get indentation to work in code tags? neither spaces nor tabs seem to have any effect…)
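To see the "someone picks up the laser" case actually run, here is a small self-contained version. The `Gun`, `Laser`, and `Marine` classes and their numbers are stand-ins invented for the demo; only `shoot` follows the example above, with return values added so the result can be inspected.

```python
class Gun:
    def fire(self, target):
        return f"bang at {target}"

class Laser:
    def __init__(self):
        self.charge = 100
        self.power = 5

    def fire(self, target):
        self.charge -= self.power  # each shot drains the laser
        return f"zap at {target}"

class Marine:
    def __init__(self):
        self.gun = Gun()  # every marine starts with only a gun

def shoot(marine, target):
    """Tell a marine to shoot at a target coordinate vector with their primary weapon."""
    if hasattr(marine, "laser"):
        return marine.laser.fire(target)
    return marine.gun.fire(target)

grunt = Marine()
before = shoot(grunt, (3, 4))  # no laser attribute yet, so the gun fires
grunt.laser = Laser()          # the grunt picks up a fallen elite's laser
after = shoot(grunt, (3, 4))   # same function, now dispatches to the laser
```

No subclass and no `has_laser` flag are needed: the attribute only exists on marines that actually carry a laser.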

Also a piece of introspection: The Docstring (the second line in the example) is mostly just used while programming, by the editor, so when you start calling the function, it can automatically show you this comment, so you know what you’re doing. But it can still be accessed at runtime. It’s of course not recommended to put things in docstrings which influence the running program, but it’s super useful if you import a library, call a function out of that library and don’t understand what’s going wrong … you might not be able to or want to look at the source code, but it can still show you the docstrings, which should explain most of what you need to know.
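A short sketch of reading that docstring at runtime, using the standard `inspect` module. The function body is left empty here because only the metadata matters for this example.

```python
import inspect

def shoot(marine, target):
    """Tell a marine to shoot at a target coordinate vector with their primary weapon."""

summary = inspect.getdoc(shoot)  # cleaned-up docstring, available at runtime
raw = shoot.__doc__              # the same text, stored on the function object
params = list(inspect.signature(shoot).parameters)  # parameter names, also introspectable
```

The built-in `help(shoot)` prints the same information interactively, which is handy when all you have of a library is its compiled files.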