In my column this week I talk about the AI that recently beat some pro StarCraft II players 10-1. This was a tough column to write because I think that:

- The developers that designed the AlphaStar AI have done something really remarkable and groundbreaking, but…

- …they also overstated their accomplishment.

It’s hard to criticize someone that’s just invented something amazing without coming off like a smug idiot. I don’t really have a problem with their AI, or even with the constraints placed on the match that tilted the game in favor of the AI. My problem is with the claims they made that made it sound like the AI was playing with human-like restrictions on speed and perception, when this simply wasn’t the case.

If you want a much more technical analysis, this article by Aleksi Pietikäinen offers a pretty good breakdown of what AlphaStar was doing during the game and why its performance doesn’t really match the developers’ claims.

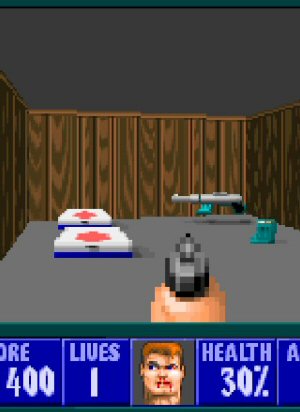

T w e n t y S i d e d

I’ve been surprised by the naivete of certain Starcraft commentators who analyzed the games on YouTube. There was a tendency to assign human intentions, and to assume that every weird move had an ascribed objective, which simply doesn’t jibe with how I understand such AI to work. It seems like the agents developed by playing games against each other, so you can imagine that some weird behaviors were retained for no particular purpose.

Every weird move had the goal of victory. The commentators pointed out that human players play defensively against certain counterbuilds, while the AI had no need to because it has good enough micro to win.

What I found interesting was that having more probes than humans use results in more wins.

The AI’s strategies are solely trained against AI – so no strategy is based on having better micro to survive a tactical disadvantage. They’re strategies that are designed to work against someone who also has superhuman micro.

It looks like this changes the balance of the game though. Stalkers and Phoenix aren’t used to exploit a micro advantage, but they’re much better than other SC2 units when playing with high micro.

That just means preferring the units that provide a disproportionate advantage with godlike micro.

To a first approximation, that means preferring the units with the longest range: Stalkers.

Yes – what I’m trying to say is the AI isn’t planning on having good enough micro to not need to play defensively. Unless you mean, at a certain level of micro playing aggressively becomes ineffective even with godlike micro.

Then again, harassment must work at that level in some form, because the AI used some harassment strategies.

If you harass successfully and your opponent does not, you have an eco advantage. All other things being equal, that wins.

I think the ‘overproduction’ of workers is largely a proactive counter to harassment tactics, but I’m not sure.

And my point is that if there are two unit types that are balanced with the best human micro, it’s likely that one of them is more efficient with godlike micro, and that if human-level play was considered in designing units it’s likely that with godlike micro there’s a unit composition that is excessively powerful.

That’s cool! I thought you might be interested in that, so I emailed it to you. :D

Great article! It can also apply to what happened with OpenAI’s matches vs DoTA pros.

Really? While the “fighting on AI’s terms” is still true with regards to map and character selection, there were actually stronger constraints in place for OpenAI’s agents: They could only react to players something like 100 ms (I forget the right number, sorry) after first spotting them, AFAIR they had a reasonable EPM limit, and the only times they reacted INSTANTLY were in situations where they already saw enemy players way before said players started throwing their specials around (I’m basically thinking about the instant-silence there). I think a similar result could have been achieved by a human by just being really concentrated on that one player and having their finger “on the trigger”, ready to drop the silence as soon as they saw any funny business.

Also, I don’t think DOTA relies quite so much on “microing” the camera, does it?

The first time they played vs pros was in the Shadow Fiend match (which is a very stripped-down version of DoTA). There the AI also had API access, which was monumentally unfair. I thought I said that in my original comment – at least in my head I did. Apparently, today I’m even more absent-minded than usual :D

But for the second game, you’re totally right – the only big restriction was the hero pool and the fact that every player got an invulnerable courier (at least I think it was invulnerable).

In any case, I’ll be very interested to see how it does at this year’s TI, because the series vs paiN was incredibly entertaining.

In the article I linked, Aleksi Pietikäinen made the case that:

Serral is the fastest player in the world. He is 50% faster than the next closest player.

AlphaStar is quite a bit faster than Serral. On AVERAGE their EPM might be in the same ballpark, but during an engagement Alpha can spike up and take double or even triple the number of actions that Serral can. These extreme bursts are what enabled AlphaStar to win the matches. It would show up to a fight with a dumb unit composition and attempt to fight UPHILL through a chokepoint, and still win because of those APM spikes.
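To make the average-versus-burst distinction concrete, here is a minimal Python sketch. The action timestamps are invented for illustration and are not taken from any replay. The helper names and the 5-second window are assumptions too, not how any replay tool actually measures APM or EPM.

```python
from bisect import bisect_right

def average_apm(times_ms, game_ms):
    """Actions per minute averaged over the whole game."""
    return len(times_ms) * 60_000 / game_ms

def peak_apm(times_ms, window_ms=5_000):
    """Highest actions-per-minute rate seen in any window of window_ms."""
    ts = sorted(times_ms)
    best = 0
    for i, t in enumerate(ts):
        # Index of the first action inside the half-open window (t - window_ms, t].
        first = bisect_right(ts, t - window_ms)
        best = max(best, i - first + 1)
    return best * 60_000 / window_ms

GAME_MS = 60_000  # a one-minute toy "game"

# Hypothetical steady player: one action every 250 ms, all game long.
steady = list(range(0, GAME_MS, 250))

# Hypothetical bursty player: one action every 500 ms, except for a
# five-second fight in which it acts every 50 ms.
bursty = (
    list(range(0, 30_000, 500))
    + list(range(30_000, 35_000, 50))
    + list(range(35_000, GAME_MS, 500))
)

for name, times in (("steady", steady), ("bursty", bursty)):
    print(name, average_apm(times, GAME_MS), peak_apm(times))
```

With these made-up numbers the steady player averages 240 and never exceeds 240, while the bursty player averages only 210 but peaks at 1200 during the fight. An average-based cap would rate the second player as the slower one.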

I don’t think that Serral is making as many meaningful actions as the APM measurement suggests.

About 360 APM is equivalent to typing at 120 WPM. I don’t think Typing of the Dead speedrunners hit the same number of deliberate actions per minute that Starcraft players claim. I think that the tools which measure ‘APM’ measure something far different from the number of unique intentional actions taken by the player.

This is absolutely correct. Which is why Serral’s APM is much much higher than his EPM. EPM = Effective Actions Per Minute, a purported measurement of how many of the actions in a player’s APM are actually useful. I don’t know how it is measured or how accurate it is, but yeah, the problem you are pointing out was already accounted for in the article (and by Shamus, here, who says EPM and not APM).

(Assuming I understood what you were saying correctly.)

If OpenAI didn’t enforce a 15ms or longer reaction time in every case, it had a shorter loop than the part of a human’s setup outside of the brain.

Vertical refresh at 60FPS happens every 16ms or so, so the average wait for vsync is 8ms.

It takes about 7ms for motor nerves to transmit a signal from the brain to the arm.

15ms reaction time is 0ms of thinking.
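The arithmetic above can be written out as a short Python sketch. The 7 ms nerve figure is the comment's own number, taken as given. The half-frame average wait assumes the event lands at a uniformly random point within a refresh interval, and `thinking_ms` is a hypothetical helper name.

```python
REFRESH_HZ = 60
MOTOR_NERVE_MS = 7.0  # the comment's figure for brain-to-arm signal time

frame_ms = 1000 / REFRESH_HZ       # about 16.7 ms between refreshes
avg_vsync_wait_ms = frame_ms / 2   # on average you wait half a frame
overhead_ms = avg_vsync_wait_ms + MOTOR_NERVE_MS

def thinking_ms(reaction_ms):
    """Time left over for deciding what to do, clamped at zero."""
    return max(0.0, reaction_ms - overhead_ms)

print(f"overhead outside the brain: {overhead_ms:.1f} ms")
print(f"thinking time at  15 ms reaction: {thinking_ms(15):.1f} ms")
print(f"thinking time at 100 ms reaction: {thinking_ms(100):.1f} ms")
```

This only counts the two delays the comment mentions. Display lag, input polling, and the trip from eye to brain would all push the human's overhead higher.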

My only nitpick with the column itself is about the different agents. Is it fair to say that the agents are different players, or is it more appropriate to think of them as different strategies by the same player? The whole “once I figured out what my opponent was doing, I knew how to counter it but the advantage already taken made it too late to execute the counter” is a staple of pro games too, so I am not convinced we should read too much into the fact that each game was against a different agent.

Without a way for the agents to switch strategies, it would definitely be more like extremely specialized different players than one player adapting different strategies for different situations. From what Shamus said in the article, it seems likely that if you simply had them play best 3 out of 5 the agent would be unable to adapt and so would keep using the same strategy the entire time, even if it resulted in a loss.

Each AI has different branches though – if it’s rushing and you rush too, it changes what it was going to do. DeepMind have a probability map for each agent.

I don’t really buy it as an unfair advantage. Every pro player comes into a tournament with 5+ different builds prepared, which they vary to make themselves unpredictable. In Shamus’ Best of 5 scenario, no pro would try the same strategy 5 times in a row.

If you designed a simple algorithm where the ‘AI’ rolled a die before each match and picked an agent’s build plan, that would be ‘one’ AI mimicking how pros do play.

It’s disappointing from an exhibition point of view though. I don’t think it’s an unfair advantage, but it does stop us from seeing how flexible or repetitive their AI is.

But in a best of 5, that wouldn’t really be how the pros do it. They’d try to pick up on tendencies they noted from the previous game and shade it that way, even getting into attempting to predict how they’ll react to what happened in the previous game.

And we know from sports that players don’t vary their strategies that much, because they have things that they are stronger or weaker at and so always try to play to those strengths, while trying to play away from their opponents’ strengths as much as possible. In curling, for example, you might have teams that are better at or prefer hitting or prefer softer shots, and so playing against those teams you want to push them out of their comfort zone, but it’s not good for you if you push yourself too far out of YOUR comfort zone because you generally don’t make as many of those shots nor are you as good at the strategy for that sort of game. I imagine that esports work the same way; you can’t be the best player at all possible races and all possible strategies. If you’re good at all of them, you’re merely good at all of them (which can itself be a strategy you can use to win over a best of 5).

Pros in Starcraft absolutely vary their strategies as much as the AI did.

Sure the AI is only varying at random instead of picking their strategy strategically. I fail to understand how that’s an advantage to the AI.

If you beat a pro in a game you still don’t know if they’ll go with a different strategy or not in the second.

No, but if you have a fairly good idea of their playstyle and their common strategies you can definitely make some general predictions.

“Hass” is known for doing a lot of weird cheese builds. “Innovation” is known for really solid execution and macro. And pros actively study their opponents’ strategies to determine their habits, strengths and weaknesses, and preferred styles, and partly base their own strategies on that knowledge.

Like Shamus said, it’s not an insurmountable problem, but in a real top-level tournament the human would at least have some idea what to expect.

Sometimes, and sometimes not. Every tournament you would expect some players to show up with new builds. It’s an advantage – but one any new pro would have. If people see the AI play for 50 games, the AI will be, if anything, at a tactical disadvantage.

The AI is also unlikely to know or have trained against the builds human players use, so the AI has the same disadvantage.

The difference here is really that if you were to play a real human with a proven track record (which you need to be a pro level player of SC2) you could study their previous games, tournament participations and general trends of playing to figure out roughly what they do and when. Thus you could have a fair, if not perfect, understanding of how that player plays. Even if they switch up their strategy they still have tells, signature moves, preferred strats and build orders. Even if you come utterly unprepared (what kind of pro are you?) you’d still understand the current top level meta of the game, you’d know what build orders, strats and counters are favored and could react from that. You might not have played a ton of Probe spammers (not a thing), but if someone employed the strategy on you, you’d know the theory on how to counter it.

The AI on the other hand has no meta and since you haven’t studied it and it is essentially a deck of different set behaviors, you can’t prepare and you can’t anticipate. This is why the randomness of a deck of different AI sets is actually a negative for a human, because you don’t know what DeepAISet4 is or what it plays, but you’d definitely know that if you went up against another human pro and you can’t learn about DeepAISet3 from playing DeepAISet4, because they are a different bunch of algorithms with no overlap. You can’t extrapolate anything between AI sets, whereas you can definitely extrapolate or anticipate how a human player will play based on their previous performance.

It’s an advantage to the AI because the human can’t use their observations in the next match.

“Huh. This guy is leaving his expansion undefended. Also, he only attacks my base from the left side, never the right. If I got to fight him a second time I could take advantage of that and win.”

But you can’t use that knowledge, because you don’t get to fight that agent again.

Since the human learns about their opponent from previous matches and the AI doesn’t, this setup negates the human’s advantage. It’s not CHEATING or anything. It’s just that their show match was designed to give the AI the best chance possible.

But the AI is even more handicapped by incorporating _no_ information from previous games.

And having an AI play only one build, whilst the human plays as many as they like is not a level playing field, but a massive disadvantage to the AI that no human player would ever face in a tournament setting – the human player wouldn’t need to scout!

I don’t see that as deliberately putting the AI in the best light. Sure the fairest would be if pros could see the agents play before the show match, to get some idea of AI builds, but realistically that spoils all the fun of a show match.

“But the AI is even more handicapped by incorporating _no_ information from previous games.”

Exactly. The AI CAN’T do it, so they set up the parameters to take that advantage away from the human as well.

Worse than that they didn’t tell the human. TLO even said he played for a long game in game 2 because of the game one cheese.

Humans can cultivate the same advantage by learning more strategies and bringing a die to select one at random.

At least, I assume that dice are permitted in SC2 pro play.

Indeed. Who defines what the scope of this opponent is? If it’s defined as only a single neural network (program? system? thing), well that’s a massive handicap for the AI, because apparently they need quite a narrow focus to get going. But it would be trivial to take a number of separately developed programs and put them under a single “head”, which just randomly picks which one to use. Or picks based on some algorithm. Or potentially and less trivially, is itself a further neural network program which has been trained to pick between running those sub-programs, and maybe even switching between them. It’s turtles all the way down really.

I would assume that they’re running off a modified version of the game engine, so they can speed it up to get bajillions of games to brute-force that machine learning.

Hand-coded algorithms are widely believed to never exhibit more intelligent behavior than their programmer knows how to code into them.

The entire point of a neural network is that it can be smarter than the person who designed it. (For a specific, narrow definition of ‘smarter’)

Also, technically a human opponent is ALSO a neural network. It’s just running on a type of hardware that only about half of people can assemble.

And the humans don’t really know how they do it either. So in a sense, half of all humans have their own specialized network for constructing new networks.

https://xkcd.com/387/

Too bad stick guy doesn’t understand sexual reproduction.

I think it kinda does, though – just not exactly in the way Shamus describes. I remember the team saying that previous games don’t affect the AI’s strategy for the current game. When pros play a series, previous games heavily affect strategies for future ones. E.g. if game 1 was a protracted late-game slug-fest, you can usually expect some cheese or a rush in game 2, so as a player, you’re probably going to be very careful and take extra precautions. On the other side of things, if you win game 2 with a rush, then for game 3 you can expect your opponent to be very paranoid, so you can take it easy and go for some high-tech units. Or, if you know your opponent, maybe you can extrapolate that he’ll be very angry after losing game 2 to a rush, so in game 3, maybe you should be extra cautious. Part of the game is exactly these mind games pros play on each other.

But for AlphaStar, this was not the case – it had two series of best-of-five’s, and one individual match. But from the AI’s perspective, these were eleven games, completely separate from each other, and neither human player could adapt their strategy based on what happened the previous game, because that previous game effectively took place in another universe, and had no bearing on the current game.

Based on what I’ve read:

Agent A has evolved to attack with mass stalkers.

Agent B has evolved to attack with mass scouts.

If you fight A and build units to counter stalkers, it won’t switch to B’s scout strategy. It will just build stalkers until it loses.

The developers choose which agent they will run. So it’s not like one player for five strategies. It’s like five players who each only know one strategy.

If the AI devs want to simulate a player, then all they need to do is have a program that picks an agent at the start of each game, either deterministically or stochastically. It seems to me pretty similar to the problem of the pro player who needs to pick a starting build at the beginning of the match with zero information.

The point here was to test each agent, though, rather than going through this rigmarole. But there’s no difference.
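The picker described above could be sketched like this in Python. The agent names are placeholders and both helper functions are hypothetical. Nothing here reflects how DeepMind actually selected agents for the show matches.

```python
import random
from itertools import cycle

def make_random_picker(agents, weights=None, seed=None):
    """Stochastic choice: roll a (possibly weighted) die before each game."""
    rng = random.Random(seed)
    def pick():
        return rng.choices(agents, weights=weights, k=1)[0]
    return pick

def make_round_robin_picker(agents):
    """Deterministic choice: step through the agents in a fixed order."""
    order = cycle(agents)
    def pick():
        return next(order)
    return pick

# Placeholder names for separately trained single-strategy agents.
AGENTS = ["agent_a", "agent_b", "agent_c"]

pick_random = make_random_picker(AGENTS, seed=2019)
pick_in_turn = make_round_robin_picker(AGENTS)

best_of_five = [pick_random() for _ in range(5)]
print("random series:     ", best_of_five)
print("round-robin series:", [pick_in_turn() for _ in range(5)])
```

Either picker makes the pool look like one player from the outside. What it does not do is use anything learned in game 1 to choose the agent for game 2, which is the part the humans in this thread say they would exploit.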

Even then, it seems that this AI might be incapable of switching mid-match. It has decided on (say) mass Stalkers right from the start of the game and will attempt to execute that strategy.

It’s been a while since I’ve really followed Starcraft II, but I have to wonder how this AI handles “cheese” rush plays. (Do these still exist? I understand that revised starting conditions in the LotV expansion made rushes much harder as everyone starts with a stronger economy)

Does its superior APM let it play through virtually anything, or if you (say) try a cannon rush somewhere has it got no answer?

—

I know that in Chess when Grandmasters are planning on playing against computers, they’ll do special anti-computer strategies. At the time I learned this, the best chess-playing computers could plan roughly 10 to 11 moves ahead (this may not have changed, the problem is exponential in nature). The GMs would therefore plan 12+ move exchanges to get marginal material or positional advantages that the computer simply couldn’t plan for. (It works sometimes, other times the AI manages to see something the GM didn’t – twelve moves is a long sequence!)
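A rough Python sketch of why two extra moves of lookahead are so expensive. The branching factor of 35 is a commonly quoted ballpark for chess, used here as an assumption, and the count is for a naive full search. Real engines prune heavily, so treat the absolute numbers as illustrative only.

```python
BRANCHING = 35  # assumed average number of legal moves per position

def positions_at_depth(plies, branching=BRANCHING):
    """Positions a naive, unpruned search visits at the given depth."""
    return branching ** plies

for depth in (10, 11, 12):
    print(f"depth {depth}: about {positions_at_depth(depth):.2e} positions")

# How much more work is depth 12 than depth 10?
extra_work = positions_at_depth(12) // positions_at_depth(10)
print(f"depth 12 costs {extra_work}x the work of depth 10")
```

Under these assumptions, going from 10 to 12 multiplies the work by 35 squared, which is 1225. That is the gap the anti-computer lines were trying to hide in.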

I can imagine Starcraft GMs planning something similar. It’s not totally unprecedented for a player to pick an off-race in tournament: https://www.youtube.com/watch?v=UrJ9lGuvi9A (Scarlett, normally a Zerg player, switches to Protoss for a match). Tournament rules vary on whether players can switch races/matchups, but even then “Random Race” is allowed… it’s just that nobody plays it to the top-tiers of tournaments. Generally random players are eliminated early on.

Technically a Random player playing very aggressive rushes could have an edge because the AI doesn’t even know what set of pieces you’ll be using. I suppose the AI could eventually develop to handle any permutation of Random v. Random itself but that greatly increases the possible complexity of the match.

But do they pick an off-race for no reason but surprise or is it because they e.g. know something about their opponent (preferred strategies, control quirks or such) that would be easier to exploit with the off-race?

Just wondering.

Sometimes they pick an off-race for reasons unrelated to winning that match.

But it’s quite rare. Picking an off-race is a big handicap.

It’s extremely rare. That was the only example I could think of. It may have happened again since I stopped following SC2 regularly though. In the example match I linked, Scarlett had practiced that off-race build with another player and kept it as an ace up her sleeve. She’s interviewed about the series here: https://youtu.be/TerOadzxXu4?t=984 but doesn’t really say more about it than I did. If I recall correctly about the game during that time, the build she used was extremely powerful and was likely applicable against her opponent.

If “pro vs. computer” became a serious thing I expect that you’d see the pro players attempt to use Random Race to get an edge. Nobody* does this in current events but I imagine that it’d throw AIs for a bit of a loop. The pro has a 1/3 chance of getting their preferred race anyway, and they can probably play their “off” races well enough to beat the surprised AI, especially if they take some time to practice the two off-races and can know what the AI plans to play.

“Does its superior APM let it play through virtually anything, or if you (say) try a cannon rush somewhere has it got no answer?”

I could see rushing not working because of the APM, both because of micro and because the AI could easily be ahead of any player in unit production. In those circumstances, it’s my impression that a rush would be better against a player who is trying to tech up, but I think one of the players interviewed in the article mentions that the AI didn’t bother with that.

It beat a cheese attempt by Mana and the builds have branches – the mass stalker one won’t always build mass stalkers.

But disappointingly, we didn’t see how well it does this. It does scout, for cheese and for the opponent, but when I went through the replays I wasn’t good enough to see if it was reacting to the scouting info.

Playing off-race is a huge disadvantage. The TLO match should be written off – they underestimated the AI’s strength.

Game 2 (6:30 on Winter’s video) it canceled a building after TLO scouted it, so I think it was reacting. But its micro was so good that generally the reaction would be “I should build more Stalkers”.

Empirically, if you fight Agent A and build units to counter Stalkers, Agent A uses godlike micro to win with stalkers anyway.

I did AI and Philosophy of AI WAY back in my university days, and am somewhat bemused at some of the claims of AI using neural nets (also known as connectionist systems back in my day). They’re great at learning from a training set, but have always had one flaw that I don’t know if they’ve corrected and which is important for the fact that they only can play Protoss vs Protoss: because neural nets don’t store semantic information (meaning) anywhere in the system, any attempt to learn something new runs the risk of having it “forget” things that it had already learned, and things that are unrelated to the new thing it’s trying to learn. So, in the case of Starcraft II, trying to teach it how to play as Protoss vs Zerg or even as Zerg instead of Protoss runs the risk of having it, at the end, master the new learning but end up as an incompetent Protoss player. And, even more specifically, it could learn how to play Zerg Overlords but constantly mismanage Protoss Dark Templars, even if it had mastered them before.

This behaviour follows from how it has to shuffle the components of the net to learn anything new, but all of the components, in one system, play a role in determining the outputs from a variety of often unrelated inputs. If you make it so that the shuffling is too easy and so the net is less static, then forgetting things happens a lot more often. On the other hand, if you make it too static then the system has a hard time learning anything new, and this balance isn’t at all obvious.

For Starcraft II, you’d probably just want to build separate nets for each case, as they are statically determined at the beginning of the game and there aren’t that many of them. But then you run into issues with facing differing strategies, so you’d want them to be able to learn how to handle that and get back into the same situation, especially since some master strategies can be foiled by simple strategies that would be idiotic except against that specific strategy.

“some master strategies can be foiled by simple strategies that would be idiotic except against that specific strategy”

Any strategy that can foil a master strategy is a master strategy, even if it is also simple, unless there is some other strategy which is *strictly better*. (Assuming a game where every outcome is on the Pareto Frontier; there is no outcome that both you and the other player(s) prefer to any other outcome).

Not if it’s a simple general strategy that generally loses but happens to work well against that strategy, so you have a very novice player who plays one strategy that no one else does and happens to win precisely because of that. Pro players have a chance at recognizing that, especially if it’s a standard starting strategy, but an AI will likely have a harder time if they haven’t seen it in a while.

As an example, in high school I easily beat a player who was at least as good as me if not better in chess because I always started with the standard four-move checkmate move — I didn’t expect it to win, but it got me out front and opening up offensively — and he forgot about it because no one else used that as an opening.

It’s weird to discuss ‘strategies’ in games with hidden information and simultaneous action using the same word as used to discuss ‘strategies’ in games with perfect information and sequential quantum actions.

In SC2 a novice player will never beat an experienced player ever. Strategy is astronomically less important than mechanics.

To use an analogy from pro combat: if Floyd Mayweather is relatively weak to a left hook and a novice tries throwing it they still lose because their hook, though good in an abstract strategical sense, is still not good enough because they are a novice, and you still have to throw your punch with skill.

This would mean that using SC2 as an example of good AI strategy is a bad example, since strategy doesn’t matter that much.

I… I think this might actually be maybe not exactly “the case” but surely the fact that “wide vision” and “godlike micro” give the AI such a massive advantage despite making what would otherwise be poor decisions (like the attacking uphill scenario) gives some credence to this statement.

More specifically we’re entering a sort of circular argument along the lines of “but that is not strategy, just mechanical advantage” “being aware of your mechanical advantage and using it is strategy”.

The problem is that there’s no reason to think that that’s an actual strategy it’s using. A neural net could in theory be trained to do that — although it still wouldn’t really be “aware” of it as all it does is react to an input — but in this case it looks like it trained against AIs that wouldn’t be slower, and so if it gets those results it looks like the AI simply doesn’t punish those sorts of actions hard enough and so those strategies are left in, and would cost it the match except for that mechanical advantage.

I was responding specifically to his talk about a novice player, strategy is important eventually as I outlined in the other post.

The fact remains that if you, a novice Starcraft player, were to play me, of decent skill, in 1000 games, I wouldn’t lose a single one. Similarly if I played TLO and he off-raced as Protoss, he would also win all 1000 games.

Strategy is extremely important when players are of similar skill level. But if they are not at similar skill level it simply doesn’t matter.

A player named Destiny once got to Diamond by massing queens, which is an objectively terrible strategy. Because he was better than the players he faced he made more queens than they could of any other unit and they lost. Macro > everything.

To give you an example of how strategy is important, once upon a time in SC1 Zerg vs. Protoss was considered borderline unwinnable for the Zerg. Then along came a player named Savior, who developed a new strategy which single-handedly flipped the matchup from the 30-70 Zerg nightmare it was before to a 60-40 nightmare for Protoss once other players started using his strategy. (Numbers may vary; my memory isn’t perfect here.) Strategy is important if mechanics are close to a parity. Thus, restrictions on AI.

Maybe I’m missing your point, but if your opponent was losing to troll chess openings, they weren’t exactly a master.

The non-standard openings are nigh-objectively worse than the standard openings (barring some great shift in chess theory) – they can surprise an intermediate player, but a player with strong fundamentals can exploit the weaknesses in the opening, even if they haven’t seen that particular opening before.

It’s not that the “four move checkmate strategy” is actually a strategy that foils the master strategy of “playing a real opening”… it was a weakness in the player, not in their strategy.

Even masters fall for the Scholar’s Mate.

Jerry is the best!

The point of the example is that the better player can lose a game to a move that’s basic and not particularly advanced but that they didn’t anticipate because no one actually does that (it’s not a common opening at higher levels). This was only furthered in the tournament I was playing in because I played someone who wasn’t as good as me who fell to that move, too. We played more games and then he learned not to fall to it, and when my coach admonished me a bit for making him better I commented that it was fine because no one else used that move [grin].

The overall point is that you need the AI to be able to do what you said master players will do: recognize a non-standard strategy that happens to work exceptionally well against their chosen strategy and adapt accordingly, but it seems like the AI here can’t change strategies, or else they would have had it play the human players in a more standard best of 3 or 5.

A very important question:

Why was nobody using it, if it worked?

My answer:

Because the top ranked human players simply have not mastered the entire game, because they only ever train against people who have not mastered the entire game.

I’m inclined to give AlphaStar’s choices that diverge from human players’ significant consideration as ‘better’; using more probes and not trying to build a wall being the ones that the commentators mentioned.

The AI testing mechanism was designed to be tested against all the bad early builds so that it doesn’t ‘forget’ them – it’s one of the newish techniques they’ve been using, where they use metrics across a whole field of agents instead of comparing to the best (and select a series of the best instead of selecting one individual winner, as is the norm).

Take the same topology of neural net, train it on Protoss v Zerg, and then compare it to the one trained on Protoss v Protoss. Where things are the same or similar, merge. Where things are different, add new nodes to the net, and weigh the ‘duplicates’ with the output of a new section of neural net that predicts whether the current match is Protoss V Protoss or Protoss V Zerg.

I don’t even see what’s hard about any of that, so obviously I know nothing of AI.
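A heavily simplified Python sketch of the gating half of that proposal. It skips the node-merging step entirely and just blends the outputs of two fixed stand-in "experts" using a gate that guesses the matchup. Every function, feature name, and number below is invented for illustration, and nothing here is a trained network.

```python
import math

def gate_p_zerg(zerg_units_seen):
    """Stand-in for the 'new section': probability the opponent is Zerg."""
    return 1.0 / (1.0 + math.exp(-10.0 * (zerg_units_seen - 0.5)))

def expert_pvp(obs):
    """Placeholder for the net trained on Protoss v Protoss."""
    return {"build_stalker": 0.9, "build_colossus": 0.1}

def expert_pvz(obs):
    """Placeholder for the net trained on Protoss v Zerg."""
    return {"build_stalker": 0.2, "build_colossus": 0.8}

def blended_policy(obs):
    """Weigh each expert's action preferences by the gate's belief."""
    p = gate_p_zerg(obs["zerg_units_seen"])
    pvp, pvz = expert_pvp(obs), expert_pvz(obs)
    return {action: (1 - p) * pvp[action] + p * pvz[action] for action in pvp}

print(blended_policy({"zerg_units_seen": 0}))  # leans on the PvP expert
print(blended_policy({"zerg_units_seen": 3}))  # leans on the PvZ expert
```

The blend itself is the easy part. Whether the combined system can keep learning afterwards without damaging what each expert already knew is a separate question that this sketch does not address.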

It’s not learning, and the point of training and building AIs is to get them to learn. It’s also potentially hugely wasteful. And, again, you run into the issue of semantics, as the parts where they are the same might well map to completely different notions and ideas. Again, this is fine if you want to keep the nets static, but then you make them no longer learn. And this then turns the lovely “learning” way to do AI back into the old brute force way that everyone rejected.

To build really good neural net AIs, you want the AI to learn each and every one of those connections. That means that you have to let it change them in response to new stimuli and situations. And that means that it can rather hilariously forget things that it had completely down and that aren’t at all related to the new stimuli and situations because the connections get mapped differently. Anything else turns into what I suggested: completely separate neural nets for each situation.

Why wouldn’t the gestalt be able to learn, once it was formed?

And there aren’t ‘notions’ or ‘ideas’ that map to those nodes, ever. At best, those nodes map to portions of the territory, and the *map* is an ‘idea’.

Because it was the learning mechanisms that caused the problems in the first place, because neural nets “learn” by shuffling the weights of connections between the nodes — and sometimes creating new ones — until they correctly respond to all of the examples in their training set. If you try to get the gestalt to learn, it’s going to shuffle weights and connections but since it doesn’t know what each set of connections “means” it will shuffle weights that correspond to unrelated things, breaking them, unless you keep all the old training sets in the training set as well.
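To make the "shuffling breaks unrelated things" point concrete, here is a toy sketch. It is not a real neural net and has nothing to do with AlphaStar's actual training: it is a two-weight linear model, and the two "tasks" and all the numbers are made up. The only point is that two tasks sharing a weight interfere unless the old examples stay in the training set.

```python
# Toy illustration only: a two-weight linear model, not a real neural net.
# Task A and task B both use the first input, so they share the weight w[0].
TASK_A = [((1.0, 0.0), 1.0)]   # hypothetical "old" training set
TASK_B = [((1.0, 1.0), 0.0)]   # hypothetical "new" training set


def predict(w, x):
    return w[0] * x[0] + w[1] * x[1]


def train(w, data, epochs=500, lr=0.1):
    """Plain stochastic gradient descent on squared error."""
    w = list(w)
    for _ in range(epochs):
        for x, target in data:
            err = predict(w, x) - target
            w[0] -= lr * err * x[0]
            w[1] -= lr * err * x[1]
    return w


def loss(w, data):
    """Mean squared error of the model on a data set."""
    return sum((predict(w, x) - t) ** 2 for x, t in data) / len(data)


w_a = train([0.0, 0.0], TASK_A)        # learn the old task
w_seq = train(w_a, TASK_B)             # then train only on the new task
w_mix = train(w_a, TASK_A + TASK_B)    # or keep the old examples in the set

print("old-task loss after A only:   ", round(loss(w_a, TASK_A), 4))
print("old-task loss after B only:   ", round(loss(w_seq, TASK_A), 4))
print("old-task loss after A+B mixed:", round(loss(w_mix, TASK_A), 4))
```

Training on B alone drags the shared weight away from what A needed, so the old-task loss goes back up. Keeping A's examples in the mix lets the model find weights that satisfy both.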

You can force it to keep the connections static, but then it’s harder for it to learn. You can add new and separate sets of nodes, but this causes its own issues, not the least of which is that you’d end up with a hard coded set of nets, mostly.

They don’t even have that. That was the point. You can’t tell the neural net to preserve any specific notion when shuffling weights because no one knows how that notion gets represented in the net. So you can’t look at it to find out where its Dark Templar handling is to make sure that it doesn’t get altered when you add the Zerg Overlord handling, and neither can it. That’s one of its advantages (you don’t have to explicitly code or have it explicitly learn each proposition/idea), but it’s also one of its disadvantages if you really, really want to know how it came to its conclusion or want to make sure that you don’t break something that’s already working.

Right. In the gestalt process, don’t try to understand what the nets are doing- just have a node that gets reinforced solely based on how well it identifies the current matchup, and let that node decide how to balance the nine subagents that were separately trained on each matchup.

That’s somewhere in the range of [10,10!] times as complex as AlphaStar was in this exhibition; I’m inclined to think it’s close to the lower bound of that estimate but I’m not sure the upper bound is wrong.

To give you an idea, what you suggest is about as easy as creating a brain that’s good at math *and* football by cutting up the brains of a mathematician and a footballer, putting them together, and throwing out the duplicate neurons.

The problem is that neural networks are houses of cards. You can make them completely useless by changing any individual component, for no predictable reasons.

NNs are nowhere near modular enough to be plugged together. The intermediate layers are completely opaque to humans or other neural networks, even ones evolved on the same task, even the same neural network a few generations later.

Take two copies of the same person, have one attend a math class and the other attend a football practice, and then compare the exact states of their neurons afterward.

That is probably easier to reconcile; I don’t know, because the states of human neurons are probably not discrete at all, while the states of computer AI networks are discrete in large enough quanta to be contained in RAM.

The AI trains by playing against itself, so most likely training a Protoss+Zerg AI would involve randomizing the AI’s race so that it sometimes has to play as Protoss and sometimes has to play as Zerg. (You’d want to do this even if you were training it on a single race, because it won’t learn PvZ well if the Zerg AI is a total chump. It has to learn both races equally well to train itself.)

You’d still have to cope with the two of them sharing space in the neural network, but that sounds like the sort of problem that can easily be solved by throwing hardware at it. If Zerg-specific strategies end up borrowing 10% of network that was being used for Protoss, but a 90% Protoss AI is still miles above any human player, then it doesn’t matter.

Oh yes, excellent point.

It’s also odd that the article linked talks about the AI learning spam-clicks from humans. The rest of the analysis seemed right, but that didn’t make sense if it isn’t seeded with human games at all. (I don’t know if that’s true in this case, but it is how DeepMind worked before, AIUI.)

Training an AI ex nihilo is going to take so much longer than seeding it with very basic data.

But I wouldn’t have seeded it with pro players; I’d have seeded it with bronze league players: Good enough that they know how to interact with the game, and not so inculturated that they never do things that aren’t in the meta.

It’d just be easier, though, to train them as completely separate nets and then switch to the right one when the players are selected. That way you never risk breaking some basic Protoss functionality while fixing the Zerg functionality, because the neural net, when adjusting its weights and learning, has no idea what functionality it’s impacting otherwise (semantics are not stored in a neural net generally, which is one of its advantages for pattern matching).

And in the end you have nine frameworks and a way of picking one of them to be active. That can also be made as a neural net, and trained against itself in random v random.
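As a rough sketch of the "nine frameworks plus a picker" layout: the agents below are stubs standing in for separately trained nets, and `make_stub_agent` and `pick_agent` are made-up names. This shows only the hard switch for when the races are known up front. For random v random, a learned matchup classifier would have to take the place of the dictionary lookup.

```python
from itertools import product

RACES = ("Protoss", "Terran", "Zerg")


def make_stub_agent(own, enemy):
    """Stand-in for a separately trained net; returns a labelled action."""
    def act(observation):
        return f"{own}v{enemy} policy acting on {observation}"
    return act


# One agent per (own race, enemy race) pair: 3 x 3 = 9 in total.
AGENTS = {(own, enemy): make_stub_agent(own, enemy)
          for own, enemy in product(RACES, RACES)}


def pick_agent(own_race, enemy_race):
    """Hard switch: exactly one sub-agent is active for the whole match."""
    return AGENTS[(own_race, enemy_race)]


agent = pick_agent("Protoss", "Zerg")
print(len(AGENTS))
print(agent("frame 0"))
```

Because each sub-agent keeps its own weights, retraining one of them cannot disturb the other eight. The cost is that nothing learned in one matchup is shared with the others.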

> They’re great at learning from a training set, but have always had one flaw that I don’t know if they’ve corrected and which is important for the fact that they only can play Protoss vs Protoss: because neural nets don’t store semantic information (meaning) anywhere in the system, any attempt to learn something new runs the risk of having it “forget” things that it had already learned, and things that are unrelated to the new thing it’s trying to learn.

That’s not as much of a concern as you might think with modern, deep neural networks.

The sheer size of such a network can be huge. All I can see offhand is that AlphaStar can run (after being trained) on a single GPU, but that only limits the network size to a few gigabytes, which is more than enough to learn a Starcraft strategy or seven.

In fact, the state of the art in machine learning is exactly the opposite of what you suggest: transfer learning takes a network trained on one task (say cat vs dog classification) and adapts it to another (say emotion recognition from faces). Such learning is often faster than training a new network from scratch, and it’s used as a way to bootstrap an effective model when the “real” task has a limited training set.

In the case of Starcraft, the opportunities for transfer learning are obvious. A network trained on a Protoss vs Protoss matchup has already (for example) learned that clicks move selected units, and that base-level knowledge transfers immediately to other matchups.

Adding more nodes isn’t necessarily a good solution, though, because the best way to work around this problem is to keep the paths as separate as possible, but one of the benefits of neural nets is to re-use the connections without having to worry about the semantics or even actually knowing the semantics, so you don’t want to end up adding nodes and nodes until you have totally separate networks for Protoss vs Protoss and Protoss vs Zerg and so on.

As for transfer learning, that can work to ramp up a new neural net focusing on the new situation, but the question is if you could take that new neural net and get it to perform as well with the original problem. My comment is aimed at the fact that in general you probably can’t, as without training it on the old data set as well it will reshuffle some weights that break basic functionality from its source net.

From the column:

If the intent is to find out about the quality of the AI, I would like to limit the AI to the average APM of its opponent. If it constantly loses, it’s apparently not really adept at coming up with good strategies to combat the opponent, thus inferior to the opponent’s intellect at the level of action. If it constantly wins, I’d like to ramp it down to the point where the opponent manages to have a chance at beating it.

The difference between the human’s and the AI’s APM could be taken as kind of a measure of the AI’s comparative efficiency.

I think the quality of the AI can only be measured under a simulation of equal physical speed.

I think back to many Warcraft matches I played in my time, where I had the perfect strategy to counter a friend’s build, hero choice and known strategy, but he brute forced his victory through superior APM. I definitely agree he was the better player in general, but his victories usually told less about his strategic acumen than his greater reflexes.

Back in the day, when I was a university student and terabytes were the next big thing in read-only memory, I was taught that computers were really good at doing repetitive and routine actions. It was why they were so good for running robots on an assembly line. I think we’ve learned how to get a lot out of that ability, so that computers can fake doing non-repetitive and non-routine actions, but at ground level, computers are still upjumped calculators. The APM issue is, therefore, always going to exist in measuring human vs. computer ability in a game like Starcraft.

Yes, of course the issue will always exist, unless the AI is programmed with some kind of artificial limiter to determine it doesn’t perform more than x actions per interval y. I think basing a comparison of intelligence or system mastery on a scenario where one competitor has an unrelated advantage is a bad measure of intelligence. Also, I believe writing a bit of code to limit the machine’s APM or even reaction speed (issues for human players due to the physical limitations of giving commands manually) should not be too difficult.
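For what it's worth, a limiter of the "no more than x actions per interval y" kind could look something like the sketch below. The class name, the 5-per-second budget and the integer-millisecond timestamps are all assumptions for illustration. None of it is taken from how AlphaStar was actually throttled.

```python
from collections import deque


class ActionLimiter:
    """Allow at most `max_actions` within any `window_ms`-millisecond span."""

    def __init__(self, max_actions, window_ms):
        self.max_actions = max_actions
        self.window_ms = window_ms
        self._times = deque()  # timestamps of recently allowed actions

    def try_act(self, now_ms):
        """Return True and record the action if the budget allows it."""
        # Drop timestamps that have aged out of the window.
        while self._times and now_ms - self._times[0] >= self.window_ms:
            self._times.popleft()
        if len(self._times) >= self.max_actions:
            return False
        self._times.append(now_ms)
        return True


# Hypothetical budget: 5 actions per second (300 APM), enforced per second.
limiter = ActionLimiter(max_actions=5, window_ms=1000)

# The agent tries to act every 40 ms (25 per second) for two seconds.
attempts = [i * 40 for i in range(50)]
allowed = [t for t in attempts if limiter.try_act(t)]
print(len(allowed))  # 10 of the 50 attempts get through
```

The window length matters as much as the count. The same 300 APM budget enforced over a full minute would let all 50 of those attempts through in the first two seconds.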

If I perform a kind of interval task where I have to run a mile, then solve a puzzle, run another mile, do some more puzzles etc., a faster runner will have an obvious advantage, having more time at his disposal for reasons that have nothing to do with his ability to solve puzzles. A faster runner might be significantly worse at solving puzzles than another person, but still, by this standard, be judged to be the superior puzzle solver as he might have solved more puzzles in the time available, because the time the other person needs to perform an unrelated task (running) is adding up to the overall score.

If they were actually trying to make an AI that won strategically, they’d have harsher penalties for anything faster than what humans are capable of. They could even scale the penalties higher, the higher the click-speed is, above the human bell-curve. Reading the linked article (and especially the bell-curve graph at the end), it’s pretty obvious that the AI team is trying to hide the fact that their AIs win with micro, because they couldn’t train them to be smarter. If the AI team had openly said that they had some super-human things left in their AIs, I would view it as an interesting improvement in the field; Their dishonesty makes them untrustworthy to me now.
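One way to read "scale the penalties higher, the higher the click-speed is" is a penalty that grows with the square of the excess over some human ceiling. This is only a sketch of that idea: the 400 APM ceiling, the scale factor and the function names are invented, and nothing here reflects the reward AlphaStar was really trained on.

```python
def speed_penalty(apm, human_cap=400.0, scale=0.001):
    """Quadratic penalty on any APM above an assumed human ceiling."""
    excess = max(0.0, apm - human_cap)
    return scale * excess ** 2


def shaped_reward(win_reward, apm):
    """Outcome reward minus the speed penalty (hypothetical shaping)."""
    return win_reward - speed_penalty(apm)


for apm in (300, 500, 1500):
    print(apm, speed_penalty(apm))
```

With a quadratic term, going slightly over the ceiling costs little, while a 1500 APM burst costs over a hundred times more than a 500 APM one.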

I don’t think they are lying; I think that the developers of AlphaStar actually think that they limited it to human levels of clicking.

The superior micro of AlphaStar is partially because the APM limiter that they set doesn’t measure effective actions, partially because AlphaStar has less input lag than a human who has to wait for a vertical refresh to see new information, partially because AlphaStar has less output lag than a person who needs to operate a motor nerve into a mouse and keyboard, partially because AlphaStar can multitask better, and mostly because AlphaStar has a significant information advantage from knowing the units’ orders rather than merely their location and current sprite, allowing AlphaStar to retreat the unit which is being focused even before that unit starts to take damage.

Those are all correct points, but you missed a large advantage the AI had, which is that the APM was limited on average, and not limited in bursts. This graph is the one I was referring to. As Aleksi Pietikäinen points out, the curve for the AI goes a long way to the right, into the 1500+ APM ranges. (That’s 25+ actions per second. Go ahead and try to click your mouse that fast, let alone move it with any level of control.) Sure, on average (the big hump of the curve), the AI was limited to sub-human performance, but the bursts of rapid-fire control (the right-hand tail of the curve) are what allowed it to win an engagement uphill, with poor unit composition, in a choke-point! Pro players viewed these bursts as the deciding factor in the victories, and the researchers not making them prominent would be slightly dishonest. To then claim proudly that the AI was limited to human performance levels is what pushes it into outright cheatery.
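The gap between "limited on average" and "limited in bursts" is easy to show with invented numbers. The timestamps below are made up (a steady trickle of actions plus a single one-second burst) and are not taken from any AlphaStar replay. `mean_apm` and `peak_apm` are hypothetical helper names.

```python
def mean_apm(timestamps_ms, duration_ms):
    """Actions divided by elapsed time, scaled to one minute."""
    return len(timestamps_ms) * 60_000 / duration_ms


def peak_apm(timestamps_ms, window_ms=1000):
    """Highest APM seen in any window shorter than `window_ms`."""
    best = 0
    start = 0
    for end in range(len(timestamps_ms)):
        # Slide the left edge until the window spans less than window_ms.
        while timestamps_ms[end] - timestamps_ms[start] >= window_ms:
            start += 1
        best = max(best, end - start + 1)
    return best * 60_000 / window_ms


# Made-up game: one minute long, two actions per second throughout...
steady = [i * 500 for i in range(120)]
# ...plus one burst of 25 actions in a single second, half-way through.
burst = [30_000 + i * 40 for i in range(25)]
actions = sorted(steady + burst)

print("mean APM:", mean_apm(actions, 60_000))
print("peak APM:", peak_apm(actions))
```

The mean for this made-up minute comes out at 145 APM, which looks unremarkable, while the one-second peak is above 1500. A cap on the first number says nothing about the second.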

Yep. The Tool-Assisted Speedrun community seems to largely accept that a human cannot deliver three consecutive one-frame inputs, even once; human theory TASes will use tricks that require two, but not three.

Clicking 25 times in 60 frames requires a ‘mousedown mouseup’ followed by (not concurrent with) a traverse ending (but optionally overlapping) a ‘mousedown mouseup’ within two frames.

Maybe with practice a human could perform that specific maneuver once or twice in a row, if they practiced that exact maneuver. But that wouldn’t help them move the unit that was being focused back before it was attacked, because humans only get information about the location and sprite of enemy units, while AlphaStar reacted to their current orders, moving units back when they were targeted, not waiting until they were attacked.

Another issue is that APM isn’t actually that easy to pin down, as far as what’s “meaningful” and what isn’t. For example, Zerg players tend to have higher APM spikes because of how their production works–all their units spawn simultaneously instead of forming a production queue like other races, so it isn’t unusual for a late game pro Zerg to hit 800-900 APM from repeated production commands.

An input that results in “not enough minerals” is not an action. If APM is counting spamming the production command early, APM is measuring something entirely irrelevant to quality play.

Also, since pro players DO spam production commands before they have enough minerals, Starcraft should natively support a meta-queue system of ‘as soon as you have enough minerals/gas/supply, this action will be executed’.

It’s not “not enough minerals” spam, it’s the way Zerg production works. Zerg produce units from larvae, of which there can be (I think) a dozen per base. I’ve seen pro games where the player literally has hundreds of larvae in wait so when/if they lose their army, they can immediately replace it. Part of Zerg mechanics is “remaxxing” with this mechanic.

When it comes time to remax, it isn’t unusual (it’s unavoidable, really) for APM to spike.

After looking at claims about what APM actually measures, probably. My naive process is that ‘select a group of larvae’ is one or possibly three actions (three being ‘click, move, unclick’). Apparently it counts as one action per larva selected. Presumably the one keystroke that gives the command to spawn is also one command each.

That’s a particularly bad way to compare a human with an AI; a human that selects an entire group and gives it an attack-move order generates some number of actions- an AI that selects each member of the group and targets it independently generates the same number of actions.

When comparing humans to each other, using a metric that is equally bad for both of them and also not targeted for specific improvement is only meh. But it’s several fatal errors in judgement to compare human APM to AI APM. Not because AI skips many of the limitations of the human’s I/O pipeline, but because the human players’ actions are being overcounted.

The trouble is, at this level of play, I’m not sure that there actually *is* a strategy where you can counter an enemy’s build so well that you’ll win even with inferior APM. At low levels, it’s easy to say “Oh, he has Stalkers, I’ll build Immortals to counter.” But at high levels, the moves and counter-moves have been mined out a lot more. “He has Stalkers, but he knows Stalkers are weak against Immortals so he also brought Phoenixes, and he also knows I know he’s bringing Phoenixes so he brought…” I would be very surprised if the AI managed to find some undiscovered strategy that was so good that you could run it at half the APM and still win.

Also, knowing how good your execution is informs your strategic choices. If you’re confident in your Stalker micro, then you’re more willing to take a fight where you both have equal numbers of Stalkers because you think you can win. Is that superior execution – you didn’t win on the strategic scale so you fell back on superior micro? Or is it superior strategic judgement – you brought exactly the strength you needed to win, and used the resources saved for something else? Or both?

(Certain strategies, like using Banshees to kite Marines, can be flat-out impossible without good enough execution)

This is especially a problem for AIs because they don’t care about winning by a wide margin, they just care about winning. If they predict that they’ll end up with 1 unit and you’ll end up with 0, that’s a win for them.

Every time someone makes a huge deal over AI accomplishing something–which, honestly, is almost always couched in the same sort of restrictions the AlphaStar AI had here–I roll my eyes.

I’m literally spending 8 hours a day every day trying to figure out how to program a computer to walk like a human without spending 20 minutes grinding numbers for each step. On even ground. Without having to avoid obstacles or carry an external load.

I think people just have this idea that once we get computers to do all these things we’ll load them up with all the software and they’ll be able to do anything, but that’s really not how it works.

The more I think about this, the more I want to go on a mile-long rant.

Link to an external blog post? The comments here get really difficult to read if people post long things. :)

Yeah, I don’t really have a blog…

Use pastebin or github gists.

WordPress is still free for basic users, and you could also make a post somewhere on Reddit.

Lesserwrong.com will let you publish, but you’ll get comments from a section of AI researchers that generally don’t agree with the mainstream AI research.

Better solution – just give your robot tank tracks instead of legs! :P

Since our “robot” is actually live human subjects….actually, now that I think of it, that’s a great idea! You’re hired! XD

What is your work? From the limited info, it sounds like you’ve got implants for paraplegic people, that operates with human control, to get them walking again. (This sounds super interesting, either way!)

Not implants, surface electrodes, combined with a robotic exoskeleton to make up for the fact that stimulating muscles with surface electrodes is not an ideal way to make muscles move.

This is our lab page

The upshot of all this is it’s really fucking hard to combine multiple actuators into a balanced gait on a live system, even before you start accounting for uncertainties. And yet, animals do it–as optimally as a computer system that spends hundreds of computing hours optimizing for a single step–all without even thinking.

I don’t think we’re in any danger of machines taking over any too soon.

Wait, but then what’s the deal with this and this?

There’s a pretty massive difference between what works in simulation and what works outside of simulation.

Also, there’s still a pretty massive mountain of calculations that goes into building those models.

Beyond that, I need to read the papers to give you a better critique.

You’ll note that even in those research papers / videos, the models also fall over quite a few times. ;)

Yeah, but only because the researchers keep throwing cubes in their faces :D

I’m not even good enough at Starcraft to qualify as “incompetent”, but I kind of feel like Starcraft and DotA are exactly the wrong sort of games for estimating how good an AI player is because of the ridiculous micro.

Starcraft doesn’t really have unit formations, which seems bizarre to me given that I came to it from Homeworld and Myth, in which using formations properly is vital to even have a chance. Meanwhile DotA is using mouse clicks to move a character in third person. Both of those are highly inefficient control schemes which strongly reward ludicrous APM just to position units.

I guess that’s precisely why these games in particular were chosen for these showcase events, though. Better to cheat and win, ultimately, especially if you consider The Golden Rule.

I wonder how AIs like these would do at games like the Total War series, or a 4x game.

Seconded

Do the formations in those games get some kind of statistical/numerical bonus, besides just allowing players to control large numbers of units? If not, I suspect a micro-heavy AI would still gain an advantage.

Not as far as I know. Bear in mind I wasn’t any good at Homeworld or Myth either! One example of how formations worked in Myth that has stuck with me is the level where you have a bunch of claymore-wielding berserkers going after a guy with some sort of mortar type damage. If you allowed them to get bunched up you lost, but there was a “spread out” formation type that allowed you to keep enough units alive to win. Units in Myth were able to maintain formation and facing while pathfinding, rather than having to be told where to go individually and then jiggled around to get them pointed in the right direction.

The only units in Myth that really needed micro-managing were the explosive-flinging Dwarves, because left to their own devices they’d kill themselves and everybody around them in minutes.

By comparison, Starcraft’s units love to turn into an undifferentiated scrum. Formations certainly aren’t a panacea against micro advantage but a) they cut down on APM needed to control multiple troops significantly, and b) the AI needs to understand strategy to use them effectively, beyond “keep an eye on special timers and send the injured units to the back”.

I don’t believe that any human player can sustain an EPM in the three digits for more than a couple of seconds.

For reference, an EPM of 600 is equivalent to a 100-120 WPM typing speed. Yes, people can reach that level of typing speed, but not while they are composing as well as typing.

But the real problem isn’t the fingers moving, it’s reaction time. There’s a hard limit of 7ms action time for humans: that’s the approximate time it takes a nerve impulse to travel ~3ft from the brain to the arm. It’s hard to estimate the latency of human eyes, but there are some limits there as well.

Consider someone trying to micro units perfectly: They have to wait for the enemy units to start their attack animation, then they have to wait (on average) 8 ms at 60 fps for their video card to vsync. Then they wait a minimum of 10 ms for the lcd screen’s input delay lag, 0 ms for light to reach their retina, and some amount of time that I can’t find a reference for to get a signal from the eyes to the brain proper; then there’s the decision processing. Once the human determines which units are about to be attacked and decides to move them back, they need to move the cursor over a unit, click, move the cursor over the destination, click (1 effective action).

While it is possible to do that blindfolded, it’s unreliable. An actual human is going to have the 7ms delay in motor impulse; suppose they get the mouse movement perfect and instant; they then need to wait 18ms for the display to update with the location of the cursor. Then they select the unit and move the cursor roughly to the destination and give the move command, adding another 7ms of latency plus the time to physically do those inputs.

Display hardware and motor nerves mean that a precision click has a minimum average of 25ms, and a general-location click has a minimum time of 7ms from the decision being made. If an effective action requires clicking on a specific unit and then clicking on a general area, it can happen no sooner than 32 ms /after the decision to make that action/.

But there’s more to micro than running away. AlphaStar also managed to micro attacks perfectly. Group-select isn’t an option when every unit needs to focus or run independently, so every effective attack action takes a precision click to select a unit and a precision click to select a target: 50ms of latency in the pipeline, all outside of the brain. If AlphaStar had to use a monitor for input and 3 feet or so of human muscle nerve as output, it would have a *hard limit* of 1200 effective actions per minute, unless it was literally clicking blindly.
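Spelled out, the arithmetic behind those 25 ms, 32 ms, 50 ms and 1200 figures is below. The inputs are simply the numbers claimed in these comments (7 ms nerve delay, 8 ms average vsync wait, 10 ms display lag), taken at face value and not independently checked. The constant names are mine.

```python
NERVE_MS = 7           # brain-to-arm motor delay, as claimed above
VSYNC_WAIT_MS = 8      # average wait for the next frame at 60 fps
DISPLAY_LAG_MS = 10    # monitor input lag

screen_ms = VSYNC_WAIT_MS + DISPLAY_LAG_MS     # time to see the cursor move
precision_click_ms = NERVE_MS + screen_ms      # click that needs visual feedback
general_click_ms = NERVE_MS                    # click on a rough area

retreat_ms = precision_click_ms + general_click_ms   # select unit, click area
attack_ms = 2 * precision_click_ms                   # select unit, click target

max_attack_epm = 60_000 / attack_ms
print(retreat_ms, attack_ms, max_attack_epm)  # 32 50 1200.0
```

Note that this counts only pipeline latency outside the brain. Any decision time or physical mouse travel would push the real ceiling lower.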

But I think AlphaStar had even more advantage than that. I think AlphaStar knows what the orders are of every unit it sees, not just the location and current sprite displayed by it. That is particularly important in micro, because it means that AlphaStar can start to retreat units *even before they are being fired upon*.

Actually, I think it’s totally possible, though I do agree there is a limit to how many meaningful actions can be taken over time. I just disagree about how high the limit is. When playing WC3, above mentioned friend and I analyzed our games post-match. Both of us had the philosophy to only act when it was necessary and I occasionally watched his matches when he played online and I didn’t want to, at the time. In our matches against each other, his APM (which, for both of us, usually would also qualify as EPM) stayed in the lower-hundreds almost constantly, spiking out to over 200 during combat. My own were substantially lower, but when I applied myself and tried to push him, I managed to perform at over 100 APM (I think, usually close to 130 or such). Considering my own APM/EPM are ridiculously low in comparison to most players in the field and I could always watch them outperforming me in meaningful actions, I’d assume the limit to what a human player can do lies substantially higher, I’d assume over 200 EPM, maybe close to 300?

A meaningful action doesn’t necessarily imply that it’s successful or well thought-out (in my understanding at least), just that it’s meant to be useful and in principle influential to the general flow of the game. A unit pulled back too late is still a meaningful action, even if the intended purpose was not achieved.

I agree that whether or not a command has the desired result does not determine if it is counted in EPM.

But asserting that a human is delivering two meaningful commands per second regularly throughout the game, but spike to only 3-4 during combat, is absurd.

It’s not that I don’t think that humans can focus and manage 4 commands per second, it’s that I don’t think they can maintain the concentration needed to manage 2-3 meaningful commands per second for long times.

I think what the APM or EPM tools actually measure aren’t ‘actions’, in the sense of issuing a command, but ‘interactions’: every mouse click or keypress, even those that don’t affect the competition.

When an AI takes video input and outputs in HID format, then I will agree that counting mouse clicks and keystrokes is a meaningful comparison to a human. But the tools used to measure APM simply have no meaning when applied to AlphaStar’s interactions.

According to YouTube comments, in Starcraft if you select 100 units, and de-select one, it counts as 99 actions for APM purposes, when it’s really only 2. That’s how TLO managed to break 3000 APM in his AlphaStar games. So APM is a really unreliable AI limitation.

Whelp. If that’s true, APM and EPM don’t measure anything significant, so I need to find a way to describe how an AI could be throttled to human levels.

And my first thought is to give it information about the state of the game once every 16ms, 10ms late, just like a gaming-quality flat screen monitor does. That’s not sufficient to bring it down to human level, but it removes an actual information advantage that AlphaStar used.
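A sketch of that "once every 16ms, 10ms late" feed, assuming a hypothetical engine that calls `push` every millisecond and an agent that calls `observe`. The `DelayedFeed` class and its method names are invented for illustration and are not part of any real StarCraft II interface.

```python
from collections import deque


class DelayedFeed:
    """Hands out game-state snapshots on a frame grid, a fixed lag behind."""

    def __init__(self, frame_ms=16, lag_ms=10):
        self.frame_ms = frame_ms
        self.lag_ms = lag_ms
        self._pending = deque()   # (visible_at_ms, snapshot), oldest first
        self._visible = None      # latest snapshot the agent may see

    def push(self, now_ms, snapshot):
        """Called by the (hypothetical) engine on every tick."""
        # Only sample the state on frame boundaries, like a display would.
        if now_ms % self.frame_ms == 0:
            self._pending.append((now_ms + self.lag_ms, snapshot))

    def observe(self, now_ms):
        """Return what the agent is allowed to see at time now_ms."""
        while self._pending and self._pending[0][0] <= now_ms:
            self._visible = self._pending.popleft()[1]
        return self._visible


feed = DelayedFeed()
seen = {}
for t in range(30):
    feed.push(t, f"state@{t}")
    seen[t] = feed.observe(t)

print(seen[9], seen[10], seen[25], seen[26])
```

Here the agent sees nothing until 10 ms in, then keeps seeing the frame-0 state until the frame-16 state becomes visible at 26 ms. That is the staleness a human looking at a monitor always plays with.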

According to youtube comments a lot of things. In this case it would be only 2 because the actions recorded would be 1 select units, and 2 deselect 1 of those units. It doesn’t count as 99 selections. In fact the math of your comment doesn’t make any sense, because its own internal logic would mean 100 selections then 99 selections or 199 APM (assuming you did it all in a minute).

Note: this isn’t an attack on you. If you don’t get how APM is calculated it might make sense. But APM is literally going through a replay and taking the number of actions taken and dividing by time. APM at any given moment is doing that over tiny time segments. Replays are created by recording exactly what actions players take, so you can’t cause APM spikes by doing a few simple actions. However, telling it to do the same action by holding down a button can skew it (see TLO in the article Shamus linked).

That’s what the comments were about I think, one hot key press being counted as many actions. (My numbers being wrong was from me half-assing a paraphrase.)

Winter’s setup in this video seems to have a live APM estimate, I don’t think he edited that in after.

I haven’t played Starcraft 2 myself, all my knowledge is watching other people.

Thorpe et al. say visual recognition of a complex image takes 150 ms, which seems within sensible limits for human reaction time.

Beyond that it gets a lot more complex – different bits of the visual system recognise different aspects of the image over different time scales.

I guess the human maximum of one action per 250 ms therefore has a lot to do with the fact that a Starcraft pro may perform many actions on the basis of a single frame worth of information i.e. this is the speed at which they can act rather than the speed at which they can react.

Yeah, I don’t think that there is ‘complex image’ processing involved. I wouldn’t be surprised if pro StarCraft players had much better image recognition of StarCraft images than StarCraft speedrunners, and if both groups had a qualitative difference from casual players.

In this context “complex” means that the human test subject has to recognise having seen a particular class of thing, as opposed to visual latency studies which use flashes of light.

Apparently AlphaStar is getting unit position using an API, which is cheating even more due to monitor latency, and, assuming that Starcraft is coded properly and visual refresh isn’t directly tied to engine tick rate, perhaps cheating even more than that.

It’s multiplayer and uses video cards- visual refresh CAN’T be tied to engine tick rate, because it has to be the same for all players and the video pipeline doesn’t let things like vertical refresh be communicated back to the software layer easily.

That’s not correct, see e.g. Dark Souls.

Looking at the description of how the 60 FPS mod works, I stand behind my claim. Particularly in online play, the game speed has to be the same for everyone.

Reference: Nwks

I think the fact that you can – even occasionally – overpower bad strategy with fast micro in this game just makes it a terrible case for testing strategy AI. If you don’t hobble the machine’s micro, then it will clearly outperform a human in that area, and a sensible AI _should_ learn to use strategies that will overwhelm its opponent through micro. If you do hobble the machine’s micro, you’re not testing its ability to learn to play the game, you’re testing its ability to learn to play the game _like a human_.

There’s a similar but reversed argument to be made about the viewing window. Given that the AI has the full map available at all times, and assuming that they trained it up playing against humans, I’d say that it _should_ have learned to flip around the map making moves in many places at once, because this is something that it can do and its opponent can’t. (If they didn’t train it on humans then what on earth is the point of testing it by playing against humans? That’s like designing a really good washing machine and then testing it in drag races…) The fact that it didn’t use that obvious weakness against its opponent would indicate to me that it didn’t explore much of the available strategy space.

They’re trying to see if it’s teaching itself practical lessons, or just nonsense.

…and for hype purposes.

I disagree with your comment at the end that “none of this should be taken as a criticism of the AlphaStar team”. When you pit an AI against human beings, you have an obligation to make that match-up as even as possible. It’s egregious that the team didn’t play against only Protoss specialists, and that they were allowed to use multiple setups against players who hadn’t had the chance to see them in action. It wasn’t remotely an even match, even though it could have been easily fixed, and this makes a mockery of the idea that this experiment proved anything about the capabilities of AI.

There’s nothing unreasonable about limiting the computer to acting as fast as the fastest human player, by the way. This is supposed to be a battle of different strategies, a test of one’s mind, so any physical differences between the players ought to be minimized if not outright eliminated. This bothered me so much when the IBM program Watson was playing Jeopardy against human opponents, and it had a huge advantage just because it could press the buzzer near-instantaneously.

On another note, I think people are reluctant to admit that a computer winning a virtual game doesn’t have anything to do with actual intelligence. “Learning” algorithms are still just math, not that different from what mechanical calculators were doing centuries ago. The difference is in how the results of those calculations are translated into further calculations and the final graphical output, not in the essential quality of what the machine is doing. Computers don’t “think”, they don’t “learn”, and when one beats a human being at a game it hasn’t “outsmarted” the human race. This is just anthropomorphizing computers in the same way we tend to anthropomorphize everything, but a lot of otherwise intelligent people kid themselves this way.

A lot of what you call egregious is your own misunderstanding of both what happened and the game itself.

I am a Diamond level player and if TLO played against me as Protoss he would easily defeat me. TLO is good enough at the game that his off race is still Grandmaster level, that is the top 200 accounts per server. That was a good place to start.

Using 5 different agents doesn’t really matter. A good player would switch up a build order and strategy game to game anyway. Ultimately MaNa won by abusing a logic loop, and the AIs have no ability to collectively learn anything about his play, so it could almost be considered an advantage for the human player.

The APM restriction is tricky, I’d encourage you to read the article Shamus linked at the end.

Even if this starcraft AI isn’t “thinking”, it’s a step along the road to having AI complex enough that it should be considered as a thinking entity.

It’s fine to start at not-the-top and work your way up as long as you aren’t already claiming you’re the strongest player since sliced bread, which I don’t think they were. They’re programmers who are super excited about what their program can do, and they misjudged some of the constraints they needed to have a fair game. (TLO had twice the APM of AlphaStar, but as folks have said, a lot of human APM is empty carbs.)

Pretty much this.

They weren’t coming out saying “AlphaStar is the new best PvP player” or anything like that. They were saying “Holy shit we beat TLO who on a bad day would destroy everyone in the office, then we went ahead and 5-0’d Mana who came 2nd in a WCS last year”.

Having overpowered micro might have been how it got its victories, but it needed to learn how to play Starcraft first and that was the real achievement.

After watching all the games I feel like they could drop its APM to a quarter of what it was running at and still generally beat all non-pro Starcraft players, something I thought pretty unlikely a month ago.

AlphaStar has plenty of growing to do, but as a proof of concept it certainly showed off big time.

If you hadn’t mentioned your own AI experience I probably wouldn’t have noticed, but…

A* is pronounced “A Star”. Alpha is the Greek letter A. Ergo, AlphaStar has the same name as A*.

To be fair: The AI in Age Of Empires is also perfectly accurate and can operate at ludicrous speed, but even an amateur can beat it. And from some of the games I saw, in my humble opinion, the micro was not really a factor. It somehow outplayed them while playing like crap. Though in other games there were some engagements that obviously came down to godlike micro.

Agreed, its god-tier micro allowed it to go for some strategies humans couldn’t keep up with, but outside of those engagements it still looked like a pro-player.

Neutral question: is the Starcraft AI winning because it has superhuman micro ability any different than a traditional chess AI winning because it has superhuman tree-searching ability?

Traditionally, chess AIs aren’t really programmed with strategy – unlike a human that relies on heuristics and pattern recognition to find moves, they just look at a huge number of potential moves, obviously way beyond what a human has time to consider.

It’d be a little like a match between Kasparov (chess Grandmaster) and myself (chess dabbler), where we’re given a day to make each move, but I’m playing from inside a Hyperbolic Time Chamber (where a day outside is a whole year inside) – I’d probably have a good chance of beating Kasparov under those circumstances, just because of the sheer number of positions and the amount of analysis I could do with a whole year of dedicated effort. CPU chess is sort of the same way: just due to the differing “clock speed” between a human and a CPU.

In both cases, the AI isn’t really playing like a human. Though, I suppose a difference between the two cases is that the chess AI’s play is indistinguishable from a human’s play (in character, if not in quality), while the Starcraft AI plays in a visibly, distinctly different way than a human does.

—

I’m not really defending the Starcraft AI or criticizing chess AIs – (I don’t really have anything as convenient as a “point” to this comment, admittedly) – I just see the starcraft AI as just another point in a long running conversation on what the goals and purposes of AI really are.

If the goal was to make a Starcraft AI that could beat a human, it wouldn’t be. But the goal of the project is to make an AI that can teach itself good principles. Inhuman micro makes that hard to determine, because it can make up for a lot of mistakes.

Stockfish wins by looking really far ahead, because the goal of Stockfish is to beat humans at Chess. When AlphaZero played Stockfish in Chess, AlphaZero (supposedly) spent significantly less time looking ahead through the trees, because the goal was to make it teach itself well enough to not have to brute-force a solution.

No. Chess is known to be a solvable game- it’s possible to develop a perfect algorithm that, when it plays as the side with the game-theoretic advantage, will always win. The perfect tree-searching AI announces “Mate in X” (or possibly “Stalemate in X”) at the start of every move, “X” goes down by at least one every move, (more if the opponent makes an imperfect move), and always delivers checkmate or stalemate before X reaches zero. It can do this with zero ability to handle arbitrary legal board positions; it only has to know how to play positions that it reaches in perfect play.

To develop an algorithm that evaluates arbitrary legal positions is different.

Likewise, an algorithm that can defeat a human through godlike micro of the units it has chosen to build is one thing; an algorithm that can perform godlike micro of arbitrary units is very different.
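The “Mate in X” idea above can be sketched on a game small enough to search completely. This is a toy illustration, not chess and not anything AlphaStar or a real chess engine does: the game (take 1, 2, or 3 stones from a pile, whoever takes the last stone wins), the `solve` function, and its return format are all assumptions made up for the example. It shows what a perfect tree search gives you: for every position, who wins and in how many moves under perfect play.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # hypothetical rule: a player may take 1, 2, or 3 stones


def _preference(outcome):
    """Rank outcomes: wins beat losses, fast wins beat slow wins,
    and slow losses beat fast losses."""
    result, plies = outcome
    return (result, -plies if result > 0 else plies)


@lru_cache(maxsize=None)
def solve(stones):
    """Exhaustively search the game tree from this pile size.

    Returns (result, plies) for the player to move: result is +1 for a
    forced win or -1 for a forced loss, and plies is the number of moves
    until the game ends when both sides play perfectly.
    """
    if stones == 0:
        # The previous player took the last stone, so the player to move lost.
        return (-1, 0)
    best = None
    for take in MOVES:
        if take > stones:
            continue
        result, plies = solve(stones - take)
        # The opponent's outcome is the opposite of ours, one move later.
        candidate = (-result, plies + 1)
        if best is None or _preference(candidate) > _preference(best):
            best = candidate
    return best


for pile in (4, 8, 10):
    result, plies = solve(pile)
    verdict = "win" if result > 0 else "loss"
    print(f"{pile} stones: forced {verdict} in {plies} moves")
```

A solver like this never needs judgment about a position, because it reads every line to the end. Chess is far too large to search this way in practice, which is why evaluating arbitrary positions is a different problem.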

The thing that is getting largely lost here, is that AlphaStar had to get itself into good situations in order to win with its overpowered micro.

We can say ‘attacking up a ramp is stupid, therefore AlphaStar is acting stupid’, but if it’s convinced it can win because of its micro it’s a valid strategy.

Throughout the games you saw AlphaStar pulling back when it was at a disadvantage, working to surround TLO and Mana before attacking, and building its economy and forces at the same rate as pro players.

That is the impressive thing here, that outside of engagements it looked like a good human Starcraft player.

I would like to see the AI try out a less micro-intensive game, like Total Annihilation. TA seems to have more variables in any given engagement, and units can maneuver on their own without player interference. Starcraft has always struck me as a very binary and rigid simulation by comparison. Maybe that’s what made it so good for e-sports, dunno.

I’d rather see a Civilization or other turn-based game. Master of Orion 2 would be an interesting choice, given the depth of choices related to ship design with strategic implications.

At the very least, the AI would find the most OP ship designs; but the best ones I’ve found (shield and armor piercing) have somewhat hard counters (unpierceable shields and armor). Since you sacrifice throw weight for the shield-piercing mod and Achilles targeting unit as well as hard shields and heavy armor, you are at a disadvantage if you paid to break a defense that they paid to be unbreakable, or if you paid to make a defense unbreakable if they didn’t pay to break it.

A turn-based game would certainly eliminate the APM advantage. I do wonder about the generally most effective ship designs, but that’s so dependent on available tech. I mean, my favorites would be death rays + teleporters, and a few stellar converters, but that’s endgame content.

And a 4X is also very asymmetrical and dependent on the universe generation.

Universe generation can be modded to be symmetrical, if starting location advantage becomes important.

And yes- dependence on available tech and miniaturization bonuses makes ship designs several layers deep in the strategic layer, since you need to decide which techs to research if you aren’t creative or uncreative, and even if you have all the tech there’s still a lead time on building a ship.