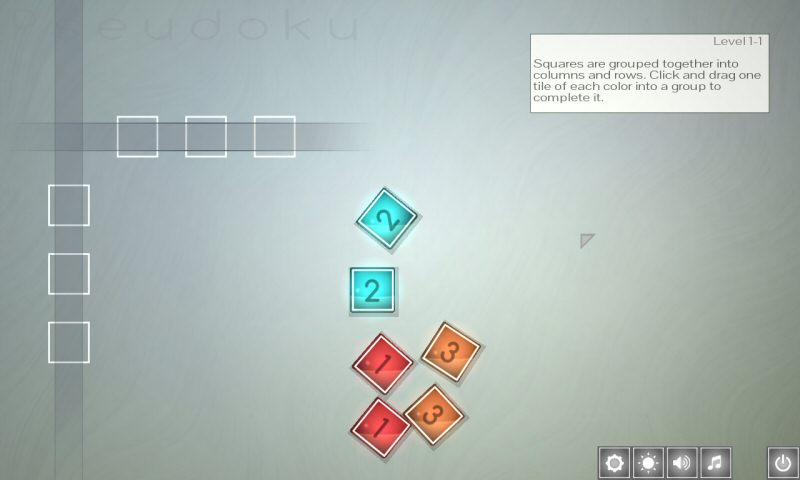

Pseudoku is not going well. Part of the problem is that I’m busy with other stuff and I’m only working on this for a few hours a week. The more serious problem is that I’m still having strange compatibility problems that have no business cropping up in a project so simple. I’d be upset about this, but it’s not really hurting me right now. The project is stalled on the other end – the business end. I couldn’t possibly explain the whole stupid story here, but the short version is:

The problem isn’t that you can’t solve this. The problem is that there are a hundred apparent solutions. (Different banks. Different forms. Pay someone to do this for us.) Finding the one solution that will waste the least time and money is the problem.

Part of the confusion is that it’s really hard to parse some of these forms. Usually you can tell when you’ve got the wrong form. If you’re a single person and you’re filling out a form that asks about your spouse and children, then you can be reasonably confident you’re barking up the wrong tree and you need a different form. But in our case ALL of the forms feel wrong. Everything related to doing business was designed in the middle of the last century, where opening a business means you plan to do business locally. Everyone assumes I want to sell pancakes on main street. There’s no concept of a “one-man international business”. Sometimes it’s not possible to truthfully and accurately fill out a form because it asks questions that don’t make sense.

Imagine you’re opening a furniture store, but the business form has a REQUIRED field that wants to know the license plate numbers of the cars you’ll be using to deliver the pizzas. That’s the level of incoherent stupidity we’re dealing with right now.

In any event, the technology problems in Pseudoku don’t matter because the project is stalled by bureaucracy.

I got a cheap ($90) minimalist Win7 box for testing Pseudoku. It’s an HP with integrated graphics. It’s got a virgin install of Win7 and no additional funny business. Let’s put Pseudoku on it and see how it runs…

Here we Go Again

Like last time, the program instantly crashes when it tries to use any OpenGL calls invented after 1994.

This makes no sense. I know integrated graphics systems are wonky, but this stuff really should be available, even on a barebones system like this. I fiddle around with different ways of accessing those OpenGL extensions, and I always get the same result.

Is this really a thing? Are there systems out there that support DirectX as it existed in 2010, but are stuck with the 1994 version of OpenGL? If not, what could I be doing wrong here? If so, why haven’t I heard about it and written a long rant about it yet? That’s kind of my thing.

Like I said in a previous entry: I’ve never done deployment so I never had to worry about goofy edge cases and obscure hardware setups. In any case, I am tired of slamming my head into this problem. It’s a dumb waste of time.

Looking at my code, do I really need the OpenGL extensions? This isn’t like Good Robot, where I needed to push potentially tens of thousands of polygons at 60FPS[2]. Let’s see if I can strip the program down to the base OpenGL calls.

As it turns out, I’m using exactly one OpenGL extension. Everything else can fall back to vanilla OpenGL. The only thing that requires the modern stuff is the…

Texture Atlas

The problem you’re trying to solve is that texture-switching takes time, and excess texture-switching can kill performance. Imagine the graphics card is a painter. I tell him to paint a blue line. Then I tell him to paint a green line. But since his brush is loaded with blue paint, he has to lower the brush, clean off the old paint, and load it up with the new color. Then I ask him to paint another blue line and he has to go through all of that again.

You can mitigate this by sorting all the brush strokes ahead of time. Draw all of the blue lines, then all of the green ones, etc. The problem is that now you’re doing sorting on the CPU. If you’re eating up cycles on your processor to save cycles on your graphics card, then that’s a red flag that you might be approaching the problem the wrong way around. Worse, this creates a moving bottleneck. A slow computer with a great graphics card will exhibit problems you don’t see on a fast computer with a middling graphics card.
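To make the cost of texture-switching concrete, here is a minimal sketch that counts how often the "brush" has to be reloaded for one frame, before and after sorting. The frame contents and the `count_texture_binds` helper are made up for illustration; a real renderer would issue a bind call where this code increments a counter.

```python
def count_texture_binds(draw_calls):
    """Count how many times the bound texture changes while drawing in order."""
    binds = 0
    current = None
    for texture, _sprite in draw_calls:
        if texture != current:
            binds += 1          # the painter has to clean and reload the brush
            current = texture
    return binds

# Hypothetical frame: (texture name, sprite name) pairs in submission order.
frame = [
    ("blue", "line1"),
    ("green", "line2"),
    ("blue", "line3"),
    ("green", "line4"),
]

unsorted_binds = count_texture_binds(frame)
# Sorting by texture groups the strokes, but the sort itself runs on the CPU.
sorted_binds = count_texture_binds(sorted(frame, key=lambda call: call[0]))
print(unsorted_binds, sorted_binds)  # prints "4 2"
```

Sorting also changes the order things are drawn in, which matters when sprites overlap, so it is not always a free reordering.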

The better solution is to make a texture atlas. A texture atlas is when you take all the different textures you’re going to need in a scene and stick them into a single image. It’s like having a paintbrush already loaded with every color you’ll need, so you never have to clean off the brush and get a new color.

The downside is that a texture atlas is huge and the card might not support anything that large. But this is actually a good thing! It gives you a clear pass / fail. You know exactly how much graphics memory the user will need and you can state so in the system requirements for the game. This is far preferable to those weird-ass situations where a dozen computers will all have different performance problems and the bottlenecks aren’t always obvious.

This requirement:

“Your graphics card must have 3.2 megaboozles.”

Is far easier for Joe Consumer to understand than this one:

“Your graphics card needs 3 kilowappers, UNLESS your computer has less than 100 fizzlers, in which case you need 4.2 kilowappers, UNLESS you’re using the new Smeg class chips that support the next-gen shaders, in which case you can go all the way down to 2.5 kilowappers.”

Making a texture atlas takes all these complex variables with regard to throughput and boils them down to the simple question of “Can this image fit in video memory?” It makes your engine simpler. All you have to do is place all of your textures into a single image.
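The pass / fail question can be sketched as plain arithmetic. This assumes an uncompressed atlas at 4 bytes per pixel (RGBA), and the card limits below are hypothetical numbers; in a real OpenGL program the maximum texture dimension would come from querying `GL_MAX_TEXTURE_SIZE`.

```python
def atlas_bytes(width, height, bytes_per_pixel=4):
    """Uncompressed size of an atlas; 4 bytes per pixel assumes RGBA8."""
    return width * height * bytes_per_pixel

def atlas_fits(width, height, max_texture_size, video_bytes):
    """Pass/fail: both dimensions within the card's limit, and the pixels fit in memory."""
    if width > max_texture_size or height > max_texture_size:
        return False
    return atlas_bytes(width, height) <= video_bytes

# Hypothetical card: 4096-pixel texture limit, 64 MiB available for the atlas.
limit, memory = 4096, 64 * 1024 * 1024

size = atlas_bytes(2048, 2048)
ok = atlas_fits(2048, 2048, limit, memory)
too_big = atlas_fits(8192, 8192, limit, memory)
print(size, ok, too_big)  # prints "16777216 True False"
```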

In Good Robot, I did this manually:

That’s a portion of the Good Robot atlas. It was annoying to maintain. You had to manually arrange items on a grid, and if you were a pixel off in any direction then the resulting sprite would be clipped or have strange edges. Once you had the items placed, you had to tell the program how to find it using a system that was very convenient for the programmer but not convenient for the artist. That was fine when I was working on the game all by myself, but I felt bad for dumping that obtuse and inconvenient system on the artists.

So after Good Robot I added some code that would build the atlas dynamically at launch. The artist puts in their textures, and the game will arrange them into an atlas and work out how to find them. It’s a lot less work. It looks at the sizes of the textures and figures out how to pack them efficiently. It also works out a map so it can find the individual images later, because otherwise what’s the point?
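The post doesn't say which packing scheme the game uses. As one simple possibility, here is a sketch of "shelf" packing: sort the images tallest-first, lay them left to right, and start a new row when the current one is full. The function name, sprite names, and sizes are all invented for the example. The returned dictionary is the "map" that lets the program find each image again later.

```python
def pack_shelves(sizes, atlas_width):
    """Place rectangles in rows ("shelves"). sizes maps name -> (width, height).

    Returns (placements, atlas_height), where placements maps
    name -> (x, y, width, height) inside the atlas.
    """
    placements = {}
    x = y = shelf_height = 0
    # Tallest first, so each shelf wastes less vertical space.
    for name, (w, h) in sorted(sizes.items(), key=lambda item: -item[1][1]):
        if w > atlas_width:
            raise ValueError(f"{name} is wider than the atlas")
        if x + w > atlas_width:
            # Current shelf is full: start a new one below it.
            y += shelf_height
            x = 0
            shelf_height = 0
        placements[name] = (x, y, w, h)
        x += w
        shelf_height = max(shelf_height, h)
    return placements, y + shelf_height

# Hypothetical sprites for a puzzle game.
sprites = {"tile": (64, 64), "button": (96, 32), "cursor": (32, 32)}
placements, atlas_height = pack_shelves(sprites, atlas_width=128)
print(placements["cursor"], atlas_height)  # prints "(96, 64, 32, 32) 96"
```

Shelf packing is not the tightest possible arrangement, but it is short, predictable, and easy to debug, which counts for a lot in a tool that runs at launch.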

This atlas building is currently the only part of Pseudoku that uses OpenGL extensions. I’m creating a blank atlas texture, then using a GL framebuffer object to render all the little sprites directly into the atlas.

I don’t have to do it that way. Rather than handing the job off to the graphics card, I can manually build the atlas by arranging the images in main memory. Instead of creating a blank texture, I create a blank expanse of memory and copy the texture data into it a block at a time. When I’m done, I hand the memory off to GL and tell it to make a texture out of it. This new way is not as compact and it’s probably slower by some trivial amount that doesn’t matter to humans. But it gets the job done. Here’s the auto-generated atlas:

Getting rid of the framebuffer stuff FINALLY gets me past the crashes. Pseudoku now runs on the virgin machine.
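The main-memory approach described above, copying each image into a blank expanse of memory a block at a time, might look something like this. It is only a sketch: the `blit` helper is hypothetical, pixels are assumed to be 4-byte RGBA stored row by row, and the final upload is left out (in a C or C++ program the finished buffer would typically be handed to something like `glTexImage2D`).

```python
def blit(atlas, atlas_width, sprite, sprite_width, sprite_height,
         dest_x, dest_y, bytes_per_pixel=4):
    """Copy a sprite's pixels into the atlas buffer, one row at a time."""
    row_bytes = sprite_width * bytes_per_pixel
    for row in range(sprite_height):
        src = row * row_bytes
        dst = ((dest_y + row) * atlas_width + dest_x) * bytes_per_pixel
        atlas[dst:dst + row_bytes] = sprite[src:src + row_bytes]

# A blank 4x4 atlas and a solid white 2x2 sprite, both RGBA.
atlas_w = atlas_h = 4
atlas = bytearray(atlas_w * atlas_h * 4)
sprite = bytes([255]) * (2 * 2 * 4)

blit(atlas, atlas_w, sprite, 2, 2, dest_x=1, dest_y=1)

first_copied = (1 * atlas_w + 1) * 4   # byte offset of pixel (1, 1)
print(atlas[0], atlas[first_copied], len(atlas))  # prints "0 255 64"
```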

And so at last Pseudoku runs! I mean, it was already working on 90% of the machines out there, but now it’s running on a virgin machine with no redistributable packages, updates, drivers, or anything else. If it runs on this thing, it will run almost anywhere.

Except…

It’s slow. I mean really, mindbogglingly slow. It is so slow it would be hilarious if it wasn’t so annoying. On the virgin machine it gets a frame every other second. That’s half a frame a second.

It takes the game two entire seconds to draw… how many polygons?

On the very first level there are six tiles, each of which is two quads. The slots where you place the tiles make another 6 quads. In the word “Pseudoku”, each letter is another quad. The mouse pointer and the glowing aura around it each count as another quad. The entire gradient background is one more. Then the six menu buttons at the bottom add another 12 quads. That means the entire scene is 41 quads.

“Well Shamus, everyone knows integrated graphics are terrible. You should expect poor performance.”

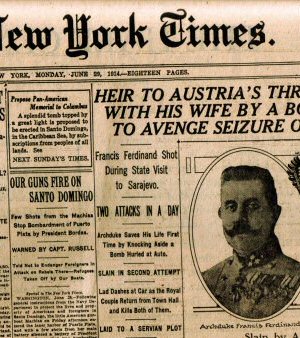

Let me see if I can put this into perspective. This is Unreal:

Unreal came out in 1998. At the time, it required a 166MHz computer. My machine was right in that ballpark when I played it. This was before ubiquitous graphics acceleration, so the game could do all of its rendering on your humble little CPU. At the time, the flyby intro would dip down to about 10FPS when the camera pulled back to reveal the entire castle. For the purposes of comparison, let’s make the fairly reasonable assumption that the game was rendering about 400 polygons when you include the castle, the canyon, the little guys milling around in the distance, the particle effects, the sky, and the reflections.

Pseudoku is drawing 1/10th the polygons, on a CPU that’s 20× faster, and yet it’s running twenty times slower. I know integrated graphics are garbage, but they’re not that bad. They’re not “three orders of magnitude slower than 1998 processors” bad. If that was the case, there would literally be no point in having integrated graphics at all.
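The "three orders of magnitude" figure can be checked with the numbers from the post. Everything here is the post's own rough estimate (400 polygons at 10FPS for Unreal, 41 quads at half a frame per second for Pseudoku, a CPU about 20× faster), so treat the result as a ballpark.

```python
import math

unreal_polys, unreal_fps = 400, 10       # the post's assumption for the flyby
pseudoku_polys, pseudoku_fps = 41, 0.5   # 41 quads, one frame every two seconds
cpu_speedup = 20                         # the post's estimate for the newer CPU

unreal_rate = unreal_polys * unreal_fps         # polygons per second
pseudoku_rate = pseudoku_polys * pseudoku_fps

# How far behind Pseudoku is, after crediting it with the faster CPU.
gap = (unreal_rate / pseudoku_rate) * cpu_speedup
orders = math.log10(gap)
print(pseudoku_rate, round(gap), round(orders, 1))
```

That works out to roughly twenty polygons per second, and a gap somewhere between three and four orders of magnitude.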

So it’s probably not the rendering itself that’s slowing it down. On the other hand, I have no idea what’s causing this. I can have the game skip most of the rendering and the framerate stays about the same.

I guess the next step is to go through the program and disable systems one at a time until I find the culprit.
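A close cousin of disabling systems one at a time is timing each one and seeing which eats the frame. The sketch below is a variation on that idea, not the method the post describes. The system names are placeholders, and one of them is made artificially slow with `time.sleep` to stand in for the unknown culprit.

```python
import time

def time_systems(systems, frames=3):
    """Run each system once per frame and total the wall-clock time spent in it."""
    totals = {name: 0.0 for name in systems}
    for _ in range(frames):
        for name, update in systems.items():
            start = time.perf_counter()
            update()
            totals[name] += time.perf_counter() - start
    return totals

def slowest(totals):
    """Name of the system that consumed the most time."""
    return max(totals, key=totals.get)

# Placeholder systems; "mystery" plays the part of the real bottleneck.
systems = {
    "input": lambda: None,
    "render": lambda: None,
    "mystery": lambda: time.sleep(0.02),
}

totals = time_systems(systems)
culprit = slowest(totals)
print(culprit)  # prints "mystery"
```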

OR!

I could fix this by simply requiring the end-user to have a graphics card made in the last 5 years. But damn it, it SHOULD work on this machine and it shouldn’t be this slow.

The truth is, I don’t need to solve this problem. This machine represents such a vanishingly small portion of the market that I don’t need to spend this much time trying to get stuff to work. I guess I’m being stubborn because this is really bugging me, not because this is a good use of my time.

Footnotes:

[1] It’s like a tax number for your business.

[2] Which isn’t actually a big deal on modern hardware, but it’s still a couple of orders of magnitude more challenging than what I’m trying to do with this puzzle game.

T w e n t y S i d e d

dumb question:

why not generate the atlas with a compile-time tool that only needs to work on your computer, then make the resulting image a static file in the distribution?

(if you had a real art pipeline, you might have a special build of the game which generates the atlas dynamically, so your artists don’t need to figure out the compiler, but you can require your artists to have modern hardware)

(and this question is no doubt unrelated to the performance issue entirely — it’s more of a curiosity question about texture atlases in small games)

Yeah, this seems like the better solution to me as well. Unless you want people to be able to make their own sprites, which is not a bad idea either.

Or if modding is so important include the tool with the install.

Shamus, just contact the VGA, he should be able to point you to a cost-effective solution to your company/banking troubles.

https://twitter.com/MrRyanMorrison

https://morrisonlee.com – rmorrison [at] morrisonlee.com

https://www.reddit.com/r/gamedev/comments/5pxldd/ultimate_as_promised_guide_to_legal_needs_and/

Insta-bookmarked! :O

This guy is notorious for inserting/promoting himself as a video game lawyer/attorney. I’m not saying he’s incompetent (which judging by the H3H3 situation he might actually be), but his act reeks of marketing more than actual longstanding experience or knowledge.

Also he doesn’t do this stuff for free, nobody does, it starts at hundreds of dollars.

I have done business with him; he advised me on a contract before I signed it. I don’t mind paying a lawyer for their legal opinion, but the reality of someone like Ryan (and lawyers in general) is that you need to be very specific about what you want. The generic legal advice I’ve seen given elsewhere is to form an S-corp, file trademarks, copyrights, etc. All of which we automatically rule out as too expensive and time consuming. But those are the ‘correct’ legal channels and you can bet that will be the first draft advice. Want to dig deeper? For $100/hr? Better weigh how much money you *estimate* your game will earn against how much you’re paying up front for legal advice.

I would instead recommend Shamus contact one of the local pro-bono associations, like http://www.pittsburghprobono.org/Get_Legal_Help.asp as even if Shamus makes too much money for actual pro-bono services, they may be able to direct Shamus to the correct info he needs or to someone who can do this at a discounted rate.

So I’m okay with Crysis, but can it run Pseudoku? This sounds crazy for a game that looks like it should run on a cheap phone.

This game should run on a free phone!

Maybe it’s running in a software emulation mode? You could check the GL vendor string.

Oh, I should have put that in the post. When you query GL version it does indeed report it’s running primordial GL. I forget what the exact string is.

Now I might be way off, but there is something.

So I use SDL2 in my programs, and if I don’t initialise the window and OpenGL correctly, then I’ve had it spit out that it’s running OpenGL version 1.1 with Microsoft drivers blah blah. It’s been years since I’ve played with that part of the code, but with SDL2 you have to declare what version of OpenGL you are using (among other things), and if you are picking settings that aren’t available in the drivers on that system, it may be falling back to a barebones software renderer.

Other than that, I guess it’s back to commenting out bits of the code until you find what kind of call is bogging down the program.
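For anyone wanting to test the fallback theory, the check boils down to reading three strings once a GL context exists (`GL_VENDOR`, `GL_RENDERER`, and `GL_VERSION`) and seeing whether they describe Windows' built-in implementation, which typically reports itself as "Microsoft Corporation" / "GDI Generic" at version 1.1.0. The sketch below only classifies strings that have already been fetched; the function name is invented, and the NVIDIA strings are merely an example of what a vendor driver might report.

```python
def looks_like_software_fallback(vendor, renderer, version):
    """Guess whether these GL strings describe Windows' generic GL 1.1 renderer."""
    # GL_VERSION starts with "major.minor", optionally followed by more text.
    major, minor = (int(part) for part in version.split()[0].split(".")[:2])
    generic = "microsoft" in vendor.lower() or "gdi generic" in renderer.lower()
    return generic and (major, minor) <= (1, 1)

fallback = looks_like_software_fallback(
    "Microsoft Corporation", "GDI Generic", "1.1.0")
vendor_driver = looks_like_software_fallback(
    "NVIDIA Corporation", "GeForce GTX 1080/PCIe/SSE2", "4.6.0 NVIDIA 456.71")
print(fallback, vendor_driver)  # prints "True False"
```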

Sure, but even a barebones software renderer shouldn’t be getting only *twenty* polygons per second. That’s crazypants.

I gotta agree. A modern browser on an older PC should be able to use HTML’s canvas and get FPS in the 30+ on a game like this.

Windows ships with a (software?) OpenGL 2.0 (or something like that), you need to install drivers from AMD/Nvidia/Intel to get OpenGL 3.x/4.x on the system.

Virtual machines (Virtual Box, VMWare) have OpenGL 1.2 or 2.0 or something and there are no driver updates to get OpenGL 3.x or 4.x on them.

Well, this case isn’t a VM, but yeah, Microsoft’s native OpenGL setup is a fiasco. (Shamus comments that he could “simply require the user to have a video card made in the last five years” … but that’s not actually enough. It needs to be made in that time, *plus* have a vendor-installed GL ICD and maybe even a totally different opengl32.dll library. I think the ICD is enough but it’s been a long time…)

Windows will redirect to the installed OpenGL driver if one is installed. Not sure how that redirect works. It’s possible it redirects the init of it thus the app talks directly to the installed OpenGL driver from then on.

Even after setting up the driver and getting it to load, you’re going to run into functions that either don’t behave as documented or flat out do nothing. Sadly vendor support for OpenGL has been lackluster for far too long.

I have a card where you can’t set a D24 frame buffer, even though it reports it as being possible. Using a modified driver designed to address this problem it goes away and all works as expected.

Some drivers will outright crash the system on a call that shouldn’t or just silently fail (not so much as an error in OpenGL’s error reporting mechanism). None of it really makes any sense given the times the drivers in question were published and the times those specific OpenGL features were introduced. So much time has passed that everything except for the OpenGL4 stuff should be fully functional without these ridiculous hiccups.

But hey, Direct3D works flawlessly… I hate that crap.

The answer is obvious, Shamus.

Your code is haunted. Sorry, someone had to say it. Do you know how many language design engineers poured their lifeblood into OpenGL?

The only solution is to dip the punk-rock chick you guys created for Deus Ex 3: Deus Exer in the code and hope for the best.

Are you sure you’re using an actual computer and not a series of binary vacuum switches?

I’m sure you’ve considered and discarded this option, but why not “publish” Pseudoku through Pyrodactyl?

Probably because Pyrodactyl may (understandably so) want various changes to the project if it’s to be released under the Pyrodactyl name.

And maybe Shamus wanted to try and see how a truly “independent” developer could do it all solo.

Shamus wasn’t intending to release this game at all, it was just a hobby project. Now he’s found it has some appeal and appears to be fairly unique in the marketplace, so he wants to release it.

Reading between the lines, I think Shamus is expecting sales somewhere in the region of $5-10k, which isn’t much of a return, so investment needs to be minimal. If he handed it over to Pyrodactyl, he’d have to have some profit sharing arrangement, probably something around 60/40 or 50/50 with them, cutting his return significantly. It’s unlikely that Pyrodactyl could add value that is equivalent to the profit cut Shamus would be taking by having them involved (it shouldn’t cost $5k for Shamus to start a new business, and he mentioned that Heather has wanted one already anyway).

As a tangent, I’d be interested in hearing whether there are any international tax concerns with Steam or other game platforms. I think Pyrodactyl’s based in India, does that cost anything? (Is Pyrodactyl an Indian company?)

I can’t speak for Arvind, who actually owns the company in India and whatever that entails, but I’m comfortable enough giving the details on how our/my proxy company (Seventh House Games) works here in Canada.

Essentially, since Kickstarter doesn’t do business in India or accept Indian business numbers, we had a need to set up a company somewhere that did, in order to claim any potential earnings from a successful Kickstarter project. In Canada, money earned this way is taxable income (though a business is only required to apply for sales tax if it makes $30,000 or more within the year). The rates are expectedly much more favourable for businesses than individuals.

Enter Seventh House Games – a company run out of Ontario which collects and reports the Kickstarter earnings as income, then “hires” Pyrodactyl with them to develop a video game internationally. To open SHG I needed to:

-Apply for a Business Number (BN) – an index for the Canada Revenue Agency so it could report earnings, taxes, deductions, and all the nation-level corporate shenanigans. A business number is how Kickstarter identifies and pays your company.

-Apply for a Business Identification Number (BIN). Confusingly not the same number; rather, this one allowed me to register a business name and obtain a license with the government of Ontario, and cost a pretty reasonable $50 up front. Both this and the BN can be obtained over the internet, to boot.

-Open a business account at a local bank. This required a BIN, and a meeting with the bank manager. In my case I just explained what I was trying to do, and got an account relatively easily.

As for tax concerns, for us the biggest is probably the cost of wiring money from one part of the globe to the other as wages. Bank transfers are ponderously slow and subject to sudden changes in protocol (at least coming out of India), plus have a flat fee high enough to discourage monthly payments; Paypal on the other hand takes a percentage cut that can get pretty needless for larger sums. Generally, if we have to send a lot of money at once we use the former, while the latter is good for small payments like residuals on the tail end of a game’s sales.

That aside, you have your general fees and taxes: 13% sales tax and 8-10% on Kickstarter’s end, plus whatever SHG pays in income tax (likely to be nothing unless the KS is a roaring success).

I think you need to solve this. People with low end PC are the ones who are likely to buy this game, so among your potential customers, they are gonna be overrepresented.

This is all speculation on my part, granted, but it’s what my intuition tells me, that the people with Nvidia 1080’s aren’t your target demo.

^ This.

Yep. And I’m a guy who has a decent desktop machine for “serious” games, but Pseudoku is the exact kind of game I’d pick up for laptop use, because I can’t/don’t want to always be huddled in my office.

Which… relatedly, this seems like more of a tablet kind of game than a laptop game, even.

Ideally, I think it would be multi-platform. I can easily imagine playing this on a tablet, phone, laptop, or desktop, either by itself, or while listening to a podcast or something.

I myself have a computer with an Nvidia GTS 250. It’s definitely getting old, but it’s surprisingly capable at running most games – that is, unless they require DirectX 11 (basically, GTA5, Shadow of Mordor and everything henceforth), in which case it pouts and whines that it will NOT run that video game and that I shouldn’t even try.

It’s ridiculous really. The laptop I’m on right now runs DirectX 11, and I’m using an Intel HD Integrated Graphics Card, which works slower than my older Nvidia.

It would be a REAL shame if Pseudoku wouldn’t work just because my hardware is too old. :(

I don’t think your $90 machine has enough kilowappers to run the game :/

Yeah, sounds like the flux capacitor is not fluxing.

The malleable logarithmic casing must be off as well.

Perhaps you accidentally put something heavy on the samoflange?

Have you considered the possibility that as bad as integrated graphics are, drivers for integrated graphics are worse? How many FPS do you get with the hello world equivalent of OpenGL (maybe also DirectX, to see if it happens to be optimized for it) with and without textures, lighting, etc?

I had an integrated AMD GPU that would BSOD upon scrolling (with the scroll wheel) in firefox, for several consecutive monthly driver releases.

Something’s wrong. My friend and colleague wrote a cross-platform 3D engine (called “nya-engine”) that we use in all our games, and it manages to get 60FPS on integrated Intel video with thousands of polygons in frame (in an open-source re-implementation of one of the recent Ace Combat flight arcades, which also uses that engine). So Intel cards are not that bad, even with OpenGL (though it’s an atrocity that many Intel drivers only support OpenGL 1.1 or 2.0 on Windows, especially with older cards).

However, disabling systems one-by-one isn’t the only way to debug this problem. You can try to use some profiler tools. I only mostly used CPU profilers, and you can start with that to make sure it’s GPU that’s bringing the framerate down, and not CPU. However, I think there are free GPU profilers out there, too, and they can save you some time if you learn to use them (I’m a tools-oriented programmer, I love all tools that let me think and do less :) ).

The OpenGL tool situation is not great. Intel’s profiling tools for integrated GPUs don’t even support OGL on Windows, and I think gDebugger got bought out by AMD and then killed.

Still “40 polygons are a slideshow” is an interesting departure from the MacOS OGL stack, which will either run at 20 fps while displaying a couple of polygons with no shading or texturing, or alternatively run at 20 fps while drawing ALL OF THE POLYGONS.

While I do recognize you’re trying to help, I personally don’t generally get much out of suggestions like “maybe using a profiling tool will help.” It’s not a specific recommendation or endorsement of a particular specific tool, or guidance on how to use the tool to help. It’s not even a strong endorsement – “I think these might help” is a lot weaker than “I’ve used these and I know they help.” Or, better “You should consider FooProfile. I use it all the time for GPU profiling – it’s fast, well documented, and easy to use. Let me know if you need help setting it up!”

Swapping one tedious task (swapping systems in/out to see which one is the problem) for another tedious task (researching profiling tool options and learning how to use them) isn’t necessarily a savings, especially if you’re confident one will work and aren’t sure if the other one will actually solve your problem (even if it will be faster if it does).

Sorry, trying not to pick on you personally, and I worry I’m coming off that way. Your suggestion about possibly using profiling tools might be a great one. Just that sometimes well meaning suggestions like this can be more noise than signal.

In this case, “use a profiler” is exactly the right advice. Which one you use depends on your toolchain. If it were me, running on some *nix, I’d use gprof. Or if I were working on an embedded system like I do for work, I’d probably use some in-house written sampling profiler that generates something that can be analyzed with normal tools. Shamus uses Microsoft’s toolchain, so this is probably the way to go. Or maybe the problem is actually in the graphics card somewhere, and he needs this instead. Either way, just saying “use a profiler” is enough information to know what to search. It’s not just a question of this particular performance problem, either. If you know how to read profiler reports, you can solve a lot of problems that are otherwise intractable. It’s a useful tool to have in the belt.

This is actually an interview question I ask people. If they claim they know how to work on high performance systems, I ask them how they make their code run faster. If they don’t say “use a profiler” I know that they don’t know.

That said, I can see where you’re coming from. Advice like this can lead down deep rabbit holes. Which tools to use is a complex and fraught set of tradeoffs.

OpenGL compatibility is a funny beast. My old Celeron E3300 was a surprisingly robust cheap CPU. It lasted me a very long time. The thing that finally drove me to build a new system this year wasn’t the fact that the processor was too slow–because it wasn’t, not really–but the fact that the G41 integrated graphics chipset couldn’t handle OpenGL beyond version 2.1. Here are the recommended specs for Endless Sky, a free space exploration/trading/combat game.

I want to stress that this is the complete list. And the minimum specs are even weaker. As you can see, a toaster ought to be able to run this game. But it wouldn’t even launch on my old computer because of the OpenGL issue.

What’s interesting to me is that I have seen what seems like an increasing number of indie and indie-ish games specify their minimum system requirements in terms of graphics memory and OpenGL version rather than by GPU model. (When we’re talking about Linux, that is. The windows requirement is usually given in terms of DirectX rather than OpenGL.) Transistor, for example wants “OpenGL 3.0+ (2.1 with ARB extensions acceptable)”. (I have no idea what ARB extensions are.) Satellite Reign, which I have been playing a great deal lately, wants “OpenGL 3.2+ GPU with 2GB of memory”. I can’t quite make up my mind whether this is more or less consumer-friendly than saying something like “GTX 750 or better”.

They really should keep ARB out of it as that is just confusing.

Now requiring OpenGL something is ok.

But in any case (even if they say they require DX10) they should also link to some kind of list as not all users know what their hardware can do.

But here is a list I stumbled upon just now: http://www.gpuinfo.org/

I just ran a test and uploaded my result (as no match to my system was found in the database)

(Hey Shamus, maybe download the OpenGL cap viewer there and see what it comes up with on your test machine)

I followed your link. The table is interesting, but I’m not sure that it’s a useful tool for the casual gamer. Generally speaking, I think that said casual gamer would probably be better off consulting the manufacturer’s website or the manual when he wants to know what versions of OpenGL or Direct X his GPU supports.

Still not useful to your typical consumer. Half of me helping people over the internet fix their computers is instructing them on how to look through dxdiag or msinfo32 to determine what hardware they have. I’ve grown to love service tags because of this, can just punch that into the brand’s website and get a hardware manifest without all the futzing around with commands that make people shut their brains down instead of trying to read the damn words on the screen back to me.

“I guess I'm being stubborn because this is really bugging me, not because this is a good use of my time.”

That's like 80% of engineering, really.

“Because this is really bugging me, not because this is a good use of my time.” Sounds like a box quote, the motivation for this blog, and words to live by.

Needs to be a motivational poster. I spent a week learning to build a reciprocating drive from scrap wood and a pulley salvaged from a washing machine. Could have just looked it up and had all the math to crunch what measurements I actually needed to make. Instead I used logic and testing to derive the rules myself.

Certainly not because it was a good use of my time, just because it was bugging me that I’ve seen the things used everywhere but never put much thought beyond “gears and levers” into how it actually works and why it works without tearing itself apart. Still not so clear why it doesn’t tear itself apart but after breaking many wooden washers, enough that my fingers ache from carving them: I know it’s only one bad measure away from ripping itself apart.

I look forward to seeing what the bugbear hiding in your game is. I haven’t done too much in graphics programming, I tend to handle straight game mechanics which is way simpler; I’ve done some stuff with shaders but the more base level stuff is pretty impenetrable; feel like it would take a while to learn and with the available engines seems like for me it’s kinda not worth it.

But at the same time, definitely curious what’s goin wrong here.

Shamus:

Your best posts are the ones where you describe how you finally found the cause of a crazy-ass bug that was eating all your cycles, and explain what it was doing and why it was so obscure (or obvious, in retrospect).

SOLVE THIS. Then post about it. For both our sakes. :)

This, like a thousand times. I want a deeply nerdy post where you eventually figure out it’s some obscure OpenGL emulation because of the way you initialised it like it’s 1992.

It’d be interesting to see how you solve this problem with slow framerate. Considering I haven’t been able to play Total War games on my laptop since 2012 maybe if you showed how this Pseudoku game functions I can eventually play Total War as well.

Wait… is that a good comparison?

I support this message.

“best use of my time” is what I hear at work, and whenever I get half a chance I do whatever is not the best use of my time, and good things have come from that (also some very hectic deadlines, soo…). I’ve always envied people who can do whatever the heck they want with their time, so I’d be in favour of doing just that, turning it into a post, and then writing the post is what you do the bughunt for. That and your own curiosity.

Question from someone who does not program C++ or OpenGL: How easy would it be to time this sucker? Us spoiled Python programmers can just fire up the profiler and see exactly where all that time is spent. That should give you at least a good hint of where to look.

And now you’ve invented a tetris-solving program.

Or, formally, like the cutting stock problem.

Shamus you should make sure that the GPU actually has the texture in GPU memory, if the GPU has to read the texture from system memory then that could explain the very slow draw speeds.

Also. texture atlas? I’ve never heard that before, I assume it’s the same as a sprite sheet?

Pretty much, yes.

By some definitions, a Texture Atlas is a more generic form of sprite sheet.

Sprite sheets are generally static, pre-compiled by the artist or offline tools, while a texture atlas is often generated on-the-fly as needed.

For example, most OpenGL/DirectX applications that render text use a texture atlas for the characters (technically glyphs) in the font. As new characters are required, they are added to the atlas.

Eventually the atlas is filled and it either creates a new atlas for the extra characters, or removes old characters that haven’t been used for some time.

In this way you can have hardware-accelerated text rendering without having to upload the entirety of Unicode to the GPU – which wouldn’t fit anyway!
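To make the on-the-fly behaviour above concrete, here is a minimal C++17 sketch of a glyph atlas that hands out space as new characters are requested. It uses a simple “shelf” packing scheme, which is only one of several possible strategies. The names (`ShelfAtlas`, `Rect`, `get`) are made up for illustration, there is no real GPU upload, and eviction of old glyphs is left to the caller.

```cpp
#include <cassert>
#include <cstddef>
#include <optional>
#include <unordered_map>

// Pixel rectangle inside the atlas texture.
struct Rect { int x, y, w, h; };

// Hypothetical shelf packer: glyphs go left to right along a "shelf";
// when a glyph does not fit, a new shelf opens below the tallest glyph.
class ShelfAtlas {
public:
    ShelfAtlas(int width, int height) : width_(width), height_(height) {
        assert(width > 0 && height > 0);
    }

    // Returns the glyph's rectangle, adding the glyph on first use.
    // Returns std::nullopt when it does not fit; the caller would then
    // start a second atlas or evict glyphs that have not been used lately.
    std::optional<Rect> get(char32_t codepoint, int w, int h) {
        auto found = slots_.find(codepoint);
        if (found != slots_.end()) return found->second;  // already cached

        // Work on copies so a failed insert leaves the atlas unchanged.
        int x = cursor_x_, y = cursor_y_, shelf = shelf_height_;
        if (x + w > width_) {  // current shelf is full: open a new one
            y += shelf;
            x = 0;
            shelf = 0;
        }
        if (w > width_ || y + h > height_) return std::nullopt;

        Rect r{x, y, w, h};
        cursor_x_ = x + w;
        cursor_y_ = y;
        shelf_height_ = (h > shelf) ? h : shelf;
        slots_.emplace(codepoint, r);
        // A real renderer would copy the glyph bitmap into this rectangle
        // here (for example with glTexSubImage2D).
        return r;
    }

    std::size_t size() const { return slots_.size(); }

private:
    int width_, height_;
    int cursor_x_ = 0, cursor_y_ = 0, shelf_height_ = 0;
    std::unordered_map<char32_t, Rect> slots_;
};
```

Asking for the same character twice returns the cached rectangle, so only glyphs that actually appear on screen ever take up atlas space.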

Three suggestions for Shamus to check:

1. As Roger said, maybe there’s trouble getting the texture onto the GPU? See if you can output the value of GL_MAX_TEXTURE_SIZE. Possibly this card/driver/OpenGL version doesn’t support the dimensions of your texture atlas, though if it’s really only 800×450, that seems unlikely. A bit of info at: https://www.khronos.org/opengl/wiki/Texture#Theory

2. I think I’ve also heard that some cards require texture dimensions to be powers of 2, so try adding unused space to make the atlas 1024×1024.

3. Only 0.5fps sounds suspiciously like it’s reloading images from disk every frame, or possibly just reassembling the texture atlas every frame. Have you double checked that these operations only happen once?

Related test: are the speeds on your better computer also unusually slow? If not, does one PC have an SSD while the other has an HDD (see point #3)?

When I try to use NPOT textures on a card or driver that can’t do them, the result I’ve seen is failure to create the texture, not super super extremely slow frame-draw time. On the other hand, that was webgl, not desktop GL, so maybe the error checking is more strict or something?

Or maybe GLES is stricter than GL? (I don’t think that’s true, but I’m not completely sure…)

My vague guess is reassembling the texture atlas as well, but that also seems like an obvious thing to look at, so I sort of assume Shamus already has. :-) If not, dump a printf in there and see what happens, maybe. (…Oh, except this is Windows, where that’s a lot harder. Crap. Uh… write an entry to the application log? Or keep a counter and MessageBox its value once, at shutdown?)

Even rereading the textures from disk shouldn’t take two seconds, though: after the first read, they should be in the OS’s disk cache…
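The “keep a counter and report it once at shutdown” idea could look roughly like the sketch below. Everything here is hypothetical: `CallCounter`, `atlas_build_counter` and `build_atlas` are stand-in names, not anything from the actual game. The report goes to stderr to keep the example portable; on a Windows build with no console, that one line could be swapped for `OutputDebugStringA` or a `MessageBox` call.

```cpp
#include <cstdio>

// Hypothetical helper: counts how often a code path runs and reports the
// total once, when the program exits (static destructors run at shutdown).
struct CallCounter {
    const char* name;
    unsigned long count = 0;

    explicit CallCounter(const char* n) : name(n) {}
    void hit() { ++count; }

    ~CallCounter() {
        // Portable stand-in for a Windows-specific report.
        std::fprintf(stderr, "%s ran %lu time(s)\n", name, count);
    }
};

// One shared counter for the atlas-building path.
inline CallCounter& atlas_build_counter() {
    static CallCounter counter("build_atlas");
    return counter;
}

// Stand-in for the real atlas-building function (assumed name).
void build_atlas() {
    atlas_build_counter().hit();
    // ... load images and assemble the atlas here ...
}
```

If the reported number is 1, the atlas is only built once. If it roughly matches the number of frames drawn, the atlas is being rebuilt every frame.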

Non-square, non-power-of-two is indeed asking for trouble. However, the usual fallback is that the driver really allocates the next-size-up square power-of-two texture and adjusts the texture coordinates to match.

Means you consume far more GPU RAM than expected, but otherwise it’s invisible.
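As a rough illustration of that fallback, the sketch below works out the padded size, the adjusted texture coordinates and the memory overhead for a given image. It is plain arithmetic, not what any particular driver actually does, and the names (`next_pow2`, `PaddedTexture`, `pad_to_square_pow2`) are invented for the example.

```cpp
#include <cassert>
#include <cstdint>

// Smallest power of two that is >= n.
inline std::uint32_t next_pow2(std::uint32_t n) {
    assert(n >= 1 && n <= (1u << 31));  // keeps the loop from overflowing
    std::uint32_t p = 1;
    while (p < n) p <<= 1;
    return p;
}

struct PaddedTexture {
    std::uint32_t side;  // edge length of the square power-of-two texture
    float u_max, v_max;  // texture coordinates of the image's far corner
    double overhead;     // padded texel count divided by original count
};

// Assumed fallback: pad the image to the next square power-of-two size
// and shrink the texture coordinates so only the real image is sampled.
inline PaddedTexture pad_to_square_pow2(std::uint32_t w, std::uint32_t h) {
    std::uint32_t side = next_pow2(w > h ? w : h);
    return { side,
             static_cast<float>(w) / static_cast<float>(side),
             static_cast<float>(h) / static_cast<float>(side),
             (static_cast<double>(side) * side) /
                 (static_cast<double>(w) * h) };
}
```

For the 800×450 atlas mentioned earlier this gives a 1024×1024 texture, with the image ending at u = 0.78125 and v ≈ 0.439, and roughly 2.9 times as many texels as the original.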

In general OpenGL ES fails hard, while OpenGL on the desktop tends to fall back to slow paths or software rendering.

The general reasoning being that OpenGL ES was designed for GPUs that are coupled with a very slow CPU with very little memory – usually physically shared with the CPU as well.

OpenGL desktop is usually coupled with a very fast CPU with fast memory that isn’t shared with the GPU.

Please be aware that (as far as I can recall) WebGL is translated to DirectX on Windows (no idea if Chrome for example uses OpenGL if that is available in a high enough version).

For Chrome (and many other applications) the WebGL fallback path on the Windows desktop is roughly:

OpenGL 3.2+, ANGLE, Software, Screw You I’m Going Home.

Desktop OpenGL 4.1+ is now a perfect superset of OpenGL ES 3.0+. (It was very close in OpenGL 3.2)

– I believe there are still some minor syntax differences in the shader language, but the OpenGLES > OpenGL direction is trivially handled by simple text replacement as the driver gets the shader source code.

ANGLE is a way of converting OpenGL ES 2.0+ (and probably 3.x by now) into DirectX 9+ calls.

It’s rather complex as DirectX is a very different frontend – for example, ANGLE has an actual shader compiler (not just translator) as DirectX uses precompiled bytecode for shaders!

Have you tried running other OpenGL programs on this computer? (Make sure you can roll back to a known state, of course.) It would be useful to know if all OpenGL programs do this, or just your code.

Regarding the business account fiasco, is that unique to the dystopic hellscape of Pennsylvania? I’m in Vermont, and getting a business account was a one page form, a $50 check to the state Secretary of State, and a 10 minute phone call to my credit union. Is single proprietor DBA not a thing in PA?

Previous posts’ comments have pointed out it’s probably a result of a catastrophic contradiction in business laws at the city and state level.

Ugh. Ambiguous scope bugs are the worst.

Very much a PA issue. Problem solved if we move. Bank requires paperwork that is not available for our business type in our state. Found a single bank that SHOULD work but after filling forms never heard back. PA small business law is a bureaucratic nightmare and everything is “just do this” and then “oh, that doesn’t work because PA/business type/etc”. And we refuse hands down to do the stupid or pay for stupid. Taxes alone are super complicated and expensive for what we do. Adding more complications and expenses would literally make what we do not financially viable. We are on the edge of that as is.

Shamus,

Does taking Patreon money come with any legal hassles other than reporting it on your taxes? Because at this point it sounds like you might come out ahead (in terms of both time and money) by just making the game donationware.

IIRC he explained that he wanted to open a business for other reasons and Pseudoku is only a test case, not an end in itself.

Your business woes seem crazy complicated! Surely you are already self employed and so already qualify for a business bank account without having to form another new company?

I live in the UK and went self employed last year and literally the only step that was required was that I notified the government so they knew to pester me for self assessment tax returns. Banks are falling over themselves to offer me business bank accounts but currently there is no advantage in me separating my business and personal accounts (and there is no legal obligation to do so if you are a sole trader).

I was surprised by how simple it all was but it makes sense. If you are self employed and a sole trader then you ARE your business and any money you make is made by that business, regardless of how it’s made.

It’s surprising to hear that in the USA this process is more complicated given America’s obsession with “freedom”!

The extension thing is odd. Even 2nd generation (we are at generation 7 now) Intel integrated chip sets support OpenGL 3.0, which includes framebuffer objects. I recently tested this on an older laptop. I was using a core profile instead of extensions, though.

Even more odd is the frame rate thing. The game I was testing on the laptop I mentioned was running 60 FPS with quite a lot more polygons than you have here, somewhere in the region of a few thousand.

Hope you can solve it!

Shamus,

Please, PLEASE profile your code before you keep going this blind. Visual Studio has its own profiler.

That’s certainly a possible step. Of course, that means installing VS on the test machine, which means it will get all the packages and no longer be a “virgin” machine for testing.

We’ll see.

Windows remote debugging and profiling works pretty well with VS, you don’t need to install MSVC or any SDKs on the target.

It’s a long time since I set that up, but I don’t recall it being particularly nasty.

Not sure what your plans for profiling are right now, but a simple solution that won’t dirty your clean virgin install: a simple block scope based tick/tock with tags.

Simply create a class / struct with a constructor/destructor pair, log to memory for both.

In the constructor log to memory something like:

(“start”, tagname, QueryPerformanceCounter);

in the destructor:

(“stop”, tagname, QueryPerformanceCounter);

…

So in each scope you want to time, you can do something like

//Scope to time this part of a function:

{

ticktock t1("Some meaningful tag name"); //stack object, not "new", so the destructor runs at the closing brace

execute_stuff();

}//t1 is destructed at end of scope.

…

Parse the output, collate all the tagged entries, and now you can see where time is being spent.

Or, you could collate on the fly (probably easier, actually). You could even log debug output for top n profiler tags directly to the screen (more useful if you have the game running at more than 0.5Hz)
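A minimal C++17 sketch of the collate-on-the-fly variant follows. It swaps `QueryPerformanceCounter` for `std::chrono::steady_clock` so it builds on any platform, and it keeps the `ticktock` name from the pseudocode above; `TagStats`, `ticktock_stats` and `ticktock_report` are made-up helper names. It is not thread-safe, which should be fine for a single-threaded render loop but is an assumption worth checking.

```cpp
#include <chrono>
#include <cstdio>
#include <map>
#include <string>
#include <utility>

// Running totals per tag, collated on the fly.
struct TagStats {
    long long calls = 0;
    double total_ms = 0.0;
};

inline std::map<std::string, TagStats>& ticktock_stats() {
    static std::map<std::string, TagStats> stats;
    return stats;
}

// Scope-based timer: starts in the constructor, records in the destructor.
class ticktock {
public:
    explicit ticktock(std::string tag)
        : tag_(std::move(tag)), start_(std::chrono::steady_clock::now()) {}

    ~ticktock() {
        auto elapsed = std::chrono::steady_clock::now() - start_;
        TagStats& s = ticktock_stats()[tag_];
        s.calls += 1;
        s.total_ms +=
            std::chrono::duration<double, std::milli>(elapsed).count();
    }

    // Copying a running timer would double-count, so forbid it.
    ticktock(const ticktock&) = delete;
    ticktock& operator=(const ticktock&) = delete;

private:
    std::string tag_;
    std::chrono::steady_clock::time_point start_;
};

// Print one line per tag; call this at shutdown or every N frames.
inline void ticktock_report() {
    for (const auto& [tag, s] : ticktock_stats()) {
        std::printf("%-24s calls=%lld total=%.3f ms\n",
                    tag.c_str(), s.calls, s.total_ms);
    }
}
```

Usage is the same as in the pseudocode: declare `ticktock t1("render frame");` at the top of any scope you want timed, then call `ticktock_report()` once at exit. Nothing needs to be installed on the test machine.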

PS. I’d be happy to donate my time to help out if you’d like.

If I were you and had a random internet stranger make an offer like this, I’d say, “thanks. But, no thanks. I appreciate the offer, but I’d rather handle it myself.”

So, no worries if I don’t hear from you. Just putting it out there ;-)

I’ve been following your stuff for many years and haven’t really interacted very often. It’s just part of my personality to sit back and not engage (for fear of saying the wrong thing or being rejected).

Anyway. All the best with the game and business stuff – I hope you get what you need out of it.

If you want a hopefully interesting perspective on this…

I’m not sure how Mikk (Good Robot’s proper art-doing artist) felt about this, but the system Shamus used to link parts of the texture atlas to the game was actually more convenient than the way things worked in Unrest, where each area had a manifest file for every art asset, script, and sound that needed to be filled out. As the resident script-fellow everything-doer I spent the majority of the late-to-post-launch period tinkering with that very atlas, and I actually appreciated how much more coherently/efficiently it was laid out than what I was used to.

So really, don’t feel bad on my behalf! One man’s obtuse system is evidently another’s jump forward in game engine convenience. This might speak more about my standards, having worked mainly indie games and mods… but still!

Texture atlases tend to generate artifacts with mipmaps; texture arrays provide an alternative without these artifacts. They’re in core OpenGL 3.3 (but still not in Unity3D for no freaking reason). Since I’ve never used any OpenGL version prior to that one, I don’t know if they were there before. Might it be a solution? I’m no expert, and welcome any enlightenment on the topic.

For this type of usage, you simply don’t have mipmaps at all.

Mipmaps are for rendering large textures more efficiently when they’re displayed at small onscreen sizes.

Texture atlases are for rendering many, very small textures.

The texture is generally either being displayed at or near 1:1 scale.

The small onscreen size optimisation for these is usually “don’t draw it at all”, not “use a mipmap”.

The ‘classic’ use case is decals, text glyphs, and 2D GUI interface components.