So last time I described how OpenGL used to work in the pre-graphics card stone age. Those were simpler, clearer days. Yes, they were also slow as hell and unable to do much in the way of fancy graphics. But, you know, simple.

But the simplicity couldn’t last. The evolution of OpenGL is basically a long series of refinements where more and more work was gradually moved to the graphics card. Let’s go over them.

1. Rasterization

As far as I can tell, this was the “killer app” of graphics cards. Your game shoves the texture maps over to the graphics card. Then it tells OpenGL how big the canvas (the screen) is. Then it sends a bunch of 2D triangles along the lines of, “This triangle occupies this part of the screen and uses such-and-such part of this texture.” The graphics card would do all the work of coloring those triangles in with pixels.
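To make "coloring those triangles in with pixels" a bit more concrete, here is a minimal CPU-side sketch using the common edge-function test. This is an illustration only, not how any particular card did it, and the names `Vec2`, `edge`, and `rasterize` are made up for this example.

```cpp
#include <string>
#include <vector>

// A 2D point in screen space (pixel coordinates). Hypothetical type.
struct Vec2 { float x, y; };

// Signed area test: the sign tells us which side of the edge a->b point p is on.
float edge(Vec2 a, Vec2 b, Vec2 p) {
    return (b.x - a.x) * (p.y - a.y) - (b.y - a.y) * (p.x - a.x);
}

// Fill a width x height canvas: '#' where the pixel center lies inside the
// triangle (a, b, c), '.' elsewhere. Works for either winding order.
std::vector<std::string> rasterize(Vec2 a, Vec2 b, Vec2 c, int width, int height) {
    std::vector<std::string> canvas(height, std::string(width, '.'));
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            Vec2 p{x + 0.5f, y + 0.5f};  // sample at the pixel center
            float e0 = edge(a, b, p), e1 = edge(b, c, p), e2 = edge(c, a, p);
            bool inside = (e0 >= 0 && e1 >= 0 && e2 >= 0) ||
                          (e0 <= 0 && e1 <= 0 && e2 <= 0);
            if (inside) canvas[y][x] = '#';
        }
    }
    return canvas;
}
```

Notice that every pixel runs the same three multiply-and-subtract tests and no pixel depends on any other. That is exactly the kind of bulk, repetitive work the rest of this section is talking about.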

Let’s talk about a CPU. The CPU inside your computer is a complex beast. Even ignoring the fact that it’s actually many cores strapped together, it needs to be able to perform all sorts of operations. Code needs to be able to branch. (If X then do thing A or else do thing B.) It needs to be able to switch between running one program and another, completely different program constantly. It’s got this complex system where it brings in data from main memory and moves it into progressively faster but smaller banks of memory. Basically, it needs to be able to run anything. I’ve heard people refer to this as being a machine that is Turing Complete.

Not so with graphics cards. They just needed to fill in triangles with pixels. They didn’t need to do three completely different things at once, or handle complex branching code. Since their output was in colored pixels and not hard data, a certain degree of slop was allowed. The math could make certain approximations and shortcuts because if the output was 0.01% off, nobody would be able to tell. Even if you had superhuman eyes that could spot the subtle color differences, you’re using a monitor that can’t display differences that slight.

All of this meant that graphics processors could be much simpler. It’s a bit like comparing a classic fast-food place to the work of a single highly trained chef. The chef can make you almost anything you can ask for, while the fast food place can only make hamburgers. But the fast food place is optimized for it and can crank out 24 hamburgers a minute[1]. Simplicity is speed.

Simplicity didn’t just make the cores faster, it also made them smaller. So instead of one giant core they could have a whole bunch of them. And those cores would do nothing but take in 2D triangles and spit out pixels.

2. Transform and Lighting

So it’s sometime in the late 90’s and we’ve got these graphics cards that take 2D triangles[2] and fill them in with pixels. That’s cool, I guess. It was certainly a massive boost in terms of our rendering capabilities. But it was clear they could be doing a lot more.

There’s a step I’ve been sort of glossing over here. That’s the bit of math you have to do to figure out if a particular triangle will end up on-screen, and if so, where. “Hm. Given that the camera is in position C and looking in direction D, and this particular triangle is so many units away, then the vertices of this triangle will end up on this part of the screen.” Like coloring in triangles with pixels, this is yet another brute-force, bulk, doing-math-on-three-numbers kind of job[3]. Which means it’s a good thing to offload onto the graphics card.

This process of translating vertices from game-space to screen-space is called “Transform and lighting”[4].
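Here is a rough sketch of the "transform" half for a single vertex. It assumes an OpenGL-style camera sitting at the origin and looking down the negative Z axis, and it skips depth and clipping entirely. The names `Vec4`, `ScreenPos`, and `project` are invented for the example.

```cpp
#include <cmath>

struct Vec4 { float x, y, z, w; };  // the "four numbers" per vertex
struct ScreenPos { float x, y; };   // where the vertex lands, in pixels

// Project a camera-space point to pixel coordinates.
// Assumes the incoming w is 1 and the point is in front of the camera (z < 0).
ScreenPos project(Vec4 v, float fovYDegrees, int width, int height) {
    const float pi = 3.14159265358979f;
    float f = 1.0f / std::tan(fovYDegrees * pi / 360.0f);  // cot(fov / 2)
    float aspect = static_cast<float>(width) / height;

    // Scale x and y by the field of view, and stash the distance in w.
    Vec4 clip{v.x * f / aspect, v.y * f, v.z, -v.z};

    // Perspective divide: distant points (large w) crowd toward the center.
    float ndcX = clip.x / clip.w;  // -1 (left) .. +1 (right)
    float ndcY = clip.y / clip.w;  // -1 (bottom) .. +1 (top)

    // Map to pixels, flipping y so that row 0 is the top of the screen.
    return {(ndcX * 0.5f + 0.5f) * width,
            (1.0f - (ndcY * 0.5f + 0.5f)) * height};
}
```

A point straight ahead of the camera lands in the middle of the screen, and the same sideways offset shrinks toward the center as the point gets farther away. Multiply that by every vertex in the scene, every frame, and you can see why it was worth handing to the card.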

Because I spent so much of my career riding the tail end of the technology curve, the timeline is always a bit muddled for me. Apparently all of this happened in 1999, but I didn’t really think about it for at least another four years or so[5].

According to the NVIDIA page, the new T&L-capable cards offered “an order of magnitude increase in visual complexity”. An “order of magnitude” is just engineer talk for “ten times more / less”, but it sounds so much more impressive and technical than just saying “ten times”. On one hand, I’d caution against believing breathless claims made by marketing. On the other hand, that sounds pretty accurate. We really did get a huge jump up in model complexity.

Here is a screenshot from the pre-T&L game Thief:

[Image: screenshot from Thief]

The canister on that table is a cylinder with 5 sides. (Not counting the top and bottom.) It looks ridiculous. At the time, every polygon was a precious thing, and we couldn’t afford to waste them. So our games were filled with coarse geometric shapes. When we were able to offload a bunch of polygon processing to the GPU, we suddenly had enough polygons to make round things look round.

The problem was, this jump was almost too big. It was so big that we kind of didn’t need to make another. If we double the number of sides in the canister above, you’ll end up with something that looks completely round to the user. Maybe if they mash their face into the object they will be able to see the angled edges, but it’s no longer the glaring problem. The jump from 5 to 10 polygons is a lot more visually important than the jump from (say) 10 to 100, or even 1,000. We suddenly had as many polygons as we could hope to use on those old machines.
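You can put rough numbers on that diminishing return. Treat the canister as a regular polygon inscribed in a circle: the worst "flat spot" sits in the middle of each side, and its depth as a fraction of the radius is 1 - cos(pi / n). The function name `flatSpotError` is made up for this sketch, and it measures geometry only, not what the eye actually notices.

```cpp
#include <cmath>

// Largest gap between a circle of radius 1 and a regular polygon with the
// given number of sides inscribed in it: 1 - cos(pi / sides).
double flatSpotError(int sides) {
    const double pi = 3.14159265358979323846;
    return 1.0 - std::cos(pi / sides);
}
```

With 5 sides the flat spots cut in about 19% of the radius. At 10 sides that drops to about 4.9%, and at 100 sides to about 0.05%. So going from 5 to 10 removes roughly 14 percentage points of error, while going from 10 to 100 can remove less than 5, no matter how many more polygons you spend.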

3. Vertex Buffers

Remember that a graphics card is, in a lot of ways, a separate computer. It’s got its own memory and its own processors. So when you want to tell the graphics card to render a triangle, you need to send it all of the information about that triangle. There is a bit of a choke point between the devices, meaning it takes much longer to send a triangle to the graphics card than it does to (say) move the triangle from one part of memory to another.

Once you have T&L, you’ll quickly notice that you spend a lot of time sending the exact same data to the GPU over and over again. Every single frame, you tell the graphics cards about the same exact polygons that are still in the same positions. (The walls of your level, and other non-dynamic stuff.) The camera is moving, not the walls. So why do I need to keep sending the same huge load of data every frame?

Vertex buffers give us a way to shove all that data over from the PC once and store it on the GPU. So instead of sending 1,000 polygons every frame, I just need to tell the card, “Remember where I gave you 1,000 triangles? Draw those again, but with the camera in this new location.”
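Here is a toy model of the savings. `FakeGpu` is not a real API. It is a made-up stand-in that does nothing except count how many bytes cross the choke point between the PC and the card, and it pretends a draw command costs only the size of a camera position.

```cpp
#include <cstddef>
#include <vector>

struct Vertex { float x, y, z; };

// Toy stand-in for a graphics card. It only counts bytes crossing the bus.
struct FakeGpu {
    std::size_t bytesSent = 0;
    std::vector<Vertex> stored;  // pretend this lives in GPU memory

    // Old way: the whole mesh crosses the bus on every draw.
    void drawImmediate(const std::vector<Vertex>& mesh) {
        bytesSent += mesh.size() * sizeof(Vertex);
    }

    // Vertex buffer way: pay for the upload once...
    void upload(const std::vector<Vertex>& mesh) {
        stored = mesh;
        bytesSent += mesh.size() * sizeof(Vertex);
    }

    // ...then each draw only sends the new camera position.
    void drawStored(const Vertex& camera) {
        (void)camera;  // a real card would transform `stored` using this
        bytesSent += sizeof(Vertex);
    }
};

// Total bus traffic for drawing a static mesh for a number of frames.
std::size_t trafficImmediate(const std::vector<Vertex>& mesh, int frames) {
    FakeGpu gpu;
    for (int f = 0; f < frames; ++f) gpu.drawImmediate(mesh);
    return gpu.bytesSent;
}

std::size_t trafficBuffered(const std::vector<Vertex>& mesh, int frames) {
    FakeGpu gpu;
    gpu.upload(mesh);
    for (int f = 0; f < frames; ++f) gpu.drawStored(Vertex{0.0f, 0.0f, 0.0f});
    return gpu.bytesSent;
}
```

For 1,000 triangles (3,000 vertices) over 60 frames, and assuming the usual 4-byte floats, the first approach pushes 2,160,000 bytes across the bus and the second pushes 36,720. In real OpenGL the upload step is done with calls like `glGenBuffers`, `glBindBuffer`, and `glBufferData`, and the draw with something like `glDrawArrays`.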

4. Shaders

Like I said a few paragraphs ago: If we wanted games to continue improving visually, it was pretty clear that mindlessly cranking up the polygon counts wasn’t the way to go. To take the next step, we needed to change how we drew those polygons. And for that we needed shaders.

From this point, it was no longer possible to offer a generalized solution for all games. You could make a graphics card that was good at cel shading, but that would only help with games that were cel shaded. You could make a card good at bump mapping, but that wouldn’t do anything for games without bump maps. Instead of adding more “features” to the graphics card, we just needed to give developers a way to control all that raw rendering power directly. We needed to give them a way to write programs that ran on the graphics hardware.

So now developers need to make two shaders: A vertex shader to do the Transform & Lighting, and a fragment shader to decide the color of each pixel that rasterization fills in.
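To give a feel for what "a program that runs on the graphics hardware" looks like, here is the core of a cel shading effect written as a plain C++ function. Real shaders are written in a shading language such as GLSL, not C++, and `celShade` is a hypothetical name. The idea is the same though: a tiny function the card runs once for every pixel.

```cpp
#include <algorithm>
#include <cmath>

// A "fragment shader" in spirit. Snaps a smooth brightness value (0..1) to a
// fixed number of flat bands, which is the basic trick behind cel shading.
// Assumes bands >= 2.
float celShade(float brightness, int bands) {
    float clamped = std::max(0.0f, std::min(1.0f, brightness));
    float band = std::floor(clamped * bands);
    band = std::min(band, static_cast<float>(bands - 1));  // keep 1.0 in the top band
    return band / (bands - 1);
}
```

With three bands, a brightness of 0.1 comes out as 0.0, 0.5 stays 0.5, and 0.9 becomes 1.0. A game that wants bump mapping instead just supplies a different little function, which is why this approach beats baking specific effects into the card.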

Shaders made a lot of things possible or practical: Light bloom, anti-aliasing, various lighting tricks, normal maps. This was a massive turning point in game development. In one leap:

- Games took a massive step forward in visual quality. I think this was actually really important from a cultural perspective. Serious games now looked good enough that they could show up in a TV commercial without looking ridiculous. Before this point, only cartoon-y games (Mario, et al) could get away with this.

- Games took a huge jump in expense to produce.

- Games took a big jump in complexity. In the late 90’s, it was still possible for a small team to get together and make a AAA game without publisher backing[6]. This was the beginning of the end of that era. (You could argue that with the release of so many free AAA game engines, those days are returning. But that’s another article.)

Like a lot of technical advancements, you can argue about where to “officially” draw this particular line. I draw it at 2004. Half-Life 2. Doom 3. Thief Deadly Shadows. Compare each of those games with their predecessors[7] to see just how extreme this move was. Visually, I think Doom 3 has more in common with the games of 2015 than it does the games of 1999.

Left is without normal maps. Right is standard rendering. Note the keyboard and face.
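For the curious, the rough idea behind a normal map is that the fragment shader looks up a per-pixel surface direction (the "normal") from a texture instead of using the flat polygon's single direction, and then lights the pixel with it. Below is a minimal sketch of the lighting half using simple diffuse (Lambert) shading. The names are made up, and real games layer a lot more on top of this.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { float x, y, z; };

float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

Vec3 normalize(Vec3 v) {
    float len = std::sqrt(dot(v, v));
    return {v.x / len, v.y / len, v.z / len};
}

// Diffuse lighting: brightness depends on the angle between the surface
// normal and the direction to the light. Facing away gives zero.
float diffuse(Vec3 normal, Vec3 toLight) {
    return std::max(0.0f, dot(normalize(normal), normalize(toLight)));
}
```

A flat polygon facing the light has the normal (0, 0, 1) at every pixel, so every pixel gets brightness 1.0. If the normal map tilts one pixel's normal to (1, 0, 1), that pixel drops to about 0.71. Vary the tilt from pixel to pixel and a flat surface starts to look like it has bumps, with no extra polygons.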

5. More Shaders

Since 2004, the graphics race has mostly been about what we can do with shaders. I can’t think of any big changes since then. Every once in a while shader programs get some new ability. Sometimes the ability is explicit. We’ve added some ability to manipulate pixel data in ways that weren’t possible before. Sometimes the feature is implicit. Graphics hardware is now fast enough to do some heavy-duty lighting effect that would have been too slow on the old cards. But either way, the steps have been more evolutionary than revolutionary.

And to be honest, for me all the changes kind of blur together here. The documentation on the OpenGL shading languages isn’t that great to begin with, so it’s pretty hard to piece together the various iterations of the language if you weren’t already following them when they happened.

The point of all this is: Right now, in 2015, you can use any or none of these features of OpenGL. You can render with all of the advancements of the last 20 years, or you can render raw immediate-mode triangles like it’s 1993. This has made OpenGL sort of cluttered, confusing, obtuse, and ugly. It’s poisoned the well of documentation by making sure there are five conflicting answers to every question, and it’s difficult for the student to know if the answer they’re reading is actually the most recent.

So now the Khronos Group – the folks behind OpenGL – are wiping the slate clean and trying to come up with something specifically designed for the world of rendering as it exists today. Vulkan is the new way of doing things, and it is not an extension of OpenGL. It’s a new beast.

I know nothing about it, aside from the overview-style documents I’ve read and the tech demos I’ve seen. Given my habit of lagging behind technology until the documentation has a chance to catch up, I probably won’t mess with Vulkan for a few years.

In the meantime: OpenGL is strange and difficult, and there’s nothing we can do about it. This is a rotten time to be learning low-level rendering stuff. The old way is a mess and the new way isn’t ready yet.

Footnotes:

[1] Something like that. You young people don’t realize this, but fast food used to be FAST. Back before McNuggets, McChicken, McRib, McFish, McSoup, McPizza, Fajitas, Grilled Chicken, Chicken Tenders, six different salads and ten different types of hamburger.

[2] That is, a triangle expressed in terms of where it will appear on the screen. The graphics card has NO IDEA where the vertex might be in terms of your 3D game.

[3] Yes nitpickers, it’s actually FOUR numbers. But I don’t want to burn through a couple of paragraphs explaining why. We’re already in a two-levels-deep digression. Let It Go.

[4] I’m glossing over the whole “lighting” thing here because I don’t think we need to go over it in detail. And we’ve got enough ground to cover as it is.

[5] Which is a long time in computer graphics, and a really long time at this particular point in graphics evolution.

[6] BioWare is the oft-cited example of this.

[7] To be clear, I mean you should compare Doom 3 with Quake III Arena, not with Doom 2.

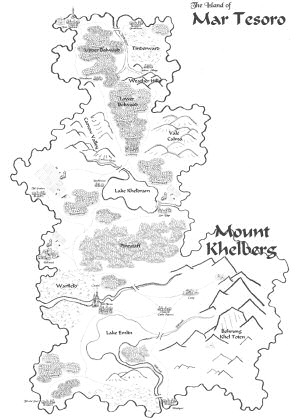

D&D Campaign

WAY back in 2005, I wrote about a D&D campaign I was running. The campaign is still there, in the bottom-most strata of the archives.

Dead or Alive 5 Last Round

I'm not surprised a fighting game has an absurd story. I just can't figure out why they bothered with the story at all.

If Star Wars Was Made in 2006?

Imagine if the original Star Wars hadn't appeared in the 1970's, but instead was pitched to studios in 2006. How would that turn out?

Games and the Fear of Death

Why killing you might be the least scary thing a game can do.

What is Vulkan?

What is this Vulkan stuff? A graphics engine? A game engine? A new flavor of breakfast cereal? And how is it supposed to make PC games better?

T w e n t y S i d e d


Typo check: I think the steps have been evolutionary, not the staps.

And in the same sentence “the steps have been more evolutionary than revolutionary”

While we’re pointing out typos, I think it’s supposed to be Quake III Arena in footnote 7.

And “a long series or refinements” in second paragraph; “or” should be “of”.

“Once” in paragraph 4 should be “one”

Interesting how everybody catches one typo.

And nobody’s caught this one yet: “Every singe frame,…”

Unless we’re talking about Furmark and you’re literally burning the frames with your video card. :)

Maybe that’s what Shamus’ custom OpenGL shader does.

That, or corrects typos on screen without fixing them in the actual document sent through WordPress.

Also, it’s the Khronos group, not the Kronos group.

You beat me to it! Stahp! :P

(I was pointing out the Quake III Arena typo, but MadTinkerer beat me to it. By over an hour. No, I hadn’t refreshed the article since I loaded it ages ago. :) )

I think every person writing a “how to” or giving OpenGL advice through forums has avoided anything before 2.1 for the last 5 years. More recently people are starting to drift towards the 3.3 core or 4.x version where all of the old crap was cut from the library.

The trouble is this old openGL advice is still online and searching for a “how to” with openGL leads to forums and guides from 10 years ago. By actually changing the name of the rendering library they will do more good since it will mean future searches will no longer bring up the openGL 1.x stuff.

What I really hate is the number of sites that say “Here is opengl guide for X” and will turn up no matter what version numbering you use. Then you close it as soon as they start with glBegin(). It just wastes a lot of time that people don’t have when trying to solve problems.

On google, you can give a date range for the results you want back. That should trim out a lot of the ancient stuff easily.

That won’t stop outdated advice that was recently given, unfortunately.

Or ancient forums that have been recently posted to, I think.

How about adding a “-glBegin”?

Shamus, I think since you have had modeling experience in the past you should try out the DCC software of today as a neat experiment to see if you can create models that would look up to today’s “standard.” Also to see where artists’ tools have improved/stagnated. You can get student versions for free (not a euphemism for piracy) and even your hated Blender is actually really nice now.

I too, would like to see a (mini?) post on this. Like, one afternoon, see if you can make a 3D model that looks nice. Maybe a gingerbread house? (Mmm, tasty!)

“and even your hated Blender is actually really nice now”

Sorry, but no, it’s not. Having used 3dsmax, Maya, C4D, Modo and XSI I can say that Blender’s UI is still as unusable as ever, even with a custom control scheme, and each and every critique Shamus hurled at it in those old articles is still just as valid.

I would have to respectfully disagree. I have used 3Ds Max, Maya, Modo, Mudbox, and ZBrush (Modo is my favorite commercial app with Max at second and Maya last) and will say that Blender, for the most part, is fine now. This is especially true if you are doing simple work and not massive team based projects.

Almost nothing is hidden as it was before. You have to get used to the way that it operates in “modes” on an object, but that can be said of all packs(ie. Max you have to make sure it’s an edit/editable poly, and ZBrush is the unholy beast of cumbersome until you get used to it). In Blender almost all of the options are either in menus or in the “T” or “N” bars.

My only two gripes are that the transform widgets are not able to lock to two/all axes (at this point I don’t use them so I can’t comment too much) and that left click to select is not standard (some really strange people actually do seem to prefer this though ;) ).

EDIT:

I’m sorry but I forgot about the multi-monitor support. It can be done, but feels really awkward. You can add that to my list of grievances.

All this talk of fancy 3D rendering, and I’m just using Swing, AWT, and Graphics2D.

A year or two ago, I actually tried to teach myself OpenGL.

Boy, that was a fruitless venture. I learned since then that it wasn’t my fault that I wasn’t understanding the documentation that I could find. The code’s a mess, there’s no definitive, up-to-date guide that you can rely on, and even the most recent parts of OpenGL don’t even work as designed (just ask the Clockwork Empires guys).

I’m glad there’s stuff like Unity now.

I can absolutely guarantee the new way will still be big old buffers of floats, so at least storing/sending data to the GPU will work about the same. I also seriously doubt the basic vertex/fragment shader pipeline will disappear.

It’s actually sort of all about changing how you send data to the GPU…

Not in the sense that you no longer send large chunks of data, but in changing how the data is treated, making things less about the specific graphics pipeline and more developer controlled.

Small nitpick: Anti-aliasing was possible before shaders. But shaders enabled variants (e.g. FXAA) that are fast and still improve the image quality quite a bit.

In fact, heavily shader based renderers, like the Unreal Engine 3, weren’t able to use classic anti-aliasing techniques in the beginning.

I can’t be the only one who thinks FXAA looks like trash.

Agreed, I prefer sharp edges with jaggies than have a blurry image from FXAA.

I absolutely agree that it looks Bad compared to MSAA or FSAA. I still prefer it over no AA at all :) But of course that’s personal preference

While we’re nitpicking, I think you meant “cel shading” and not “cell shading”.

As usual: brilliant article, brilliantly written.

Have you posted the Doom 3 comparison shot before? I’m getting deja vu looking at it…

Yes.

http://www.shamusyoung.com/twentysidedtale/?p=23513

All the images in this article have been previously used, with the possible exception of the Thief screen shot, that I might just recognize that from having replayed Thief about a year ago.

Excellent article. Good accounts of recent technical history are always surprisingly difficult to find. This does the job wonderfully.

Nitpick; I’m not sure the implication that a GPU isn’t Turing Complete is accurate. I’m obviously not disagreeing with “CPUs are very complicated in ways that GPUs aren’t” point, but it’d surprise me if GPU’s aren’t Turing Complete, because that bar, as I understand it, isn’t terribly high. Conway’s Game of Life and Minecraft’s redstone circuitry are both Turing Complete, despite both being very simple (well, very simple compared to CPUs and GPUs).

As I understand it (which is based on some pretty informal learning) not being able to loop and branch will disqualify a processor from being Turing complete. Shaders can’t branch. If you have a conditional:

if (thing) {
    DoFoo ();
} else {
    DoBar ();
}

Both DoFoo and DoBar are expanded by the compiler as if they were inline. And at run time, it will execute both blocks of code and simply throw away the result it doesn’t need. It also unrolls loops, so:

for (int i=0; i<3; i++) {
    DoFoo ();
}

Becomes:

DoFoo ();
DoFoo ();
DoFoo ();

Which means you can't have a loop unless its boundaries can be known at compile time. There will be some problems you just can't solve, no matter how much time and memory you give it. Which (as I understand the term) denies it the TC descriptor.
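As a rough illustration of the "execute both blocks and throw one away" behavior described above, here it is in plain C++. This is a sketch of the idea only, not what any particular shader compiler emits, and `doFoo`, `doBar`, and `branchless` are hypothetical stand-ins.

```cpp
// Stand-ins for the two branch bodies.
float doFoo(float x) { return x * 2.0f; }
float doBar(float x) { return x + 100.0f; }

// Equivalent to: if (thing) return doFoo(x); else return doBar(x);
// but with no jump. Both results are computed and one is discarded.
float branchless(bool thing, float x) {
    float foo = doFoo(x);                   // always computed
    float bar = doBar(x);                   // always computed
    float mask = static_cast<float>(thing); // 1.0 if true, 0.0 if false
    return mask * foo + (1.0f - mask) * bar;
}
```

The cost is that you always pay for both sides of the conditional, which is fine when the bodies are small and painful when they are not.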

I believe that SL 3 is Turing Complete. From a quick search it allows arbitrary iteration, and you can do branching in it (even if the branching is done in the way you mention). I have no word on whether you’d want to take advantage of its Turing Complete features or not, but I think it is.

Also of note is that I believe that SPIR-V, Vulkan’s new compute shader, is Turing Complete. Or, rather, it supports compiling a subset of C++ to SPIR-V, which can then be run as a shader.

Actually, I’m fairly sure that modern OpenGL shaders are (theoretically) Turing complete.

For example, it is possible to implement Conway’s Game of Life, which is Turing complete, exclusively in a pixel shader. Therefore, the pixel shader has to be Turing complete as well. But that’s cheating, because you still have to rely on the CPU to issue draw commands.

But apart from that, you can in fact loop over variables defined at run time, you can have branches (though with limitations, as pointed out by Shamus), and I believe you can also have recursion (Basically a way of implementing loops by having functions call themselves). That’s enough to be Turing complete, given little things like infinite time and memory space of course :)

I haven’t tried writing recursive code in shaders, but I know it’s not allowed in OpenCL. And not in GLSL either according to this. Not sure about HLSL or the other shading languages, but I’m guessing not.

Yes, I think you’re right.

But you could probably fake it using an array as your call stack and an index into the array as your stack pointer… I think.

As someone with some formal learning (but way less experience programming on GPUs) this analysis seems correct. The two things you need for a language/machine to be Turing complete are branching and loops/recursion.

For conditional statements, depending on how the GPU “decides which value to use” it might have some limited branching ability, but without the ability to loop indefinitely, it’s not Turing complete. (I’m assuming the GPU also can’t run recursive functions. If that’s wrong, then the analysis changes).

True, however you can run the same shader an arbitrary number of times, mutating the dataset each time – eg Game Of Life simulations.

Which is a pretty good description of the actual Turing Machine.

Is it Turing Complete if it needs an external device to ‘crank the handle’?

If it needs the CPU to initiate each frame, it’s not Turing complete by itself. (but the whole system is)
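A sketch of that split, in plain C++ rather than real shader code: `lifeCell` plays the part of the shader, working out one cell from a fixed 3x3 neighborhood with loops whose bounds are known at compile time, while `runLife` plays the CPU cranking the handle. Both names are invented for the example, and cells off the edge of the grid are treated as dead.

```cpp
#include <vector>

using Grid = std::vector<std::vector<int>>;

// The "shader": computes ONE cell's next state. Its loops always run exactly
// 3 x 3 times, so a compiler could unroll them completely.
int lifeCell(const Grid& g, int row, int col) {
    int rows = static_cast<int>(g.size());
    int cols = static_cast<int>(g[0].size());
    int neighbors = 0;
    for (int dr = -1; dr <= 1; ++dr) {
        for (int dc = -1; dc <= 1; ++dc) {
            if (dr == 0 && dc == 0) continue;  // skip the cell itself
            int r = row + dr, c = col + dc;
            if (r >= 0 && r < rows && c >= 0 && c < cols) neighbors += g[r][c];
        }
    }
    if (g[row][col] == 1) return (neighbors == 2 || neighbors == 3) ? 1 : 0;
    return neighbors == 3 ? 1 : 0;
}

// The "CPU": decides how many generations to run and kicks off each pass.
Grid runLife(Grid g, int steps) {
    for (int s = 0; s < steps; ++s) {
        Grid next = g;
        for (int r = 0; r < static_cast<int>(g.size()); ++r)
            for (int c = 0; c < static_cast<int>(g[r].size()); ++c)
                next[r][c] = lifeCell(g, r, c);
        g = next;
    }
    return g;
}
```

The open-ended part (how many steps to run) lives entirely in `runLife`. The per-cell function never needs a loop it can't bound in advance.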

Of course, any system involving the cpu is going to be Turing complete.

In that case, can you not say any system involving reality is Turing complete?

I’m not sure I’d call Minecraft’s redstone turing complete. You can build turing complete things with it, sure, but “redstone circuits” is about as broad a descriptor as “electronic circuits”. And I wouldn’t say that a transistor is turing complete either. Or a logic gate. It’s only turing complete when you put many, many many of these things together.

I’m always puzzled by what “order of magnitude” means for a binary number system used in computers. Does it mean twice as good/bad? Or 8 times? Or 16, 32, 64? Or maybe even 1024?

Usually twice

I believe it just means the numbers are all shifted over one decimal place.

https://en.wikipedia.org/wiki/Order_of_magnitude#Non-decimal_orders_of_magnitude

One binary place. :P

An order of magnitude usually refers to adding a digit. In the classic way it was first used. 10 is an order of magnitude more than 1. 100 is an order of magnitude more than 10 etc etc

This can be complicated by marketing people co opting the word because it can sound impressive, and using it for big improvements but not that big.

Speaking of marketing people co opting terms, ever since reading the commentary on this Irregular Webcomic, I find great amusement in marketing people using “quantum leap” to describe the improvements in their products.

It’s a Quantum Leap in efficacy!

I know the definition of the order of magnitude. But I have no clue what it means in computers because I have no idea what scale is used. Is it just the binary bit, in which case it is 2? Or is the byte considered the basic unit, in which case it is 8? Or is it a number of bytes, in which case it could be 16, 32 or 64? Or is it going by the scale data is going by for files, in which case it would be 1024? So what does it mean if one memory chip is an order of magnitude better than another?

And that’s not even going into the whole thing of stuff like graphics cards that use both the binary and decimal system for their memory and their speed.

Oh yeah, quantum leap. That one is even worse.

Saying something is an order of magnitude better than another thing is meaningless unless you specify how you define better. Aside from that, if someone with a technical background is saying it, it probably means 10 times. Computers may be binary, but people are used to thinking in decimal and converting numbers to different bases in speech is a pain (is 0x10 said “ten base 16” or “sixteen”?)

Usually in programming we’re talking magnitude as going up or down powers of two. Not sure if that’s intended or not but typically what you get if you use a technique said to be orders of magnitude more or less complex.

>> [3] Let It Go.

The code glows white on the phosphors tonight,

Not a traceback to be seen.

A function in isolation,

and it just might compile clean.

Vectors transforming like a swirling storm outside,

Couldn’t keep it scaled, heaven knows I tried.

We need more rows than just these three,

The matrix, it has to be 4D!

Rotate, rescale, what do you know?

Well, now they know!

The code never bothered me anyway.

**applause**

Very good, even follows the beat well.

I’m kind of impressed with how the guy that did the hands of that guy in Doom 3 only created the index finger separately for the typing animation, the rest of the hand is just a blob, but the normal map makes it look like the other fingers are modeled as well. Pretty impressive.

It’s also adequately explained in-universe: the extremely poor lighting in the Mars base resulted in the rash of horrific industrial accidents which made it look like everybody’s fingers had each been broken four or five times

I have no idea whatsoever if that’s true or not. You could be making shit up, or that could be a joke in the actual game. Well played.

Thank you for writing this series of articles 10 years ago.

I remember learning about the legacy API in a college class (that was on the way out because it used said legacy API).

I have to think… the legacy API is still useful as a teaching tool. Having to learn all the different layers of complexity all at once is very difficult. I was very keen to learn where all those extra layers of complexity appeared in the timeline.

Of course, the modern API is the best/fastest way to do things. OpenGL 4.6 came out years ago at this point, but it’s still seeing lots of active use! It probably is still going to be used by people who want/have to do their own graphics for decades to come!

I’m not sure how big the leap from “modern” OpenGL to Vulkan is and… I’m frankly afraid to look into it.

Thanks for this description. I am new to graphics. I am learning OpenGL as my first library for 3d graphics.

I like reading the history of how it was created and used.