My column this week is a piece-by-piece breakdown of all the crazy bits of technology we need to make the Oculus Rift work. I’m a bit nervous about this. I strongly suspect that it’s something people are curious about, and I don’t think anyone else is doing these plain-English descriptions right now. So there’s a demand for articles like this, but I’m not sure I’m the best guy to do them. I didn’t even understand chromatic aberration until Michael Goodfellow explained it to me a week ago. I’ve read a lot about the hardware in the last couple of weeks, but I could still be missing something.

Still, there’s my take on it. It’s a complicated little gizmo.

A Star is Born

Remember the superhero MMO from 2009? Neither does anyone else. It was dumb. So dumb I was compelled to write this.

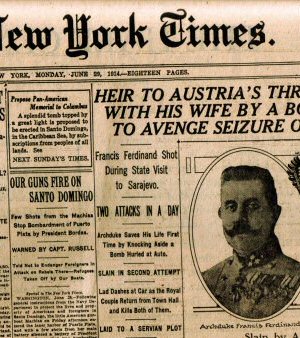

Internet News is All Wrong

Why is internet news so bad, why do people prefer celebrity fluff, and how could it be made better?

Spec Ops: The Line

A videogame that judges its audience, criticizes its genre, and hates its premise. How did this thing get made?

The Best of 2019

I called 2019 "The Year of corporate Dystopia". Here is a list of the games I thought were interesting or worth talking about that year.

Best. Plot Twist. Ever.

Few people remember BioWare's Jade Empire, but it had a unique setting and a really well-executed plot twist.

Twenty Sided

Well it was a pretty good read in any case. It’s always interesting to read about the issues involved in this stuff.

I’d try to find stuff you missed, but I’m even less knowledgeable about this sort of thing.

And Shamus, you do a really good job of translating “really technical stuff” into complete layman’s terms.

Good job.

I never even thought of the shader correcting for aberration, that is a lot of processing to do.

Still, in a way it’s early days yet for this generation and it’s already pretty great.

Well, as long as you know what the aberration of the lens is for each of the three wavelengths of light your monitor is putting out, it’s as simple as splitting the image by color and running your three transforms.

I’m not sure whether this takes 3x the processing power or not.
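Here is a minimal sketch of the “split by color, three transforms” idea, assuming a simple one-term radial distortion model with a different made-up coefficient per channel (real values would have to be measured from the actual lens, and the sign depends on the optics). For every output pixel it looks up the source image at three slightly different positions, one per channel. Nothing here is the Oculus SDK; the names are hypothetical.

```python
import math

# Placeholder coefficients, one per colour channel (R, G, B). These numbers are
# made up for illustration; real values would come from measuring the lens.
K1_RGB = (0.20, 0.22, 0.24)


def radial_scale(x, y, k1):
    """One-term radial model: how far a point at (x, y) is pushed out from the lens centre."""
    return 1.0 + k1 * (x * x + y * y)


def correct_chromatic(src, k1_rgb=K1_RGB):
    """Resample an RGB image so each channel gets its own radial distortion.

    src is a list of rows, each row a list of (r, g, b) tuples. The scene is
    rendered once; each output pixel just looks up three source positions.
    """
    h, w = len(src), len(src[0])
    out = []
    for py in range(h):
        row = []
        for px in range(w):
            # Pixel centre in normalised coordinates, (0, 0) at the lens centre.
            x = (px + 0.5) / w * 2 - 1
            y = (py + 0.5) / h * 2 - 1
            pixel = []
            for channel, k1 in enumerate(k1_rgb):
                s = radial_scale(x, y, k1)
                # Back to pixel indices; nearest-neighbour lookup keeps the sketch short.
                ix = math.floor((x * s + 1) / 2 * w)
                iy = math.floor((y * s + 1) / 2 * h)
                if 0 <= ix < w and 0 <= iy < h:
                    pixel.append(src[iy][ix][channel])
                else:
                    pixel.append(0)  # this channel samples outside the rendered image: black
            row.append(tuple(pixel))
        out.append(row)
    return out
```

In this sketch the extra work is three lookups per output pixel instead of one during the final resampling pass; the scene geometry itself is not rendered three times. A GPU would do the same thing in a fragment shader with filtered texture reads rather than nearest-neighbour lookups.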

TL;DR: “Big juju, much magic.”

Oh! I get it now!!!

– What is it?

– It’s a pair of interconnected monitors with special lenses that project the images close to your eyes, thus providing you with the illusion of a real 3D environment.

– What is it?

– It’s headgear that lets you view two similar images at the same time in a way that merges them into a single 3D image.

– What is it?

– It’s creating virtual reality around you with a specially designed pair of small monitors.

– What is it?

– It’s creating something similar to modern 3D theater screens, only with the picture inside the glasses rather than outside them.

– Oh, it’s a magic pair of glasses! Why didn’t you say so?

Heh. I should come clean. My comment is a quote from a book called “Illegal Aliens” by Nick Pollotta and Phil Foglio.

In the story, a street gang takes over an alien spaceship, and they capture the engineer. The engineer has a universal translator on his belt that not only translates language but also scans the listener’s brain and chooses words that their intelligence level indicates they’ll grasp. One of the gang members asks how the ship’s engine works, and the engineer launches into a whole treatise on hyperdrive mechanics, the fusion (or whatever) power core, etc., and the translator spits out: “Big juju, much magic.”

The engineer is stunned, thinking humans must be this ultra-advanced race that can pack all of the knowhow needed to construct a starship drive into four small words.

Later, one gang member asks why the ship’s interior is white. The engineer speaks at length about how chromatics have an effect on hyperdrive physics, including several tragic propulsion experiments that involved plaid. His translator says: “Ship no white, ship no fly fast.” Again, and for the wrong reasons, the engineer is awed by humanity. :)

Sounds like a book I need to read. Love silly sci-fi like that.

You’ll probably also love a weapon that’s called the STOP THAT gun. :)

Really appreciate the source of that quote; I was pretty sure I’d heard it quoted before, but I put it into Google… and this forum thread came up as the third result. It was going to drive me nuts until I scrolled down a bit and saw that you had explained it.

Then I’m glad I nerd-dumped! It’s kind of rare to find, I think, but I believe I saw it listed on Amazon used.

Hello, Cat.

That is a lot of shading. Considering that most 3D applications will do their own distortions before passing the entire thing back to the screen and thus the Oculus, is it possible that we are doing the same job twice here?

I would hope not; all the lens and sensor data should be dumped into OpenGL or DirectX, to be combined with the transforms the game is doing itself. Every millisecond counts! :D
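As a rough illustration of folding sensor data into the game’s own transforms: the game’s camera yaw (from mouse or stick) and the head yaw and pitch reported by the headset can be multiplied into a single rotation once per frame. `body_yaw`, `head_yaw` and `head_pitch` are hypothetical inputs, not Oculus SDK names. Only the pose folds in this way; radial lens distortion is a nonlinear warp of the image and cannot be expressed as one of these matrices, so it still needs its own pass.

```python
import math


def yaw_matrix(angle):
    """Rotation about the vertical (y) axis; angle in radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]


def pitch_matrix(angle):
    """Rotation about the sideways (x) axis; angle in radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [[1.0, 0.0, 0.0],
            [0.0, c, -s],
            [0.0, s, c]]


def matmul(a, b):
    """3x3 matrix product a @ b."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def transpose(m):
    return [list(row) for row in zip(*m)]


def view_rotation(body_yaw, head_yaw, head_pitch):
    """Combine the game's camera yaw with the headset's head orientation.

    All three inputs are hypothetical names used for illustration.
    """
    # Orientation of the eyes in the world: the body's facing, then the head on top of it.
    eyes = matmul(yaw_matrix(body_yaw),
                  matmul(yaw_matrix(head_yaw), pitch_matrix(head_pitch)))
    # The view matrix is the inverse of that; for a pure rotation, the inverse is the transpose.
    return transpose(eyes)
```

A real engine would use a 4×4 matrix that also carries head position, but the principle is the same: one combined matrix per eye per frame, handed to the graphics API with everything else.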

I was on the bandwagon of people who thought we’d figured this out but didn’t have a market for it. When I was a kid, like 10-12 years old I think (roughly 20 years ago), I played Dactyl Nightmare somewhere in Chicago with the heavy headset and the whole bit. You couldn’t keep turning in one direction because of all the cords. Which is why I thought we’d conquered this particular realm.

Now I’m curious what that system lacked in terms of technology, compared to this fancy gizmo.

Thanks for the run down.

I played that one, too. With glasses on under the helmet.

Ow.

As for the tech, I can’t say what drove those so-called VR “pods,” but a friend of mine who worked for a chain of arcades told me (a week AFTER it had happened) that it was more lucrative to dump a pair of the more recently updated models in the garbage and write them off than to keep them running (and this was, I think, 2003 or so).

I think there were one or two heavy-duty SGI machines driving each pod, and a high-speed network (well, high-speed for the time; IIRC they’re from the early 90s, so we’re talking about 100 Mbps) linking them all together for group play.

Tried it once, at the Trocadero in London, in (I think) 1991. Cool for the time, but very VERY far from anything resembling realistic rendering (almost no shading, relatively boxy models).

The movement problems are a major problem in robotics as well, because positions estimated from accelerometers and other sensor data accumulate error over time. A huge amount of research has gone into this problem, and the current solution is to make a sort of percentage-based guess at how much error has likely occurred, and to use data from other sensors to reduce the error from any single sensor.

I think VR is still a looong way from being practical, though at least now there are some dedicated people looking to get it working.
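The “percentage-based guess” described above is close in spirit to a complementary filter, a much simpler cousin of the Kalman filter. Below is a minimal one-axis sketch, assuming a gyro that drifts and an accelerometer that is noisy but always knows where “down” is. I’m not claiming this is what the Rift or any particular robot uses; `alpha` is the assumed weight given to the gyro.

```python
import math


def complementary_filter(samples, dt, alpha=0.98):
    """Fuse gyro and accelerometer readings into one tilt angle (radians).

    samples: iterable of (gyro_rate, accel_y, accel_z) tuples, where gyro_rate
    is in rad/s and accel_* are the gravity components the accelerometer sees.
    alpha: how much to trust the integrated gyro each step (0..1).
    """
    angle = 0.0
    estimates = []
    for gyro_rate, accel_y, accel_z in samples:
        # Gyro: smooth and responsive, but any bias accumulates into drift.
        gyro_angle = angle + gyro_rate * dt
        # Accelerometer: noisy, but gravity gives an absolute reference that never drifts.
        accel_angle = math.atan2(accel_y, accel_z)
        # Blend: mostly gyro, with a small steady pull toward the accelerometer.
        angle = alpha * gyro_angle + (1 - alpha) * accel_angle
        estimates.append(angle)
    return estimates


# A level, motionless sensor whose gyro has a 0.1 rad/s bias, sampled at 100 Hz for 10 s.
biased_gyro = [(0.1, 0.0, 1.0)] * 1000
estimates = complementary_filter(biased_gyro, dt=0.01)
print(f"raw gyro drift: {0.1 * 0.01 * 1000:.2f} rad, filtered: {estimates[-1]:.3f} rad")
```

Integrating the biased gyro alone would drift a full radian over those 10 seconds; the blended estimate settles at about 0.05 rad and stays there, because the accelerometer keeps pulling it back.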

I’m almost of the opinion that when it becomes a mainstream technology, it’ll either look like a set of swim goggles or interface with your standard ocular implants.

Implants are for chumps! What happens when you get malware in your brain? :P

Low prices! Peni5 EnlaRgement therapy breasts for men! All women zises accepted!!!1

How is that different from most reality TV?

>Most webcams have a bit of latency and nobody cares, but now every millisecond counts, because the bigger the gap between the time your head moves and the time when your eyeballs see the movement, the more likely you are to find it confusing or disorienting.

If you decided to burn an hour sitting through Carmack’s keynote at OC last weekend, you would have learned that they don’t actually do realtime correction for the optical tracking: the onboard IMU does all the 6DOF motion tracking in realtime, and the optical tracker chimes in occasionally to say “oh, 160 milliseconds ago you were off by 3.7mm along the x-axis, 0.8mm on the y-axis, -1.1mm on z” etc. etc. Obviously you get better data (smaller correction factors etc.) with lower latency, but it’s not nearly as important as latency in the graphics pipeline.

Also, Carmack draws a distinction between graphics FPS and display hardware refresh rate. 75FPS is probably good enough from the video card (though more FPS will reduce judder on fast head movements), but he’s said a couple of times that 120Hz low-persistence is going to be a bare minimum on the display, and that you would see a noticeable improvement going from 120Hz (doable) to 1000Hz (not gonna happen for decades). He described some kind of interlacing per-scanline HDR scheme that would let you emulate some features of a 1000Hz display on a 120Hz panel, which he seemed to think would be a big win, but which would have some odd visual artifacting under some circumstances.

Also, Abrash in his 2013 GDC presentation described one big problem when you’re interacting with stuff in the near-field: the vergence angle of your eyes changes, and your focus plane moves as you try to focus on something closer to you. But everything in an HMD is focused at infinity, and the HMD doesn’t track your vergence angle to change the projection accordingly.
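One way to picture the IMU-plus-late-optical-fix scheme described above, as a sketch: dead-reckon every sample, keep a short history of what you believed, and when a fix arrives saying where you really were 160 ms ago, compare it with the belief at that moment and shift everything by the difference. This is an illustration of the idea as the comment describes it, not Oculus’s actual code; `DelayedCorrector`, `imu_update` and `optical_fix` are made-up names, and a real tracker also has to handle orientation, not just position.

```python
from collections import deque


def _add(a, b):
    """Element-wise sum of two (x, y, z) tuples."""
    return tuple(p + q for p, q in zip(a, b))


class DelayedCorrector:
    """Dead-reckoned position, nudged by absolute fixes that arrive late.

    Hypothetical class for illustration only. Positions are (x, y, z) tuples
    in metres; timestamps are in milliseconds.
    """

    def __init__(self, history_len=1000):
        self.position = (0.0, 0.0, 0.0)
        # Recent (timestamp, believed position) pairs, so a late fix can be
        # compared with what we believed at that moment.
        self.history = deque(maxlen=history_len)

    def imu_update(self, t_ms, velocity, dt_s):
        """Fast path, every sample: integrate motion. Small errors pile up here."""
        self.position = _add(self.position, tuple(v * dt_s for v in velocity))
        self.history.append((t_ms, self.position))

    def optical_fix(self, t_ms, measured):
        """Slow path: 'at time t_ms you were really at measured'. Returns the error found."""
        _, believed = min(self.history, key=lambda entry: abs(entry[0] - t_ms))
        error = tuple(m - b for m, b in zip(measured, believed))
        # Apply the same offset to the current estimate and to the stored history,
        # so the next late fix is compared against corrected values.
        self.position = _add(self.position, error)
        self.history = deque(((t, _add(pos, error)) for t, pos in self.history),
                             maxlen=self.history.maxlen)
        return error
```

The point the comment makes falls out of the structure: the fast path never waits for the camera, so optical latency only delays the correction, not the picture.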

You can kinda solve the second problem with eye-tracking, but how do you solve the first? You can’t do it by changing the focus of the eyepiece optics, because that very obviously flattens out the whole visual field. Per-pixel focus is a major open question, and nobody really has any idea how to solve it.
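To put a number on the vergence problem: when both eyes fixate a point straight ahead at distance d, the angle between the lines of sight is 2·arctan(IPD / 2d). The sketch below assumes a typical interpupillary distance (IPD) of 64 mm; the function name is hypothetical.

```python
import math


def vergence_angle_deg(distance_m, ipd_m=0.064):
    """Angle between the two lines of sight when both eyes fixate a point straight ahead.

    ipd_m is the distance between the pupils; 64 mm is an assumed typical adult value.
    """
    half_angle = math.atan((ipd_m / 2) / distance_m)
    return math.degrees(2 * half_angle)


for d in (0.3, 1.0, 10.0):
    print(f"{d:5.1f} m -> {vergence_angle_deg(d):.2f} degrees")
```

At 30 cm the eyes converge by about 12°; at 10 m it is well under half a degree, which is why “focused at infinity” is a fine approximation for distant scenery and a poor one for things within arm’s reach.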

Also, also, to begin to match the acuity of the human vision system, you really need way more resolution. 10,000×10,000 panels are a start, and you could go higher.
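A crude way to see what “way more resolution” means is average pixels per degree. The sketch assumes roughly 1 arcminute of acuity (about 60 pixels per degree) and a hypothetical 100° horizontal field of view, and it ignores lens distortion entirely, so treat it as an order-of-magnitude check only.

```python
import math


def pixels_per_degree(panel_px, fov_deg):
    """Average angular pixel density across the field of view (ignores lens distortion)."""
    return panel_px / fov_deg


def pixels_needed(fov_deg, target_ppd=60):
    """Panel width needed to hit target_ppd; 60 px/degree is roughly 1 arcminute per pixel."""
    return math.ceil(fov_deg * target_ppd)


print(pixels_per_degree(960, 100))     # one eye's half of a 1920x1080 panel
print(pixels_per_degree(10_000, 100))  # the 10,000-pixel panel from the comment
print(pixels_needed(100))
```

By this measure, one eye’s half of a 1920×1080 panel gets under 10 pixels per degree, a 10,000-pixel-wide panel gets about 100, and roughly 6,000 pixels across would be needed just to reach the 1-arcminute figure.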

That and haptics, which are probably going to be flat-out impossible, barring really sophisticated robotics systems like in Rainbows End, nanotechnology magic, or just plugging right into the spinal cord.

>Also, Carmack draws a distinction between graphics FPS and display hardware refresh rate.

Aye, but I’m sure the readers of the Escapist who simply want to understand the problems conceptually are more likely to appreciate the idea of screen refreshing in terms of frames-per-second rather than another technological bottlenecking rate.

The distinction is valuable in that doing 120FPS instead of 60FPS requires about twice as much computational power (and the way GPU pricing works, a card that’s twice as fast is way more than twice as expensive), but a 120Hz panel isn’t much more expensive to drive than a 60Hz one. (Though raw interconnect bandwidth doubles, which is going to run into hard limits pretty soon: DisplayPort currently only goes up to 25 gigabits per second.)

Perhaps, but the underlying problem is equally explainable: “We need a thing to go twice as much as it does now, and that’s hard.” To a reader that’s not knowledgeable on technology problems who wishes to simply understand the big picture, which is presumably who this article is for, that’s mostly what’s necessary to express.

Maybe that’s a touch condescending to readers, but let’s be realistic about what we’re trying to accomplish here: explaining that this VR is not the same thing people assume VR is from what they’ve seen in media over the past few decades, and why they can’t have one yet.

Technically, they can have one, but it would be a waste of money. I mean, you can play a bunch of games on it already, like that guy who made that rig for playing Doom 3 in VR. But the experience, while novel and cool, is nowhere near what VR is capable of delivering. Plus the whole making-you-sick thing.

It may be a little nit-picky, and something that most of the Escapist readers (and many here) don’t need to know, but I’m glad that Samuel chimed in with it. It’s interesting to see the distinction, and learn a little more than we would have done otherwise. He’s also taken the time to link the source material, which is great for anyone who wants yet more info, even if it is more of a chore to understand than Shamus’ (as always) excellently worded summary.

Don’t underestimate your ability to contribute to our understanding, Shamus. Your ability to explain technical details in non-technical language is valuable; it’s basically the techie equivalent of why folks like Bill Nye are famous (though you’re probably actually more a Neil deGrasse Tyson, what with having actually worked in the field, albeit a while ago).

The analogy still works for Bill Nye, seeing as he was an engineer before he got into television. :p

Good work on the article there, Shamus. It’s always nice to see technical problems spelled out for your “regular” dinkus like me.

I was able to figure out chromatic aberration from this Irregular Webcomic annotation. But then again, David Morgan-Mar does work with optics at his day job…

“We’ve been showing it in movies and talking about it for ages”

You can always use a simple counterpoint: Star Trek was talking about cell phones in the 60s.

So we should have widespread commercially available VR somewhere in the 2030s.

(Tangentially: how long will “20XXs” sound more natural than just “XXs” when talking about this century?)

EDIT: Wireless everything sounds nice. But did you know that we are nearing the point of “air oversaturation”? Meaning there are only so many frequencies that our devices can (safely) use, and with every new device those become scarcer. Of course, we are still far from this, and we have a bunch of frequencies we should snatch back from old TV networks, but sooner or later we will have used up all the frequencies available.

We’re also working on directional radios, though. If you can get the antennas right, you could have your gadgets automatically track the other radio(s) you’re communicating with, broadening and narrowing the beams as necessary, to save on battery. (and also interference with/to other devices)

There’s also fusion power. If VR was like fusion power, we should have expected it within 30 years back in the 80s when we first started working on it, and now 30 years later we should still be confidently expecting it in 30 years. Fusion power has always been and will always be only 30 years from practical application that will Save Us All, amen.

(Not that I begrudge fusion power research. I think it’s cool and they probably discover a bunch of useful stuff in the process. It’s just been rather amusing how its ETA keeps receding as progress is made)

Technically, we do have fusion power, and not just in destructive form. You can make a fusion reactor. The problem is, it sucks up more power than we can harness from it with our current technology.

Umm, why do we need a screen and lenses in order to envelop your field of vision completely? I thought one of the features of OLED technology is that you can make flexible screens, which you could mold into any shape you like (within reason). You would still need to do a bunch of image distortion in order to cover a bent monitor successfully, but you would at least avoid one step of complexity, and in a complex rig like this every step counts.

And no, I don’t think I’ve discovered something new here; I’m sure that someone who worked on this tried bending the screens. What I would like to know is why that failed.

Disclaimer: I’m a complete layman at this. I work in software engineering, but I know little to nothing about the hardware side of things.

I think the issue, though, would be the angle at which the pixels on the screen are seen by the eye – as they’re closer, you’d be able to see the edges more easily if they’re at an angle, which could make them appear to be at the wrong angle.

TL;DR – you could end up seeing the edges of the pixels and ruining the illusion

From what I understood of Carmack’s presentation last year(?), a lot of the lenses jammed into these things are there to compensate for the individual positions, angles, etc. of people’s eyeballs. Prescription VR headsets would cut down on a lot of this. 3D printers, plus an auto-website to input your measurements? :D

I had the same idea, and also that since pixels are basically printed into the screen, you could cut down on the number of pixels you have to render (and maybe make some gains in power consumption/heat dissipation/persistence) by making them denser in the middle, where you need more (the resolution of the screen needs to be really high because the middle needs it), and letting them spread out toward the edges.

I think the justification is that the Rift started off as a Kickstarter project, and thus was originally designed to use off-the-shelf hardware that you can buy from reliable suppliers. Even with Facebook money, I don’t think they have the capital right now to be doing that kind of manufacturing R&D, especially since they would have to overhaul their SDK if they did it.

I strongly suspect that if VR takes off, the hardware used in the headsets has a LOT of room for optimization, which we will see presently.

But Shamoose, what about light latency? Why didn’t you talk about revolutionary Microsoft Faster Photons™?

On a similar note: I always enjoyed your minute-by-minute recaps of Carmack keynotes. Do you plan to do one for his new Oculus schtick as well?

VR is an impressive piece of technology, but I doubt it will do much good for gaming. First off, it’s pointless and weird for anything which isn’t first person. Then in first person, we basically only have shooters, and VR won’t change that. Does Quake in VR play better than Quake on a screen? I don’t think so. It doesn’t solve anything, but adds a layer of extra problems.

Simulating a VR world is a neat concept, but in reality, it doesn’t make for good games. Skyrim is the best we currently have, and honestly, I’m still playing Quake 3 from more than a decade ago, but Skyrim has worn out its welcome. These games live off novelty, and novelty wears off. Good game mechanics don’t. I don’t need VR to play Outwitters, Auro, Hoplite, Prismata, Quake, LoL or Starcraft.

Actually, it does wonders for first person, and can somewhat improve the rest of the genres (well, maybe not third person, because it practically kills all need for it). It adds something really important that has been lacking: peripheral vision. And not just the peripheral vision you get with a three-monitor setup, but one that lets you see above and below you as well. Furthermore, it adds one other thing that a few games have toyed with, with mixed results: leaning. Imagine Thief 1 and 2 where you can actually slowly peek around a corner, judging the lighting by actually looking at your body instead of a light gem.

These two seemingly simple things together will finally let you feel like you have an actual body in a video game, instead of various tricks that merely present you with a cool ghostly form (either through a third-person avatar or through various parts of your body being visible in first person if you look down).

As for the other genres, it will make UI much easier: moving the camera around in a strategy game, for example, or giving you more places for various HUD elements.

Of course, none of this is compatible with old games, but the possibilities for new games that provide greater immersion are huge. Sadly, though, the one type that should be geared for the highest immersion will probably be the last one to deliver it: RPGs with lots of talking, because immersion during conversation requires much more sophisticated AI than what we have used so far. True, Human Revolution and Alpha Protocol managed to do some interesting things with conversation mechanics, but their successes were mixed.

But, like I’ve said above, those are all at least 10 years in the future.

Although, if you have stalkerish tendencies, this already existing game will give you 105% immersion in the role of a stalker.

I have my doubts about RTS games benefiting from VR. That kind of precision control would be killer on your neck muscles.

Now, as for RPGs – how about human-only games? Living-room, internet-based, LARP-ing would be fun to do, even if you only had Wii remotes and basic Oculuses! :D

“Now, as for RPGs – how about human-only games? Living-room, internet-based, LARP-ing would be fun to do, even if you only had Wii remotes and basic Oculuses! :D”

Indeed. But I wouldn’t count on it, because the Wii U is perfect for stuff like that, and yet since its release there hasn’t been a single game that uses the GM/player feature.

The other thing VR does for games is make them much, much scarier. When I play Half-Life on screen, it’s nothing to walk on a narrow ledge, go over falls in the boat or run into giant bugs with the car. In VR, it’s terrifying.

Maybe for jump scares. Non-jump scares, like Amnesia, I think are already pretty darn good. I mean, VR would make them even better, but I still haven’t finished the game, so…let me catch up to the tech. :P

Well… but HL2 wasn’t trying to be scary in those parts. It’s just that stuff that we currently expect videogame protagonists to be able to do normally, is actually kinda terrifying if you try to do it in real life. And VR is closer to real life than it is to videogames.

Walking along narrow ledges, for instance — yes, I can *completely* believe that that’s unnervingly scary in VR, especially if you’re not in the “completely fine with arbitrary heights” group of people…

So, has anyone tried Portal with the Oculus? I bet that one is both terrifying and nauseating.

Well, not by itself, but if you add in a couple of gloves or such you could do some awesome martial arts or swordfighting games. They might be good exercise too. I can imagine Ultraman-type things; you fight the monsters hand to hand, and then you get your death ray thing by holding your hands like that, and . . .

Shamus, a few technical questions:

Now that speed is of the utmost priority, would it be better, instead of drawing only what is in the field of vision like we do today, to load the whole 360 degrees into memory and then have it on quick call for when you turn your head? This would increase the initial load time, but would it significantly reduce the requirements for quick updates from the GPU? And could this extra memory be stored in the headgear itself, since it is already mostly padding, and a chip or two of memory won’t make it much heavier?

Also, seeing how games as made today are too fast for realistic movement in VR, how significantly will slowing the movement down reduce the updates required from the GPU? Is it enough to offset the higher FPS for two screens? And how well can you use it to preload stuff depending on where the player is going?

Have I given you enough stuff to write a new column about it?

I can answer a few of these:

– Rendering a whole 360° surround view would require GPU capacity probably at least as high as achieving a steady 120 fps. It would also require much more video memory on top of that, whereas 120 fps doesn’t need any more memory than the same scene at 60 fps.

– Usually, the entire scene is loaded into memory anyway, because there is stuff going on off-screen, too. Loading times are therefore unaffected by the field of view at which the scene is rendered.

– The speed at which the player entity moves within a game has very little effect here, with the sole exception of streaming content, where assets have to be swapped out all the time; even then, you can expect the bottleneck to be the hard drive. (You can see this in many MMOs: when you log into a very busy place, you may see character models appearing one by one around you, while the rendering itself remains smooth throughout.)

“Rendering a whole 360° surround view would require GPU capacities probably at least as high as achieving a steady 120 fps.”

Oh no, much higher: you would need 4 “screens” horizontally plus one above and one below (assuming 90 degrees per “screen”).

For a 1920×1080 120Hz (3D, 60Hz×2) resolution, this would mean 1920 × 1080 × 6 × 120 × 24 = 35,831,808,000, that is, roughly 36 gigabits of data to push per second (just under 6 gigabits for each of the six views). The fastest link available today, DisplayPort 1.3, carries only about 25.9 gigabits per second of usable bandwidth, and HDMI is less than that.
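The raw-bandwidth arithmetic in this comment is easy to check: width × height × number of views × refresh rate × bits per pixel, using the same inputs (1920×1080, 120 Hz, 24 bits per pixel, six 90° views). The function name is hypothetical.

```python
def raw_bits_per_second(width, height, views, refresh_hz, bits_per_pixel=24):
    """Uncompressed video bandwidth: every pixel of every view, every refresh."""
    return width * height * views * refresh_hz * bits_per_pixel


one_view = raw_bits_per_second(1920, 1080, views=1, refresh_hz=120)
six_views = raw_bits_per_second(1920, 1080, views=6, refresh_hz=120)
print(f"one view:  {one_view / 1e9:.2f} Gbit/s")
print(f"six views: {six_views / 1e9:.2f} Gbit/s")
```

That works out to about 5.97 Gbit/s for a single view and about 35.83 Gbit/s for all six. It counts raw pixel data only and ignores blanking intervals and link encoding overhead, so real link requirements would be somewhat higher.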

And why so many virtual screens? Because if you did it with only one, you would have fisheye hell. Look up multiple-monitor gameplay on YouTube: see those triple widescreen videos? Notice the fisheye on the left and right sides?

Then you might ask, “then why not just render the stuff right in front of you?”.

Well, as Mephane points out, that is exactly what is done today.

The only reason you would want to render more than the viewable (or single screen area) is with multiple displays (like triple monitors) when you want all displays to be legible.

You would not want the player to glance at some space ship controls on the left and see it distorted and stretched for example.

With the rift on the other hand you actually do turn your head and this is noticed by the software/game.

I guess pre-rendering 1 frame (that may or may not need to be discarded if the player stops movement early) would have some use.

But the GPU/CPU spent on that frame could be used towards keeping framerates up anyway.

Nope, and for a different reason than you think.

You’ll turn your head in the future.

That means the 360×360 degree scene the GPU rendered now will be wrong by the time you turn your head – you’ll have moved the character, perhaps moved your head forward/back etc and things within the world have moved or changed.

So that whole 360x360deg view is now looking at a slightly different world from a slightly different place and so needs to be redrawn from your new vantage point, showing how the world has changed.

For that last part, I strongly suspect we aren’t going to see savings from slower-moving characters. I think the areas are going to become denser, so they will still have the same number of polys, just crammed into a 30 m² area instead of a 100 m² area. No more need to give that kitchen table a 5 m berth so the character can walk around it…

Well Shamus, you might be the right person (of many I hope) that brings attention to more “normal” stuff.

The OpenGL side of the Oculus VR SDK really deserves more attention at this stage.

As far as I know there is no Prototype III planned yet, it sounded more like the next hardware would be the consumer one (sometime next year?).

If that is the case, then they really should use some of that Facebook injection of money to get better SDKs out there; devs need about a year to get a game working well with VR, and with the Steam box, OpenGL is on the rise again.

I was surprised that the Oculus SDK did not have full OpenGL support from the start.

It is also possible (pure speculation, though) that Facebook was interested because of the SDK.

If Facebook were to make a great Oculus VR SDK (for devs) and Oculus VR “OS” (for consumers), then they might pull off a Google and make their own “Android OS”: they’d do the launch hardware as an example, make the specs easily available, and license the software. Soon you would have Oculus VR hardware popping up from a bunch of different makers (the Samsung Oculus, the LG Oculus and so on), all running the Oculus VR software.

I wonder if they’re holding out for glNext before they really put their weight behind support?

Ok, so you’ve done a “How Good Is It?” article (excellent), and a “How It Works?” article (also excellent).

Now I just hope you DON’T have plans for a “How Is It Built?” article… :-)

Shamus Young presents: Mighty complicated Machines!

“Hi, there, kids! I’m an Oculus Rift, but you can just call me Rifter, because our writers are crap. Anyhoo, say hello to Wendy, the Windows PC!”

People have been throwing up all over the sea for millennia, and humanity figured out why a long time ago (certainly before the past 20 years).

The reason for the motion sickness is, basically, an evolutionary defense mechanism. Since we are land creatures, there are very few occasions where our vestibular system and our eyes convey conflicting evidence about our movement. One of the most common is poisoning. Hence, vomiting.

In the real world, we don’t only sit in one place and look around; we move about. For some odd (and very interesting) reason, we find it easier to immerse ourselves in almost any type of action when we sit in front of a screen and have to rotate our view with a keyboard (a very, very awkward mechanism) than when we do the same thing with a VR set. With VR, immersion breaks much faster.

It’s like once we get the more refined input, we expect the output to be more sophisticated and refined, too.

This is a serious barrier for the application of VR that is not mentioned that much. I guess it’s because it doesn’t directly involve VR, and has more to do with cognitive psychology than with engineering.

OTOH, motion causing seasickness and freefall are things people get used to, to the point where many can adapt in and out of those things multiple times per minute and fare well. Or, contrariwise, cope with them for weeks at a time and recover in days. Adaptable little cusses we are.