This week Michael is talking about ray tracing. Ray tracing is an odd thing. It’s both the most primitive and the most advanced way to create lighting in a scene. It’s basically just a brute force solution. You simulate the light. It’s crazy expensive in terms of CPU power, so I was shocked at how fast his lighting system is. Apparently his program can render a single frame in just 2.4 seconds. Now, that’s too slow to actually use in a game. But I remember messing around with ray-traced scenes in the early 90’s, back when a single frame would take over a minute.

I haven’t thought about ray tracing in a while, and I guess the progress from 60 seconds to 2.4 seconds sounds about right-ish for the CPU speed increases we’ve seen since then. (Allowing for the fact that our screens are now larger so we have more pixels to contend with. Perhaps it’s even possible to render a 640×480 or 800×600 scene at interactive framerates.) But it was still shocking to be reminded of how far we’ve come.

Keep in mind that most graphics technology is about lighting, and most lighting is about trying to get as close to the results of ray-tracing without having to do ray-tracing. With ray-tracing, everything will cast shadows, and in a scene with lots of light sources you end up with many overlapping shadows around your feet, creating a dark area. In the 90’s, someone came up with the idea of simulating this by putting a fuzzy shadow-ish dark blob under the feet of characters. It simulated that shadow we’re used to seeing in the real world, thus getting us a tiny step closer to ray-traced appearance without needing to do ray-trace calculations.

Since then, we’ve come pretty darn close. When I talk about the rising cost of game development, I’m mostly talking about the cost of using the newer lighting models. Setting up the lighting in a Crysis-like game can be very complex. All the objects need many textures to describe their color, surface contours, shine, and a bunch of other stuff. We’ve come close to the ray-traced appearance, but we’re spending twenty times as much money to do it. Now all of a sudden I realize that just brute-force ray tracing would be perfectly feasible if it were possible to offload the work onto your graphics card. (I don’t think it is.)

And just to be clear, I’m just musing about how the technology has evolved. I’m not dreaming about a future when everything is ray traced. Ray tracing can’t give us cartoon shading or some of the other really impressive visual looks we’ve seen over the last few years. (Team Fortress 2, Super Mario Galaxy, Limbo.) Photorealism can go die in a fire.

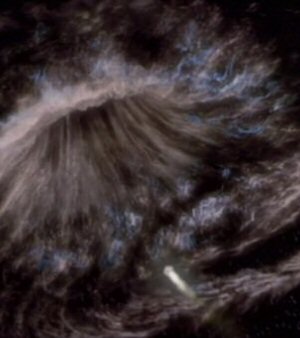

Anyway, Michael took the data from the Twenty Sided Minecraft server and put it into his program. Here is the result:

Link (YouTube)

Really neat. Read the whole article if you want to know the how & why.

Raytracing doesn’t eliminate the need for many different textures. It is only used for shadow and light; surface properties of objects, like color, reflectivity, glossiness or the details added by normal maps, are still needed as separate information, i.e. textures. Also, raytracing tends to create really ugly shadows (a sharp border between shaded and lit areas) unless you add some advanced (and costly) algorithms (treating the light source as an area or volume rather than a point), so it won’t replace the current system for shading (shadow maps + ambient occlusion) for a long time.

Raytracing does need to have some information about the surface of the object, though, otherwise true reflections wouldn’t work.

As for the shadows, if you have a ton of time to spend on one frame you can get realistic looking shadows by using an array of lights (“area light,” as POV-Ray refers to them). That being said, they are very slow compared to a single point light.

I don’t know about Ray Tracing as a standalone solution, so maybe this isn’t something native to the method, but at least in Maya ray tracing has a way to define the focus of the light rays, which will not only give you less-sharp shadows, but will have the soft shadows get sharper as the object gets close to the cast-surface – which is a LOT more realistic, although it increases render time greatly.

Soft shadows with raytracing usually require area lights (i.e. lights are objects with a certain size, no more point sources). The raytracer then determines how much of the light is visible from the point being lit, i.e. it effectively needs to render a complete image from that point of view, and the size of the light in that image determines how much light it sends to that point. The alternate method is to represent area lights with multiple light sources, so only those need to be tested.

The first method will also give you indirect lighting but is either horribly slow or gives grainy images (if the resolution of the secondary image is too low); the second is much faster but gives stepped shadows, since it still regards only discrete point sources.
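To make the second method concrete, here is a minimal sketch in Python. It assumes a square area light approximated by a small grid of point samples and a single spherical occluder; the function names and the scene are made up for illustration and don't come from any particular raytracer. The returned fraction is the "how much of the light is visible" value, and the coarse grid is exactly where the stepped shadows come from.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def segment_blocked(p, q, center, radius):
    """True if the straight segment from p to q passes through the sphere."""
    d = sub(q, p)
    f = sub(p, center)
    a = dot(d, d)
    b = 2.0 * dot(f, d)
    c = dot(f, f) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False  # the infinite line misses the sphere entirely
    root = math.sqrt(disc)
    t1 = (-b - root) / (2.0 * a)
    t2 = (-b + root) / (2.0 * a)
    # Blocked only if an intersection lies strictly between p and q.
    return (0.0 < t1 < 1.0) or (0.0 < t2 < 1.0)

def light_visibility(point, light_center, light_size, grid,
                     occluder_center, occluder_radius):
    """Fraction of a horizontal square light that is visible from `point`.

    The light is approximated by grid x grid point samples (an assumption
    of this sketch), one shadow ray per sample.
    """
    visible = 0
    for i in range(grid):
        for j in range(grid):
            # Sample at the center of each grid cell of the light.
            u = (i + 0.5) / grid - 0.5
            v = (j + 0.5) / grid - 0.5
            sample = (light_center[0] + u * light_size,
                      light_center[1],
                      light_center[2] + v * light_size)
            if not segment_blocked(point, sample,
                                   occluder_center, occluder_radius):
                visible += 1
    return visible / (grid * grid)
```

With a 4×4 grid there are only 17 possible brightness levels per light, which is why the penumbra looks banded until you raise the sample count (and the render time with it).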

This is why most raytracing software still uses shadowmaps and not the actual raytracing algorithm to create soft shadows: You render the scene from the point of view of every light source, project the visibility map on the geometry and blur the result. The downside is that you have no indirect lighting this way (i.e.: Looking through a lens or in a mirror is fine, but you can’t project an image of a light source or reflect light that way, that’s called caustics).

Shadowmaps also have the advantage that they don’t change unless the relative position of light and lighted geometry changes. So if you have fixed lights and most of the scenery doesn’t move, only perspective and maybe a few objects are moveable, you can just reuse most shadowmaps for every new frame.
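A heavily simplified sketch of the shadow-map idea, assuming a 2D world and a light that shines straight down, so the "view from the light" is just the tallest blocker in each column. The names and the box-shaped occluders are hypothetical, and the blur step is left out. The point is that the map is built once and then reused for every lookup as long as the light and the occluders stay put.

```python
def build_shadow_map(occluders, cells, cell_size):
    """Render the scene 'from the light': tallest blocker height per cell.

    occluders is a list of (x_min, x_max, height) boxes; the light is
    assumed to shine straight down, which keeps the projection trivial.
    """
    depth = [0.0] * cells  # 0.0 means nothing but ground in that cell
    for x_min, x_max, height in occluders:
        for i in range(cells):
            center = (i + 0.5) * cell_size
            if x_min <= center <= x_max:
                depth[i] = max(depth[i], height)
    return depth

def in_shadow(depth, cell_size, x, y, bias=1e-3):
    """A point is shadowed if something in its cell sits above it."""
    i = min(len(depth) - 1, max(0, int(x / cell_size)))
    # The small bias keeps a surface from shadowing itself.
    return y < depth[i] - bias
```

Usage is two steps: call `build_shadow_map` once for the static scene, then call `in_shadow` per shaded point on every frame without touching the map again.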

I’ve played around with POV-Ray a fair bit and can say that it’s definitely possible to have a scene that takes a considerable amount of time to run on modern computers. That being said, a newer system can render a photorealistic scene in less time than it would take for your 386 to render a reflective sphere on a checked plane.

As for its use in games, I think it might be possible if you keep the scene complexity down. I had a demo a while back showing off real-time raytracing. The scene wasn’t particularly complex and the resolution was quite low, but given that its target hardware was a Pentium II, that’s not at all surprising. I can’t help but wonder how well a system like that (with a liberal dash of multithreading, of course) would work on a 6-core Core i7.

Photorealism can go die in a fire?

Really?

That was pithier than saying “the self-defeating, entirely pointless, and often eye-popping ugly PURSUIT of photorealism”… can go die in a fire.

I’ve been more or less happy with graphics since 2004 and I’d much rather have developers spend their money on just about anything else besides the graphics.

Same here…I’d like to see some other innovation besides graphics…good gameplay and story is much more important and I rather play some older games (that still have pretty graphics, at least in my eyes) than waddling through the next cover based shooter…that is…I wouldn’t mind some updates on my favorite games, like for example Zone of the Enders, but not so much for the graphics as for new challenges/story…

So you’re claiming that photo-realism is impossible (“entirely pointless…PURSUIT”) for a video game?

Unless you can prove that, what makes it so hateful of a goal? I understand and agree that fun must be the primary goal of a game, but can you concede that photo-realism would enable other potentially fun sorts of games, while enhancing the fun of many others?

If you read the various things Shamus has written on the subject of problems with the video game industry, you’ll notice a trend. There’s DRM, which is pointless and insulting and vile. Then there are graphics. The huge increases in spending on graphics cause all sorts of gamer woes: boring rehashes of successful past games because spending that kind of money on a risky new idea is…risky; DRM to “protect” said investment; ridiculously high prices; the end of all hope for mankind.

Anyway, that’s what I’ve taken from reading this stuff for a few years.

Because if I want photorealism, there is a window literally right behind my monitor that I can use instead. I want my entertainment to be entertaining, not “realistic”.

I didn’t say it was impossible, I said it was pointless. It’s pointless because it doesn’t make a game better.

“can you concede that photo-realism would enable other potentially fun sorts of games, while enhancing the fun of many others?”

We’re 90% of the way there now, and games are less fun for me than they used to be.

I would be willing to concede that photorealism could enable fun new genres of games if someone would give an example to that effect. What sort of games are you thinking of that are not possible without truly photorealistic graphics?

Indeed.

I really prefer polished “old” graphics over the cutting edge. Compare HL2 Episode 2 to (any mainstream game made in the last ~18 months), for example. It’s obvious to me that when a developer has had an unusually long time (by industry standards) to use and become proficient with their technology, the results are much better, and perhaps not only in terms of the graphics. Although how the hell anyone can get comfy with Hammer I’ll never know.

It may just be because I’m binging on it lately, but WoW is one damn fine looking game, I believe because instead of focusing on cramming rendering tech features into the engine, they’re doing the best they can with what they’ve got.

There’s a great set of videos on YouTube (http://www.youtube.com/view_play_list?p=8A2AA06DBEBB012B) that’s a discussion between game developers Chris Crawford and Jason Rohrer. At one point, there’s a comment that “I think 3d is just a fad.”

*chuckle*

I’m pretty sure that the reason nVidia’s 3D system WORKS is because the engines can/are *already* processing in 3D, and relying on the graphics hardware API to handle the rendering for display. What that display looks like is pretty irrelevant to the game engine; it’s all on the graphics hardware. Which means pretty much that the game designers design once, and nVidia “3d-izes” it automatically. See http://developer.download.nvidia.com/whitepapers/2010/NVIDIA%203D%20Vision%20Automatic.pdf for more detail.

In short, it makes the “it’s a fad” as moot as game designers writing to particular color depths or monitor sizes.

Well, that doesn’t always work perfectly; if you’re doing anything clever with the depth-buffer, for example, I think you could probably fuck it up quite badly.

I’ve just treated myself to the Humble Indie Bundle, and although I’ve until now only played some of the demos (having no time for games right now (but apparently for writing comments here…)), they do wonderful things with graphics in Osmos for example. Not photorealistic (how can a game about Osmosis be photorealistic, anyway?), but waayy prettier than what was served in 2004. No fancy special effects, but good use of rather simple techniques, very good-looking. And World Of Goo … hey, World of Goo, need I say more? Essentially 2D, but I really love that style.

Case in point: You can make beautiful graphics with simple methods, if you dial down realism a bit, make a very cool new game, sell it for really small money and (afaik) make a living from it!

If large game companies took that lesson, invested just 10% less in graphics and then gave half of that to people who actually care about the rest of the game, the result should be overwhelming.

And ditch stupid DRM already, that’s a lot of money saved for them :)

Ars Technica likes RTRT. Here are some articles:

http://arstechnica.com/hardware/news/2009/07/800-tflop-real-time-ray-tracing-gpu-unveiled-not-for-gamers.ars

http://arstechnica.com/hardware/news/2007/10/real-time-ray-tracing-the-gpu-and-gpgpu.ars

http://arstechnica.com/hardware/news/2009/03/-if-i-were-to.ars

When you have more polygons on the screen than you have pixels, ray tracing would be just as fast. One ray isn’t much slower than one polygon, but for a long time we had 800×600 pixels (or so) and could go with a few hundred or few thousand polys, a tremendous saving. It’s just that ATI and Nvidia make cards with hundreds of specialized polygon processors, and most raytracing software runs just on one CPU at 3 GHz or so. If we threw the same amount of power at ray tracing, it would be completely feasible by now.

And the concept of pixel shaders should work perfectly well for ray tracing too, so all the cartoony graphics and such were still possible. And probably easier to program too, since you pretty much only need to write one single algorithm (the one that finds the intersections of rays and objects) for everything. Imagine how fast spheres are, and how expensive they are in polygon-world.
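Both points can be sketched in a few lines of Python. The intersection routine below is the standard quadratic for a ray against a sphere (assuming a unit-length direction and an origin outside the sphere), and `toon_shade` is a hypothetical stand-in for a "pixel shader" that runs on whatever the ray hit, here quantizing the diffuse term into flat bands for a cartoon look. None of this is taken from a specific engine.

```python
import math

def intersect_sphere(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest hit, or None on a miss.

    Assumes the ray starts outside the sphere.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(d * e for d, e in zip(direction, oc))  # half the usual 'b' term
    c = sum(e * e for e in oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None
    t = -b - math.sqrt(disc)
    return t if t > 0.0 else None

def toon_shade(normal, to_light, bands=3):
    """Quantize the Lambert (N dot L) term into a few flat brightness bands."""
    lambert = max(0.0, sum(n * l for n, l in zip(normal, to_light)))
    return math.ceil(lambert * bands) / bands
```

The sphere costs one square root per ray no matter how smooth it looks, whereas a polygon renderer needs more triangles the closer the camera gets.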

RPS made me stumble upon something:

http://www.youtube.com/watch?v=_CCZIBDt1uM

Voxels, but still.

I love it! He says that “rendering is just a side product of all that”, referring to the use of physics throughout!

Re raytracing on GPU hardware, this has gone around recently: http://users.softlab.ece.ntua.gr/~ttsiod/cudarenderer2.html I’m not 100% certain this renderer is a straight-up raytracer in the core (didn’t take the time to read the code) but he does do(/try to do) shadow raycasting.

While I can’t cite any particular examples, the 3D graphics program Poser can do the cel-shaded look with raytracing enabled (I’m fairly certain).

You just need to have the right setup of materials for the render engine.

Ray-Tracing is also useful for reflections. Mirrors are easy. Mirrors mirroring mirrors, or non-flat mirrors are not.

There was this really cool Ray-Tracing demo in one of the logo screens of Darwinia. It had a couple spinning reflective balls and was very cool to play around with.

Loved that game so much.

And now you’ve got the song that plays during that bootloader stuck in my head…. and in a few seconds my mp3 player will be actively pumping it into my ears.

Trash80/DMA_SC’s ‘Visitors from dreams’ if anyone else cares for a listen.

Non-flat mirrors are no more difficult than flat mirrors, and multiple mirrors is just a matter of allowing more bounces, with exponentially more rendering time.
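A quick way to see where the cost comes from is to count rays. The sketch below assumes a worst case where every hit spawns a fixed number of secondary rays (one for a pure mirror, two if a surface both reflects and refracts); `rays_per_pixel` is a made-up helper, not part of any renderer. The growth is only exponential once a hit spawns more than one ray.

```python
def rays_per_pixel(max_bounces, secondary_rays_per_hit):
    """Worst-case number of rays traced for one pixel.

    Counts the primary ray plus every secondary ray, assuming each hit
    spawns `secondary_rays_per_hit` new rays until the bounce limit.
    """
    total = 0
    rays_at_level = 1  # the primary ray from the camera
    for _ in range(max_bounces + 1):
        total += rays_at_level
        rays_at_level *= secondary_rays_per_hit
    return total
```

With four bounces, a chain of plain mirrors costs 5 rays per pixel, while surfaces that reflect and refract cost 31.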

One nice thing about ray tracing algorithms is that they are really easy to get running on multiple processors: each algorithm pass is done once per pixel, and no pixel depends on any other. That means that as you increase the number of cores in the computer, the speed of ray tracing increases almost in step.
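Here is a small Python sketch of why the split is so easy. `shade_pixel` is a placeholder for tracing one ray, and the image size is arbitrary; the only thing that matters is that each row can be computed without looking at any other row. It uses a thread pool to stay short, which shows the structure but, in standard CPython, won't actually speed up CPU-bound work; a process pool or a compiled language would be the real-world choice.

```python
from concurrent.futures import ThreadPoolExecutor

WIDTH, HEIGHT = 8, 4  # tiny image, just for illustration

def shade_pixel(x, y):
    """Hypothetical stand-in for tracing one ray; depends only on (x, y)."""
    return (x * 31 + y * 17) % 256

def render_row(y):
    return [shade_pixel(x, y) for x in range(WIDTH)]

def render_serial():
    return [render_row(y) for y in range(HEIGHT)]

def render_parallel(workers=4):
    # Rows are independent, so they can be handed out to workers in any
    # order; map() returns the results in the original row order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(render_row, range(HEIGHT)))
```

No locks and no shared state are needed, which is what makes this kind of work scale with core count.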

Another thing that helps ray-tracing head towards usability is the trend towards more complex scenes in games. The time complexity to render a scene using a rasterizer is O(n), where n is the number of polygons in the scene. For a ray tracer, it’s roughly O(log n) per ray on the same scene, assuming the polygons are stored in a spatial acceleration structure such as a BVH or kd-tree. So as game designers make their environments and models more and more complicated and detailed, it naturally erodes the starting disadvantage that ray tracing has.
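A back-of-the-envelope comparison, as a Python sketch. It assumes unit cost per polygon for the rasterizer and unit cost per tree level for each ray, with one ray per pixel and a logarithmic acceleration structure; real constant factors differ a lot between the two, so treat the numbers as showing the shape of the curves only.

```python
import math

def raster_cost(polygons):
    """Idealized rasterizer: touch every polygon once."""
    return polygons

def raytrace_cost(polygons, pixels):
    """Idealized ray tracer: one ray per pixel, log2(n) steps per ray."""
    return pixels * math.log2(polygons)

PIXELS = 800 * 600  # 480,000 rays per frame

for polygons in (1_000_000, 100_000_000):
    print(polygons, raster_cost(polygons), round(raytrace_cost(polygons, PIXELS)))
```

Under these assumptions the rasterizer is still ahead at a million polygons, and the ray tracer pulls ahead somewhere before a hundred million, because its cost barely moves as the scene grows.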

Also, some interesting related links:

A forum dedicated to real-time ray tracing development, including using GPUs to handle some of the computation.

A thread on that forum where someone is using Minecraft world data as input to their hybrid CPU/GPU ray tracer, with links to Youtube videos of the same.

It’s actually surprisingly hard to offload ray-tracing onto the GPU. Most GPU instruction sets are very badly adapted to doing one-at-a-time highly-conditional operations like, say, ray/surface intersections with big bundles of not-necessarily-related rays.

I’m not sure what SmallLuxGPU actually does, but it’s so fast, they’re using it for realtime-preview as well. It’s not raytracing as we know it but it’s not scanline rendering either. It somehow manages to produce a coarse preview image and then refine that iteratively, with more polygons on screen than you’ll see in any game in the foreseeable future.

Would not be suited for games, of course. As long as the camera’s moving, everything’s a blur.

http://vimeo.com/14290797

Wow, google ads. WoW gold on my 20 sided? I know that stuff is mostly automated, but jeez, didn’t expect to see that here. I don’t even know what to think.

Eh, for as much as Shamus talks about WoW it was bound to happen.

Pretty much every time I pop open an e-mail from Blizzard in my Gmail account I see an ad for WoW gold. Gold sellers practically come out of the woodwork.

Nvidia actually has a tech demo of a GPU based ray tracer that renders a scene with a shiny car. It gets pretty close to photorealistic in just a few seconds, which is impressive when you consider that it’s doing multiple reflections per ray, and the cars have some very complicated reflection areas (such as inside the headlights). If you have a 400 or 500 series Geforce card, you can download the demo at http://www.nvidia.com/object/cool_stuff.html#/demos/2116 (it sort of ran on my 275, but not usably, and it still lags somewhat on a newer card).

Ray tracing should be the epitome of SIMD techniques and therefore eminently suitable for GPU solutions. As far as I know there are a number of issues getting in the way of the process but GPU-based ray tracing is definitely being done. See nVidia’s OptiX (god I hate their insane capitalisation crap)

http://www.nvidia.com/object/optix.html

Are you going to post about every Let’s Code update? Because if you are, I might as well unsubscribe from his blog, so I don’t get duplicate posts like these.

I like to think I add something to the discussion with these posts. I mean, I banged out 500 words here, it’s not like this was just a link. He does a thing, I give my thoughts on it. It’s a way for me to talk shop even when I’m not working in the shop.

But. I dunno. It’s your RSS feed. Whatever.

From *his* blog, not yours, Shamus – I can kinda get the idea of wanting to read your viewpoint at the same time, though I have no problem with them being days apart.

OH GOD NO, NOT *A* DUPLICATE POST EVERY ONCE IN A WHILE! Your poor poor eyes and fingers.

Besides Nvidia’s OptiX (which is a raytracer, implemented on a GPU), there used to be an Intel engine for real-time raytracing. They used to run Quake 3 and 4 on it, at 127 fps, in 2007. They predicted the first raytracing games by 2009, but that seems to have been overly optimistic …

I can only find German news reports about it. Probably because these guys were involved:

http://graphics.cs.uni-sb.de/RTGames/

They also developed a prototype RT chip that will put “normal” 3D chips to shame.

Anyway: The Raytracing method isn’t necessarily better than what’s being done today. I used to play with RT software a lot, and while raytraced shadows are physically correct, shadowmaps tend to look more realistic, with them being fuzzy. The question is rather this: How many triangles and light sources do I have, how much transparency, partial reflections, area lights, caustics, indirect lighting (Radiosity) … all of this can multiply required rendering time by several orders of magnitude. If you reduce the specs so it will run with 50 fps, the result might still be based on physics, but won’t look as good as “regular” 3D with well-done fake effects.

I think this paper gives some good details:

http://graphics.cs.uni-saarland.de/fileadmin/cguds/papers/2008/georgiev_08_rtrt/RTRTIntuition.pdf

They don’t have any textures though, so … it’s all a bit crude right now, but if the complexity problem with rasterisation continues to grow as described in the paper, we might yet see raytraced games :)

I’ve no idea whether that makes life easier for game developers.

EDIT: Look at this!

http://www.youtube.com/watch?v=mtHDSG2wNho

Apparently it’s not just theory by now. I read another interview (in German again) where someone said it’d be much easier for the developer to do this stuff with raytracing. Well, I guess that might be marketing speak or not.

In the raytracing-focused comp graphics class I took back in ’90, I would have killed to be able to draw even a simple frame (i.e. a mirrored sphere, cube and cone on a plane with a few light sources at 800×600) in a minute. My code to do that took several hours on my 12MHz 286 PC (with 287 coprocessor!), or 45 minutes on the SGI workstation in the lab.

heehee!

In 95 I misused my mother’s 486 DX33 to render stuff with trueSpace (which sadly stopped existing a year ago…). Simpler scenes (stone cave, single light source, a glass ball with reflections, 640×480) got done in just a few minutes already, but I once blocked the computer for three days with one image (which was never finished, since I had to abort it). I think software made a lot of advances between 1990 and now, not just processors. Not just by utilising new hardware but also by optimising algorithms and implementations.

Just look at C64 graphics demos :)

Something is definitely wrong here… The extra cost of games isn’t because they are simulating raytrace but because they are providing the information to do something quite like it.

Even if raytrace would be feasible one would still need to write the proper shaders, the bump maps, the glossmaps.

I don’t really get what you’re trying to say.

Or maybe… perhaps we could all go to a Reyes micropolygon pipe. Wake me up when another (decent) implementation arises besides Pixar’s.

As far as I get it, the thing with raytracing is that transparency and reflections and such are a pain in the ass with scanline rendering (because you need to sort the z buffer and make sure all’s right and stuff), and with raytracing the algorithm handles all of that implicitly.

=> If you just have a few triangles with maybe Gouraud shading, that’s fine, but everything else costs extra, not just rendering time but also code. Also, it’s not as easy to get it physically right as with raytracing.

But the latter usually takes longer, and sometimes well-emulated physical behaviour (like adding textures for lens flares, and that type of stuff) might look better than what RT could come up with if you limit the iteration depth so it takes the same amount of time.

Have to agree here, real-time raytracing (or probably more accurately raycasting, given that most CGI is scanline micropolygons) is probably going to be a thing in the future since it’s simpler and more flexible, but CGI is crazy expensive for movies for the same reason graphics is expensive for games – artists have to make all those models and textures and animations still.

I think I see a common misconception here. Everybody seems to talk about “real-time” graphics and “raytracing” graphics. But in the scientific field of CG the distinction that is most commonly made is between so-called local illumination methods, part of which are the commonly used real-time engines (technically they are all rasterizers), and the global illumination methods, one of which is the raytracing technology.

Raytracing has the distinction of being probably the oldest global illumination technique and also the most prominent in media. That is also likely the reason why many high-end rendering suites choose to call their renderers raytracers, while in reality most of them use more advanced technologies like bidirectional path tracing, photon mapping or various Monte Carlo rendering algorithms. While most of those incorporate raytracing as part of their algorithm, they are not raytracing as it is defined and offer not only faster results but also more photorealistic ones (if that is something you like :-). The reason is that because of the main principle of raytracing it is very good at some things (like sharp, correct shadows, refraction and reflection) and not so good at others (e.g. caustics, indirect lighting, soft shadows, depth of field). It also depends on what the actual algorithm does once it has traced the ray to some surface. It could calculate (effectively fake) the color of the point or trace the ray somewhere else, depending on the level of sophistication of the algorithm. Photon mapping on the other hand can do more and faster, with the disadvantage of being randomized, thus being able to cause statistical anomalies, especially at small sample sizes. An example of photon mapping can be seen e.g. in any recent Pixar movie.

A local illumination algorithm, be it one of the modern rasterizers, or a voxel rendering engine (e.g. Outcast and Comanche 4), or a raycasting engine like in the old times (Wolfenstein 3-D), always has the disadvantage of being, well, local. It therefore can’t accurately simulate any global effects like shadows or mirrors. It therefore has to fake them, like placing a camera inside the mirror, rendering the world from its perspective and putting that picture on the mirror as a texture. And the major thing that makes them real-time is the fact that we have pretty sophisticated hardware running them. Running something modern on a CPU or even an older GPU would make it a slideshow. It would probably be possible to make just as good hardware for raytracing or some other global illumination algorithm, but for that the GPU manufacturers and the major graphic companies would have to agree on that. Kinda don’t see that happening in the near future.
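The "camera inside the mirror" trick boils down to reflecting the real camera's position across the mirror plane and rendering from there. A minimal Python sketch of that one step, assuming the mirror is an infinite plane given by a point on it and a unit-length normal (`reflect_point` is an illustrative name, not an engine API):

```python
def reflect_point(point, plane_point, plane_normal):
    """Mirror `point` across a plane.

    The plane is described by any point on it and a unit-length normal.
    """
    # Signed distance from the point to the plane along the normal.
    d = sum((p - q) * n for p, q, n in zip(point, plane_point, plane_normal))
    # Move the point twice that distance back through the plane.
    return tuple(p - 2.0 * d * n for p, n in zip(point, plane_normal))
```

For a mirror lying in the plane z = 0, a camera at (1, 2, 3) ends up at (1, 2, -3). A rasterizer has to render the whole scene a second time from that spot, once per mirror, whereas a ray tracer just follows the bounced ray.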