Hey man, I need a new toaster. You know all about kitchen stuff. Have any suggestions?

The KitchenAid4000 series just came out.

Are those good?

I have a KA4510, and it’s really good.

Does it have 4 slots?

Oh you want 4 slots? Well, the KA4510 XN goes up to four slots, but it only toasts one side.

Let’s pretend I want to toast both sides.

Then you probably don’t want a KitchenAid. Their 4000 series 4-slicers aren’t very good. You could get one of the old KA3510 XN or XNS for cheap these days, but they take like, twenty minutes to toast the bread.

Er. What else is there?

The Cuisinart 7000 series is comparable to the KA 4000 series. The 7420, 7520, and the 7420 all do four slices. Just don’t get any of the SIP models because they can’t do bagels.

SIP?

“Slim Insertion Port”. The units are small, but only regular sliced bread will fit. KA has the same thing on many of their units. Actually, if you want to do bagels with a KA you’ll need the ASI units.

Which is?

“Adaptable Slot Interface”. It just means it can handle bread of varying widths.

So I should get a Cuisinart ASI?

No no no. That’s nonsense. In Cuisinart the units all handle wide bread unless they are SIP.

My head hurts. So I want a Cuisinart 7000 series, but not a SIP, right?

Pretty much. Now, the 7000 series is actually two generations. You don’t want anything before the 7400, because the pre-7400 units actually took up two wall plugs. The 7100 and 7200 four-slotters were actually two dual-slot units strapped together, so they had two cords. Plus, they didn’t have a timer so you had to stand over them yourself.

All I want is to toast bread! Four slices! Both sides!

Then the C7520 T series is for you. You can pick one up at Wal-Mart for about $400 these days.

FOUR HUNDRED DOLLARS! I could buy an oven for that! I could just go out to eat every morning for that kind of money!

Ah, if you’re worried about price then the KitchenAid 4510 ES is a good pick. It’s only got three slots but it’s retailing for about $90.

I’m looking in the Wal-Mart flyer, but I don’t see that model.

Sure you do. Right here: The “Magitoast 7”. See how underneath it says “KA4510 Ex”? That means it’s the KitchenAid 4510 ES or the KitchenAid 4510 EP, just with a brand name slapped onto it.

…?

KitchenAid and Cuisinart don’t actually sell models directly. They make the inside parts of toasters, then other companies buy them, put the fancy shell on them, and give them a new brand name. But if you want to know what you’re getting, you have to look at which design the unit is based on.

Ah! I get it! Then why don’t I get this “TastyToast 2000”, which is like that 7520 you mentioned earlier. This one is only $50.

Er. That’s not the same thing. That’s a 7520 OS. The OS means “One Slice”. Total bargain unit for suckers. Same goes for the 6000 series and anything with a MRQ after it.

You know what? I’ve decided I don’t want toast anymore. I’m switching to breakfast cereal.

I’m shopping for a graphics card, and this is exactly what I’m going through, except I don’t have a know-it-all to help me out. I have never seen such rampant ineptitude at marketing products. I’m even savvy enough to know what I’m looking for, but the endless chipset numbers and sub-types and varying configurations make it impossible to get any sort of handle on the thing. It’s actually worse than my example above, since higher numbers aren’t always better. I’ve searched around, and I have yet to find a breakdown as clear as the conversation above. What is the difference between these two generations of cards? What does this suffix mean? Why am I seeing this chipset in one place for $119.99 and elsewhere for $299.99? Is this the same product with a huge markup, or is this second unit different in some way I can’t discern?

Features get added in the middle of numeric series. Like, an NVIDIA 7800 supports 3.0 pixel shaders, and earlier 7000 models don’t. (Or don’t list it among their features.) So it’s impossible to do any real comparison shopping until you’ve memorized all the feature sets for all the chipset numbers for both NVIDIA and ATI. Yeah, let me get right on that.

Game developers who keep cranking up the system specs are killing themselves. They’re making sure that their only customers are people who are willing to wade through this idiocy, fork over hundreds of bucks, and then muck about inside of their computers to do the upgrade. You shouldn’t need to be Seth Godin to realize most people would rather drop that same $400 on a console and have done with it. In fact, it’s pretty clear that this is exactly what people are doing by the millions.

The main advantage of the PC as a gaming platform was its sheer ubiquity. But while PCs are probably more common than televisions, PCs which are equipped with the latest hardware are pretty rare, and graphics card manufacturers seem to be doing their level best to keep it that way.

This is the second time this year I looked into upgrading, and both times it seemed like such a stupid, pointless hassle. Like our toaster-buying friend above, I know what I want, but it’s the seller’s job to tell me what they’ve got. Offering someone a Fargleblaster 9672 XTQ is stupid and meaningless.

It really is a shame to watch this aggregate stupidity suck all of the fun out of this hobby. Buying other electronics is fun, but buying graphics hardware is homework. ATI and NVIDIA need to adopt a policy of sensible naming of product lines, fewer products, greater differences between products, and (most importantly) clearly delineated graphics generations, so that consumers can look at a product and know what it is without needing to read the long list of specs. In an ideal world, they shouldn’t even need to understand the meaning of things like DirectX 9.0c and 3.0 pixel shaders. They should know that X is better than Y, and buy accordingly.

Crash Dot Com

Back in 1999, I rode the dot-com bubble. Got rich. Worked hard. Went crazy. Turned poor. It was fun.

Why I Hated Resident Evil 4

Ever wonder how seemingly sane people can hate popular games? It can happen!

Bad and Wrong Music Lessons

A music lesson for people who know nothing about music, from someone who barely knows anything about music.

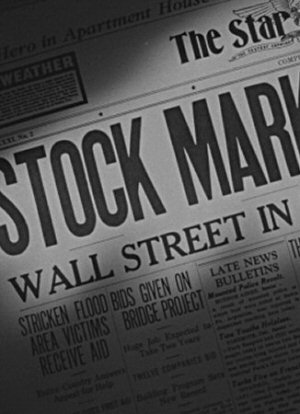

PC Hardware is Toast

This is why shopping for graphics cards is so stupid and miserable.

Starcraft 2: Rush Analysis

I write a program to simulate different strategies in Starcraft 2, to see how they compare.

T w e n t y S i d e d

AMEN!

100% in agreement. It’s not just graphics cards, either – pretty much any piece of PC hardware has the same problem, and it soon becomes pointless trying to figure it all out.

Not that long ago, I used to build my own ‘homebrew’ PCs. Now? PS3 and a Mac. Soooo much less hassle…

Haha, exactly, same here. Except I went the Xbox 360 route.

agreed. it took me DAYS to find the parts I wanted for my system, because I had to figure out what I actually wanted!

Marketing needs to have a long hard talk with their technical people and figure out a naming scheme for their products that doesn’t require you to research each and every one of them

I agree with you that graphics cards could be better marketed than they are. However, the “x is better than y” approach doesn’t work, because different graphics cards are better for different things. Some support more features than others but have lower specs, which means they can support more effects of games but at lower polycount/framerate/resolution. Which graphics card is better depends on what game you’re playing.

How come you know so much about toasters?

I heard that! The PC as a gaming platform is being killed by the very companies you’d think depend on it. NVIDIA and ATI made deals with Intel to put built in Graphics chips that don’t do games into most laptops. That’s a big reason why most people would have to upgrade to run any “real” PC game.

Meanwhile Microsoft became a console company. They seem to have forgotten that games are the big reason to get a PC over a Mac. As soon as Windows stops being a gaming platform, there is no reason to choose a PC over a Mac.

Yes, finding someone to get information on video cards is a royal pain. I have a bit of a clue with NVIDIA cards but I lost track of ATI cards a while ago. I now know what the differences between the various specs are and why a card with 256MB of VRAM may be better (and more expensive) than one with 512MB, but it was a long process to learn. Only getting worse too.

Best bet is going to a place like Tom’s Hardware and looking at their VGA charts, but even then you have to know what card you’re looking for.

See, this is why I have been pushing the “flex computing” meme. Rather than do desktop computing as one big box of components, you go modular. An external graphics card would be cheaper and easier to deal with.

Sorry for double-posting, but I forgot to mention: some hardware makers have handy choosers on their websites. You tell them what you want to do and your price range, and they recommend graphics cards.

For nVidia: http://www.nvidia.com/HelpMeChoose/index.aspx?qid=1

I couldn’t find anything like that for AMD’s graphics cards, but that’s okay, nVidia’s better anyway.

If it helps, I’m just flipping through the latest issue of Games For Windows and they’re singing the praises of Nvidia’s GeForce 8800 GT, which they claim delivers the performance of a current $400 graphics card for $250. Might be worth looking into.

I used to work for a company that did video slot machines. A couple of years ago they were settling on a video card for the platform, and at the time the nVidia 5200s were the best ‘reasonably priced’ cards.

They settled on a manufacturer. This manufacturer (eVGA I think, but I might be misremembering) had two models of 5200s, that were priced $10 apart. I think one was $70, the other was $80. If you looked at the retail boxes for them they were identical except for the model number. Literally 100% of the listed specs on the box and descriptive text were the same. You couldn’t find anything that would tell you why the one was better. But it turned out that the one that was $10 more expensive had a wider memory bus, or something, and was literally twice the performance, in our application.

Since then I haven’t bought an expensive graphics card, because frankly I don’t have the time to do the research to track down stuff like that.

Couldn’t agree with you more, Shamus! I just built a new system last summer and I looked at so many different parts and numbers that I’m no longer certain what I have anymore. I’ll tell you that I was THOROUGHLY confused when I couldn’t buy the card from the chipset manufacturer.

I’m just glad I have a friend who owns an IT business. When I need new hardware, I just tell him my budget and rely on his judgment to get me the best deal.

If I had to do that myself, I’d have given up on this stuff long ago (BTW, it gets a lot harder when you have to factor in Linux compatibility for all your hardware).

Oh, come on…

3D games are the single most complicated implementation of applied mathematics in the history of the world. You don’t want to make choosing hardware for it easy, now do you?

It used to be pretty easy, but I guess once the “hobby” became more widespread the marketing people started to get their grubby little paws into it and now you can’t tell one toaster from another.

http://www23.tomshardware.com/graphics_2007.html

Usually where I go when I’m lost in the deepest darkest parts of the GPU jungle. It’s got pretty graphs an’ all.

Amen, I say, Amen!

Why do I have to be a mechanic if I want to drive a car? And why will people look down on you because you just want to drive?

The thought of trying to decide which graphics card is right for me ends up being a fantastic logic bomb. I shut down pretty quickly.

Same problem here. I have no desire or will to research all the different varieties of graphics cards.

Fortunately, at this point in time, it seems like the 8800GT is overwhelmingly the favorite in terms of performance and price. The prices seem to run about $200 to $250 for these cards, which seems quite a bit cheaper to me than $400 to $600 for a pure gaming peripheral. It’s an option I’m definitely looking into, although I think you mentioned previously that you don’t have PCI-E, so that might be out of the question for you.

mmm from your 120$ vs 300$ example, I’d say you were looking at Nvidia 8800’s :-)

One is 320MB memory and has a few less pipelines (if I remember correctly), the other is 640MB memory and a bit better hardware-wise. If you want to go cheaper, get the 320MB one, it’s considered good value for money on a lot of hardware sites.

Also, someone already referred you to Tom’s Hardware VGA charts: they’re actually helpful, and if you look through their archives you’ll see explanations for most of the technologies present in graphics cards today.

Like kamagurka, I’ve a friend who’s a sysadmin. When I was building a machine last fall, I spent a couple hours in a Fry’s getting his advice via phone. I gave up tracking this stuff around 2002-03-ish. Can’t say I saved any money that way vs. buying a built system, but I mostly got better components and a nice, warm sense of self-satisfaction when I got it up and running a couple days later.

Yes, the 8800 are indeed nice, but don’t come in AGP. Thus I can’t use them. The highest I can go on the NVIDIA side is 7600. I haven’t sorted out ATI numbers well enough to compare them just yet.

Which just makes things even worse: Even with the Tom’s Hardware chart, I can’t sort out AGP / PCIe without going shopping.

Sigh.

I feel your pain. With this upgrade I ended up looking at Overclockers (after working out what chipset I wanted (GeForce 8800 GTS), and then finding out that the supposedly worse chipset (GeForce 8800 GT) was actually better in every possible way: cheaper, smaller, less power-hungry, runs cooler, faster, etc), trying to work out if the different manufacturers’ names with only £2 to separate them actually meant anything, and then getting the OcUK own-brand one (which was actually branded as Leadtek) as it was about the only one in stock.

Meh, it works, and it’s a massive jump from the aging GeForce 4 Ti4600 I used to have. I’m actually able to run my games with all the settings at max, without having to worry about dropping frames in graphically-intensive scenes (though the 2.4GHz quad-core intel might be helping a little).

I think the solution to your problem is geek bloggers. Tom’s Hardware is good. Two others I think highly of:

Tech Report

AnandTech

One thing that’s nice is that when they do a review of a new thing, they include a bunch of old things of the same type for comparison purposes. I particularly like Tech Report’s way of doing benchmarks.

Nvidia’s AGP offerings used to go higher, and now appear to be all out of production.

Tomshardware used to split up their AGP/PCIe charts (back in them 2004 days). Now the assumption is that everyone’s on PCIe.

AGP just doesn’t appear to be a big enough market anymore.

Anyway, there’s plenty of nerds reading this place that can give you pointers =p

*Shrugs* Maybe it’s just me, but I never really have much trouble shopping for video cards and actually find it quite fun. It usually only takes me a couple hours of research on Tom’s Hardware and shopping on Newegg to find what I want. Like, right now for my dream system, I want an Nvidia 8800GT. To replace the blown-up Radeon X700 in my older computer, I got an ATI HD2600Pro (AGP). It did take me a little bit to figure out why two different 8800GT’s from the same manufacturer were priced about $20 apart (one was overclocked, which I don’t need to pay the manufacturer to do for me) and what the difference between the HD2600Pro and HD2600XT was (memory speeds, mostly).

To arrive at the 8800GT, I just looked at the charts on Tom’s Hardware, read a few paragraphs that indicated Nvidia was dominating currently, then selected the fastest Nvidia card in my price range. That narrowed it down to 8800GTS vs. 8800GT. Reading one more article indicated that the GT was the newest card, designed for that mid-range graphics card market, and was better than the GTS.

For the HD2600, I just determined that Nvidia is no longer supporting AGP in its current generation, checked out ATI’s latest offerings (noting the AGP support), then just went for price point and position on Tom’s Hardware’s VGA charts. Since it was to replace a burnt-out card on a 4-year-old machine, I decided sub-$100. It was merely a choice between X1650, HD2600Pro, and HD2600XT. I chose the middle one as the best speed without going over budget.

I definitely do see the appeal of consoles, but being primarily an FPS gamer, I will not move to console until they start supporting mice as controllers on consoles. I know there’s a mouse peripheral for the PS3, but that console is lacking in the “3D shooters I want to play” market. I know there is a mouse adapter for the Xbox, but having used it, it’s still not as good as PC control interfaces.

Oh, that and Warcraft.

Wow… I’m forwarding this to my dad. He does computer set-up/ordering/fixing, and we went through something like this when I wanted to buy a new video card. Incidentally, I gave up and used an older one given to me by the wife of a guy who just died. That’s one way to get a card, anyway…

(Oh, and this is my first post, but I’ve been reading your game design posts and finished DM of the Rings. Nice blog!)

When I got my first PC a couple of years ago, I was blessed to have a tech savvy friend help me sort it out so I could do things like play the Halflife games. Otherwise my brain would have glazed over just thinking about the process.

Even coming this far, you are a better man than I, Shamus.

Poor Shamus

Yeah I did this a year ago, and I’m so glad I am done with it. Endless hours of reading, reviews and all!!!

But if you want real advice you’ll have to delve into a forum… try these, these sites are pretty good:

http://enthusiast.hardocp.com/

[h] has really good reviews and news and a good forum

http://www.overclockercafe.com/

overclockercafe has good links

And you’re still using an AGP motherboard? I feel really bad for you now, Shamus. Looks like you need a mobo upgrade too.

I believe they have a do-it-yourself guide (or links) on how to build a kick butt rig for really cheap. And don’t be afraid to ask them for help. Be very specific about your needs and wants and they will generally at least give you a link to where you should look.

Now if you’re like me, I decided that I would be on top of the game this time, and was prepared to spend 2 grand on a system, and I’m extremely happy about it in the end and everything can be turned up to max. I figure I should be safe for at least five years and then have to do it again. =(

Perhaps you should do what I do: develop irrational brand loyalties. That way you don’t have to do most of the research.

I didn’t feel like reinitializing all of my software, so I ended up sticking with an AGP motherboard, which does dramatically affect the graphics cards available.

As a number of people have noted, there are excellent review sites available, and a lot of processing power available.

Went skiing over vacation, could not play a lot of games, and have decided to take a vacation from them for a while.

But there is a heck of a lot of quality video power out there these days.

I browsed the above but still no one has posted this link.

http://www.tomshardware.com/2007/12/03/best_graphics_card/

The best graphics cards for the money, December 2007. Use that chart as your starting point – you should consider any card listed on that chart to generally be better than any other card for the same price, and should only consider wavering from that list if you do further research to make up your own mind.

It even has a separate section for AGP cards.

I’m with Doug, irrational brand loyalties ftw. I want to play on my computer, not build the stupid thing. When it comes to computer hardware I am a seriously mentally challenged individual.

That being said, I have a techie who loves this kind of stuff. When I go to purchase my next gamer comp I’ll be getting his input.

What, you didn’t seriously think I was going to make an actual decision on my own computer hardware… did you? :D

That link above also has a chart at the end that groups cards together. I believe Shamus has a 6200, so you can look at that chart and see which other cards by nVidia/ATi are about the same, worse, or better than what he currently has.

This means for example that if you can find an ATi x800 AGP card somewhere, for example ebay, then that may be a better buy for you than one of the other cards (which are current models only) earlier in the chart. The X800XT PE for AGP is an excellent choice – I recently sold my one second hand for $200 NZ.

The problem is that the hardware market wants it to be hard to understand and wants to put out 15 graphics cards a month. Because sadly they don’t make their money from reasonable consumers who upgrade every few years. They make their money from Joe I’mlivingrentfreeinmyparentsbasement and Timmy MyparentscanonlyshowmelovebybuyingeverythingIwant who both go out and buy the next best card every few months because it has 10 more diddledues per thingybobs than the last card they got.

It all is confusing and costs too much money. For the cost of one of these super PCs nowadays that can run these new games you can buy all three major platforms and a big screen TV to play them on. PC gaming just isn’t worth the hassle anymore.

Lanthanide totally nailed the link. That guide (updated monthly!) and tier chart breaks everything down to what you really need to know.

(Sorry, a “me too” post, but that chart is seriously good.)

I think it’s pretty clear what they’re trying to do…drive you to the higher priced graphics cards.

As you have discovered, no mere mortal can successfully navigate the venomous snakepit that is “mid-range” graphics cards, but any idiot can pick out a “high-end” card, because there are usually only 2 to choose from.

They are hoping that you will give up on your hunt for perfectly serviceable year-old technology and buy their brand new generation of products.

Best advice for anyone in this position: look for backdated articles, find out what the best card was a year ago, look for the EXACT model number, and buy it on newegg.com. The price will be extremely fair, and your order will be handled flawlessly. Also they take pictures of every item inside and outside the box from multiple angles, so you won’t buy the wrong one accidentally.

you mentioned a FargleBlaster. Did you not mean a Pan-galactic Gargle Blaster? If you did, then you made me very happy.

I usually start by finding out which price range I am in, and then research a little in various forums to find the ideal product. It seems that in each generation of cards, or within each price range, there is a card that’s the most cost-efficient. It doesn’t really matter if it has whatever shaders or what kind of RAM, as long as you know you’re getting the most bang for your buck.

Sometimes, you just have to go out and get a whole new kitchen and a top-of-the-line toaster to go with it.

I just bought a graphics card, and luckily, it wasn’t such an ordeal for me. But that’s just because my computer is 3 or 4 years old with a “stock” video card (so ANYTHING is an improvement), I really only want to play WoW (which isn’t very demanding compared to many games), and Futureshop had one on sale (an Nvidia 5500, I think) for $40 (a third the price of the next cheapest model). It should arrive any day now. :)

Ouch. AGP. Well, the 7800 should do ok, but ouch.

I know my hardware inside and out, and because of that, I love PC gaming. I took a $300 computer and turned it into the monster I’m using at home now. However, if I didn’t know so much, I would run to consoles in a heartbeat.

There’s no reason it should be this complicated, really.

Update for those who are following this sad waste of time:

I found a few cards which sounded like what I wanted.

http://www.newegg.com/Product/ProductReview.aspx?Item=N82E16814102711

http://www.newegg.com/Product/ProductReview.aspx?Item=N82E16814129094

These are the only two I’ve found that have what I need: DX10, AGP, and under $120. Sadly, neither one is a safe buy. The comments are filled with horror stories about getting the drivers working. I’m not going to plonk down that kind of money and then spend hours hacking away at config files trying to coax the thing to life.

Looks like the issue here is that when it comes to AGP, NVIDIA has nothing available and ATI has stuff available with dodgy drivers and no official support.

“Buying other electronics is fun, but buying graphics hardware is homework. ATI and NVIDIA need to adopt a policy of sensible naming of product lines, fewer products, greater differences between products, and (most importantly) clearly delineated graphics generations, so that consumers can look at a product and know what it is without needing to read the long list of specs. In an ideal world, they shouldn't even need to understand the meaning of things like DirectX 9.0c and 3.0 pixel shaders. They should know that X is better than Y, and buy accordingly.”

-Shamus

That entire sequence of statements is exactly what a console is, ha ha. I stepped into the world of the console a long time ago. Yes, you lose some things in the translation, but I have never had to wonder if my PS3 will play something. I have never had to wonder about the compatibility. I have never had to look into chipsets, serial numbers, or strange features.

I like my PC, I really do. I keep it up to date for what I want to play, which is mostly MMOs, when I need to but not for everything. Add to the long list of why a console is winning the fact that with your console the game will look great. You don’t have to scale it back, lower the resolution and turn off all the features. Yes, you can get a better than console look on a top of the line PC, but that is what we are arguing against. For the price of the awesome graphics card, you could get a console that would play most of the same games you want to play on your PC, and do it looking awesome. That beats having to buy an awesome Gfx card, a PCI Express motherboard, a quad core proc, and a cooling system that would cost you as much as having all 3 major consoles and some games to boot.

DX10? Are you using Vista? I just kind of assumed that you were running XP–must have been the implied age of the machine.

If you are not using Vista or planning to upgrade to it in the near future, there is no point in limiting yourself to a DX10-compatible card since XP can’t use it anyway. (Naturally, Vista itself is a demanding taskmaster on the hardware in any case…)

J: Facepalm

You’re right. I was just thinking “get DX10 so I don’t have to upgrade in a year”, but of course DX10 is Vista only.

Sigh.

Okay, so I guess I just need a DX9.0c card. Let’s see what I can find…

When I wanted to upgrade my graphics card, it took me and a friend 2 days to figure out I needed to build a new computer for it. The only things left from my old computer are the hard drive and processor. Then I had to spend an hour on the phone with Microsoft tech support to re-activate Windows cause it didn’t like my new hardware.

Tell me about it! Even buying an older card a few years ago I still had a nightmare trying to choose something.

Well, I’m not sure how long your expected lifespan of this card will be. A year? Two years? Longer? At this point, AGP support is a crapshoot since both Nvidia and AMD are not exactly making it a priority.

Without knowing the rest of your system specs and your expected hardware lifecycle, I’m not sure whether to suggest you cheap out, upgrade substantially, or just hold off for a new system altogether. It could be that swapping out your motherboard may be an option, but then I have to wonder if the OS is OEM or retail. :)

I’m expressing significant interest in this since I assembled 2 machines for myself back in August so I went though the whole product research song-and-dance myself. Since then, I’ve been keeping up so all this crap is relatively fresh in my head.

I knew I’d find it eventually!

I used a previous edition of this guide to end up with a very reasonably priced XFX GeForce 8600GT. So far, so good.

Oh, and the aforementioned guide splits up the reviews into PCIe and AGP.

Let’s see if I can get these’ere XHTML tags to work for me. ;)

I have a system with an ATI X300 and can tell you for sure that the drivers totally suck for it. I’ve had numerous blue screens on this system solely because of the graphics card. Let’s not even get into the occasional graphical corruption I get just within Windows.

I had very similar issues with the X600 that I used to use at my old job, using several different driver versions. ATI makes fine chipsets (*nods toward the Xbox 360 and Wii*) but their drivers are crap.

I see that not liking or even being remotely interested in FPS has saved me a load of hassle, thanks for adding yet another argument to the “why I don’t play FPS” pile.

And the worst part is: you went to a store, you talked with an “expert” seller, but you bought nothing.

As you said, if you were to buy a video-console, you would have one in your home (not a Wii, of course, due to the lack of units :D )

P.S.: try a friend that loves “toasters” and their non-acronyms (I mean, impossible combinations of letters)

P.S. 2: try a video-console, the first units of PS3 and XBOX360 got very hot, so you could both play videogames and toast a lot of bread.

P.S. 3: my graphics card is a HIS H165XTQT256GDDA-R X1650 XT IceQ Turbo AGP HDCP 256MB GDDR3. It’s not a joke. I bought it 9 months ago.

http://www.hisdigital.com/html/product_ov.php?id=276

Manufacturer: HIS

Chip: ATI Radeon X1650 XT

DX 9

Pixel Shader 3.0

That is, it fits everything you said (including the nonsense numbering).

A while ago, I got the Orange Box; of course, on coming home I found out the PC had too little graphics card RAM, yada yada. My father, thankfully, works in the computer industry, so he set about changing settings in the BIOS and trying various other graphics cards (there was one called the All-in-One 128, which is fine as a name, but it only had 32MB).

Anyway, the story has a happy ending: he had a more recent laptop and I played it on that instead.

OT: This guy says that “Silent Hill: Origins” is better than “Silent Hill 4: The Room”.

ATi is actually pretty easy to sort out. The number that matters is the third one from the right.

A 9800, an X800, and an X1800 are the same level of card (enthusiast) with different levels of DX9 support: 9.0 (SM 2.0), 9.0b (SM 2.0b), and 9.0c (SM 3.0) respectively. Thank Microsoft for ramping up the shader models in the middle of a DX9 build for that confusion. SM 3.0 should have been DX10.

Think of the X as “10”, the X1 as “11”, and all of a sudden it makes some degree of sense. Then they go to the “2” series and the model numbers go to hell again (since they had just left the 8, 9, 10, 11 progression), but the third from the right rule still applies. If it ends in “500” you’re getting a bargain card. If it ends in “300,” regardless of how many numbers come before it, you’re getting crap.

Hope that helps ya. Good luck.
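For anyone who would rather not do this in their head, the “third from the right” rule above can be sketched in a few lines of Python. This is only an illustration of the rule of thumb as stated in the comment, not anything official from ATI: the function name `decode_ati` and the tier table are made up for the example, and any digit or prefix the comment does not mention is reported as unknown rather than guessed.

```python
import re

# Tier labels exactly as the comment gives them; other digits are left "unknown".
TIER_BY_DIGIT = {"8": "enthusiast", "5": "bargain", "3": "crap"}


def decode_ati(model):
    """Apply the 'third digit from the right' rule of thumb to a model string.

    Hypothetical helper for illustration only. Accepts strings such as
    "9800", "X800", "X1800" or "HD2600" (letters first, then 3-4 digits).
    """
    match = re.fullmatch(r"([A-Z]*)(\d{3,4})", model.strip().upper())
    if match is None:
        raise ValueError(f"unrecognized model string: {model!r}")
    prefix, digits = match.groups()

    # The digit that matters is the third one counting from the right.
    tier = TIER_BY_DIGIT.get(digits[-3], "unknown")

    # Generation numbering as the comment reads it: 9xxx = 9, X = "10", X1 = "11".
    if prefix == "" and len(digits) == 4:
        generation = int(digits[0])
    elif prefix == "X" and len(digits) == 3:
        generation = 10
    elif prefix == "X" and len(digits) == 4 and digits[0] == "1":
        generation = 11
    else:
        generation = None  # e.g. the later "2" series, where the scheme changes

    return {"generation": generation, "tier": tier}


for name in ("9800", "X800", "X1800", "X300", "HD2600"):
    print(name, decode_ati(name))
```

The three “enthusiast” examples from the comment (9800, X800, X1800) all land in the same tier with generations 9, 10 and 11, while something like an HD2600 comes back with no generation and an unknown tier, since the comment gives no rule for it.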

He, he. I was about to comment “Amen!” but I see that Warstrike beat me to it. “Homework”, is right.

dx10 is pretty much a farce anyway. The few companies that do use it don’t seem to focus a lot of energy on it. Crysis runs better even in vista using the dx9 executables. The fact that the xbox360 is a dx9 machine pretty much guarantees that to be the standard for a couple years at least.

You should get a decent agp card now and save your cash for a pci-e system down the road. Though odds are good that a top end agp card will see you all the way to the death of gaming the way things are going. Let’s hope not though.

When I’m looking to do some upgrading, I generally hit up Extreme Tech.

They have a section on building your own computer, with differing price points, from the budget $800 machine, to the colossal seasonal titan of computing power.

Shamus.. I don’t know if you waded through the pages of Tom’s Hardware… but the AGP stuff is on page 5

http://www.tomshardware.com/2007/12/03/best_graphics_card/page5.html

Good luck!! I suggest searching out what card runs the game you really care about eye-candy for and start there.

I actually did buy a toaster (well, an underpowered toaster oven) that toasts one side of the bread at a time. It was like $20 at Sears. It’s a hoot.

I’ve actually found the following two Wikipedia pages to be very helpful with choosing a GPU:

Comparison of NVIDIA Graphics Processing Units

Comparison of ATI Graphics Processing Units

I can generally make sense out of NVIDIA’s at a glance (since the FX series the first number from the left is the series, and the second number from the left is level within the series, starting at 2, for low-end, to 8/9, for the best). The tags at the end are a bit more confusing, but I’ve found that LE is a bit of a lower end version of the card (sort of like how the GF3 Ti200 is a bit slower than the regular GF3), then the GS, GT, GTX, and Ultra models are progressively better. Thankfully, in my experience lower-end chips with nice-looking tags don’t generally perform better than higher-end chips without tags. For instance, my 6600GT might be clocked higher than a 6800, but the 6800 generally performs better than mine due to its 256-bit bus width.
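The NVIDIA scheme described above can be written down as a sort key. This is only a sketch of that one comment's reading of the names, not anything official: `nvidia_sort_key` and `SUFFIX_RANK` are hypothetical names, and placing an untagged card between LE and GS is my assumption (the comment only says LE is a lower-end version of the card and that GS, GT, GTX, and Ultra get progressively better). Tags not mentioned there, such as GTS, are rejected rather than guessed. The key is meant for comparing cards within one series.

```python
import re

# Assumed ordering; "" (no tag) sitting between LE and GS is a guess.
SUFFIX_RANK = {"LE": 0, "": 1, "GS": 2, "GT": 3, "GTX": 4, "ULTRA": 5}


def nvidia_sort_key(model: str) -> tuple:
    """Return (series, level, tag rank) for names like '6600GT' or '6800'."""
    match = re.fullmatch(r"(\d)(\d)\d{2}\s*([A-Za-z]*)", model.strip())
    if match is None:
        raise ValueError(f"unrecognized model name: {model!r}")
    series = int(match.group(1))  # first digit from the left
    level = int(match.group(2))   # second digit from the left
    tag = match.group(3).upper()
    if tag not in SUFFIX_RANK:
        raise ValueError(f"tag not covered by this sketch: {tag!r}")
    return (series, level, SUFFIX_RANK[tag])


cards = ["6600GT", "6800", "6600 LE"]
print(sorted(cards, key=nvidia_sort_key))
```

Because the level comes before the tag in the tuple, a plain 6800 sorts above a 6600GT, which matches the point that a nice-looking tag on a lower-end chip doesn't beat a higher-end chip without one.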

Zaghadka’s explanation for ATI cards helped, because before I had no idea what was going on with them. :P I have to admit to still being very confused about some things as well. For instance, I have no idea what the hell’s going on with the RADEON HDs, and the fact that they placed the X1050 (an R300-family device roughly comparable to the X550) between the R400 and R500 chipsets doesn’t help matters. I guess they were trying to put it “where it belongs,” but it would be far less confusing if they kept the different chipset families separate. For instance, give the R300s the X prefix, give the R400s the X1 prefix, give the R500s the X2 prefix, etc. It would do a better job separating the major chipset revisions and preventing confusion (like how the X1300 supports Shader Model 3.0 while the X1250 doesn’t).

I find the best site to go to is either tom’s hardware or anandtech. Google up either and it will be the first link. Tom’s has a very useful “best card for money” feature, which tells you the best card in each price range and is updated when cards come out. If you are looking for under 200 the new 3000 series ATI is pretty killer; if you are looking for around 250, someone before mentioned the 8800gt. Those would be my recommendations right now. You will have a card that will last a good 4-6 years.

I’ve upgraded my system recently. I bought everything new except, you guessed, a graphics card. I just didn’t have the time to do detective work and figure out what card is best for my budget.

I just read that you have agp. Well, that changes your choices, but the 7800gs is in the 140 price range nowadays, and I had that card previously and liked it a lot. There is a rumor of an ATI 3800 series agp version; that would be the king of the hill but prob way over 200.

My solution?

Hey forum-I-post-on, I want to play these games…

Can anyone tell me what to buy?

And before anyone says that doesn’t work, it does.

As you have found out, AGP is severely limiting as to what graphics card you can buy. I’ve got an ati radeon 9250 256mb AGP. Works with most games until now but not well. I recommend not upgrading and just driving that computer into the ground until you can afford getting a whole new computer.

It’ll only be games that’ll suffer; you’ll still be able to do most important things. Also, when you get a new computer, you should still be able to get those games you really wanted cheaply.

Of course, it’s your money.

Shamus – just in case you ever think “hmm, maybe I can get Vista” – it will most likely not work on your motherboard due to driver problems. I’ve got an Intel 865 chipset motherboard, which was one of the last ones before the switch to PCIe (i.e., the latest AGP board you’re likely to find), and there are no Vista audio drivers for it and no intention to ever release them. There are also no Intel storage manager drivers, so I cannot run the machine on RAID.

If you want to eventually get Dx10 and Vista, you need to bite the bullet and buy a whole new computer – it will be *far* less hassle that way.

Oddly, you don’t want a 4 slot toaster. For a really good toast you need a two slot toaster or a single slot toaster that can handle two slices at once.

To get a good toast on four slices at once you would need to draw enough electricity to trip some household breakers.

For toasters, you can’t really go past this:

http://en.amadana.com/product/tt111/tt111.html

Purely for entertainment reasons. Be careful though! “Cannot be used as an aquarium!”

Yeah, I know exactly how you feel as I myself want a new pc. Choosing the gpu seems like hell.

Pick a 8800 GT, GTX, GTS? What the heck is the difference?

Why is the $200 card faster than the $400 card, ain’t that completely ridiculous?

What the heck is pixel shader anyway, vertex shaders,…?

And if I decided to pick x, should I go with ASUS, another brand? What’s the difference anyway, one comes with game x and the other with game y?

Let alone how I can compare the ATI models to NVIDIA. Yeah, one has direct x 10.1, should I worry about that? Does it matter if one has more pipes?

Bottom line is, you have to check hardware sites that bother benchmarking them all and find out how well they perform. And follow their advice… And check them a lot, as new cards come out on a monthly basis.

I’m far from being a pc noob but having to pick a GPU is so bloody annoying it just makes me want to not bother at all. It’s about time they find a better naming system!

Amen, Shamus!

I see a few plugs for the 8800GT around here, which jibes with what I’ve read in a few magazines- a $400 card for $250. I’ll be buying one myself in a few months. In the meantime it’s simply depressing to watch the Crysis mentality destroy my favorite hobby for the last 10 years.

Well well… personally I think it’s kinda easy nowadays IF you don’t have to search AGP cards.

For PCIe there’s actually only two intelligent choices:

nVidia: 8800 GT, since it’s the only Chip atm made in 65m, technology, thus uses a lot less Power than all other cards while still having a good performance.

Ati: Radeon HD 3870. Only chip made in 55 nm technology.

Both allow you to play high end games on decent settings and will continue to do so for at least 8-10 months.

As for Manufacturers: nVidia -> eVGA

ATI: Not so sure, I did not follow it closely, but my guess would be Sapphire.

But then again that’s just my personal Opinion and my 20 years experience as a Hardcore Gamer speaking. ;)

Current candidate:

http://www.newegg.com/Product/Product.aspx?Item=N82E16814131043

I was prepared to spend almost double this, but there doesn’t seem to be any need to do so. I don’t mind if it’s a little slow, as long as it has the features that will let me play the games. I don’t mind dropping the res down to 640×480 or whatever. I’d rather drop resolutions than turn off features anyway.

I’ll probably get this later today as long as nobody jumps in with dire warnings.

These days I try to buy the cheapest things possible: good branding and all the features I know that I need or will eventually need. I bought a new laptop AND PC recently and am happy with them. I will need a second video card soon and will need to shop around * sigh *

I just upgraded from my POS video card to a Radeon x1050, which is probably even less exciting than that one. I just wanted something that would run Portal and make WoW look shiny and cool (and not crash every time I went to Tempest Keep.)

Installing it was a pain in the ass. I ended up having to download and install older drivers in order for it to work on my machine, a solution I found on the WoW forums of all places, but since then I’ve been very happy with it.

Sounds like you did your homework.. nice find.. enjoy!

That looks like a solid card. I’m amazed you can get a 512 meg card for that cheap.

I’ve never been let down by well-reviewed products on Newegg. I’ve even bought one or two things based solely on customer ratings.

AGP had quite a bit of legroom left in it when it was deprecated, so I wouldn’t feel too worried about having AGP.

Curaidh said:

“nVidia: 8800 GT, since it's the only Chip atm made in 65m, technology…”

Holy crap that’s a gigantic chip! (Yes I know it’s a typo, I still found it funny :))

Anyway Shamus, I’m afraid that AGP is at the end of its lifespan. If you don’t upgrade your motherboard now, you will before much longer, and that will just mean buying a new graphics card all over again. You should be able to find an inexpensive motherboard that fits your current processor without too much difficulty, and that’s the route I’d have to recommend. Good luck!

Your whole post shall be Quoted For Truth! It is *almost* the same with CPUs, however, and probably getting worse with dual and quad core (and then eight core etc.) CPUs being compared to each other. Although I am quite an advanced user, I always feel humiliated when I realize once again I have no idea whatsoever about all of the newest fancy hardware available. I just look up what’s out there in which price category when I am really about to buy a new machine, sigh.

Even as an experienced user, I’d rather much prefer a good old “look at the number – bigger is better” scheme, even if it doesn’t mean 2000 is twice as good as 1000, but definitely better. Wishful thinking, I suppose.

Fargleblaster 9672 XTQ? Oh NO. Don’t you know that the Fargleblaster 9672 XTQ Deluxe Gold PLUS Card is the one you really need? ;)

Shamus, you think you’ve got a graphic card. Prepare for fun, ATI has at least 3 chips with the X1650 number: X1650, X1650 pro and X1650 XT…

Which is the best? :D

Even worse: what the hell do these silly suffixes (pro, XT, nothing) stand for?

Enjoy yourself ;)

P.S.: as I said, I chose the X1650 XT (AGP) 9 months ago (I trusted a friend of mine for this choice)

General agreement here as well.

Game on!

As someone who recently upgraded their 3D card this summer, and ended up with the wrong one, having to sell that one and buy a new one again… I feel your pain.

Oh boy… I’m in the same boat and I can totally feel your pain. I’m on AGP too and I kinda gave up on that whole deal – I think I will just build a new computer instead :P

Thanks for the helpful hints everyone.

As Nilus pointed out, the marketplace is incomprehensible on purpose.

Next time you’re in the grocery store, check out the toothpaste aisle. You’ll see dozens of different kinds of toothpastes, “Whitening” and “Tartar-fighting” and “White Tartar Fighting” and on and on.

Then look closer. Most of the different kinds of toothpaste… [i]are the same brand.[/i]

Same stuff… in different bottles. And then they charge you more for it.

Whatever happened to the business model of “We’ll make a really good product at a really good price?”

The joke is on me after all… :P

My “cheap” $40 card uses an AGP interface, and of course, I don’t have the proper slot for it. This is not, as you might imagine, because I have only PCI-Express, but because I have only PCI slots (yes, my computer is practically ancient by PC standards, but it’s always been dependable, which I find to be a crapshoot [even in a good product line] and I don’t want to get a new computer until they’ve got most of the bugs worked out of Vista).

One of the places in town here has a $100 PCI card (one based on the ATI Radeon 9250 set), and if that doesn’t work, I’m going to send away for a GeForce 6200A PCI (which is from the next generation after the GeForce 5500-based one I got cheap anyway).

You’re right, though – it is absolutely ridiculous how the graphics card market works. I am an intelligent guy and fairly good with computers, and I am having trouble finding something that I have any confidence will work when I get it here (I am worrying that I am still missing something)… *sigh*

The forums at Anandtech are a great place for purchasing advice, I’ve found. A post in the video forum saying “Help – I need to find a good AGP video card for under $120” will likely get some helpful well-informed answers. A great set of forums for almost any kind of PC info.

You mention that the benchmark lists don’t show AGP/PCIe – so you have to shop. But you can do a fairly easy correlation by opening the benchmark listing in one window and something like a Newegg.com guided search for AGP 4x/8x, sorted by price-highest. Then just drop down the list to your price point and see what models (chipsets) are available. Then look and see where those models stand on the benchmark.
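The two-window correlation above is basically a lookup of store listings against a benchmark ranking. Here is a small sketch of that idea, assuming you have typed both lists in by hand. The function name `rank_affordable`, the dictionary keys, and the chipset names and prices in the usage example are all placeholders, not real products or real prices.

```python
def rank_affordable(benchmark_order, listings, budget):
    """Return listings within budget, best benchmark position first.

    benchmark_order: chipset names from the benchmark chart, fastest first.
    listings: dicts with "chipset" and "price", already filtered to AGP
              by the store's guided search.
    """
    position = {chip: i for i, chip in enumerate(benchmark_order)}
    candidates = [
        item for item in listings
        if item["price"] <= budget and item["chipset"] in position
    ]
    # A lower position in the chart means a faster chipset.
    return sorted(candidates, key=lambda item: position[item["chipset"]])


# Placeholder data for illustration only.
chart = ["chip_a", "chip_b", "chip_c", "chip_d"]
agp_listings = [
    {"chipset": "chip_d", "price": 60},
    {"chipset": "chip_a", "price": 250},
    {"chipset": "chip_b", "price": 115},
    {"chipset": "chip_c", "price": 90},
]
for item in rank_affordable(chart, agp_listings, budget=120):
    print(item["chipset"], item["price"])
```

Listings whose chipset is missing from the chart are dropped instead of guessed at, so a short result means more homework, not a bad card.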

But another question: have you considered upgrading your motherboard to a PCIe one? (Giving you an excuse to upgrade your processor too?) Since you have an AGP MB, I’m guessing your CPU is single core. You can get a dual core CPU for under $60 now. Add a cheap MB for $60 also, and you’re free to buy whatever vid card you like, including nvidia 8-series.

I’ve just come out the other side of the seemingly-endless research period and am now finding that the card that is for all intents and purposes perfect for me (NVidia’s 8800 GT) is incredibly hard to find, with shipments often selling out within hours. I may be limiting myself by sticking to EVGA (I’ve heard great things about their customer service and the lifetime guarantee is a must, with my horrible luck with any kind of technology) but this should not be so damn difficult. I just want to give them my money, why the hell won’t they facilitate this process? As a friend of mine pointed out, at least it’s better than the Wii situation, but honestly, not by much.

@Dana… buy a new computer with XP on it.

The first problem could be summarized by referring to my old Packard Bell or my eMachine. Both PCs were unusual in the industry for having motherboards with everything built in, and that was mostly it. If you wanted a new graphics card, it wasn’t a replacement but something put in the slot and installed on top. So computers that work like that… aren’t begging for the modification.

Secondly, when I got Dawn of War for Christmas in 2006 I was shocked and disappointed to discover that my top-of-the-line laptop couldn’t run it, and not because it wasn’t powerful enough or new enough. The graphics card simply wasn’t built for the stuff that the game was designed for, including but not limited to something called “AGP video card with Hardware Transform and Lighting”. What the hell is that?

I looked it up. Didn’t help. In August or Sept 2007 I purchased a laptop with an ATI Radeon Xpress 1100 Hypermemory. I’m not sure what that means, but I was told it makes nVidia GeForce 3 look like nothing. Which makes sense.

The point is that neither of the two laptops, the first I got for my business (and I had to give back after I was discharged, damn them) and the second I purchased in September, are gaming computers, yet games can be enjoyed and good graphics appreciated if the graphics requirements for a game are not too specific.

Huge thread. I don’t really have time to read it all so i apologize if this has been said before. Anyway:

The real problem here is that it is difficult to impossible to find accurate and complete specifications for modern video cards. (Or, indeed, modern anything relating to computers. Video cards are just the part that hurts the most.) Because of that i ended up with a video card that doesn’t accelerate video(?!) but is otherwise extremely powerful and expensive. Now, NVIDIA doesn’t make any video cards that accelerate video… oh no wait, that’s not true: their 8800 GT does (i think) but good luck finding any sort of clue on the specifications. (I guess i can’t blame them, i wouldn’t want to admit that either.) I also ended up with a monitor that has DVI input and can go up to 1920×1200 resolution, but only does 1080i HD and not 1080p(?!) for some reason. And it doesn’t have HDMI, which is basically the same as DVI but with some bad parts and audio built into it. And my motherboard claims it can operate fanlessly, but that is (to borrow from Penny Arcade) a precisely-worded lie.

And i’m a computer science major and i spent about a month (in my spare time) researching.

Real specifications for these things would be nice. Even the experts have to run half-way on intuition.

Man I’m glad to read about that, from the lack of information the manufacturers were giving I thought I was missing some kind of obvious guide somewhere when I was searching for a card recently :p. As a side note kinda, I found a thread on a forum that was an awesome guide to power supplies. I know that’s not the main focus, but trying to find a decent power supply suffers from a similar lack of information.

http://www.devhardware.com/forums/power-supply-units-98/how-to-choose-a-power-supply-94217.html

Shamus, if you are stuck on AGP your best bet is either the 7900GS or X1950 – the X1950 is faster but costs a bit more. Forget about DX10, as the only cards that can actually RUN a DX10 game are the 8800 series or 3870. I must disagree with your post, however, I follow all the models just fine :D

@Shapeshifter: All current nVidia cards accelerate video, and the entire 8xxx line except the 8800 series has hardware decoding for HD. The lowly 8400/8600 will decode HD video on hardware just fine.

I know it’s late, but this site is simple and invaluable.

http://www.gpureview.com

You can compare videocards side by side.

When I was building my gaming rig I had the ‘techie-bloke’ in work sit down with me to help me work out what I’d need.

It still took us four days, and many of our conversations on the matter were very similar to your ‘toast’ example, above.

In the end I just told him to email me with what *he* would buy – and I bought that.

I’m blessed with a friend who owns his own IT business, so I know next to nothing about hardware now. Everytime I need hardware I just call him up, tell him how much I’m willing to spend and what I need the part to do, and he figures it out for me.

My video card just died on me, and I’ll have to buy a new one. I got a chill down my spine just thinking about it..

Please, if you don’t hear from me in 2 weeks, wait a little longer.

I don’t think anyone really understands every number and detail with this sort of thing. Some people get some bits, others get other bits, but they all pretend to know everything just to look smarter than the guy next to them.

I used to build my own. Then the above mess happened, and the way I got my last PC was:

1.) Pick the games I care most about

2.) Find some systems I can afford

3.) Find benchmarks of those systems, playing those games

The one that did best overall in #3 I bought — happened to be a Gateway P-7811 FX (laptop), and I couldn’t be happier. I miss being able to understand the exact advantages of every component in my PC, but not so much I’ll actually get the associate’s degree I’d need to do it.
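The three steps above amount to scoring each system on the games you care about and taking the winner. A minimal sketch, assuming "did best overall" means the highest average frame rate, which is my reading and not something the comment specifies. The name `pick_system` and all system names, game names, and numbers in the usage example are placeholders.

```python
from statistics import mean


def pick_system(fps_by_system, games):
    """Return the system with the highest mean fps across the chosen games.

    fps_by_system: {system name: {game name: benchmarked fps}}
    Systems missing a benchmark for any chosen game are skipped.
    """
    scores = {
        system: mean(results[game] for game in games)
        for system, results in fps_by_system.items()
        if all(game in results for game in games)
    }
    if not scores:
        return None
    return max(scores, key=scores.get)


# Placeholder numbers for illustration only.
benchmarks = {
    "system_a": {"game_1": 30, "game_2": 60},
    "system_b": {"game_1": 45, "game_2": 50},
    "system_c": {"game_1": 90},  # no game_2 benchmark, so it is skipped
}
print(pick_system(benchmarks, ["game_1", "game_2"]))
```

If one game matters more than the others, a weighted average or a "worst game" minimum would be a reasonable swap for the plain mean.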

This just got re-linked so I thought I’d throw this in. TomsHardware.com is a decent review site for PC hardware, if you can get over their upgrade obsessions and the degree to which they seem to be in the pocket of component manufacturers. Most useful there is the monthly “Best Graphics Cards for the Money” feature, at the end of which there is always a comparison table of all the desktop graphics chipsets ever made by Nvidia and AMD/ATI. They’re grouped in tiers, and three tiers is the suggested threshold for upgrading; anything less is too similar. Here’s this month’s article. It gets updated every month for prices, and the chart at the end gets updated every time a new card comes out.

http://www.tomshardware.com/reviews/best-graphics-card,2118-7.html

Anyway, that’s not to disagree with Shamus or say that the above is any less true; I agree 100%. I just think this is a useful resource if you are shopping for a graphics card.

Homework indeed. I probably spent weeks sifting through reviews that compared it to other graphics cards and listed the frame-rates on different games such as Crysis or Left 4 Dead 2. Even after all that, pretty much the only thing I know now that I didn’t when I started was that the card I’m choosing will do what I want it to for about a hundred less than another card that will do the same a little better. Incidentally it is a Sapphire HD Radeon 5850.

Looking back on this now (after you linked it from your article on the Escapist, I’m sure you’ll get a fair share of new comments), it’s funny because to an extent, the two GPU manufacturers have done exactly what you wanted. nVidia only has about a half dozen chipsets right now, and they’re labeled by Prefix (general performance level) – Number (relative performance in level). The G 210 is weaker than the GT 220, which is weaker than the GTS 250, which is weaker than the GTX 260, and then the GTX 275, 280, and 295 are faster still in that order. AMD’s done the same thing, and in fact done away with prefixes and suffixes entirely (except the “HD” which precedes all of their cards). Higher numbers = better, period.

That’s only within the same generation, of course, but I don’t really think that’s unreasonable. When shopping for a new car, you don’t really consider anything but the latest model year unless you’re buying used, in which case you can expect to be in for a ton of homework, just like in computers or anything else. But for someone who just wants a shiny new graphics card, like a car, it’s as simple as “bigger number = better.”

agree, i just have a friend to whom i say, good card needed to play napoleon total war, and he points and i pay

i tried several times to figure the cards out and i simply gave up

Aww, nobody noted the 10-year anniversary of this classic post? I’d love to hear thoughts on how the situation has changed, if at all, since then. All I know is, 4 years ago I bought a cheap PC and had to drop a graphics card in it that cost almost as much as the PC did before I could play games on it, but it’s worked flawlessly or close to it on every game, current or not, I’ve tried to play since then.

It has and it hasn’t changed much since then…

This entire comedy routine could play out just as well here in 2019.

In fact, when you wrote your comment almost a year ago now, it was EVEN WORSE because of bitcoin miners, a huge graphics-card market that wasn’t using it for graphics. The miners competed with gamers, driving prices up and adding additional wrinkles in the shape of “this one is like 5% better for games and should theoretically have about the same MSRP, but complicated technical reasons make it way better *for mining*, so it’s actually twice as expensive on the market.” Fortunately that phenomenon (which had been ongoing since about 2015) collapsed in late 2018, so it’s back down to the base level of terrible now.

HOWEVER. While the situation is still this *complicated*, the *stakes* are lower. Instead of making night and day graphical differences like they might back in the aughts, all those card features you probably don’t understand enable game features you probably aren’t that concerned about unless you’re a full-fledged PC gaming hobbyist. And because the overall pace of development has slowed, you’ll probably only need to buy two or three new cards in the next decade to keep playing the latest games at roughly acceptable settings, instead of the half-dozen or so it might have taken to get through the aughts.

You know what blows my mind? I’ll tell you: What blows my mind is that we are twelve years past your original publishing of this and the satire is as relevant as ever. Good on you for writing this! I reference it at least once a quarter (which pains me) in discussions about the newest N versus A versus THIS card that is discontinued versus this OTHER card that just arrived….

It’s 2023 and it became a liiitle better, but still is a mess