Languages are usually described in terms of being “high level” or “low level”. This is usually presented as a tradeoff, and as a programmer you’re obliged to pick your poison.

High vs. Low Level Programming

If you’re not a programmer, then I need to make it clear that these two concepts are probably the opposite of what you’d expect. A high-level language sounds like something for advanced programmers, and a low-level language sounds like something for beginners. But in classic engineer thinking, these paradigms are named from the perspective of the machinery, not the people using them.

A low-level language is said to be “close to the metal”. Your code is involved with manipulating individual blocks of memory and worrying about processor cycles. It’s very fussy work and it takes a lot of code to get anything done, but when you’ve got it written you can be confident that it will be incredibly efficient.[1]

A high-level language allows you to express complex actions using very simple bits of easily-written code. It’s easy to write, but often wastes processor cycles and memory. How much? There are arguments all the time over whether the overhead for language X is significant or trivial.[2]

If you want to output the phrase “Hello World!” to the console, then here is how you do that using assembler, the lowest of the low-level languages:

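```nasm
section .data
    hello:     db 'Hello World!', 10  ; the message, followed by a newline (ASCII 10)
    helloLen:  equ $-hello            ; message length in bytes

section .text
    global _start

_start:
    mov eax, 4         ; system call 4 (sys_write on 32-bit Linux)
    mov ebx, 1         ; file descriptor 1: standard output
    mov ecx, hello     ; address of the message
    mov edx, helloLen  ; number of bytes to write
    int 80h            ; hand control to the kernel

    mov eax, 1         ; system call 1 (sys_exit)
    mov ebx, 0         ; exit code 0
    int 80h
```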

That’s a great big wall of inscrutable nonsense to perform a very simple task.

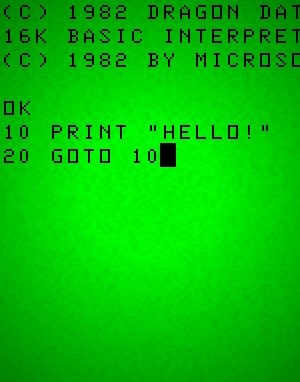

The BASIC programming language is extremely high-level and was a mainstay of computer education back in the 1980s. Here’s that same task implemented in BASIC:

```basic
100 PRINT "Hello World!"
```

That’s a pretty big difference in both size and readability. For reference, C++ falls somewhere between these two extremes. It’s considered a pretty low-level language by today’s standards. I know I’ve covered this topic before on the site, but for those who are foggy on the details, here is…

Yet Another Terrible Car Analogy™

You can imagine driving directions for a road trip from New York to L.A. The low-level version would tell you when to leave, what specific roads to take, when to stop for fuel, where to stop for food, and how fast to travel on each road to optimize fuel usage and synchronize with local traffic lights. A high-level version of the directions would be “Drive west until you hit the coast and then head south.”

The high-level directions are easy to write, easy to read, and unlikely to contain any errors. The low-level directions are time-consuming to write, difficult to follow, and prone to mistakes, but massively more efficient if they’re written properly.[3]

In computer science, this is usually portrayed as an either-or type deal. If you want to do things the easy way, then you have to abandon efficiency. If you want efficiency, it will come at the expense of more work. In C++ you can make your programs very fast and keep your memory usage low, but your code will be massive, dense, and difficult to maintain. In Java it’s easier to write and maintain the code, but you’ll waste a lot of memory and processor cycles.[4]

What sort of language you use depends a great deal on the type of problem you’re trying to solve. If you’re writing an operating system or device driver, then you’ll probably want to use something low-level. If you’re writing something fun and simple with lots of user interface elements, then you want a higher-level language. In my personal experience, interface code (dialog buttons, lists, scrolling text boxes, etc.) is pure torture to write in a low-level language.

The problem with games[5] is that you kind of need both. You want the guts of your rendering pipeline to be written as close to the metal as possible. On the other hand, a lot of your code is going to be dedicated to expressing the rules of the game: hitpoints, damage, inventory, etc. That code usually isn’t very costly[6] in terms of processor cycles. It doesn’t need to be expressed in low-level terms, and it’s often extra work to do so.

So Let’s Use Two Languages!

Developers have sometimes routed around this problem by using two languages. C++ will be used to make the costly stuff like rendering, physics, and audio. Then the gameplay stuff will be offloaded to a scripting language like UnrealScript, Papyrus, or Lua.[7] This allows the developer to use the harder language when speed counts and an easy language when it doesn’t, although it has the obvious drawback of having the game implemented in two different languages. Also, you can’t really shove all of your performance-critical functionality into a box like that. In a complex system, there’s not always a clear line between the “engine” and the “game”.

Sometimes you’ve got a lot of game objects (like particles) that are so numerous that you have to think very hard about how they’re processed and you need to be very careful with their memory footprint. Sometimes you’ve got other game objects (like a bounding box to trigger a cutscene) that are few in number and require no special performance considerations. Sometimes objects start at one extreme and migrate to the other as your design changes. It really sucks if you realize you need to migrate some code from one side of the wall to the other, because that means re-implementing everything in the other language. It would be nice if we could make the transition from “convenient to write” to “fussy and finely-tuned” without needing to rewrite all of the code in a different language.
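To make that contrast concrete, here is a rough C++ sketch. It assumes a hypothetical particle system and cutscene trigger, and all the names are invented for illustration. The particle data is packed into small fixed-size fields because there may be tens of thousands of particles. The trigger exists only a few times per level, so it uses whatever is most convenient.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical hot-path data: there may be tens of thousands of these, so
// every byte counts. Small fixed-size fields keep the struct compact.
struct Particle {
    float x, y, z;          // position
    float vx, vy, vz;       // velocity
    std::uint16_t life;     // remaining lifetime in frames
    std::uint8_t  color;    // index into a palette, not a full RGBA value
    std::uint8_t  flags;    // packed boolean state
};
// On typical platforms: 6 floats (24 bytes) + 2 + 1 + 1 = 28 bytes, no padding.
static_assert(sizeof(Particle) == 28, "unexpected Particle padding");

// Updates every live particle in one tight loop over contiguous memory.
void updateParticles(std::vector<Particle>& particles, float dt) {
    for (Particle& p : particles) {
        if (p.life == 0) continue;  // dead particles are skipped
        p.x += p.vx * dt;
        p.y += p.vy * dt;
        p.z += p.vz * dt;
        --p.life;
    }
}

// Hypothetical cold-path object: only a handful exist, so convenience
// (a std::string name, plain doubles) costs nothing that matters.
struct CutsceneTrigger {
    std::string cutsceneName;
    double minX, minY, minZ, maxX, maxY, maxZ;

    // True if the point lies inside the axis-aligned bounding box.
    bool contains(double x, double y, double z) const {
        return x >= minX && x <= maxX && y >= minY && y <= maxY &&
               z >= minZ && z <= maxZ;
    }
};
```

If the trigger ever had to scale up the way the particles did, you would tighten the struct rather than port it to another language.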

I spent some time learning C# and Unity last year, and I found myself questioning the inevitability of this tradeoff between processing efficiency and programmer expressiveness. I was amazed at how much faster it was to work in an environment with so many convenience features, but then I’d run into a situation where the language wouldn’t let me ask simple questions or take reasonable steps to ensure performance. These two things seem to be orthogonal, and I don’t see why we can’t have both.

Okay, I can understand why we can’t do both at the same time. But I don’t see why our language must limit us to one particular level of expression. Sometimes it would be nice to just PRINT “Hello World!” and other times you need to crawl down into the guts of the engine to allocate and manually manipulate individual bytes of memory.

In computer games, you don’t always need speed and ruthlessly frugal memory management. But when you do need those things, you really need those things. As the program grows in complexity and the performance needs become more clear, more of the systems will need to migrate from the “easy to write and manage” side to the “fussy and difficult to understand” side of things. Not only do you have to re-write the code in the new language, but you have to build a bridge between the two so your slow-ass scripting language can hand a tricky job off to your cryptic low-level language. And if you’re dealing with complex objects with lots of data – like Space Marines or space orcs – then you have to describe that data in both languages and keep those bits of code synchronized.

This isn’t an impossible task or anything. Coders do it all the time. But it would be nice if you didn’t have to.
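Here is a minimal C++ sketch of that synchronization problem. It assumes a hypothetical bridge in which a script can only reach engine fields that have been registered by name. `SpaceMarine`, `FieldBinding`, and `scriptAddToField` are made up for illustration; real engines each have their own binding systems.

```cpp
#include <functional>
#include <map>
#include <string>

// Engine-side (C++) description of the data.
struct SpaceMarine {
    int hitpoints = 100;
    int armor = 5;
    int ammo = 30;
};

// Hypothetical bridge entry: how the script side reads and writes one field.
struct FieldBinding {
    std::function<int(const SpaceMarine&)> get;
    std::function<void(SpaceMarine&, int)> set;
};

// The second description of the same data. Adding a field to SpaceMarine does
// NOT update this table; someone has to remember to keep the two in sync.
std::map<std::string, FieldBinding> makeSpaceMarineBindings() {
    return {
        {"hitpoints", {[](const SpaceMarine& m) { return m.hitpoints; },
                       [](SpaceMarine& m, int v) { m.hitpoints = v; }}},
        {"armor",     {[](const SpaceMarine& m) { return m.armor; },
                       [](SpaceMarine& m, int v) { m.armor = v; }}},
        // "ammo" was never registered: a typical synchronization bug.
    };
}

// Roughly what a script statement like `marine.hitpoints -= 10` might boil
// down to once it crosses the bridge. Returns false for unknown fields.
bool scriptAddToField(SpaceMarine& m, const std::string& field, int delta) {
    static const auto bindings = makeSpaceMarineBindings();
    auto it = bindings.find(field);
    if (it == bindings.end()) return false;
    it->second.set(m, it->second.get(m) + delta);
    return true;
}
```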

A language designed specifically for games could perhaps allow us to be exacting when performance is crucial, and expressive when it isn’t. Sometimes you need direct memory access so you can worry about the placement of individual bytes, and sometimes you just want to process some simple data without having to think about the hardware.

Good Programmers

The second most controversial thing that Jon Blow has said about Jai is that it’s a language designed for “good programmers”. (The most controversial thing was his announcement to build the language in the first place.) This rubbed a lot of people the wrong way, since it seems to imply that all those other languages are for “bad programmers”.

Direct memory manipulation is a classic example. It’s tough to do properly, and very easy to make mistakes. The compiler usually can’t help you identify mistakes in memory management, which leaves you to face the problem on your own. Worse, memory management problems cause catastrophic bugs like crashes or slowdowns.

(Direct memory manipulation is a bit of an oversimplification for what I’m talking about here. I’m not just talking about allocating memory and managing it yourself, I’m also talking about allowing the programmer to specify the layout of things in memory, gain direct memory access to things their code didn’t allocate, and copy arbitrary blocks of memory from A to B.)
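A short C++ sketch of the layout-and-copy side of this, assuming a hypothetical `VertexHeader` record. The programmer pins down field sizes and offsets, has the compiler check them, and copies raw bytes with `std::memcpy`. Nothing stops a caller from passing a buffer that is too small, and that is exactly the kind of responsibility being described.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <type_traits>

// The programmer dictates the layout: fixed-width fields, ordered from
// largest to smallest so the compiler has no reason to insert padding.
struct alignas(16) VertexHeader {
    std::uint64_t id;
    std::uint32_t vertexCount;
    std::uint16_t materialIndex;
    std::uint8_t  lod;
    std::uint8_t  flags;
};
static_assert(std::is_trivially_copyable<VertexHeader>::value,
              "must be safe to copy as raw bytes");
static_assert(offsetof(VertexHeader, vertexCount) == 8, "layout changed");
static_assert(sizeof(VertexHeader) == 16, "layout changed");

// Copies a block of raw memory into a header. No constructors, no bounds
// checks: the caller promises `src` holds at least sizeof(VertexHeader) bytes.
VertexHeader readHeader(const unsigned char* src) {
    VertexHeader h;
    std::memcpy(&h, src, sizeof h);
    return h;
}
```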

Back in the bad old days of C, this problem was really common. Every single string manipulation required manual memory management. Even if you just want to print “Hello World” to the screen, you need to take responsibility for those 12 bytes of memory[8] and keep track of them yourself. If you make a mistake in managing memory like this, you’ll generally crash or experience bewildering buggy behavior. It’s like trying to prepare a sandwich when you don’t have pre-sliced bread and your only knife is an industrial buzzsaw. This is a simple task. Why does it need to be so dangerous?
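Here is the old-fashioned approach as a minimal sketch, written as C-style C++. `copyString` is a hypothetical helper. The programmer measures the string, adds the extra byte for the terminator, allocates, copies, and must remember to free the result later.

```cpp
#include <cstdlib>
#include <cstring>

// Makes a heap copy of a C string by hand. The caller owns the result and
// must release it with std::free(). Returns nullptr if allocation fails.
char* copyString(const char* text) {
    std::size_t length = std::strlen(text);                    // 11 for "Hello World"
    char* copy = static_cast<char*>(std::malloc(length + 1));  // +1 for the '\0' terminator
    if (copy == nullptr) return nullptr;
    std::memcpy(copy, text, length + 1);                       // copies the terminator too
    return copy;
}
```

If you change `length + 1` to `length` in the `malloc` call, the code still compiles, but the copy now writes one byte past the end of the buffer.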

Some languages try to protect the programmer. They impose structures and syntax to make it difficult or impossible to make these kinds of blunders. Effectively, the language takes away your buzzsaw. When coders complain that they need a buzzsaw, people explain that you shouldn’t be using a buzzsaw anyway because they tend to cause more problems than they solve.

I don’t like the narrative that the world is flooded with idiot programmers, but it is an observable truth that software has a lot of bugs in it, and statistically those bugs are the result of a small handful of common mistakes. It makes sense to take those hard-learned lessons and use that knowledge to build safer languages in the future.

This leads back to the classic programmer argument:

Alice: This tool is terrible! Look at how many problems it causes!

Bob: The tool is fine. You’re just bad at using it!

Not every company can afford to hire expert-level coders for every language. Sometimes the most qualified person to maintain that legacy C code is the woman with two decades of Java experience. It would be nice if we could afford our own cryogenically frozen greybeard C coder and we could thaw him out every couple of years when something needs to be changed, but the only thing more expensive than cryo tanks is greybeard C coders.

So we build a language that takes away the buzzsaw and gives the coder safety scissors. That won’t help us maintain this ancient C code, but it will help us to avoid leaving these sorts of messes for future coders.

But the buzzsaw DID have legitimate uses, and if you take away the tool entirely then you forever lose the ability to solve those problems. That helps all those other programmers who struggle with the tool, but it leaves you weaponless when you come up against a problem that requires it.

So we have this perceived trade-off between expressiveness and performance. When it comes to manipulating strings, C++ offers a great example of a case where we can have both. The C++ standard library offers dedicated types for manipulating strings. You can use these types when you just want to shuffle some text around and aren’t worried about performance. If you are concerned about performance,[9] you can always pull out the buzzsaw and go back to the classic C style of handling text. I can imagine a language designed for games that offers this sort of choice everywhere. It would be a complicated language, but it would probably be less complicated than two unrelated languages being used in tandem, which is where we are right now.
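A rough illustration of that choice, assuming a hypothetical log-processing task that needs the first word of each line. One function uses `std::string` and lets it manage memory. The other works on a raw C-style buffer and allocates nothing.

```cpp
#include <cstddef>
#include <cstring>
#include <string>

// The convenient way: std::string manages its own memory. substr() allocates
// a new string, which is fine when this runs a few times per frame.
std::string firstWord(const std::string& line) {
    std::size_t space = line.find(' ');
    return line.substr(0, space);  // npos here means "to the end of the string"
}

// The buzzsaw way: no allocation at all. Works directly on a NUL-terminated
// buffer and reports where the first word ends. The caller must guarantee
// that `line` points to valid, terminated text.
std::size_t firstWordLength(const char* line) {
    const char* space = std::strchr(line, ' ');
    return space ? static_cast<std::size_t>(space - line) : std::strlen(line);
}
```

C++17 added a middle ground, `std::string_view`, which gives a string-like interface over memory the caller already owns.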

Those safety-scissor languages[10] are designed with the built-in assumption: “The programmer might make mistakes, so let’s limit their ability to hurt themselves.” Saying Jai is designed for “Good Programmers” doesn’t mean people using those other languages are lesser programmers; it means the language is going to assume the programmer knows what they’re doing and isn’t going to try to save them from themselves. Jai programmers will be just as flawed and error-prone as programmers in other languages because we’re all human, but Jai is designed to allow you to make those mistakes because sometimes a buzzsaw really is the best tool for the job.

Shamus, doesn’t it seem sort of rude to refer to the language as being for “good programmers”?

Doesn’t it seem sort of rude for all these other languages to assume you’re not a good programmer? If we’re going to get offended by the assumptions built into these languages then we’ll never run out of things to get mad about.

Again, domain is everything. A chef shakes his head and talks about the crazy old times when they had to make sandwiches with buzzsaws, while the carpenters are like, “Why does it need to be so hard to get my hands on a simple buzzsaw these days? Do you have any idea how hard it is to cut through oak with a bread knife?”

Footnotes:

[1] Assuming you’re knowledgeable and experienced, you didn’t create any major bugs, and the limitations of the target platform were made clear to you and were accurate. You know, the usual.

[2] And I’ll bet your viewpoint depends on your domain.

[3] And in this case, “properly” means basically “perfectly”.

[4] Actually, the resources wasted are completely trivial and never something you have to worry about. Just ask a Java programmer.

[5] And maybe lots of other domains. I dunno. I can’t speak for them.

[6] Depends on the genre, obviously.

[7] There’s also a second reason for using a scripting language, which is that it allows end-users to make gameplay mods without giving them access to the engine.

[8] One byte for every character in the message, plus one extra to mark the ending of the string. As you can imagine, that “one extra byte” business made it very easy to make subtle mistakes.

[9] Like, you have a program that needs to chew through gigabytes of log files for whatever reason.

[10] Please don’t take this as mocking towards those languages. I’m not saying languages like C# or Java are for kids or that you can’t do serious work with them. I’m just trying to explain this divide without throwing too much jargon at the non-technical readers.

Twenty Sided


… and that’s why most cooking schools start out by teaching you how to use a buzzsaw for a year or two before moving on to the knife.

Lol fair point. I started learning programming with C and then worked my way up to higher-level languages, but then again I’m not sure if that is how the teaching progression of programming works in other places. Not to say it’s wrong to start off with a high-level language, since it’s far more readable etc.

The CS department at my college starts with Java, while the engineering department taught C++. It was more than that really- the CS department started by teaching object-oriented design, while the engineering department just taught you how to program.

Personally, I preferred the latter approach. When I’d tried to learn to program on my own before my C++ class, I got lost in all of the OO talk that I didn’t have the context to truly understand. Once I knew some C++ and they got to objects, it was simple as hell: an object is a collection of variables with some functions attached to it. Obviously, there’s more to classes and objects than that, but that was a hell of a lot easier of a starting point than high-level analogies that have no apparent relevance to “hello world”.

We have a course in ‘computer science concepts’ which uses Karel to get students used to programming. If someone has programming experience already, the courses after that use C++ for everything that isn’t web-based.

I started with BASIC in high school (the advanced class used Pascal, I think), but my college courses used C++ (except for a web programming course and assembly).

I had some BASIC in high school and my local community college used Visual BASIC as its introduction to programming. (I don’t remember what the actual course title was). The courses after that were C++.

Call me overly sensitive, but I actually had to stop reading when the pictures of the buzzsaw showed up. I can’t handle even the implication on my screen for the time it would take to finish the article.

I wonder how C#’s ability to inline C/assembly fares. I’ve never tried doing it myself, but it seems relevant.

Oh, and I suppose… language language_evangelism_posts++;

There was a paper that came out recently on writing a (trivial) network driver in multiple languages and comparing performance. I was surprised at how fast C# was – I guess they really let you muck about in the mud if you need/want to.

paper is here: https://github.com/ixy-languages/ixy-languages

Would you now? I assume you meant to say “it.”

Also, to extend the analogy about most languages being “good enough” for most purposes, it’s not very hard to cut pine with a bread knife, even if it’s not ideal. This makes it more like a real programmer conversation, because both the cabinet maker using oak and the house frame fabricator with the pine will just talk about how well their tools “cut wood” and therefore each thinks the other is insane.

From the link you give here:

Shame on you! Oh, I guess that’s why they call you “Shamus”!

Anyway, this is all an interesting read. My knowledge of programming is very tangential. I dabbled with BASIC back in the day but I never went out of my way to try to learn a programming language, for several reasons. In any case, I spotted this kind of tradeoff very clearly when perusing some documentation. Though it’s made even more obvious with the use of game making software like, well, “Game Maker”, in which you can use drag and drop and visual tools to do stuff but if you want any sort of proper efficiency you have to get inside and write the code.

I’ve never used Game Maker, but I have some (extremely) limited experience with drag-and-drop visual tools for making GUIs in Java. By which I mean that I once tried to make a JFrame (a window) that way with the Netbeans IDE. It was maddening. As I was dragging, dropping, and trying to set the properties of various GUI objects, I was plagued by a terrible sense of uneasiness. I didn’t know what the code I was ostensibly generating looked like. It felt like I was abdicating my responsibilities as a programmer. I couldn’t take it, and quickly returned to hand-coding everything. It probably takes longer to do and it can sometimes be hard to get things looking just right, but my brain finds it so much more tolerable.

I expect I would be happy to use drag-and-drop tools for other sorts of projects though. If I had to design a web page, for instance, I would gladly use a drag-and-drop editor. I don’t want to learn how to use modern HTML, CSS, PHP, or anything JavaScript-ish. I’m not interested in those things and don’t care about understanding how they work. I do, however, care about understanding Java. As an amateur and mostly self-taught programmer, I regard learning as the most important part of most programming projects. If I’m not looking at the code and writing it directly, I feel like I’m not learning and just possibly like I’m cheating somehow.

This gives me a great idea for a programming language! Linaa, short for Linaa is not an acronym.

Recursive acronyms are fun. My personal favorite is Wine Is Not an Emulator.

PNG is not GIF is also quite nice.

Except that, sadly, that’s a backronym – the real acronym is Portable Network Graphics format. Genuine recursive acronyms are rare but they do exist, with probably the best known being GNU (GNU’s Not Unix), with Wine close behind.

Unsurprisingly, they’re most common in the open source space – somehow they always get shot down and changed to something boring by commercial enterprise firms’ marketing departments. :)

ZINC is not C is the most obscure language I know of, and it happens to have a recursive acronym.

There’s also PHP, which originally stood for Personal Home Page but was later changed to PHP Hypertext Preprocessor.

KDE — K Desktop Environment

Not recursive but still a nice one (and not even open source):

TWAIN — Technology Without An Interesting Name

… or at least that’s what I thought from 1995 until today, when I looked it up and found that was actually a retroactive interpretation:

https://en.wikipedia.org/wiki/TWAIN

I’m a proud member of the gaming clan The TTR Recursion since 2001!

Java is weird in that it’s a fairly high level language, but with the verbosity of a low level language. Mainly due to having to declare both the package and class in the file you’re using.

Also, if you thought Java as-is is inefficient, wait until you get working with a project that has to handle multithreading properly and therefore requires every object to be immutable. It’s so slow you’re better off doing all the work by hand.

I’m not entirely sure what you mean here. It takes a single line to declare what package a class belongs to. I agree that it’s sometimes awkward that everything has to be in a class (or sometimes an interface), but I’ve never found it to be intolerable. I mean, yes,

seems a bit excessive for something as simple as “Hello, world!”, but for any more serious, substantial project it doesn’t seem like that big a deal.

I agree that multi-threading is awful, mostly because I only sort of understand it and all the good multi-threading tutorials I’ve found are old and out-of-date. (Those things still work, but I gather that the state of the art has moved on since Java 8.) Regrettably, multi-threading is unavoidable if you want to do games in Java. Your game will inevitably have at least two threads–the Runnable thread with the main loop and the event dispatch thread for input-handling–and probably more besides. As I said, I only sort of understand multi-threading in Java. I consider it a minor miracle that my game works at all.

I think he means that you can’t declare multiple public classes in one file.

Is that a thing that people often want to do? I’ve found that one-class-per-file helps to keep things readable and makes methods easier to reference as I’m programming. I’d rather go back and forth between multiple tabs in my editor than scroll up and down in a single tab.

When I have a class that consists of nothing more than three public members? Yeah, I’d like to not have a separate file for that.

I concede the point for teeny-tiny classes.

This is one of the less obvious problems of moving between, say, C++ and Java. Having a small class with just a few data members is a perfectly normal C++ way of doing things, and there is nothing wrong with it (in C++ *or* Java).

But if you spend enough time playing around with Java, you’ll find you gravitate towards slightly different designs, and would probably have structured the code differently so that the small data class is an ‘inner’ class of some other class. You might register a listener with a manager class and receive callbacks containing a single data structure, but the listener interface and data structure are both implemented as public classes *within* the manager class.

Java just happens to be designed in a way that makes that the most flexible and easiest way to do things, while C++ makes the separate data structure class approach easier to handle and more intuitive.

You can reasonably take either approach in either language, but you might find yourself having lots of minor extra annoyances if you pick the ‘wrong’ one. Different languages just naturally tend to slightly different approaches.
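For comparison, here is a hedged C++ rendering of the nested-class design described above. The `DoorManager` and `DoorCounter` names are made up. In Java the nested types would usually be public members of the manager class. In C++ they are simply declared inside it, and clients refer to them as `DoorManager::DoorEvent` and `DoorManager::Listener`.

```cpp
#include <vector>

// A manager that owns both its event type and its listener interface.
class DoorManager {
public:
    struct DoorEvent {            // small data class nested in the manager
        int doorId;
        bool opened;
    };

    class Listener {              // callback interface, also nested
    public:
        virtual ~Listener() = default;
        virtual void onDoorEvent(const DoorEvent& event) = 0;
    };

    void addListener(Listener* listener) { listeners_.push_back(listener); }

    // Builds one event and delivers it to every registered listener.
    void setDoor(int doorId, bool opened) {
        DoorEvent event{doorId, opened};
        for (Listener* l : listeners_) l->onDoorEvent(event);
    }

private:
    std::vector<Listener*> listeners_;  // not owned: listeners must stay alive while registered
};

// Client code names the nested types through the manager.
class DoorCounter : public DoorManager::Listener {
public:
    int openings = 0;
    void onDoorEvent(const DoorManager::DoorEvent& event) override {
        if (event.opened) ++openings;
    }
};
```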

I call the Java “let’s use an inner class to put multiple classes in the same file” solution a messy workaround to dodge an artificial limitation imposed by the Java language designers because they feel they are right and anyone who disagrees is wrong.

C# is mostly Java-like, and it doesn’t have this limitation.

You can put multiple classes in the same file without resorting to inner classes. You just can’t have more than one public class per file. It’s restrictive, yes, but not that restrictive.

Surely if it’s not a public class then it’s an implementation detail that the rest of the application doesn’t even know exists.

– Or if it can somehow discover it, nothing else can rely on it existing as it can change or be removed entirely without breaking the API contract.

(It could affect the ABI, but Java doesn’t really have one of those)

It seems a case of assuming that the programmer isn’t smart enough to keep their files to manageable/easy to understand sizes themselves and arbitrarily enforcing a (low) limit on how much you can put into a single file.

Which is fair enough, the point of Shamus’s article is that programming languages are all along a spectrum of how much control programmers have, and hence how much responsibility programmers take for getting it right.

Personally though, I feel the “one public class per file” rule is well past the limit of how much I am willing to let the programming language dictate to me.

Well played sir, well played.

As a Java programmer (as in I’m getting paid for writing Java code, which is then used by clients), I agree. But then I’m not writing a game: pushing the next version of the software is what’s important, not making sure the software doesn’t eat too many resources. Also, our software usually runs on dedicated boxes and doesn’t have to deal with a lot of data at once in memory, so there’s less incentive to be parsimonious with memory.

This is why the programming gods invented DLLs and libraries. Come up with a good interface and hand the task off to a library that uses a language suitable for it. The trick is to make sure that the binding/communication between the library and the main software is efficient.

It’s something I have to deal with all the time when developing motion control software, where the design and control has to be software with a rich UI and the motion side has to operate quickly and in real time.
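Here is a minimal sketch of such a boundary, assuming a hypothetical motion-control library; every `axis_*` name is invented. `extern "C"` gives the functions plain C linkage, so a host in another language can load them from a DLL or shared object. The `Axis` type stays opaque to callers. `axis_run` takes a whole batch of targets, so that one call across the boundary does a lot of work.

```cpp
#include <cstddef>

// Public interface: plain C linkage so a host in another language
// (for example C# via P/Invoke or Python via ctypes) can call it.
extern "C" {
    struct Axis;  // opaque handle: callers never see the layout
    Axis* axis_create(double maxSpeed);
    void axis_destroy(Axis* axis);
    double axis_position(const Axis* axis);
    // Batch call: many steps per crossing keeps the bridge cheap.
    std::size_t axis_run(Axis* axis, const double* targets, std::size_t count);
}

// Library side (in practice compiled into the DLL, separate from the host).
struct Axis {
    double maxSpeed;   // largest move allowed per step
    double position;   // current position
};

extern "C" Axis* axis_create(double maxSpeed) { return new Axis{maxSpeed, 0.0}; }
extern "C" void axis_destroy(Axis* axis) { delete axis; }
extern "C" double axis_position(const Axis* axis) { return axis->position; }

// Steps toward each target in turn, moving at most maxSpeed per step.
// Returns how many targets were reached exactly.
extern "C" std::size_t axis_run(Axis* axis, const double* targets, std::size_t count) {
    std::size_t reached = 0;
    for (std::size_t i = 0; i < count; ++i) {
        double delta = targets[i] - axis->position;
        if (delta > axis->maxSpeed) delta = axis->maxSpeed;
        if (delta < -axis->maxSpeed) delta = -axis->maxSpeed;
        axis->position += delta;
        if (axis->position == targets[i]) ++reached;
    }
    return reached;
}
```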

I can definitely confirm that the performance needs are highly domain-specific. I am currently a Java developer (though I have worked with half a dozen other languages), and our application is heavily bound by the database with large amounts of data – not BIG DATA big, but still, we’re talking tens of thousands of customers, thousands of types of products, reports and statistics, the works. So it doesn’t matter much whether your UI code is efficient or not (at least in 9% of the cases), since the difference is often in terms of milliseconds (which is an eternity for a game), while your database might take seconds to get you your data. Most performance tuning is done on the level of the database and the data access layer – adding indices, tweaking queries to not fetch too much data, or to do it in one select instead of hundreds or thousands, optimizing joins. And having memory corruption is much worse here, especially on the server side. If your game crashes, worst case is the player loses some progress. In our case, the client might lose a lot of money. And even memory leaking becomes more dramatic, because your application runs not hours at a time, but often days, weeks or even months, so you’d better make sure you don’t run out of memory. And this is much easier to do in Java or C# than in C/C++.

Funny thing about Java is you won’t leak memory but the sink can get clogged. Having to tweak things to force Garbage Collection because the app is too busy to bother with it right now and then running out of memory is a PITA.

You certainly can leak memory in Java, although not in the same way as in C++. You can’t delete a pointer and leave some dangling memory that can’t be reclaimed, but you can, for example, leave references to objects lying around in lists forever when they should have been released.
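The same trap can be sketched in C++ with the reference-counted `std::shared_ptr`. In this one respect it behaves like a garbage collector: nothing that is still referenced gets freed. `Enemy` and `DamageLog` are hypothetical names.

```cpp
#include <memory>
#include <utility>
#include <vector>

struct Enemy {
    int hitpoints = 100;
};

// A hypothetical "recently damaged" list that nobody ever prunes. Each entry
// is a shared_ptr, so every enemy stored here stays alive as long as the list
// holds it. Automatic memory management cannot free what is still referenced.
struct DamageLog {
    std::vector<std::shared_ptr<Enemy>> recent;
    void record(std::shared_ptr<Enemy> e) { recent.push_back(std::move(e)); }
};
```

To check whether the object really died, watch it through a `std::weak_ptr`. A weak pointer does not keep its target alive.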

Holy shit the default garbage collector has crashed SO MANY of my projects. Just…. Yuck.

I fully admit to being biased in favour of C++, but RAII > garbage collector. While C style manual memory management sucks and is extremely error prone in both directions (double deletion and memory leaks), RAII solves this by tying the resource to an object with a lifetime that can be easily controlled, in many cases by just putting the object on the stack.
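Here is a minimal RAII sketch in C++, using a stdio file as the resource. The `File` class is a made-up example; `std::fstream` already works this way. The constructor acquires the file and the destructor releases it, so the close is tied to the end of the object's scope.

```cpp
#include <cstdio>
#include <stdexcept>
#include <string>

// RAII wrapper: the constructor acquires the resource and the destructor
// releases it, so the file is closed when the object goes out of scope,
// even if an exception is thrown in between.
class File {
public:
    File(const char* path, const char* mode) : handle_(std::fopen(path, mode)) {
        if (!handle_) throw std::runtime_error(std::string("cannot open ") + path);
    }
    ~File() { std::fclose(handle_); }

    File(const File&) = delete;             // exactly one owner per handle
    File& operator=(const File&) = delete;

    std::FILE* get() const { return handle_; }

private:
    std::FILE* handle_;
};

// Writes one line; there is no explicit close anywhere.
void writeLine(const char* path, const char* text) {
    File f(path, "w");
    std::fputs(text, f.get());
    std::fputc('\n', f.get());
}   // f is destroyed here, which closes the file
```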

I very much dislike garbage collection.

It’s usually sold as “no memory leaks!”, but it’s just not true. Forget to remove (ie delete/free) an object reference when you’re done using it, and that object is effectively ‘leaked’.

To put it another way, object lifetime always matters, regardless of the memory reclamation scheme.

So in reality, it doesn’t solve the problem at all.

It does mean that you cannot double-free or use-after-free.

But the cost is high – the entire application stops, potentially for several seconds, while the GC does its job.

And worse, if the GC can’t prove an object really has gone, it will leave it around forever – leaking memory.

However, the reason GC helps avoid these mistakes is not the GC itself. It’s because garbage collection must remove the concept of a raw pointer – all pointers are of necessity reference-counted ‘smart’ pointers.

So to get the same safety benefits, all you have to do is ban raw pointers and use appropriate ‘smart’ pointers (eg RAII classes) instead.

And then the world does not stop – unless you want it to, of course.

(Both approaches fall foul of “If the application is terminating, why bother cleaning the carpets and repainting the walls? The whole building is about to come down!” It’s quite frustrating when quit-without-save takes many seconds.)
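Here is a short C++ sketch of "use smart pointers instead of raw pointers", assuming a hypothetical `Texture` type. It uses `std::unique_ptr` for single ownership. `std::shared_ptr` is the reference-counted alternative for cases where ownership really is shared. Either way, the object is destroyed at a known point in the program instead of during a collection pause.

```cpp
#include <memory>
#include <vector>

struct Texture {
    int width, height;
    Texture(int w, int h) : width(w), height(h) {}
};

// Raw-pointer version, for contrast (not used below):
//   Texture* t = new Texture(256, 256);
//   ... forget `delete t` and it leaks; delete it twice and it is a double free.

// Owning version: each std::unique_ptr deletes its texture exactly once,
// at a predictable point.
std::vector<std::unique_ptr<Texture>> loadTextures(int count) {
    std::vector<std::unique_ptr<Texture>> textures;
    for (int i = 0; i < count; ++i)
        textures.push_back(std::make_unique<Texture>(256, 256));
    return textures;  // ownership moves to the caller; nothing to delete by hand
}
```

Calling `pop_back()` on the returned vector destroys that texture immediately, in the same call.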

As someone who hasn’t done any assembly since 6502 (well, 6510 but who here knows the difference, eh ;) ?) I’m interested in how that hello world assembly example works. Looks like there’s something missing there to me though. Is there?

The “int 80h” instruction makes a system call, basically calling into the operating system to do some work in kernel mode. The EAX register holds a numeric identifier for the call to perform; the remaining registers pass parameters to the call. The first system call is “sys_write” (EAX = 4), with the first argument (EBX = 1) identifying the stdout file descriptor as the target for the write, and the remaining arguments (ECX and EDX) giving the string’s address and length. The second system call is “sys_exit” (EAX = 1), which asks the OS to terminate the program. The 0 passed in EBX is the exit code returned from the program’s execution.

See http://shell-storm.org/shellcode/files/syscalls.html for more info on Linux system calls.

The actual writing of characters happens in the OS, most likely invoking some hardware driver.
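For readers who’d rather see it from C++, here is a Linux-only sketch making the same two system calls through glibc’s `syscall()` wrapper, which takes the call number and arguments and loads the registers for you. `say_hello` and `quit` are hypothetical names. The `SYS_write`/`SYS_exit` macros resolve to the right numbers for whatever architecture you compile for (4 and 1 on 32-bit x86, as in the listing), and `SYS_exit` ends only the calling thread, which matches the single-threaded assembly.

```cpp
#include <sys/syscall.h>  // SYS_write, SYS_exit (Linux)
#include <unistd.h>       // syscall(), STDOUT_FILENO

// sys_write(stdout, msg, length): the first int 80h in the listing.
// Returns the number of bytes written, or -1 on error (errno is set).
long say_hello() {
    static const char msg[] = "Hello world!\n";
    return syscall(SYS_write, STDOUT_FILENO, msg, sizeof msg - 1);  // skip the '\0'
}

// sys_exit(status): the second int 80h. Like the assembly, this skips all
// C++ cleanup (destructors, stdio buffer flushing) and does not return.
void quit(int status) {
    syscall(SYS_exit, status);
}
```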

Our company still does embedded systems on 65C02s – much nicer assembly to work with than the x86 assembly shown here. I take it we’d recognise the difference with a 6510 once you start writing to memory locations that only exist on a Commodore 64, eh?

As Lethal says, this isn’t so much how to print “Hello, World!” to the screen as it is how to make syscalls in Linux. Opening a file handle uses a similar sequence of calls; change the mov ebx,1 line to whatever number you got back from that call, and you’d write “Hello, World!” to a file instead. What the OS does to parse a filesystem on disk, allocate space for the file, write whatever you’ve requested, update all the filesystem’s data tables once you’re done, and so on is obviously a great deal more complicated, *but* the kernel wouldn’t let you execute those operations directly in userspace anyway – only kernel-mode code is permitted to do that. The OS is both a door and a gateway like that.

Even though this code is assembly, it could be lower-level still, and run with even less overhead, by skipping the operating system and bashing the hardware directly. But unless you’re doing embedded work, that kind of coding went out of fashion at the end of the 16-bit era: directly manipulating every permutation of hardware in a modern desktop PC would require superhuman coding abilities, for sharply diminishing returns.

Nitpick: Except that you don’t do int 80h anymore. That’s what you did 20 years ago.

Nowadays, on 32-bit x86, you’d use the sysenter opcode, while on 64-bit x86 (x86_64 aka x64 aka amd64) you’d use syscall.
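A sketch of the 64-bit variant using GCC/Clang extended inline assembly, assuming x86-64 Linux (it falls back to libc’s `write()` on other targets). On x86-64 the call numbers and registers differ from the 32-bit listing: write is 1 and exit is 60, the number goes in RAX, the first three arguments go in RDI, RSI and RDX, and the `syscall` instruction itself clobbers RCX and R11. `raw_write` is a hypothetical name.

```cpp
#include <cstddef>
#include <unistd.h>  // write(), used only by the fallback path

// write(2) via the x86-64 `syscall` instruction. On failure the raw path
// returns a negative errno value; the libc fallback returns -1 and sets errno.
long raw_write(int fd, const void* buf, std::size_t len) {
#if defined(__x86_64__) && defined(__linux__)
    long ret;
    __asm__ volatile("syscall"
                     : "=a"(ret)                     // result comes back in rax
                     : "a"(1L),                      // rax = 1: write on x86-64
                       "D"(static_cast<long>(fd)),   // rdi = fd
                       "S"(buf),                     // rsi = buffer address
                       "d"(len)                      // rdx = byte count
                     : "rcx", "r11", "memory");      // clobbered by syscall
    return ret;
#else
    return ::write(fd, buf, len);
#endif
}
```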

“6502 (well, 6510 but who here knows the difference, eh ;) ?”

*raises hand* The dumb-terminal company I worked for in the late ’80s used the 6502 as the common processor across a number of products, and I looked at family variants several times for new designs. I no longer remember the exact details of the difference, but I compared both back in the day.

My “favorite” moment was when I had just started. The 4 MHz 65C02 was just over $3 while the 2 MHz part was just under, and that was a hard cost limit from the production side, so we had to put the slower part in and divide the clock by 2 to save the extra $0.15. Admittedly, that was per unit, and our volume was > 10K/month, so it added up. I still cried in my beer.

It doesn’t seem rude to me at all. I mean (okay, I’m not a programmer), it’s within every programmer’s ability to self-define as a good programmer or not. YOU KNOW WHO YOU ARE!!

;)

I don’t know about programming, but the evidence for the accuracy of these kinds of self-assessments is mixed when it comes to, say, math. Speaking very loosely, people who think that they’re good at math seem to be better, on average, than people who think that they aren’t good at math. However, around a third of the people who think that they’re good at math are in fact not good at math. For whatever reason, men are more likely to over-estimate their mathematical ability. So as not to mislead anyone, I should add that for the purposes of these kinds of surveys “good at math” should usually be read as “able to do arithmetic, work with percentages, and do risk calculations”.

(I will ask everybody to please refrain from incorrectly quoting Dunning-Kruger yet again; thank you)

‘You can always trust the Americans to do the right thing – after they’ve exhausted every other option’ – Dunning-Kruger, 1943.

Basically, if you say your language doesn’t do X because it’s meant for good programmers, then the implication is that programmers who prefer languages that do X are not good programmers.

I figured this was going to be that Jai includes both the buzzsaw and the breadknife. It’s “for good programmers” because it includes the low-level features necessary for highly optimized games; it assumes that you know how to safely use these dangerous tools.

If I was applying for a programming job and they asked me if I was a good programmer, and I said, “No,” I don’t think they’d say, “That’s fine, we’re using C#, not Jai, so you don’t need to be.”

And the way they self-define as a good programmer is to use the language. Brilliant!

It’s an excellent start to a logical fallacy in favor of the language. The people who like it are good programmers and the ones who don’t aren’t, because the language is meant for good programmers.

I guess this is where a motorcycle analogy makes sense: If you give an overpowered racing cycle to someone who just learned to ride on a 125cc machine, they will be lying on their back before long. Which is why (in my part of the world), a novice may buy and ride one of those monsters with the appropriate license, but needs to have it throttled down for a certain time.

However, being able to handle the power isn’t the same as being a “good” motorcycle rider. I’d actually argue that a good rider wouldn’t get that kind of motorcycle, because why would you get it if not to ride at unsafe speeds while making a lot of annoying noise?

In the same way, there are probably multiple ways to interpret Jon Blow’s “good programmer”. As I understand it, he means “knows when, where and how to use low-level instructions that can go very wrong and can’t be easily debugged”, rather than “can write lots of code in a short time”, or “produces well-structured code that’s stable, extensible and easy to interoperate with, plus great documentation”.

I think the problem with saying that the language is for “good programmers”, at least in the context of high- vs low-level languages, is that it’s not the actual reason for the difference, and putting it that way only exacerbates the clash between programmers who prefer one style or the other. Returning to the issue of memory management in C, there is NO programmer good enough never to make a mistake with it: never to forget a free or delete, and never to free or delete something in the wrong place, before everyone has stopped using it. In fact, I recall an instance where someone was using one of the better profiling tools and took its recommendation to free something, which then caused massive problems in the software because the profiling tool, despite having access to all the code, didn’t realize that something else had to access it after that point. So while bad programmers can obviously make things worse (although, ironically, they can actually be SAFER here by being overly paranoid, freeing everything as soon as they get the chance and reallocating later if they need a similar structure, which makes things inefficient), automatic memory management would protect good programmers from those common mistakes as well, and so high-level languages are for good programmers as well as bad ones.

This leads to the real distinction: how much do you have to care about these things? High-level languages are indeed for when you don’t have to care, either because your application doesn’t require it or because the compiler is going to allocate things well enough that you don’t have to worry about it. Low-level languages are for when you have to care. I tend to prefer what might be called mid-level languages (C++ might be a good example), where most of the time you don’t have to care, but when you do, you can do things yourself. My biggest frustration with high-level languages and packages/libraries is that if they do what you want, they’re great, but if they don’t, it’s at least a massive chore to trick them into doing what you want, and it may even be impossible (my biggest frustration with Java). And I tend to get answers of “Well, you shouldn’t want to do things that way!”, to which my answer is always “Yes, I’d rather not do things this way, but life dictates that either I do that or rewrite the entire application, and since the latter’s not happening …”. So, for me, the ultimate language is one that has nice, clean, simple base behaviour but is very flexible if I want to do things differently.

I’ve grown up with Basic, a little Assembler (both on C64), then Pascal, a little C, some Matlab, a little more Fortran, and finally, my great love, Python.

Most of the time, I’m very happy not having to care about memory allocation and low-level optimisation (beyond making sure that most calculations are not performed by my own code but by numpy, and my code doesn’t waste time). However, there are these things sometimes that I know could run much faster, and where I know the user will be waiting for my code to get done — and in those cases, I’d like to be able to drill down and just execute it in a faster way which I know is possible but not in Python. In all other cases, I’m really enjoying not having to care about those details. There’s enough to improve my coding on the higher-level end: structure, UIs, multithreading/processing, learn to use proper vector maths…

I think “high-level” vs “low-level” is an incorrect way to think about programming languages. Leaving aside extremes like Python on one side and Assembly on the other, most languages are pretty similar in regard to what the average statement does.

Code written in most commercial languages usually boils down to “x = y + c” and “foo( bar(), “someString”, false )”. How much boiling is required to get to these statements varies by language, but the bulk of the programmer effort is usually going to be thinking about what foo and bar should do, not how the boilerplate should be arranged.

And while we call the languages that remove that boilerplate “high-level”, there’s no inherent reason for them to be inefficient. There’s nothing expensive about writing “foo( bar() )”, and indeed, C++ compiles it down to a few lines of assembly.

The idea that a language has to either have a garbage collector and poor performance or be adaptable and easy to write is a leftover of the 1990s-2000s period more than an accurate statement on the state of the industry in the 2010s.

It’s possible Blow is just using “a language for good programmers” as a cipher for “low-level language” and “manual memory management”, and could simply choose less inflammatory terminology. But while I think that’s part of it (he certainly objects to “safety scissors” languages like Java), I also think he’s primarily criticizing the modern language-design efforts to build safer buzzsaws; I think the “languages for bad programmers” bit is largely directed at Go and Rust[1], more so than, e.g., Java[2].

Go is particularly infamous on this point, as its designer has explicitly taken the opposite tack from Blow, saying:

Some people have claimed Go is a “language for dumb programmers” as a result, but I think there’s wisdom in realizing that not every team (not even at Google) is fully stocked with “cryo tanks of gurus”, and that a simpler language design (not necessarily higher or lower level, just simpler[3]) can help avoid issues. Go is probably a moot point for the topic of heavy game development (garbage collector and all), but I think the aim is laudable.

And Rust is more relevant, as the entire raison d’être for Rust is the idea of gaining safety (and expressiveness) without sacrificing efficiency (which is why the “Rustaceans” are so bullish about the idea of game dev in Rust). But it does so through “friction” – the Rust compiler is particular about how code is structured, because you essentially have to prove to the compiler that your code is handling memory safely. It seems Blow’s point is that the friction imposed by compiler-mandated safety in Rust is unnecessary if you’re a “good programmer”.

And maybe if everyone on your team is about as talented as Jon Blow, that’s true (which is quite feasible with a 4-person indie team). But the larger your team gets, the less true it is. Not only can you not maintain the same pristine quality of developers, but errors tend to occur where different people’s code meets. Even conceptually very simple stuff (e.g. “is this particular variable `null`?”) is a frequent source of error, let alone inherently complex stuff like memory management.

Rust accommodates for “bad programmers” by advanced compile-time checks, and Go does it through a simpler language design, but both are aimed at reducing defects through language design: that this is apparently an explicit non-goal in Jai is likely the biggest red flag for me. It’s not really a question of condescension or elitism; it’s just that I think, given a sufficiently large team or a long enough project, everyone is a “bad programmer”.

—

[1] D is, as far as I know, mostly just a newer, more modern buzzsaw and not really a “safer” buzzsaw, but admittedly I know the least about D of all the languages that are commonly discussed on this topic.

[2] I don’t think that comment is aimed at Java; he’s not arguing that games shouldn’t be written in Java because he doesn’t need to make that argument: I’ve never seen a reputable argument that game devs should all drop C++ and switch to Java. (IIRC he only mentions Java as an aside about how he doesn’t like “big idea languages”)

[3] I think the question of simplicity is separate from the questions of “high or low level” and Manual Memory Management. MMM inevitably adds some complexity to the language, but you could certainly envision a simpler version of C++ that still retains MMM and is equivalently low level. …actually, I don’t think you have to imagine it, it’s C. And there are people who advocate C over C++ because they think a lot of the stuff C++ added (e.g. object oriented programming) made things more complicated but not better.

I’m one of those people who likes C better than C++, but I’m not a language snob.

Rust might be a little paranoid about memory, but knowing that everything gets reclaimed as soon as it goes out of scope does save you from having to be paranoid about leaks.

And even Perl 6-level languages can work for 3D platformers if you’re willing to work with lower specs in mind, considering how blindingly fast everything is nowadays.

I think this focus on “Blow wants only UBER_PROGRAMMERS working in Jai projects” is somewhat false. He has repeatedly said that he wants both low- and high-level language features. Take garbage collection as an example. The problems it’s trying to solve are accessing memory that isn’t (or is no longer) allocated, and forgetting to free memory. Those are things a programming language should help with. However, GC absolutely ruins performance, both through the cost of collecting the garbage and, more subtly, by forcing everything into heap allocations, because you can no longer manage memory manually. On top of all that, many memory allocations are not for “garbage”, and freeing memory explicitly can serve as a fairly useful comment on the code.

Jai solves the above problems in a few different ways. The first is a little buffer called “Temporary Storage” for what we would traditionally think of as “garbage” memory allocations. You get a small buffer of memory that you can freely allocate from. At the end of the frame, or at any logical moment for your program, you set the pointer back to the beginning of the buffer and start over. This is a much more elegant solution for, say, tiny string allocations: the memory is already allocated, so getting and “freeing” it is much faster, and taking memory from Temporary Storage also documents the code, since anyone reading it can immediately see “oh, these are just some garbage allocations, okay.” It completely prevents memory leaks, at the tiny cost that you might overflow the buffer, which is entirely on you.
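A minimal C++ sketch of the bump-allocator technique behind this kind of buffer, assuming a fixed capacity and a reset once per frame. `TempStorage` and `temp_copy` are hypothetical names; this is not Jai’s actual implementation, only the general idea the comment describes.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <vector>

// Hypothetical per-frame bump allocator: allocation just advances an offset,
// and reset() "frees" everything at once.
class TempStorage {
public:
    explicit TempStorage(std::size_t capacity) : buffer_(capacity) {}

    // Hands out the next `size` bytes, aligned to `align` (a power of two,
    // relative to the buffer start; fine up to alignof(std::max_align_t)).
    // Returns nullptr on overflow: running out of space is the caller's problem.
    void* alloc(std::size_t size, std::size_t align = alignof(std::max_align_t)) {
        assert(align != 0 && (align & (align - 1)) == 0);
        std::size_t start = (used_ + align - 1) & ~(align - 1);
        if (start > buffer_.size() || size > buffer_.size() - start) return nullptr;
        used_ = start + size;
        return buffer_.data() + start;
    }

    // Called e.g. at the end of a frame; every earlier pointer becomes invalid.
    void reset() { used_ = 0; }

    std::size_t used() const { return used_; }

private:
    std::vector<unsigned char> buffer_;
    std::size_t used_ = 0;
};

// A throwaway string copy that lives until the next reset().
const char* temp_copy(TempStorage& ts, const char* text) {
    std::size_t n = std::strlen(text) + 1;  // include the terminating '\0'
    char* out = static_cast<char*>(ts.alloc(n, 1));
    if (out) std::memcpy(out, text, n);
    return out;
}
```

The trade-off is visible in the code: there is no per-allocation free and nothing can leak past a `reset()`, but nothing allocated here may be used after it either.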

Another example: you can use the defer keyword to make anything happen when you go out of scope. So if you’re opening a file, you can write defer fileName.Close() right after opening it, so that you don’t have to remember to close it later. This is useful for a lot of other things too. As another example, just like in modern C++, you can give a pointer ownership, so when the owning pointer is deleted, the memory it owns is immediately freed. As yet another example, you can tell the compiler to allocate the memory for an entity all at once. To explain: if you have some entity that needs, say, a mesh, you find out how big the mesh is, and you can allocate the memory for the entity’s other variables plus sizeof(mesh) contiguously. Then you just free the memory all at once.
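C++ has no `defer` keyword, but the same effect is commonly approximated with a scope guard built on a destructor. A minimal sketch, assuming C++17 (for class template argument deduction); `Defer` and `first_char` are hypothetical names, and this does not reproduce Jai’s exact semantics.

```cpp
#include <cstdio>
#include <utility>

// Hypothetical scope guard: runs a callable when the enclosing scope exits.
template <typename F>
class Defer {
public:
    explicit Defer(F fn) : fn_(std::move(fn)) {}
    ~Defer() { fn_(); }
    Defer(const Defer&) = delete;
    Defer& operator=(const Defer&) = delete;

private:
    F fn_;
};

// Returns the first character of the file, or EOF. The close is written right
// next to the open and runs on every return path out of the function.
int first_char(const char* path) {
    std::FILE* f = std::fopen(path, "r");
    if (!f) return EOF;
    Defer close_file([f] { std::fclose(f); });
    return std::fgetc(f);  // fclose runs after the return value is computed
}
```

Keeping the cleanup next to the acquisition is the main benefit: reading the function, you can see that the file is closed without tracing every exit.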

The point is, these are all solutions that do a lot, have very few downsides, and help programmers be more productive. However, they require good programmers to get the most out of them, and can’t totally save a bad programmer from generating bugs the way GC can.

I initially saw the same red flag that you did. And I generally agree with you about the problems with the “language for good programmers” idea.

But on reflection, I wonder if it’s simply OK for games to be less safe than other kinds of programs that are currently written in C or C++ (the ones Rust et al. are targeting). There’s far less attack surface for security-critical bugs (though, importantly, not zero attack surface), crashes aren’t as big a deal and are arguably even an expected part of the package, etc.

Rust accommodates for “bad programmers” by advanced compile-time checks, and Go does it through a simpler language design, but both are aimed at reducing defects through language design

The backdoor for Rust might be that a bad programmer never figures out the Rust checker properly, so you filter them out. Might be better than having them write rotten C++ that crashes intermittently. (Myself, I’ve never used Rust so I’m not sure how I’d rate. Fingers crossed! <== wrong attitude)

In my experience Java dips into both paradigms. Your code will be massive and hard to maintain while at the same time wasting memory and processor cycles.

Well, I think it’s not fair to blame that on Java. Because Java is compiled to bytecode (which the JVM then JIT-compiles to native code), it can be quite fast. Faster than scripting languages.

The problem is that Java is taught in schools… That means that a lot of people who are stuck in what they learnt at school will write Java. In school they will have learned about OO programming, the sin of code copying, that writing reusable code is good and you should not hard-code all sorts of values. They will take it to the extreme…

And then you get this: https://github.com/EnterpriseQualityCoding/FizzBuzzEnterpriseEdition

Or this: https://youtube.com/watch?v=-AQfQFcXac8 (warning: very funny if you have ever had to work with other people’s code)

This is hilarious, but also so close to real life. FML

It’s wild that you listed both Papyrus and Lua as your examples of high-level languages, because I’ve been working on hacking a Lua interpreter into Skyrim. It’s nowhere near done, so hopefully this comment isn’t a plug, but my experience doing it bears out a lot of what you’re saying: not everything needs to be low-level, and it’s surprising how much leeway there is even in games.

One of the fascinating bits about Papyrus is that it isn’t just designed to be simple; it’s also designed to be interrupted. A Papyrus script can theoretically run on any thread, but certain API functions (e.g. forcibly killing a character) must be carried out on the main thread. Papyrus makes this invisible to a script author: when you call these functions, your script halts execution and queues a task to run on the main thread, and is resumed after that task completes. (There are also a few API functions that queue a task and then continue on without waiting for a result.) Papyrus is simpler and allows content creation without direct engine access, but it’s also designed to run asynchronously to the main engine and juggle lots of concurrent tasks at once — which, naturally, frustrates mod authors who want their scripts to run immediately and synchronously as they did in past games, this being a huge need for custom game mechanics and similar. These are the folks who want to get closer to the metal — but not so close that they need a disassembler.
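A hypothetical C++ sketch of the suspend-and-resume pattern described above (not how Papyrus is actually implemented): a script thread posts a task to a queue that the main thread drains once per frame, then blocks on a `std::future` until the task has run. `MainThreadQueue` and `call_on_main_thread` are illustrative names, and a real engine’s tasks would return more than an `int`.

```cpp
#include <functional>
#include <future>
#include <mutex>
#include <queue>
#include <utility>

// Hypothetical queue of work that must run on the main thread.
class MainThreadQueue {
public:
    // Any thread: queue `task` and get a future for its result.
    std::future<int> post(std::function<int()> task) {
        std::packaged_task<int()> job(std::move(task));
        std::future<int> result = job.get_future();
        std::lock_guard<std::mutex> lock(mutex_);
        jobs_.push(std::move(job));
        return result;
    }

    // Main thread, once per frame: run everything queued so far.
    void run_pending() {
        std::queue<std::packaged_task<int()>> batch;
        {
            std::lock_guard<std::mutex> lock(mutex_);
            std::swap(batch, jobs_);  // run tasks without holding the lock
        }
        while (!batch.empty()) {
            batch.front()();  // runs the task and fulfils the poster's future
            batch.pop();
        }
    }

private:
    std::mutex mutex_;
    std::queue<std::packaged_task<int()>> jobs_;
};

// Script side: an engine call that must happen on the main thread. The calling
// script thread is suspended (blocked) until the main thread has run it.
int call_on_main_thread(MainThreadQueue& q, std::function<int()> engine_call) {
    return q.post(std::move(engine_call)).get();
}
```

Note that `call_on_main_thread` must never be called from the main thread itself: it would wait forever for a `run_pending()` that can’t happen. That waiting is also exactly why mod authors perceive such calls as slow and asynchronous.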

SKSE has a framework for code injection, so I can do things like hooking the “character is dying” code in the game engine, saying, “When this happens, run my code first, and then after that, I’ll tell you whether you should behave as normal.” I can do this to literally anything I can find, but because I’m actively interrupting the game, whatever I’m doing has to finish. I’m not allowed to wait for something else happening somewhere else. That means that if I want to give other mod authors an API to, say, intercept character deaths and change how they’re handled, I can’t use Papyrus. Hence Lua, which by default runs synchronously and to completion (and if I don’t load the coroutine library, that default ought to be the only behaviour).
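A hypothetical C++ sketch of that kind of synchronous hook (not SKSE’s actual API): handlers run to completion, in registration order, inside the engine’s code path, and any one of them can veto the default behaviour by returning `false`. `DeathEvent` and `DeathHook` are made-up names.

```cpp
#include <functional>
#include <utility>
#include <vector>

struct DeathEvent {
    int actor_id;
};

// Hypothetical hook point for the engine's "character is dying" code path.
class DeathHook {
public:
    using Handler = std::function<bool(const DeathEvent&)>;

    void add(Handler h) { handlers_.push_back(std::move(h)); }

    // Runs every handler synchronously; the engine waits for all of them.
    // Returns false if any handler asked to suppress the normal death.
    bool should_die_normally(const DeathEvent& e) const {
        bool proceed = true;
        for (const auto& h : handlers_) {
            if (!h(e)) proceed = false;  // later handlers still see the event
        }
        return proceed;
    }

private:
    std::vector<Handler> handlers_;
};
```

Whether later handlers should still run after a veto is a design choice; this sketch lets them all observe the event. Since the game is stalled until `should_die_normally` returns, every handler has to be short and must not wait on anything else.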

There are some events I can hook that, surprisingly, carry little risk of slowdown — things like intercepting every stat change on every character, including things like “every frame that your stamina decreases while sprinting” and “every second an actor is affected by damage over time.” I can just slam Lua into that — and that, too, is a place where just about the entire game is waiting on code to finish — and I don’t see any lag, at least not yet. It’s interesting to think about exactly where the boundary is, where high-level code becomes “a problem.”

The Lua interpreter is surprisingly fast.

It’s widely used on very low-power CPUs for a lot of complex tasks, and I’m often surprised at how well it works.

Until it doesn’t, of course.

The only thing I don’t like about Lua is its loose typing (duck typing, plus automatic coercion between strings and numbers).

Strings, integers and floating-point numbers are very different things; don’t convert from one to another unless I explicitly ask for that!

Yeah but …

… I have to say that Assembler isn’t the lowest-level code … hand-writing the object bytes (the Assembler’s output) into memory is … and even then, rather than making a syscall (aka interrupt), you could write directly to the video output using a hardware port …

“… and you tell young people today and they won’t believe you!” (obligatory? Monty Python (At Last the 1948 Show) quote)

One of my maxims is “Anything powerful enough to be useful is powerful enough to be dangerous.”

The contrapositive is equally true.