The most interesting thing about these philosophical debates is this: Many people, when presented with these questions, seem to already have some kind of mental model for how they think it all works. They have their own definition for what a person is, what consciousness is, and what it means to “die” in a world where people can be copied. And to them it’s all sensible, reasonable, and consistent. Perhaps even obvious. Everything is fine until they talk to someone else, who has a radically different mental model, which the other person feels is equally inescapable and obvious.

For example? Everyone keeps linking the Transporter Problem video by the awesome CGP Grey. In that video, the mental model is that since your cells are all destroyed, you die, and then a new thing – a copy of you – is created in a new location. This doesn’t match my mental model at all and so just comes off like a bunch of wanking to me. When talking about someone “dying” I’m much more concerned with the continuity and fidelity of their thought processes than with which particular pile of cells those processes are running on.

This is one of the reasons I like this game. It seems to be pretty good at finding those narrow gaps between people’s mental models and wedging them open.

For the record: I think the bit with Brandon is actually pretty tricky, ethics-wise. I shrugged it off during the game, but if we were causing him physical(?) pain then I might have reacted differently. But to me we were slightly upsetting someone for twenty seconds for our own survival, and that seemed like a pretty clear-cut case. The fact that he won’t even remember being upset makes this even easier. Also – and maybe I’m being unfair to Brandon – but I felt like he should have handled this better. He’s exhibiting Simon-levels of panic and confusion, when he ostensibly grew up around this technology and has been given ample time to wrap his head around the idea.

Dead Island

A stream-of-gameplay review of Dead Island. This game is a cavalcade of bugs and bad design choices.

How to Forum

Dear people of the internet: Please stop doing these horrible idiotic things when you talk to each other.

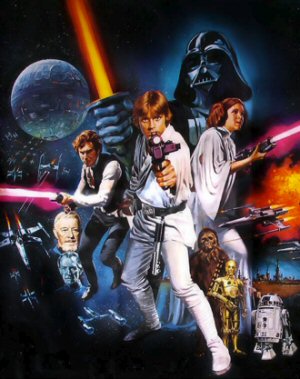

If Star Wars Was Made in 2006?

Imagine if the original Star Wars hadn't appeared in the 1970's, but instead was pitched to studios in 2006. How would that turn out?

Trekrospective

A look back at Star Trek, from the Original Series to the Abrams Reboot.

Grand Theft Railroad

Grand Theft Auto is a lousy, cheating jerk of a game.

T w e n t y S i d e d

You know, this question of “How can everybody be so boneheaded about this when they live in the future where people have had time to wrestle with the nature of transhumanism because it’s really happening?” has come up a few times now. I’m sort of head canon-ing at this point that, while the technology to practically “upload” (or however you wish to define brain-scans) one’s consciousness into a computer has existed for a long time in this universe, the actual practical applications of the technology hadn’t really been well explored before that whole nasty “armageddon” business.

For instance, the technology actually being used for brain scans wasn’t originally designed for that function; they were meant to be used as neural control interfaces for robots. As far as I saw in the game, it wasn’t until the WAU put together a primitive virtual reality simulation that Catherine even got the idea to create the Ark. This doesn’t really seem to fit in a world where anyone had been seriously considering–let alone attempting–to prolong and enhance human life through major cybernetic alterations or by replacing a brain with a computer or anything else in the transhumanist stable. And I think I remember there being dialogue at some point where Catherine states that the idea of putting a full replica of a human consciousness into a robot body wasn’t something that was really being considered before the end of the world.

I’m thinking the “transhumanist revolution” in SOMA was brought about by the desperation of the Pathos II crew after everything had already gone to hell, and that, in the larger world before the comet hit, it was still firmly in the realm of philosophical discussions. This is why everyone on Theta seems to be wrestling with what seem like frankly childish questions for someone living in a transhumanist world. Because they weren’t.

“Pathos II” implies there was a Pathos I. Was there another one launched just before Simon was “awoken”, or was one launched a long time ago (filled with only rich people, presumably)?

Pathos II is the launch complex, the ship carrying the scans is the ARK.

I think the automated tour guide on the train said something about it being bigger and more powerful than the Pathos I station.

The end cutscene has a satellite with Pathos II on it; one can assume that the satellite and base station/space gun thingy are part of the same project. The ARK is a box that is put inside the satellite.

I was initially confused about why he got as freaked out as he did, since he presumably expected to wake up on the ARK, but then again the way the brain scans seem to work, I guess he wouldn’t have been. There doesn’t seem to be any transition phase from “I’m here getting a scan” to “I’m in a simulated world” and this guy seems a bit on the paranoid side regarding the WAU entrapping them, where the lady in the broken diving suit was pretty chill with whatever she was experiencing.

Ultimately though, I attributed it mostly to the simulation he found himself in being sudden and maybe imperfect. Since we’ve been told that scans in robots that don’t have the ability to give the same sort of stimuli that are expected for a human tend to go crazy, and again the diving suit girl and Simon being some of the sanest so far seems to bear that out, I figured that suddenly being all alone in a strange place that may not even feel/smell/sound like a real place to the scan, with only a weird disembodied voice urgently asking for stuff, probably made for an environment that wasn’t good enough to exist in sanely.

Maybe it was just the perfectly alone part since once the guy had an Alice model to talk to he was fine, though I’ve also never tried or seen the Alice bit done in any simulation other than the scan room, so could be the opportunity to transition through what you expect to have.

I got the impression that this guy was freaking out because they were already in some kind of conflict with the WAU. Like, it was getting out of control and hacking into everything. He was some kind of security officer, so I guess he assumed that waking up in a sim meant the WAU was trying to mind-hack him to get access to stuff?

When Rutskarn mentioned the monster method I was waiting for him to segue into calling it a monster mash and then start singing the song. I was disappointed.

“He's exhibiting Simon-levels of panic and confusion, when he ostensibly grew up around this technology and has been given ample time to wrap his head around the idea.”

We’ve had flight for more than a century and we still get people who freak when they do it for the first time. Though this might be one of those realistic/fun dichotomies, panicked people aren’t especially fun to deal with, it can even shut down emotional response and shift helpers into problem solving mode, which isn’t great if you’re railroaded into being a bit of a dick, it can feel frustrating. But for others it’s a really immersing sense of dread and horror.

Precisely this. Just because something is common doesn’t mean all people will automatically be used to it. I mean, we all know what VR is; we’ve known about that tech since long before it became commercially available. But can anyone honestly say that putting an Oculus on their head for the first time is a mundane thing that they were 100% ready for?

Yes? It’s a HUD helmet. It might cause nausea, but it’s not some amazing thing, compared to what we already have. The jump from animals to engines was huge. The move from crappy low-res display, to less-crappy display is iterative.

Ah, you are one of those people:

https://www.youtube.com/watch?v=xAeVE3Lsr2I

Wow. That was really funny. I’ve never listened to the Co-Op Podcast. (Since I have no commute, I don’t have time or need for podcasts.) I know TotalBiscuit mostly as the insanely popular guy who opens a game and spends 10 minutes dicking around with the options to uncap anisotropic bling-mapping. But that entire conversation was great.

Yeah, they have a guy who animates snippets of their most random conversations and turns them into these… things. They are pretty funny. I think most are on the Polaris channel.

The guy in the chair, was he one of those that committed suicide during the upload? If so it might explain the erratic behavior.

Also, isn’t Simon used as a blueprint for the AIs and that might affect some people that are uploaded? (the people are a software and the Simon scan is the OS in other words)

“When talking about someone “dying” I'm much more concerned with the continuity and fidelity of their thought processes than with which particular pile of cells those processes are running on.”

Can you disconnect the “software” from the “hardware”, though? There’s no evidence whatsoever that this can be done. Just because the self and the brain are not identical does not mean they can be separated. The green of a green-painted object is not identical with the paint, but that doesn’t mean you can make it not be green without doing something to the paint.

I suspect (but do not know) that it might eventually be possible to hook two brains together (or a brain and a brain replacement) and let the brain-owner “drive” the second in parallel for long enough that you could begin to suppress parts of the first brain and the person wouldn’t notice, until eventually you could suppress 100% of the original brain with no effect on the person. This is basically what your brain does anyway when it replaces old cells with new, after all. But the amount of data-swapping you would need to do would likely be staggering. (Or not, I really don’t know.) And I have no idea how long it would take your brain to get used to running in parallel in that way.

“Can you disconnect the “software” from the “hardware”, though?”

In real life? That sounds like some post-singularity shit to me. Brains are complicated the way space is big.

But in fiction? Obviously it depends on the fiction. Trek just flat-out presents a world where the new copy is 100% accurate, and sometimes even shows that the transition is seamless. (In one of the movies, Kirk is busy talking while the transport is happening.)

But I keep asking this same question with regards to SOMA. The game presents all of these copies as if transporting the software to new hardware was reliable / straightforward. Catherine even says, “You’re still you.” But then you’re obviously different with regards to sensory input. It’s hard to tell if the game is – for the sake of the thought experiment – saying that copies really are 100% accurate regardless of hardware, or if this is one of those things you’re supposed to wonder / worry about.

“Trek just flat-out presents a world where the new copy is 100% accurate, and sometimes even shows that the transition is seamless. (In one of the movies, Kirk is busy talking while the transport is happening.)”

Kirk #2 has been assembled in a state halfway through the act of talking. That doesn’t automatically mean Kirk #1 is not dead, only that Kirk #2 knows what he knew.

From a real life hardware perspective there is absolutely no way that you could 100% simulate all neuronal chemical activity in any brain. The calculations for anything more than a few molecules require empirical approximations.

Even simulating a single molecule requires approximations of the Schrödinger equation that demand ever-increasing amounts of computational time for ever-decreasing gains in accuracy.

So no. There is no way you can 100% simulate a brain (Or any other chemical process).

Approximations would have to be made.

Even if approximate simulated neurons that give the same empirical I/O results could be used, you’d still need a massive array of microprocessors running in parallel. (Researchers are already doing this to simulate invertebrate brains, and putting them in control of robots. The ethics of this get pretty hairy if you go bigger than an insect.)

In reality there is no physical way to get anything better than an approximation that you could hook up to sensory input and output. But it would be running on a supercomputer in an airconditioned room somewhere while its avatar is controlled by wi-fi.

But maybe Simon is running on some newfangled quantum biochip handwavium nonsense.

That all depends on how much quantum fluctuations influence our thoughts. It could be that the majority of them depend on minuscule fluctuations of individual subatomic particles, in which case 100% replication is impossible. But it could also be that only a tiny portion, or no portion at all, of us is affected by those, in which case 100% replication is just a matter of enough computing power and energy.

I don’t think it’d actually be necessary to 100% simulate the function of the brain in order to move “someone” to a “new” brain, though, because your brain dies off and replenishes itself constantly. “You” are not identical with a particular arrangement of cells and chemicals.

I suspect it will turn out to be necessary to have an extensive hardware hookup, possibly through the corpus callosum, and it would literally feel like you had two bodies for the time it would take to train the new brain to “support” you.

It would be exceptionally bizarre, to be sure, but there’s a fair analog for this on a smaller scale with the way stroke victims can train their brain to “re-route” around damaged areas and re-acquire lost capabilities over time. Even adult brains are fairly plastic, and it seems that stem cells and other therapies can improve this plasticity.

Having only watched through this playthrough (and a bit of another on YouTube just because you guys haven’t been playing fast enough :-P), I take Catherine’s “You’re still you” as pragmatic–she’s telling you what she thinks you want to hear so you don’t question it and will act according to her purposes. To me it seems like something the player is definitely supposed to question and consider.

“You’re still you” is an opinion, and not even really falsifiable. Certainly you are as continuous with the ‘you’ that walked across the ocean floor a minute ago as anyone is from minute to minute… but are you the same you that was in a state of unconsciousness for ten seconds after the monster hit you?

If you are the same as an unconscious and incoherent entity, why can’t you be the same as an entity running in non-potable water substrate instead of doped silicon substrate?

“You are you,” is more a tautology than an opinion.

Those who have not yet might want to read up on the “real” Rainman. Supposedly his brain halves were disconnected and worked independently (a 2-core human CPU, in other words).

But unlike others he retained a high speed bridge between the two halves so information that one brain lacked could be retrieved from the other half.

Hmm! I wonder if a human brain is a RAID of some sort?

The real Rainman’s brain https://www.psychologytoday.com/blog/the-superhuman-mind/201303/the-brain-the-real-rain-man

Actually, is it me, or does Catherine seem slightly different every time she jumps between computer terminals?

She doesn’t notice or acknowledge it, but her mood and persona shift slightly, as though the same memories are being interpreted in a slightly different way.

I was wondering about this sort of thing when Shamus or whoever was talking about the “guess the number I’m thinking of” trick.

Would you necessarily think of the same number each time? In the case in this episode, they try different simulations (a cabin, the beach, etc.), and the questioning isn’t exactly the same either. The setting, the way the questions are asked, any minor differences will surely have an impact on the thought process. Not to mention that the scanned person might lie.

“Escape From Monkey Island” is your go-to for time travel puzzles in adventure games? Puh-leeze. Does no one remember the Infocom game, “Sorcerer?” It had an area called “The Coal Mine” which presented you with an interaction between two chronological versions of “you”:

The Coal Mine

Save the game. Check that you are carrying nothing but the orange potion and your spell book. Go east into the Coal Bin room. Open the orange vial and drink the orange potion. A future you will show up. Drop the orange vial. Your future self will tell you a combination number; make a note of it. Give spell book to older self and then go east into the Dial Room. Turn the dial to the number you were just told by your older self. Open the door. Go east into the mine.

Get the rope and go up, southwest, get the timber, northwest, and west. Tie the rope to the timber, drop the timber, and throw the rope down the chute. Climb down the rope. (If you don’t land in the Slanted Room, you were still carrying some other object; restore.)

Take the shimmering scroll (golmac: travel temporally) and cast it on yourself from the scroll. Open the lamp and get the smelly scroll (vardik: shield a mind from an evil spirit). (Without your spellbook, you can’t gnusto the scrolls you find in here, not that you have time to anyway.) Go down. Now you must repeat the actions that you saw yourself performing. Say younger self, the combination is [number] (the number you were told before). Wait one turn; your younger self will hand you your spellbook. Go down. Read the smelly scroll and gnusto vardik. Wait a turn or so until you are told that the vilstu potion has left you exhausted, then sleep.

This was in 1984, and the Escape From Monkey Island puzzle wasn’t “created” for another sixteen years.

And you call this a show about video games. Harrumph and monocle!

That was a dick move from Catherine in the end. Why did she break character before we unplugged the guy? It was unnecessary and cruel.

Apologizing made her feel better, and she figures her simulated emotions are going to keep being simulated, while she’ll be resetting his memories back to their previous state?

Except she isn’t resetting his memories. They are literally killing a guy, then pulling another copy of him into existence (only to kill him a minute later). So she is basically telling the guy on his deathbed, “This isn’t real, we tricked you and are going to pull the plug now. Sorry.”

Interesting idea: Create two instances of him in the same simulation, and inform them whoever spills the beans gets to continue existing.

Conversely, if there’s something you want to know from a brain scan, and they can’t decrypt whatever it is they want from the data they have, either the scans are in a form that can’t be teased apart, or we’re seeing someone trying to figure out what the source code of an application is with only the install disk and no de-compiling software available.

A good summary of the two theories of identity in conflict in the game is given in this video. It also explains “the continuity” position far better than the game. Mark Sarang would probably side with John Locke and Catherine Chun with Derek Parfit.

Also, if you really want to get into the philosophy of identity, here are some summaries of the various problems and positions by some philosophers:

Identity

Personal Identity

Relative Identity

Identity Over Time

Personal Identity and Ethics

Not quite. The video explores both of those options and raises questions about both. The “you is cells” thing has problems when you can be transferred into a new body, and also when your whole body regenerates. The “you is continuity of mind” thing has problems when you are duplicated, but also when you go to sleep, are knocked out, suffer amnesia, etc.

And that’s the reason why the question is still being raised by philosophers even though the technology has advanced incredibly since it was first thought of. There still isn’t a satisfying answer to it.

“Transporter Problem” is not much of a problem if you think about it logically.

There is no “you” left behind, matter is transformed into energy which is then transformed to matter.

There is no pile of goo left on the teleport pad.

Whether one is spiritual or pragmatic does not matter in this case.

Now Simon’s “dilemma” is different as he’s a clone or copy (the original him was not transformed from matter to energy).

Edit:

SOMA raised the interesting question of the soul.

In my eyes the soul and consciousness are the same thing. If your mind is paused, so is your soul.

If your brain is copied, so is your consciousness and therefore your soul.

Take Catherine for example, one can’t deny that she is sentient despite being a copy.

(I also can’t help but feel that Catherine is running on a different OS than Simon is, considering how stable she seems compared to him)

Er… not exactly.

See, transporters try to convert as much of the same energy into the final matter as possible, but they actually always lose at least a percentage. If there’s a negative space wedgie affecting the transporters, you go in knowing that 50-100% of the “you” on the other side will be replicated from stored energy on hand at the destination.

The extra energy gets dumped into the power grid and used to power holodeck games, or whatever.

That’s how a transporter can clone a person. Two transporter beams dissolve 50% of your matter each, and then they replicate the missing parts and interpolate the missing data.

Considering the inherent dangers of interpolating from such a fragmentary data set, W.T. Riker is lucky he didn’t come out looking like something from Deep Dreaming.

“but they actually always lose at least a percentage” Can you point me to the science article for this?

I was speaking of a rhetorical transporter based on the idea/concept (which is all that exists today).

maybe https://en.wikipedia.org/wiki/Laws_of_thermodynamics#Second_law ?

Actually, the video touches on that as well. Because sometimes a single person gets split into multiple persons, or multiple persons get fused, it’s not the energy gained from your destruction that is transferred*. Rather, information gained from scanning you during your destruction is what’s sent to the destination, where you are rebuilt from surrounding matter and energy. So there is a you being left behind, in a floating gas of loose energy left after disintegration.

*Even if we assume 100% matter/energy transformation and energy transportation, you can’t recreate two identical objects with the energy of just one object. And this is all assuming that the energy coming from your destruction is somehow still you, and not just a generic type of energy.

Having molecules interpolated or replaced is not an issue for me at least, the same happens daily in the human body.

Now, I can’t claim that the death of a brain cell and its replacement with a new one won’t alter my personality over the years (it probably does, just as what we eat and drink affects us).

Now, if we take one transporter in particular (Star Trek) rather than a theoretical one:

Why didn’t they use transporter tech to renew the body (but retain the brain pattern)?

This would allow eternally youthful bodies. (can’t recall if they ever addressed that in any of the series).

Also, if people/crew died, why not use some reactor energy and re-create a person from their last known pattern. Even if a red-shirt died you could always “revive” them.

Lost a limb in an accident, or had a lung damaged? Just send them through the transporter and replace what was damaged.

Star Trek was always a juxtaposition of fantastic tech and ordinary tech.

It’s a poor presentation by the game, but the guy wasn’t just slightly upset. I mean, we adjusted easily to Simon transitioning from that chair into the future, but imagine if you weren’t watching it through a computer screen, and it actually happened to you. You put this thing onto your head, pull it up, and suddenly everything is different. You wouldn’t be just slightly upset, no matter how familiar you are with the theory of it.

And that’s one of the reasons I don’t really like this game. It just raises a bunch of questions in an awkward way, doesn’t say anything about them, and doesn’t present them that well. It’s too flat.

So I ended up picking up SOMA when it went on sale last weekend, and it was actually really fun to play with the Wuss mod. Kind of a Firewatch meets Dead Space insofar as gameplay and aesthetic. It’ll be fun to watch the rest of this season actually knowing what’s coming now.

There should be more games that acknowledge the environment they exist in. Everyone is hooked into the internet these days, and they’re always talking about the games that they’re playing with each other, asking advice, finding common ground in complaining and praising various elements, and snooping around to see whether a game is a good purchase in the first place. SuperBunnyHop has been grousing lately about the nature of multiplayer shooters being lost to history after their playerbase has moved on, but there’s a certain single-player game that has taken its environment fully into account…

Brace yourself for this (trigger warning?), I’m talking about Dark Souls.

Everything about Dark Souls feeds into the online environment. You’re not supposed to seclude yourself from the outside world. There are tools everywhere within the game for people to communicate with each other in one way or another, and the game is designed with all these goofy little secrets that people are supposed to share with one another.

“When talking about someone “dying” I'm much more concerned with the continuity and fidelity of their thought processes than with which particular pile of cells those processes are running on. ”

It’s a difference of perspective, I guess. From my self-preservation perspective, I’m much more interested in me than a second me that’s exactly like me. Say I was cloned perfectly and then disposed of, and my clone was living my life. That’s technically a perfect continuation of me, and perhaps other people won’t ever notice or complain. But I don’t get to enjoy it on account of being dead, and I sure wish my clone would try finding my killer if he ever found out. Or to put it another way: I do not believe my literal point of view would change if I was cloned. The game would end early while an NPC did the rest off-screen.

And from a fiction perspective, I think dealing with the original person is more interesting. Sometimes characters exist, either by way of magic or way of science or reincarnation, that are perfect or imperfect “clones” or ghosts or whatever of people a long time in the past. I remember several villains being literal “ideas” that would possess people like ghosts to carry on their legacy. That’s frustrating to me. Unless that personality is just amazingly charismatic (I recently watched an anime in which Leonardo da Vinci’s mind was uploaded into a clone body in the present, and he was a wonderful villain), it’s annoying to deal with whispers of the past that should long since be gone and have no place in the present.

This is part of why the lore of (trigger warning) Dark Souls 2 is annoying.

I don’t know what it is but that video about the transporter and following discussions really pisses me off and I can’t explain why. Seriously I was like this https://www.youtube.com/watch?v=gZEdDMQZaCU but now I’ve gone full circle and actually am finding it funny (seriously, I’m chuckling like a loony right now) how annoyed it made me. That’s bizarre.

I forgot to comment on this earlier but, I like Shamus’ remark on how it would be a waste to delete something.

I agree with that, heck I would not have an issue with another “me” out there.

More than likely we’d help each other, and we’d both be very pragmatic knowing that one gets to leave and the other not.

Obviously the me left behind would not want to die, but that me would not deny the other the chance to live either, as both are me, myself, and I.

I think two (or more) me would be able to do so much more than just one me creatively speaking, and we’d easily understand each other.

If I recall correctly, Stargate SG-1 actually had a few episodes where they met multiples of themselves (the character Samantha Carter ended up meeting a bunch in one case), although those were from alternate dimensions and not true copies.

PS! Do note that when I say copy/clone I mean a full one not just body but mind as well (which is what SOMA touches on), a mere clone could have different brain connections than the “original” if it was raised differently, or raised in any way at all. So when I say copy I mean split from the same moment in time and for all purposes both are the original/copy at the same time.

This is late, but I wanted to respond to the whole ‘deleting’ thing.

I deleted the Simon scan, and here’s why. The Scan isn’t sentient, it’s just in the computer at the moment. But while the scan exists in the system, the WAU can bring back more Simons in robots.

This universe sucks, I don’t want more of me to have to deal with it. My character will be the last Simon, at least. (Well, at least that was the plan at the time.)