I worked at Activeworlds for a lot of years. Activeworlds is a social / gaming world along the lines of Second Life or Roblox. It’s a virtual world with user-made content. The experience gave me some interesting phobias regarding CPU cycles.

Perhaps some anecdotes would help. For the sake of argument, let’s say these are all taking place around 2003 or so.

Joe User is building himself a virtual office in Activeworlds. He wants dark windows with a heavy tint, but the object library only has these windows with 50% transparency. Joe doesn’t understand transparency. He’s not a graphics artist or a programmer. He’s just a regular person, and to him tinted windows are tinted because they’re “dark”. He tries changing the color of the window from the default blue to black, but confusingly it doesn’t help. He can still see through the window just fine.

He makes a copy of the window to try another color when he has a eureka moment. Looking through both windows – one in front of the other – really makes a huge difference. What’s really happening is that the first window is blocking 50% of the outside color, and the second window is blocking 50% of the remainder for a final opacity of 75%. Joe doesn’t know this. All he knows is that this looks nicer. He makes another copy, and it’s better still! This is clearly the key to success. Two more windows perfect the look, giving him an overall opacity of 97% or so. He’s got five windows stacked up here. He nudges them so they’re only a centimeter apart.
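The arithmetic behind Joe's discovery can be sketched in a few lines. This assumes each window simply blocks a fixed fraction of whatever light reaches it; the function name is made up for the illustration.

```python
def stacked_opacity(layer_opacity: float, layers: int) -> float:
    """Combined opacity of identical semi-transparent layers viewed in a line."""
    # Each layer lets (1 - layer_opacity) of the remaining light through.
    transmitted = (1.0 - layer_opacity) ** layers
    return 1.0 - transmitted


for n in range(1, 6):
    print(n, f"{stacked_opacity(0.5, n):.1%}")
```

Two windows give 75%, and five give just under 97%, matching the numbers above.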

If you’re a professional, your eye is probably twitching by this point. This isn’t art, it’s sabotage. Alpha surfaces (like our partly transparent windows) must be sorted before rendering. It’s a constant struggle to limit the number of transparent surfaces you’ve got in the scene and you want to be very careful about situations where the user will end up looking through multiple alpha polygons at the same time. The program has to get the distance to each surface, then shuffle them around and put them in order from furthest to closest before they can be drawn. All of this work must be done by your CPU. (Maybe new graphics cards have some trick for this, but in 2003 this load went right to the CPU.)
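A minimal sketch of that sorting step, assuming each transparent surface can be represented by a single center point. The `Surface` type and its fields are hypothetical and not taken from the Activeworlds code.

```python
import math
from dataclasses import dataclass


@dataclass
class Surface:
    name: str
    position: tuple  # (x, y, z) center of the surface (an assumption)


def sort_back_to_front(surfaces, camera):
    """Return transparent surfaces ordered from farthest to nearest."""
    return sorted(
        surfaces,
        key=lambda s: math.dist(s.position, camera),
        reverse=True,  # farthest first, so nearer surfaces blend on top
    )
```

This work repeats every frame for every transparent surface in view, which is why five stacked windows per pane add up so quickly.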

But that’s not the bad part.

The bad part is when Joe grabs those five stacked windows and begins duplicating the group over and over to construct an office building out of them.

At a lot of development studios, if a member of the art team did this you could go to their cubicle and set them on fire without breaking company policy.

Meanwhile…

In another zone, Jane User is building herself a grand welcome area to her sea park. She couldn’t find any sea-themed stuff in the default library so she went online and searched for “3d models”. She found this awesome model of a dolphin balancing a ball on its nose. It was built in Maya or 3DS Max. She finds a converter and proceeds to take this thirty-thousand polygon dolphin and put it in her world as a statue, right near the welcome area. In fact, she builds two of them, facing each other, to form a little archway that new visitors will walk through when they arrive. She overlaps the models so that the beach balls are both in the same spot, making it look like the two dolphins are holding up the same ball. She stands back at a distance and admires her handiwork. Not bad, not bad at all.

She notices that this Activeworlds program is kinda slow all of a sudden. Oh well. Maybe they’ll get around to fixing this software one of these days.

That’s not the fun part.

The fun part is twenty minutes later when Bob Visitor arrives on his wobbly old 2001 laptop. He follows the path to the archway and walks through. As he passes under the beach ball(s), he’s actually occupying the bounding boxes of both dolphin statues at the same time. His computer is now performing collision checking on sixty thousand polygons and has basically stopped doing anything else. After a few seconds he figures Activeworlds has crashed. He closes the program.

Stupid buggy Activeworlds.

Then Jeff User comes along. He’s a kid. One of his friends tells him about this command you can put on objects that will cause them to display pictures from anywhere on the web. He’s figuring out this scripting language and he realizes you can set it up to show a whole bunch of images! He writes this long command to download all these gigantic (1280×1024, big for the time period) desktop wallpapers and animate them on a wall. It’s slow as hell for some reason, but it looks sweet!

Sadly for the people walking by outside, it slows them down too, even though they can’t see inside Jeff’s house.

Now, there were guidelines and rules and tutorials in place to encourage people not to do these destructive things, but you can probably guess how likely it is that young people will want to read technical documents before playing “a videogame”. This was exacerbated by the problem that people usually continued building and didn’t think about framerate until their computer began to slow down. And some people have a lot of tolerance when it comes to low framerates. And the icing on the cake is that everyone has vastly different computers. So one person with extreme tolerance for low framerate will use his brand-new lightning-fast computer and build until his framerate is in the single digits. Then other people show up with normal computers and it’s all madness and tears and crashing.

As one of the programmers trying to keep this system going, this setup made me paranoid. In a videogame, if the art team uses a texture eight times larger than allowed, or if they blow through their polygon budget by a factor of ten, it’s no big deal to the coders. Maybe the program crashes, maybe it runs slow. Either way, it’s not your problem. If you’re feeling super-nice you’ll put in a warning message to let the artist know they screwed up. You can’t do this when you’re dealing with user content. Especially when you’re a small company and you can’t afford to drive people away for being bad at 3D graphics.

I had to operate under the assumption that any system could begin devouring system resources at any time. Maybe too much collision detection. Or the animation system might begin shuffling massive texture billboards around in memory. Or the alpha sorter might go nuts. Or the raw polygon-pushing stuff. Or the character animation system. Anything. Anything can run out of CPU or memory at any time.

The result is that you have to build your application like a tank. It needs to be able to absorb and mitigate any level of art asset insanity.

The ramifications of this are kind of crazy. A regular programmer will nod sagely and suggest you deal with CPU usage spikes by putting the problem code in its own thread. But that doesn’t help when any code can become “problem code”, and running every single system in its own thread can introduce a whole new batch of problems.

It wasn’t until recently that I realized this paranoia was unusual. Even now, it’s really hard to make a system without asking myself, “But what if this takes a really long time for some insane reason?”

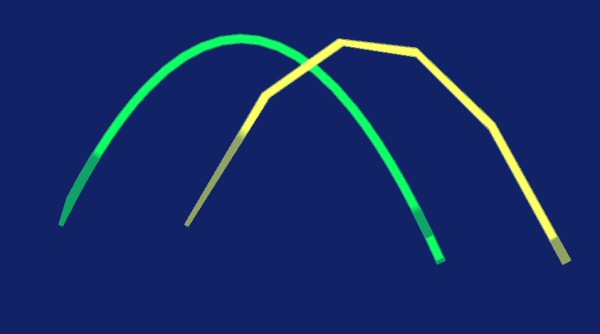

Let’s say you’ve got a physics simulation running at a nice smooth 60 frames a second. Say we toss a ball and watch it drop:

In an ideal world, if the computer is slow then we’ll get fewer frames, but each one will still be a timeslice of the exact same scene. If we go down to 10 frames a second, then we’ll end up missing 5 out of every 6 frames, but the ball will still follow the same trajectory:

But in reality, we end up with a badly mangled simulation that no longer works properly.

We get a different parabola because this isn’t linear movement. If the program says, “I’ll do six frames’ worth of accelerating right now, and then I’ll apply six frames’ worth of movement to my position,” then we’ll end up with the red curve above. Calculating 1% interest a day isn’t the same as 30% interest every 30 days.
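Here is a small sketch of that effect. It assumes the simplest possible update (add acceleration to velocity, then velocity to position) and made-up values for gravity and launch speed.

```python
GRAVITY = -9.8  # m/s^2 (assumed value)


def simulate(dt: float, duration: float, vy: float = 5.0) -> float:
    """Height of a ball after `duration` seconds, stepped in chunks of `dt`."""
    y = 0.0
    for _ in range(round(duration / dt)):
        vy += GRAVITY * dt  # accelerate for the whole step at once...
        y += vy * dt        # ...then move for the whole step at once
    return y


fine = simulate(1 / 60, 1.0)    # roughly 0.02 m
coarse = simulate(1 / 10, 1.0)  # roughly -0.39 m
print(fine, coarse)
```

The exact answer for these numbers is 0.1 m. Both runs cover the same one second of game time, yet the ball ends up in different places depending only on how the time was sliced.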

And of course, what if there’s a wall in the way? The green line will bounce off it like a good physics simulation should, while the red line will skip over it. One frame it’s on the left side of the wall, and the next frame it’s on the other side of the wall, and we never got a frame where the ball was intersecting the wall and was available to bounce off of it.
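The skipped wall is easy to reproduce. This sketch assumes a ball moving in a straight line and a collision check that only looks at where the ball is at the end of each step. The wall position and thickness are invented for the example.

```python
def hits_wall(speed, dt, wall_left=1.0, wall_right=1.1, max_x=2.0):
    """True if any sampled position lands inside the wall."""
    x = 0.0
    while x < max_x:
        x += speed * dt
        if wall_left <= x <= wall_right:
            return True  # found a frame where the ball overlaps the wall
    return False         # the ball stepped from one side straight to the other


print(hits_wall(3.0, 1 / 60))  # small steps land inside the wall
print(hits_wall(3.0, 1 / 10))  # 0.3 m steps jump clean over it
```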

Now the obvious solution is to just go back and do the per-frame calculations. If it comes time to update the ball and we’re five frames overdue (so six frames of simulation are owed, counting the current one), don’t just do one giant move times six. Do all six frames, one at a time, before going any further.
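A minimal sketch of that catch-up approach, assuming the simulation exposes some `step_fn(state, dt)` callback (a hypothetical name):

```python
STEP = 1.0 / 60.0  # one simulation tick, in seconds


def catch_up(state, elapsed, step_fn, step=STEP):
    """Advance `state` in fixed ticks; return (new_state, leftover_time)."""
    while elapsed >= step:
        state = step_fn(state, step)  # always the same small step size
        elapsed -= step
    return state, elapsed             # leftover carries into the next frame


# Count how many ticks are run after a 105 ms hitch.
ticks, leftover = catch_up(0, 0.105, lambda count, dt: count + 1)
print(ticks, leftover)
```

Because every tick uses the same `dt`, the ball follows the same arc no matter how late the update arrives.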

That’s a pretty good solution. Unless the physics simulation is what is causing the slowdowns in the first place.

That seems like a funny idea when we’re talking about a single ball arcing through the air, but if we get a few thousand of them (say, debris from that giant robot you just blew up) and they all need to collide with the scenery (say, the building right behind the robot) then your li’l CPU might have some serious math homework to do. If you’re five frames late because you were running physics simulations last frame, then attempting to run six frames of simulation THIS frame is not a solution, it’s the start of a death-spiral.
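A toy model shows how the spiral feeds itself. It assumes every simulation tick costs a fixed amount of real time and that each frame tries to run every tick it owes. All the numbers are invented.

```python
STEP = 1.0 / 60.0  # game time covered by one tick


def frame_times(step_cost, frames=8, render_cost=0.002):
    """Real seconds spent on each frame when all owed ticks are simulated."""
    times = []
    last = STEP  # pretend the previous frame took exactly one tick
    for _ in range(frames):
        owed = max(1, round(last / STEP))       # ticks needed to catch up
        last = owed * step_cost + render_cost   # which takes this long...
        times.append(last)                      # ...and sets the next debt
    return times


print(frame_times(step_cost=0.005))  # cheap physics: settles immediately
print(frame_times(step_cost=0.030))  # costly physics: each frame is slower
```

Once a tick costs more real time than the game time it covers, every frame owes more than the last and the loop never recovers on its own.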

I’m reasonably sure I’ve seen this death-spiral in commercial games. In Half-Life 2: Episode 2 there’s a scene at the start of the game where a bridge collapses. My computer was on the low end of the spectrum when the game came out, and this scene spiked exactly like our hypothetical runaway physics simulation. The frame rate cascaded downward, with each frame taking longer than the one before it until the game came grinding to a halt and the sound began stuttering. This ended in a long (ten second?) pause before the game snapped out of it again and returned to normal. I’m guessing the physics was trying to catch up, and each frame it was frantically trying to make up for the deficit, which only pushed it further in the hole. The only reason it didn’t crash the game was that the bridge collapse was scripted to take a fixed amount of time. If this was an ongoing thing there would have been no coming back.

You can put in some kind of safety-valve trigger that will skip the physics if they start killing you, although detecting and isolating performance problems on the fly from within the thing you’re trying to measure can be problematic. And even if you guess right, skipping the physics means that you’ve got different parts of the game running at different framerates and at different levels of fidelity, which can lead to strange problems in other areas.

But what if we just decided we didn’t care? What if instead of trying to detect, correct, and mitigate performance problems, we just built a program with no such safeguards? I’m going to find out. To wit:

This game is going to run at sixty frames a second. Period.

Instead of treating 20fps as “not the best”, I’ll treat it like a failure state. If the game doesn’t have the power it needs, it won’t skip non-critical things to speed up. It won’t run parts of the system at a lower framerate. It won’t try to catch up. If the computer slows down, the game slows down. (And to be clear, the game won’t go faster if you have a fast computer. It’s capped at 60fps.) If for some reason the game is played on a machine that can only keep up 30 frames a second, it will feel like some sort of half-speed bullet time.
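In rough terms, the loop described here might look like the sketch below. It is only an illustration: the `clock` and `sleep` parameters are assumptions added so the loop can be exercised without real waiting, and none of the names come from the actual project.

```python
import time

FRAME = 1.0 / 60.0  # target seconds per frame


def run_frames(update, frames, clock=time.perf_counter, sleep=time.sleep):
    """Run `frames` ticks, capped at 60 per second, with no catch-up."""
    for _ in range(frames):
        start = clock()
        update()                          # always exactly one tick of game logic
        spare = FRAME - (clock() - start)
        if spare > 0:
            sleep(spare)                  # fast machine: wait out the frame
        # Slow machine: nothing is skipped or repeated. The next tick simply
        # starts late, so the whole game runs in slow motion.
```

Calling `run_frames(update, frames=600)` with a real `update` function would take about ten seconds on a machine that keeps up, and longer on one that does not.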

This saves… well, it saves a ton of work. An unbelievable mountain of uncertain guesswork and difficult-to-test safeguards can just be eliminated. Since we’re not doing heavy-duty 3D, we can just assume the speed will be there.

I don’t know. I just clocked it, and at this point in the project I’m idle for about 730 milliseconds out of every second. So, I’m idle about three-quarters of the time. This is using a debug build with no optimizations.

I don’t know if this is a good idea, but I’m going to try it. I can always add more sophisticated time management later if I need to. To a certain extent, this is like a man announcing that when he takes the dog for a walk, he’s no longer going to wear the crash helmet, safety harness, steel-toed boots, and bulletproof vest. If nothing else, this will be a new experience for me.

And yes I did just write a 2,000 word entry on a feature I’m NOT putting in.

You can’t stop me! I’ve got the source code!

I’m not clear on how that works – how does “if my computer only has power for 30fps, it’ll be in some kind of weird bullet time” even happen? Where are the other 30 frames magicking themselves in from? Are you saying that each frame will appear twice? I’m really confused. “If the computer slows down, the game slows down” I understand – that would be fewer frames per second. But you just said that it never has fewer frames per second. It’s locked at 60Hz.

No, you got it. The framerate just drops. I said it was “locked” at 60 to (try) to make it clear that 30fps wasn’t a deliberate fallback state. It’s not DECIDING to drop to 30fps. It’s just happening.

Probably could have phrased that better.

Soooo, in terrible pseudo-code:

frame_start = time_in_micros();

do_the_thing();

sleep_time = 16666 - (time_in_micros() - frame_start);

if ( sleep_time > 0 ) micro_sleep( sleep_time );

Just one dumb question, then. Why 60Hz, when it interacts so poorly with the times given by computers (generally microseconds in modern computers)? Historical habit? 64Hz makes a nice, round number of max-micros-per-frame :)
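For reference, the per-frame budgets being compared work out as follows. This is plain arithmetic, with the names made up for the illustration.

```python
MICROS_PER_SECOND = 1_000_000


def frame_budget_micros(hz: int) -> float:
    """Microseconds available per frame at a given tick rate."""
    return MICROS_PER_SECOND / hz


print(frame_budget_micros(60))  # about 16666.67, not a whole number
print(frame_budget_micros(64))  # 15625.0, a whole number of microseconds
```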

Because there’s so much variation in the time that it takes to complete a frame that the game will almost never run at the precise rate you want.

Whether the game runs at 60Hz or 64Hz, it’ll still spend several milliseconds per frame waiting to start the next one (or if it’s slow, working overtime to catch up).

(And also, games almost exclusively use milliseconds in my experience. There’s just not enough time to count in microseconds).

As mentioned above, this has to do with monitor refresh rate.

I would imagine that the VAST majority of LCD monitors out there run on 60Hz refresh.

So more than 60 would be pointless (assuming you don’t want to have tearing), less than 60 would be less smooth.

I guess because it is a multiple of 10 (decimal), which makes it a far more comfortable number for non-computer people. That’s why some software* calculates kilobytes, etc. by using factors of 1000, in what I assume is a vain attempt to not confuse users who don’t understand the number either way.

*The other reason is dumb programmers. Let’s just say I’ve once stumbled upon professional code using a factor of 1000 for internal calculations.

A real kilobyte is actually 1000 bytes. The ‘kilo’ comes from SI prefixes and means 10^3.

What people usually understand as kilobytes (i.e. 1024 bytes) are called kibibytes (KiB), from ki(lo)bi(nary)bytes.

So when USB drive makers put ‘4GB’ on the package and give you 4000MiB, they’re actually not lying.

Well, they still are being incorrect. Since GB is 10^9B and not ((2^10)^2)*1000B. SI prefixes derive from base unit, not from the multiplier one step down.
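The gap between the two readings of "4GB" is easy to put a number on. A quick sketch, using only the standard SI and IEC definitions:

```python
GB = 10**9    # SI gigabyte
GIB = 2**30   # IEC gibibyte

decimal_4gb = 4 * GB   # 4,000,000,000 bytes
binary_4gib = 4 * GIB  # 4,294,967,296 bytes, which is 2^32

shortfall = binary_4gib - decimal_4gb
print(f"{shortfall:,} bytes, {shortfall / binary_4gib:.1%} of 4 GiB")
```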

“Since GB is 10^9B”

Only for hard drives. A 4GB memory stick has 2^32 bytes. CPU cache works the same way.

The problem with kibibytes is that nobody knows what they are (and everyone knows a kilobyte isn’t really a thousand bytes,) and also that it sounds kind of anal-retentive.

I can’t believe I’m taking the descriptive language side here vs the prescriptive side, but the ?[ei]bi prefixes just sound dumb.

Introducing a different definition is a great way to get around accusations of lying.

Correct me if I’m wrong, but isn’t the whole “kilo means 1024 in computers” tradition a product of unjustified inertia? There are some types of memory that need to come in powers of two in order to be addressable (CPU cache, RAM chips), but there’s no particular reason to use it when talking about HDDs, file sizes or network speeds. Though ironically, I like seeing file sizes reported in KiB – unlike kB, there’s no ambiguity which scale is used.

I always assumed it was because Americans are used to looking at 60Hz things.

It’s because most screens are 60 Hz.

Capped to 60 frames per second means if something takes less than 1/60th of a second – we’ll wait until we filled the whole 1/60th of the second.

“Locked to 60 frames per second” is probably the wrong way to say it. The game will run the world as fast as it possibly can, but is designed to be viewed/played at 60 “ticks” to the second. It means if something takes more than 1/60th of a second we’ll wait until that bit of update and drawing is done before doing anything more. Nothing else happens in the game world, it is timed by the work needed to be done.

The capping will stop good computers from running the game at 4 times (or more) the designed speed, but nothing will stop a bad or busy computer running it at half (or less) the speed.

The “We” is a figure of speech, I’m not in on this. ;)

I think what Shamus is saying is that “from the point of the code, it’s executing at 60 fps” but from the point of the world, it’s “you see 30 screen updates per second” and since the “do not run at higher than 60 fps” sleep loop(s) never happen(s), the code is oblivious to the slowdown that you see.

As the blog post explains, in his earlier career he has written code that deliberately detects a drop in performance and tries to save CPU cycles by using approximations or shortcuts in an attempt to maintain the frame rate. This time he is just going to assume everyone has a machine able to run the game at 60 FPS, and whatever happens on slower machines is undefined behaviour.

Yup, that’s pretty paranoid coding, but it’s interesting to see (and hear about). My (very) limited experience was always ‘make it work and damn the torpedoes’ but I was coding fairly simple things on powerful machines with very strict deadlines.

Really enjoying this series! I love learning about the backend of programs.

Yeah…alpha sorting can be a huge pain in the ass. For the last project I worked on (for University) I needed some particle system, with many, many particles, all with an Alpha Channel. And then I wanted more particle systems to make it look nicer, but I didn’t want to sort them, too, so I just made an additive shader…these were explosions and lazers anyway, so they looked even nicer overbright.

Regarding the collision: With a functioning BVH (Bounding Volume Hierarchy) you would not have to make a single triangle-triangle test in the example with the dolphins (if there were any triangle-triangle tests in 2003 at all…oriented bounding boxes are already heavy enough when dealing with many objects). But collision detection and physics in general is evil black magic in any case and making it work correctly within the constraints of a realtime application can drive you insane. Especially if you not only want collision detection, but also collision response, with objects changing their movement vectors after a collision, since you first have to simulate a complete frame, check for collisions and then roll the complete scene back to the first collision, modify the vectors and restart the simulation from that point on, until you get to the end without further collisions. Madness…
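As a rough illustration of the idea, here is a single level of such a hierarchy: each model is split into parts, each part gets an axis-aligned box, and only parts whose box touches the player are passed on for detailed tests. The part boxes and polygon counts are invented, and a real BVH would nest these boxes several levels deep.

```python
from dataclasses import dataclass


@dataclass
class AABB:
    min_corner: tuple  # (x, y, z)
    max_corner: tuple  # (x, y, z)

    def overlaps(self, other: "AABB") -> bool:
        """True if the two boxes intersect on all three axes."""
        return all(
            self.min_corner[i] <= other.max_corner[i]
            and other.min_corner[i] <= self.max_corner[i]
            for i in range(3)
        )


def polygons_to_test(player_box, model_parts):
    """Sum polygon counts only for parts whose box touches the player."""
    return sum(count for box, count in model_parts if box.overlaps(player_box))
```

A player walking under the ball would overlap few or none of the part boxes, so most of the sixty thousand polygons would never be examined.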

But, for the player, sometimes, amusing madness when a bucket gets stuck in a chain-link fence after blowing up a street full of derelict nuclear cars and unwittingly killing the trader and her dog living on the bridge with said fence.

One thing I’ve wondered about for quite a while is what the hell happens when I enter my house in Skyrim. It happens in many other games, but I’ve been playing Skyrim for the last few weeks, so it’s the most recent.

I enter my house and suddenly some things I arranged without any problem are propelled with terminal velocity in all directions, bouncing through the room and knocking other things over. Even objects placed by the game(’s designers) do it, especially in my new Hearthfire DLC house near Falkreath.

I built this house myself and it is HAUNTED. Rearrange some pots and when you enter the house next time, everything will be in the place it was before. Put a few bottles of wine on a table or a weapon/jewelry in a display case? Next time you visit, it will all lie strewn on the floor and there will be grubby fingerprints all over your statue of Dibella.

I noticed that too. It’s a common issue and it has to do with their content loading system. When you enter an area the game tries to load everything’s locations. Unfortunately, sometimes it has to approximate them, or items are in partially ‘invalid’ states such as sticking through another object. When the items spawn the physics simulator kicks in and the items react accordingly, thus you get the physics explosion when you walk into your house.

I poked around the modding tools at one point and there was an article about how to test item placement in rooms you created to specifically avoid the physics explosion problem. It usually involved using a tool to simulate the physics or using workarounds to temporarily disable physics on an item.

Ooohhhhhh. That explains why items are sometimes placed slightly above a surface and then fall down a bit when you enter a new room and gravity kicks in. To prevent poltergeist activity.

There’s actually a trick that involves dropping stuff on the floor of your house from your inventory, then walking out the door and saving (distinct, NOT the auto-save). Reload the save, go back in, and everything you dropped *should* be safe to place.

I’ve tested it and it works, though I have *no idea* why.

Trouble is, you have to go through the process again every time you accidentally put one of those items into your inventory while manipulating it (which WILL happen, lots), because the point is that objects right out of your inventory aren’t “safe”. Oh, and some objects, like the dragon-priest mask, will never be safe even with this, so I can’t be arsed anymore. Why the hell can’t we move and rotate the items anymore when placing them, anyway??

You might want to look it up for the process. It’s been forever since I did it and I might have missed a step.

Is your thinking behind this maybe also applicable to other companies and design decisions?

In several Spoiler Warning episodes there came up one point: that developers today are too afraid of the player doing anything not predicted by them and so they don’t give the player (m-)any freedoms. (Un-)Like all the abuse/glitching possible in Deus Ex, often simply created by allowing the player lots of freedom, even to build high crate towers to climb over everything and break the game if they really want to.

I’m sure you could have imposed some restrictions: No windows too close to each other, player imported models’ hitboxes may not touch (and can’t be walked into?)… oh, and also players may not jump, cover can only be used in specially designated chest-high wall areas, all the buildings and waterparks have to be linear experiences and all the buildings are instances!

In fact, just remove the building part altogether. All players really want to do is shoot each other.

Wouldn’t that mean as your computer is slower, the game gets easier? Slow motion is a very powerful tool to have.

Yes. Although that would be a really crappy way to cheat. Probably better to just make real cheat codes available so nobody goes to all the trouble of finding a tool to slow down the game.

Why not sell the cheats as DLC? I mean, even Saints Row 4 could do that before the game was actually released. You could, like, hand out the game for free, but make it so brutally hard that people will want to pay for godmode.

Considering the nature of the internet, I suppose something like this has already happened somewhere.

Isn’t that the whole Free2Play/Freemium business model?

The standard model is to make the game not fun, and charge for something that promises to make it fun. (Actually making the game fun appears to be optional in that business model)

Actually I think the more ideal (and potentially more successful) model for FTP is having nearly all the gameplay content free and simply adding convenience/cosmetics as the paid stuff. Basically the kind of design where a person could still enjoy the game without paying, but they’re likely to become invested enough in it to want to shell out on the cosmetics for ‘identity’ or whatever.

The problem with a model that leaves out much of the fun in a game is that it’s less likely to retain players – some might get hooked, but I bet a bunch will see the apparent pay-wall and think “this isn’t worth it”.

No, that’s not the ideal, because you need to get people to pay to keep it running, and keeping a game running entirely off of the cosmetic things is pretty hard to do. It’s why Glitch failed, mainly.

If you’re going to do F2P, you need a better way to get people to pay than hats.

It seems to be working for Dota…

DotA 2 doesn’t need to make money though, same as TF2 (TF2 hasn’t been making money by itself). It brings people to Steam, making it more likely that they buy something: that’s where Steam makes money. Don’t really need to make the F2P model sustainable.

League of Legends, then. That’s Riot’s only source of money (technically you can buy champions, but other than that it’s all skins and boosts) and holy cow do they have money to BURN.

This absolutely IS the best way to do F2P. If you ask me, it is the ONLY way to do F2P. People are willing to pay for cosmetics and convenience. Cosmetics are status symbols, convenience is optional. You don’t want to limit the free players, because they will have less fun with the game and then play something else, reducing the number of players, and you want as many players as possible to fill your world and as potential customers. A player who leaves after a day or two will never pay for anything, but when he can play the complete game, albeit without some optional features, he might one day see some cool hat that he wants and buys. (My complete thoughts on this, using the example of SW:ToR, can be found here, if anyone is interested)

‘Let’s limit the number of skill bars they can have to two so they can’t put all the skills they need to use in combat on them, let alone all the teleports and mounts and social skills! That’s the best idea EVER!’

SWTOR’s F2P model was pretty awful.

No, it’s the nature of the Pay To Win model, which is a highly-maligned subset of the F2P/Freemium model.

Highly-maligned and numerically dominant subset, which makes it standard.

Good point. Guess that’s why we can’t have nice things.

You know what else is a numerically dominant subset of F2P?

Failed F2P games.

I like to think this is not a coincidence.

For Pay2Win to have any lasting power, there has to be something holding the free players in, so the pay players have someone to flash their cash for.

Didn’t Sleeping Dogs?

In this case, the cheat could be a piece of DLC malware that slows down the computer enough to make the game playable. It’s brilliant, you’ll make millions!

You say crappy way to cheat, I say this justifies the turbo button that is still on my case.

As a “this will always be running at a consistent speed so it doesn’t matter” there are a couple of concerns:

Mains isn’t 60Hz everywhere and so there is a lot of 50Hz preference in displays from when the refresh was linked to the mains and everyone standardised their expectations. Watching a 60Hz refresh rate at 50Hz is not good for micro-stutter. Someone who overclocked their display to 72Hz finding out that they have now broken a game isn’t great. If in 5 years we all decide that low persistence strobing backlight 100Hz or 200Hz LCDs are the correct price/performance point then sticking to a 60Hz world state tick is going to look weird.

This will not always run at a consistent speed and so people will have to adapt. The W key moves up at 10 ingame metres per real second when at 60Hz but 5m/s at 30Hz. Yes the entire simulated world is slowing down (so in-game m per in-game s are constant), no that doesn’t make it any easier to manage the controls when you’ve got to feed the performance of the game back into your control desires.
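The numbers in that example follow directly from moving a fixed distance per tick. A small sketch, with the function and constant names invented for the illustration:

```python
DESIGN_TPS = 60  # ticks per second the game was tuned for


def real_speed(design_speed: float, actual_tps: float) -> float:
    """Metres per real second when the game only manages `actual_tps` ticks."""
    per_tick = design_speed / DESIGN_TPS  # distance moved every tick
    return per_tick * actual_tps


print(real_speed(10.0, 60))  # full speed
print(real_speed(10.0, 30))  # half the ticks, half the real-world speed
```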

As you say, this isn’t a trivial issue and many engines do have a fixed internal tick on top of which they can run a presentation layer to try and give a consistent view of the underlying system. And this is a fun project to get stuff done so making this decision makes sense. But it still looks like a horrible hack, especially with the performance of a 2D system.

The game has a fixed internal tickrate of 60 fps, and can’t go above that. If the CPU can’t keep up, then the game slows down.

60fps = 16.6 ms per frame, my but you do know how to test yourself Shamus! I’m looking forward to the optimisation phase of this project and the nail biting decisions such as “I love feature X but its spiking to 24ms per frame!”

Great series, I forgot how much I love these coding bits, makes me nostalgic for the coding career I abandoned :D

My CPU runs at about 2.4 GHz. That’s 2,400,000 cycles per millisecond. It’s CISC, so that’s not 2,400,000 operations per millisecond, but some do only take 1 cycle. Normally, bottlenecks are in I/O bandwidth (memory and disk included) but this post was about saving CPU cycles. The DDR2 memory on the system I work on is good for 1,600 million transfers/second. Those are 1-bit transfers, so that’s 1.6 Gb/s, or 800 MB/s. That’s enough memory bandwidth for 13,280,000 bytes per frame at 60 fps. That is a lot of data to send to memory. I don’t know how fast PCIE busses are these days, but I’m guessing they’re at least that fast. That’s a good amount of bandwidth to the GPU.

Of course, there’s some latency to memory transactions, so every time you try to hit main memory on this system you’ll incur a few cycles of overhead, but compared to 16.6 ms, they’re effectively zero. On top of that, CPUs have megabytes of cache now.

My point is that today’s computers are FAST. 16 and 2/3 ms is a long time.
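To put that in per-frame terms, here is the cycle budget for the 2.4 GHz clock mentioned above. It is a back-of-the-envelope sketch that ignores multiple cores and instructions that take more than one cycle.

```python
def cycles_per_frame(clock_hz: float, fps: float) -> float:
    """CPU clock cycles available in one frame."""
    return clock_hz / fps


def frame_millis(fps: float) -> float:
    """Length of one frame in milliseconds."""
    return 1000.0 / fps


print(cycles_per_frame(2.4e9, 60))  # 40 million cycles per frame
print(frame_millis(60))             # about 16.67 ms
```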

True story: one of the reasons I have for switching from C++ to D when developing my game is that the built-in timer for SDL only has millisecond granularity, while the D standard library has a built-in timer with granularity equal to the system clock speed.

Yes, I am aware there are ways to get this without porting to a completely different language, but I was already planning on switching at the time and it was just a nice bonus.

This was noteworthy because in the version of the game running on SDL, frame calculation times at 100+ frames per second were so short that my physics calculations were rounding to 0, so if the system got fast enough, nothing could move anymore. Between a more precise clock and capping the frame rate at Vsync and sleeping for the rest of the frame, it’s not an issue anymore.

PCIe gen2 can do 500 megabytes/sec (5 gigatransfers/sec, minus some overhead in the encoding) per lane, times sixteen lanes for most video cards. So around 8 gigabytes/sec.

Note however that your DDR2 memory is *not* 1 bit per transfer. DDR2 is 64 bits/transfer. DDR3 is a different spec, but mine runs at 233MHz base clock, times 4 (4 clock cycles of the memory bus per 233MHz base clock cycle), times 2 (2 transfers per clock cycle; one on the rising edge and one on the falling edge), times 8 (8 bytes/transfer, or 64 bits/transfer). So that’s 1866 million transfers/sec, times 8, or 14928 million bytes/sec.

Which comes out to 13.9 gigabytes/sec (counting a gigabyte as 2^30 bytes), or nearly 1.9x the PCIe gen2 x16 rate.
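
The arithmetic is easy to check. This sketch only uses the figures quoted in the comments above (500 MB/s per PCIe gen2 lane, 1866 million transfers/sec at 8 bytes each); the variable names are mine. Note that the ratio shifts a little depending on whether both sides are measured in the same kind of gigabyte.

```python
FPS = 60

pcie_gen2_x16 = 500e6 * 16   # bytes/sec: 500 MB/s per lane, 16 lanes
ddr3 = 1866e6 * 8            # bytes/sec: 1866 MT/s, 8 bytes per transfer

ddr3_gib = ddr3 / 2**30      # the "13.9" figure, in units of 2^30 bytes
ratio = ddr3 / pcie_gen2_x16 # both sides in plain bytes/sec

pcie_per_frame = pcie_gen2_x16 / FPS  # bytes available per 60 fps frame
ddr3_per_frame = ddr3 / FPS
```

Measured in plain bytes on both sides, the memory bus comes out a bit under twice the PCIe rate. Per frame at 60 fps that is on the order of 130 million bytes over PCIe and about 250 million bytes to main memory.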

I recall learning that the ‘cinematic physics’ events in Valve games were actually pre-calculated outside of the game and baked in as an animation to prevent the exact thing Shamus is accusing the game of doing.

Yes, the ingame commentary says so. Still there must be something going on during that animation that causes framerates to drop since people have experienced it.

I believe that particular train sequence was a live physics simulation, unlike many of the other ones which are pre-calculated animations. I believe they were experimenting with it at that point, to see if they could pull it off.

I could be wrong, though. I do recall the dev commentary singling that scene out as being different from the rest of the scripted sequences in the Half Life series.

It was different BECAUSE it was pre-baked.

That being said, premade or not, there’s still a lot of particle effects and bending of polygons (yes, cringe!) happening in that scene, plus the regular physics for the smaller props flying around.

Every time I read one of your posts it makes me very glad I am not working in graphics. I do love reading about it though.

“You can't stop me! I've got the source code!”

Is that the new catch phrase?

I think it would look great on a T-shirt.

I’d wear it.

Me too :)

Another example for a similar thing in HL2 main game was the bit as you were running away from Combine in City17 – right after dropping through a train carriage with a resistance dude and a vortigaunt, there was an obstacle of a big planked wall inside a traincart/debris pile.

Breaking through that wall always seemed to produce a very noticeable hitch, and the audio in particular would just bug out (tbh, the shards would do weird stuff too, but hey, Havok).

I wonder if that is the same issue or something subtly different.

I know exactly where you mean. Yes, I remember hitching there as well.

It might not be physics. It could also be particles. Create a few hundred splinters of wood / puffs of dust and it can slow things down. (In those days, at least.) If they have geometry to be moved and collisions to perform, things can get out of hand.

I doubt an artist would deliberately create that many particles, but if there are triggers like “when this bit of wood breaks, make 2 smaller pieces of wood, 10 bits of dust, and 20 splinters” then you can have an “asteroids” type thing where pieces are breaking into smaller pieces, which are then also instantly broken, etc.

It can get REALLY nuts if the particles are flagged to collide with each other. You end up with n particles requiring n! collision checks. Even if they’re quick checks, factorials are dangerous.

That’s another wild guess on my part, for what it’s worth.

Don’t you mean n^2 (or, more precisely, n · (n-1) / 2)? That’s how many pairs of particles there are. Factorial would be if you needed to do something for each ordering of particles, but I can’t think of anything like that here.

And n^2 grows relatively fast too, but it’s nowhere near n!.

Yeah. You nailed it. (n*(n-1))/2. Is there a name for that? Bubble sort has the same property.

We need all of the possible pairings in the group. If we have 4 particles, then we need six pairings. A+B A+C A+D B+C B+D C+D. Or 3+2+1. That last bit looked like a factorial, which is how I made that mistake.
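
For anyone who wants to see the numbers, here is a small Python sketch that enumerates the pairings and compares the count against a factorial. The particle names are the same A to D as above; the function name is just for illustration.

```python
from itertools import combinations
from math import factorial

def pair_checks(n):
    """Number of unique pairs among n particles: n * (n - 1) / 2."""
    return n * (n - 1) // 2

particles = ["A", "B", "C", "D"]
pairs = list(combinations(particles, 2))  # A+B, A+C, A+D, B+C, B+D, C+D

# Growth comparison for a modest particle count.
checks_for_10 = pair_checks(10)
orderings_of_10 = factorial(10)
```

Four particles give six pairs. Ten particles need 45 checks, while 10! is 3,628,800, which is why the distinction matters.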

The name of that property is O(n^2). The notation is called “big O notation”, common in descriptions of numerical algorithms. It can be thought of as “on the order of n^2”. It refers to the growth rate being the same as the order of the function (in this case n^2 has an order of 2).

Well, it’s the formula that produces triangular numbers, so you could call it a triangular number (strictly the (n-1)th one, since n · (n-1) / 2 = 1 + 2 + … + (n-1)), which is admittedly longer than n!.

Note that multiplication:factorials::addition:triangular numbers

Frequently designated T_n (i.e., the n is a subscript) in mathematical contexts.

It’s also the binomial coefficient C(n,2) but that’s probably overkill here.

I think of that as “n choose 2”, sometimes written C(n,2) or as an n above a 2, all in brackets, which I will now attempt to ASCII-art here:

( n )

( 2 )

Ah, just noticed that Kacky Snorgle (if that *is* your real name) said the same thing below, even noting that it’s also called the binomial coefficient.

But whatever it’s called, you’re right that it represents all the unique pairs of two things (or k things, if you go with C(n,k) instead). Handy at parties for figuring out how many handshakes or glass-clinks would be required in a group of N people. Well, “handy” may be the wrong word … (But it’s usually easy to calculate in your head, if you want to amaze your easily-amazed friends, since either N or (N-1) will be an even number, and thus easy to divide by 2. So with 10 people, you need 10(9)/2 = 5*9 = 45 glass-clinks if everyone is going to toast with everyone else.)

I am impulsively terrified of running at a fixed framerate. Abuse was locked to 15 FPS, which was one of the three things that ruined a game that was actually fundamentally good. I tried to fix up the open source port by doubling the framerate and adding a divisor to all of the velocity setters in the game, but shiRt went nut heads real fast as I discovered just how deeply it was built around this.

The decision being made here is different though. Right now, 30 fps is considered minimum playable by many. In ten years, maybe 60 will be. Since the human eye clocks at somewhere around 100, there isn’t a lot of fear of this game being ruined by this.

Yup, you encountered more or less the same problem Shamus described with the parabolas, from the other end.

Overall, too, human beings have been used to 24 FPS for a long time (over a century now), and some pretty hefty work went into figuring out that that was a solid baseline of “this is as slow as most people can tolerate, under ideal conditions.” And speeding up FPS in other things has been fraught with a whole lot of discovering how it can be done WRONG and look bad. So I don’t think there’s going to be a huge need to change the frame rates other than from the simple frame rate math.

(Oh, and a “jiffy” is an arbitrary but short period of time, and coincidentally, it’s 60th or 50th of a second in most electronics applications, so I think we’re perfectly justified in stealing it for these purposes too.)

24 FPS only feels smooth when you are merely watching, for example a movie. As soon as one controls what’s happening on the screen, the constant stutter becomes obvious. For example, right now I’m sitting in Lion’s Arch in Guild Wars 2, in a fairly populated part of the city, and when I do nothing but check the framerate it reads 26-28 FPS. It does not look bad. But as soon as I take a few steps forward, though the framerate does not drop any further, I can feel all the stuttering.

I know some games do this with the game slowing down if it can’t keep up.

One notable example was Red Faction: Guerrilla, likely in response to the insane physics that could sometimes need to be calculated.

Though having buildings crumble in slow motion was pretty awesome anyway.

“It wasn't recently that I realized this paranoia was unusual.”

So, you realized it a long time ago?

(Or is there an “until” missing?)

So why is transparency not a problem with particle systems? Are they sorted differently?

I’m using Unity at the moment and pretty much spamming particles with alpha channels and it seems to not slow down.

Usually particles – particularly bright, glowing particles – can skip the sorting step. They’re usually drawn last in the scene, but they don’t need to be sorted relative to each other.

The troublesome bits are usually things like foliage. The edges of a leaf are PARTLY transparent. Say I’ve got a tree leaf in front of me, a fern behind that, and sky in the background. If I draw the tree leaf first, then the edges of the leaf will blend with that sky color. Then I draw the fern, but it doesn’t overwrite those partly-transparent edges on the leaf. This looks really screwy. The edge pixels of the leaf become a magic window that lets you see through the stuff behind it.

Whether particles have to be sorted is usually determined by the shader. If you use an additive shader, you don’t need to sort. If you use a ‘normal’ alpha-blending shader, you absolutely have to sort the particles if you don’t want strange artifacts, like particles that are further away from the camera rendering in front of nearer particles. But since the relative positions of the particles don’t change much between frames, sorting algorithms are very efficient here.
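
A tiny Python sketch of why the blend mode decides this. It blends two particles onto one pixel in both orders. The colors, alphas, and function names are made up for illustration, and a real shader would do this on the GPU rather than in a loop.

```python
def blend_additive(dst, src_color, src_alpha):
    # Additive: the particle's light is simply added, clamped to 1.0.
    return tuple(min(1.0, d + src_alpha * s) for d, s in zip(dst, src_color))

def blend_alpha(dst, src_color, src_alpha):
    # 'Normal' alpha blending: the particle partly covers what is behind it.
    return tuple(src_alpha * s + (1.0 - src_alpha) * d
                 for d, s in zip(dst, src_color))

def draw(background, particles, blend):
    pixel = background
    for color, alpha in particles:
        pixel = blend(pixel, color, alpha)
    return pixel

background = (0.0, 0.0, 0.25)
red = ((1.0, 0.0, 0.0), 0.5)
green = ((0.0, 1.0, 0.0), 0.5)

additive_a = draw(background, [red, green], blend_additive)
additive_b = draw(background, [green, red], blend_additive)
alpha_a = draw(background, [red, green], blend_alpha)
alpha_b = draw(background, [green, red], blend_alpha)
```

The two additive results are identical, so draw order is irrelevant. The two alpha-blended results differ (whichever particle is drawn last dominates), which is exactly why those need sorting.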

Man, that’s what causes that effect? I’ve always wondered! Thanks, Shame.

This is visible at some points in Kerbal Space Program, specifically in the VAB or SPH.

I also had ideas on how to use the “magic window” effect as a sort of X-ray vision effect or in text boxes.

Regarding the example with the foliage: You can sort your Alpha objects front to back or back to front, it just has to be consistent. But you have to disable z-writing, or you will get exactly the behavior you describe, with transparent/invisible parts of a triangle occluding objects behind them and showing the background. The sorting is done so that you don’t need to write to the z-buffer and so that you know what the blend equation looks like. For the same reason you render the transparent objects last. You do read the z-buffer to see if you are allowed to draw the transparent object, but you don’t write to it, so the transparent objects don’t occlude other objects. The occlusion from the opaque parts of a triangle is done via the blend equation.

(example back to front: SRC_ALPHA * SRC_COLOR + INV_SRC_ALPHA * DST_COLOR)
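
To make the “magic window” concrete, here is a one-pixel software simulation in Python. It is only a sketch: the depth test, colors, and the `z_write_for_transparent` flag are my own simplifications, not how any particular engine or graphics API is structured. The fern is treated as opaque at this pixel and the leaf edge as 50% transparent.

```python
SKY = (0.5, 0.75, 1.0)
FAR = float("inf")

def draw_pixel(surfaces, z_write_for_transparent):
    """surfaces: (depth, color, alpha) tuples in draw order; smaller depth is closer."""
    color, depth = SKY, FAR
    for z, src, alpha in surfaces:
        if z >= depth:
            continue  # depth test failed: something nearer already wrote depth here
        # Back-to-front blend: SRC_ALPHA * SRC_COLOR + INV_SRC_ALPHA * DST_COLOR
        color = tuple(alpha * s + (1.0 - alpha) * d for s, d in zip(src, color))
        if alpha == 1.0 or z_write_for_transparent:
            depth = z
    return color

leaf_edge = (1.0, (0.0, 0.5, 0.0), 0.5)   # near, half transparent
fern = (2.0, (0.0, 0.25, 0.0), 1.0)       # farther, opaque at this pixel

# Leaf drawn first with z-writes on: the fern is rejected, sky shows through.
magic_window = draw_pixel([leaf_edge, fern], z_write_for_transparent=True)

# Opaque fern first, then the leaf without z-writes: leaf blends over fern.
correct = draw_pixel([fern, leaf_edge], z_write_for_transparent=False)
```

In the first case the pixel ends up as a mix of leaf and sky, even though a fern sits between them. In the second it is a mix of leaf and fern, which is what the eye expects.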

I like to report when I literally (in the old sense of the word, not the new sense that means “not literally”) laugh out loud at something. In this case, I literally laughed out loud at “At a lot of development studios, if a member of the art team did this you could go to their cubicle and set them on fire without breaking company policy.”

Well done!

My solution to problematic user-generated art would be to create invisible bots that would wander around the in-game world and measure performance. If they find something like the dolphins they’d slap a giant quarantine tent on the problem so that nobody could see it. Then a combination of a FAQ and user forum would help users self-solve the problem before doing something else.

As a non-programmer I can’t implement any solution and I’m sure there is a reason why this wouldn’t work- especially in 2003. I am curious about how viable this is though.

I’m not sure, but I think it would be difficult for a machine/AI to determine if it is currently experiencing a performance drop, since the methods for measuring would also be affected by the slowdown. You would need an observer that is independent of the entity testing the game.

Actually, the real problem with finding performance spikes is one of scale. Hundreds of people were building tens of thousands of objects a day, just in the space our company owned. Then there were all the scattered worlds owned by users. We didn’t have the right or power to make changes in those worlds any more than a domain registrar has the right to change my website. So a lot of the space was out of our hands.

Too much stuff was built too quickly in too many places for us to ever keep up, but the bad spots in those spaces impacted the user experience and made people want to use the software less. So we did what we could. Probably our best feature was one that capped how large any single downloaded object could be. So if someone had a 5 megabyte dolphin statue, it would exceed the max size and nobody would download it. (You could turn off the limit for yourself, but that just meant that YOU would see it, not everyone else.) This limit did a lot to mitigate the really egregious stuff.

But to a certain extent, it probably wasn’t worth going to any great lengths to make the bad stuff less horrible. Odds are good that people making these low-level mistakes are probably not the most talented. Like, even if I found a way to magically fix all of Joe User’s performance problems, it’s VERY likely that his office building was still going to wind up being a horrible eyesore. Just making sure the client survives the performance spike well enough that they can flee the offending scenery was probably the optimal solution from a cost / benefit standpoint.

If I was tasked with finding trouble spots, I’d probably do some sort of aggregating: Look for areas where clients were moving really irregularly. (Like, position updates once a second instead of ten times a second.) The server could flag areas where users were moving in a very “jumpy” way. It could build up a list of hotspots for us to deal with / investigate.
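
Purely as an illustration of that aggregation idea, a server-side pass might look something like the Python below. Everything here is hypothetical: the update format, the grid cell size, and the thresholds are invented, and a real version would also need to ignore users who simply stood still or logged off.

```python
from collections import Counter

def find_hotspots(updates, cell_size=100.0, max_gap=0.5, min_reports=2):
    """updates: (user_id, timestamp_seconds, x, y), in time order per user.

    Counts position updates that arrived after an unusually long gap,
    bucketed by the grid cell the user was in, and returns the busy cells.
    """
    last_seen = {}
    jumpy = Counter()
    for user, t, x, y in updates:
        if user in last_seen and t - last_seen[user] > max_gap:
            cell = (int(x // cell_size), int(y // cell_size))
            jumpy[cell] += 1
        last_seen[user] = t
    return [cell for cell, count in jumpy.items() if count >= min_reports]

updates = [
    ("a", 0.0, 10.0, 10.0), ("a", 0.1, 11.0, 10.0), ("a", 0.2, 12.0, 10.0),
    ("b", 0.0, 250.0, 30.0), ("b", 1.0, 255.0, 30.0), ("b", 2.0, 260.0, 30.0),
    ("c", 0.0, 270.0, 40.0), ("c", 1.1, 272.0, 40.0),
]
hotspots = find_hotspots(updates)
```

User "a" updates ten times a second and is never flagged. Users "b" and "c" both crawl along at about one update per second in the same grid cell, so that cell is reported.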

On the other, OTHER hand, Moore’s law was very good to us. If something is a performance nightmare today, next year it will be a trouble spot, the year after it will be borderline, and the year after that it will be just fine, probably a lot better than all the NEW stuff people are building around it. :)

It would make more sense to generate some kind of rough performance cost for objects and limit their amount according to that. Each ‘object’ would have a certain ‘cost’ to it determined by things such as the polygon count, if it spawns particles, if it has alpha-transparency, etc. Then the costs of the objects being rendered would be sorted through every time a user went to add a new object, allowing the game to cap them from adding that new object if it would exceed the performance cost.
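
A minimal sketch of that kind of budget in Python. The weights, the budget, and the object fields are all invented for the example; tuning them against real hardware would be the hard part.

```python
from dataclasses import dataclass

@dataclass
class WorldObject:
    name: str
    polygons: int
    has_alpha: bool = False
    spawns_particles: bool = False

def render_cost(obj):
    """Rough, made-up cost estimate for one object."""
    cost = obj.polygons / 100.0
    if obj.has_alpha:
        cost *= 2.0      # transparent surfaces must be sorted
    if obj.spawns_particles:
        cost += 50.0
    return cost

def can_add(existing, candidate, budget=500.0):
    """Allow the new object only if the area stays within its cost budget."""
    total = sum(render_cost(o) for o in existing)
    return total + render_cost(candidate) <= budget

office = [WorldObject("tinted window", 1000, has_alpha=True) for _ in range(5)]
dolphin = WorldObject("dolphin statue", 50000)
chair = WorldObject("chair", 2000)

dolphin_allowed = can_add(office, dolphin)
chair_allowed = can_add(office, chair)
```

With these numbers the five stacked windows already cost 100, the chair fits, and the dolphin statue would blow the budget on its own.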

So, what you mean by “This game is going to run at sixty frames a second. Period.” is that the game will always process and display 1/60th of a second of game simulation at a time, no matter how long that processing actually takes in real time. You’re essentially locking the in-game time-step to 16.6ms regardless of available resources.

Good idea! Constant simulation increments are the way to go if you want consistent performance.
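
In code, that reading of “sixty frames a second, period” looks roughly like the Python sketch below. It is an assumption about the structure, not the actual engine: the point is only that the simulation always advances by the same tick, so a slow machine gets slow motion rather than different physics.

```python
TICK = 1.0 / 60.0  # seconds of game time advanced per frame, always

def run(frame_durations, update):
    """Step the game once per frame with a fixed tick.

    frame_durations: how long each frame really took to process, in seconds.
    Returns (game_time, real_time) so the two can be compared.
    """
    game_time = 0.0
    real_time = 0.0
    for duration in frame_durations:
        update(TICK)                      # same increment no matter what
        game_time += TICK
        real_time += max(duration, TICK)  # fast frames wait; slow frames run long
    return game_time, real_time

# A machine that needs 1/30 s per frame: 60 frames take two real seconds.
steps = []
game_time, real_time = run([1.0 / 30.0] * 60, steps.append)
```

Here one second of game time takes two seconds of wall-clock time, so the game plays at half speed, but every call to `update` saw exactly the same time step.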

On the subject of time, it’s worth noting that EVE online has a time dilation mechanic so the simulations on the servers can keep up. Too much player content happening in one sector at once, and that sector (and only that sector) goes into slow motion.

Would it be possible to detect the user’s refresh rate and easily increase the fps cap to 90/120/144 for higher end monitors?

It’s the futuuuureeee!

That would sort of defeat the point. Same problem, just in the other direction.

It should be possible. I have plans to do this in my project, I just haven’t gotten around to figuring it out yet.

By the way, while working at my last job, I was able to find what is probably the single worst way to figure out what the coordinates of a given monitor are in Java: You set the program to fullscreen, record the window coordinates, and then put it back to windowed mode.

Makes every single monitor flash when you do that. Good way to horrify users, senior programmers, and managers. (A senior programmer actually told me “PLEASE don’t check that in!” Of course I’m not going to check it in, I’m not that stupid.)

While this would be consistent for each game / refresh rate (until the CPU couldn’t handle it and the game got slower), the problem then becomes what the real game rules are. At 144 ticks a second, the rules of physics (collision, for example) have changed for me versus someone at 60Hz (they can teleport through a wall that I see as solid while going at the same speed). There is also the issue of blocking off multiplayer (at least if you’re not playing with a friend at the same refresh rate) and making replays impossible for some computers (again, you can only show them with a matching refresh rate).
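
The wall example can be reproduced with a few lines of Python. This assumes the simplest possible collision check (test the position once per tick, no sweep test), and the speed and wall dimensions are made-up numbers picked to show the effect.

```python
def hits_wall(speed, tick_rate, wall_start=10.25, wall_thickness=0.5):
    """Step a point along x from 0 and report whether any tick lands inside the wall."""
    step = speed / tick_rate              # distance covered per tick
    wall_end = wall_start + wall_thickness
    x = 0.0
    while x < wall_end:
        x += step
        if wall_start <= x <= wall_end:
            return True                   # a sampled position was inside the wall
    return False                          # every sample skipped over it

at_144hz = hits_wall(60.0, 144)
at_60hz = hits_wall(60.0, 60)
```

At 144 ticks per second the object moves about 0.42 units per tick and one sample lands inside the half-unit wall. At 60 ticks per second it moves a full unit per tick and hops from 10 to 11, straight over it.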

The world tick kind of has to be decided upon as part of the rules of the world. On top of that you build the presentation layer, which can interpolate to make the actual rendered frames you see but cannot modify the game world (only observe it). Input feeds into the underlying world-tick layer, and now you’ve added a network issue: the player shoots at what the presentation layer says is in front of them, when in the underlying reality things are not exactly where the player sees them at that exact moment. How do you make that fair?

For those writing game engines with fixed ticks for the high-frequency events (collision) and an eye on performance (or who are ignoring low-end CPUs), the number 600 has a great many useful factors (though not 144, unfortunately) for this sort of thing. I can recommend it highly, if you can get the job done in 1.67ms.

Edit: but in a world of pure NTSC (no 50Hz consideration), going for 720 adds in 144Hz/72Hz while retaining the really important stops (24, 30, 60, 120).
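
The factor claims are quick to verify. In this Python sketch the list of refresh rates is my own selection of common ones; a world tick “supports” a refresh rate if it divides evenly into it.

```python
REFRESH_RATES = [24, 25, 30, 50, 60, 72, 100, 120, 144]

def supported_rates(world_tick_hz):
    """Refresh rates that show a whole number of world ticks per displayed frame."""
    return [hz for hz in REFRESH_RATES if world_tick_hz % hz == 0]

def tick_budget_ms(world_tick_hz):
    """Time available to compute one world tick, in milliseconds."""
    return 1000.0 / world_tick_hz

rates_600 = supported_rates(600)
rates_720 = supported_rates(720)
```

600 covers 24, 25, 30, 50, 60, 100 and 120 but misses 72 and 144. 720 picks up 72 and 144 at the cost of 25, 50 and 100, and shrinks the per-tick budget from about 1.67 ms to about 1.39 ms.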

Funny you should write about this. I code a small piece of software called GridStream Player, which is a custom shoutcast stream player for a radio station: http://player.gridstream.org/

It supports the use of skins (in the new WebP image format, actually) with full alpha channel/blending (left and right channel images on top of a base image).

It used to have a 2D desktop/software implementation, but I recently changed it to use Direct3D. Since I coded it in PureBasic (from http://purebasic.com/ ), that part was not too difficult.

Thing is, the interface/GUI/UI/main program is its own thread, and the audio stream/decode/playback is handled by BASS (from http://un4seen.com/ ) and thus in its own thread.

And the Skin/VUMeter animation is in its own.

It is running at a 25FPS rate, independent of the actual screen refresh/vsync.

Not only that, it only changes/renders if there has been a change.

But the key is that if you choose 25FPS or 30 or 50 or 60 FPS, then make sure it is independent of the vsync, the worst you can do is tie your rendering to the vsync.

In too many games you find that user input lags if the rendering lags.

Now my player has no need to keep track of any user input (except typical OS tracked stuff that is relayed to the software normally).

If it was a game I’d actually create a separate user input thread.

So to re-iterate:

* Main GUI/UI/Program thread.

* Audio thread.

* Rendering thread (FPS independent of vsync rate)

* User input thread

PS! May I suggest using 50FPS or something other than 60?

1000 ms (millisec) divided by 60 equals 16.666666666….666666667 something.

While 1000ms/50fps=20ms between frames.

That could make planning timings on animations and events a little easier.

Unless you actually use floating point instead of integer.

If using 32-bit or 64-bit floating point milliseconds does not add much (or any) overhead compared to integer milliseconds, then floating point will obviously be more flexible, since you can actually store the result of 1000/60. With integers you need some extra code to throttle a little to keep up. You still need code to handle sudden framerate drops, but it should be a little easier.
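
As a sketch of what that “extra code” for integers might be, here is one way to do it in Python: carry the division remainder forward so the frame lengths alternate between 16 and 17 ms and never drift. This is one possible approach under my own naming, not what the player described above actually does.

```python
def frame_deadlines_ms(fps, frames):
    """Integer-millisecond deadline for each frame, carrying the remainder forward."""
    deadlines = []
    elapsed = 0
    remainder = 0
    for _ in range(frames):
        step, remainder = divmod(1000 + remainder, fps)
        elapsed += step
        deadlines.append(elapsed)
    return deadlines

first_three_at_60 = frame_deadlines_ms(60, 3)
one_second_at_60 = frame_deadlines_ms(60, 60)[-1]
one_second_at_50 = frame_deadlines_ms(50, 50)[-1]

# Naive truncation: 16 ms per frame, so 60 frames finish 40 ms early.
naive_second_at_60 = (1000 // 60) * 60
```

At 60 FPS the frame lengths come out as 16, 17, 17, repeating, and sixty frames add up to exactly 1000 ms. At 50 FPS every frame is a clean 20 ms and no bookkeeping is needed, which is the convenience being suggested.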

Anyway, the key point is to make sure your rendering thread is not locked to the vsync. The rendering thread should only lock to vsync when it swaps the front and back buffers. Thus vsync will act as an upper limit.

In the case of my player the frame rate hovers around 25FPS (+/- 0.5ish fps) but the vsync is at 60hz.

If the display was a 50hz display then the frame rate would probably be a rock solid 25FPS.

And if for some reason the rendering is too slow, then a frame (or more) will be dropped, as it’s actually time-slice based. The loudness of the audio stream is taken in 20ms slices, and if you did your head math you’ll notice that two time slices of audio loudness are used to calculate the animation/rendering of one frame.

I’m still in the process of tweaking stuff but… Looking in “Process Explorer” (by the Sysinternals guy), which is highly recommended, on my system the rendering thread (whose CPU use I can see in Process Explorer) uses only about 0.5% CPU and 0.5% GPU when the two larger bundled skins are used. (The default skin is very small and does not stress the system much at all.)

Anyway! I love reading this series.

And there is nothing wrong with code hardening, in fact with my player it is rather important as you can not have a player that many listeners leave on for days non-stop suddenly fail or have issues.

So taking a little extra time to validate values or normalize/clamp them is pretty smart in my opinion.

At the very least one can say. “Yeah! I guess it’s not the fastest engine out there, but it certainly doesn’t crash!” :)

So I’m a bit late to this thread, but let’s see if I can get an eyetwitch.

So I was playing Sins of A Solar Empire on a single core P4 with integrated graphics (and serious kudos to those guys for getting it to run at high settings on hardware that terrible).

The enemy force and I were stalemated along a front. I noticed that the enemy always jumped in at the same point and attacked along the same axis. If I could destroy the enemy fleet without losing my own in the process, I could hopefully advance.

So I set up 150 mines along that line, and turned them off.

~200 ships jump in, and advance along the axis. I turn the mines on.

150 mines immediately detonate triggering collision checking and particle effects galore. 200 ships die triggering MORE particle effects.

The end result is that I ate dinner, went back up, and it had advanced 2 frames. And after that, that computer was never, ever, able to play that game (or any game) again. In fact, it immediately started suffering from double-length boot times and major slowdowns. I triggered so many collisions etc, that I literally broke the computer.

I played the game a lot some years ago, but my machine was not the strongest then, and the longer and bigger a game got, the slower it was. Not the stuff that was on screen at a given time, but the overall size of the different empires. If I wasn’t fast enough to kill off some AIs early, the whole game would slow to a crawl until it was completely unplayable.

I think Painkiller (demo) ran like that. I remember playing it on a weaker machine and it slowed down instead of stuttering. I don’t know if the full version has this, how it works in multiplayer, or how to reproduce it now, though.

I’m a bit late here, Shamus, but the algorithm you describe for simulating the motion of the ball is Euler’s method. If you want to preserve accuracy when the framerate drops (and thus have the option to drop the framerate without the physics changing) you need to use a more accurate method. RK4, the classic fourth-order Runge-Kutta method, is standard. (RK45 usually refers to an adaptive-step variant.)
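
To see the difference, here is a small Python comparison for a ball thrown straight up under constant gravity. The setup (initial speed, gravity value, one second of flight) is my own toy example, and the Euler step is the plain explicit version, which may not match the article's code line for line.

```python
G = -9.8  # constant gravitational acceleration

def euler_step(x, v, dt):
    # Explicit Euler: use the velocity and acceleration at the start of the step.
    return x + v * dt, v + G * dt

def rk4_step(x, v, dt):
    # Classic fourth-order Runge-Kutta on the state (x, v).
    def deriv(pos, vel):
        return vel, G                     # dx/dt = v, dv/dt = G
    k1x, k1v = deriv(x, v)
    k2x, k2v = deriv(x + 0.5 * dt * k1x, v + 0.5 * dt * k1v)
    k3x, k3v = deriv(x + 0.5 * dt * k2x, v + 0.5 * dt * k2v)
    k4x, k4v = deriv(x + dt * k3x, v + dt * k3v)
    x += dt / 6.0 * (k1x + 2 * k2x + 2 * k3x + k4x)
    v += dt / 6.0 * (k1v + 2 * k2v + 2 * k3v + k4v)
    return x, v

def height_after_one_second(step, steps_per_second, v0=10.0):
    dt = 1.0 / steps_per_second
    x, v = 0.0, v0
    for _ in range(steps_per_second):
        x, v = step(x, v, dt)
    return x

exact = 10.0 * 1.0 + 0.5 * G * 1.0 ** 2   # closed-form height after one second
euler_60 = height_after_one_second(euler_step, 60)
euler_30 = height_after_one_second(euler_step, 30)
rk4_60 = height_after_one_second(rk4_step, 60)
rk4_30 = height_after_one_second(rk4_step, 30)
```

With Euler, the ball ends up at a noticeably different height at 30 steps per second than at 60. With RK4 both rates agree with the closed-form answer, because under constant acceleration the method is exact up to rounding. Forces that change along the path would leave some error, just far less than Euler's.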

It never crossed my mind before that games might not use such a method because it doesn’t really matter if the physics faithfully follow our rules as long as they follow some rules. Is this how it’s actually done in gaming?

PS: I love the checkbox at the bottom :D

Way, way back in time, before 3D cards or even color graphics, there were games that allowed the players to build a world. In text.

A branch of the Multi-User Dungeon (MUD) games, the MOO (MUD, Object Oriented), had a custom language that allowed the *players* to script the objects in the world. This basically involved listening to events that happened around and to the object.

For a simple object, such as a vending machine, the script would wait for a user to type PUT COIN IN VENDING MACHINE and if a coin was actually available to the player the vending machine would accept it to its inventory and “dispense” something to the user. The same machine might also greet users arriving in the “room” it occupied in some way.

Of course, simple objects were just the tip of the iceberg and users built games within the game. These complex objects burned a lot of processor time: to deal with this there was a “currency” for computation, often called Pennies or Zennies.

Each player had an allowance and when that allowance ran out for the day, the objects they created stopped operating. This wasn’t optimal, but it was better than allowing unlimited processing on a limited system.
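
The mechanism is simple enough to sketch in a few lines of Python. The class, the costs, and the daily amount below are invented for illustration and are not taken from any actual MOO server.

```python
class ScriptAllowance:
    """Per-player daily budget of 'pennies' that scripted objects spend to run."""

    def __init__(self, daily_pennies):
        self.daily_pennies = daily_pennies
        self.remaining = daily_pennies

    def charge(self, cost):
        """Deduct and return True if the owner can afford it; otherwise refuse."""
        if cost > self.remaining:
            return False
        self.remaining -= cost
        return True

    def new_day(self):
        self.remaining = self.daily_pennies

# A vending machine script that costs 3 pennies per activation.
owner = ScriptAllowance(daily_pennies=10)
runs_today = [owner.charge(3) for _ in range(4)]
owner.new_day()
first_run_tomorrow = owner.charge(3)
```

The machine works three times, then goes dead for the rest of the day once its owner's allowance can no longer cover a run, and comes back to life after the daily reset.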

(Much later, Second Life tried to implement such a system for scripts, but did it too late, after a culture of free scripting was already entrenched and it never went anywhere.)

If I were ever to create a virtual world (which I won’t, but if I did) I would establish an allowance-based system for scripts, render costs and object storage out of the gate, to avoid exactly the situation you describe at Activeworlds.

Shamus, “(Maybe new graphics cards have some trick for this, but in 2003 this load went right to the CPU.)”

“Solving” the rendering order for transparent surfaces is done via:

* Depth Peeling

or

* Order-Independent Transparency

See this EXCELLENT presentation: http://fr.slideshare.net/acbess/order-independent-transparency-presentation

Also see: http://en.wikipedia.org/wiki/Order-independent_transparency

Don’t want the game to slow down? Minimum system requirements is the solution. :)