I apologize if this series seems to be glossing over some details while exploring others in exhaustive detail. This is a very hard series to write and I’m having trouble keeping it all straight in my head.

At any given moment there’s the stuff I’m working on and thinking about. Lagging behind that activity by about three weeks is the stuff I’ve written about and organized into words. And lagging behind that by another week is what has actually been posted to the blog. So when organizing my thoughts I have to figure out if feature X is something I’ve done, something I’ve documented, and something you know about.

It’s confusing, is what I’m getting at.

On one hand, these programming posts are really useful for documenting and clarifying my thoughts. On the other hand, having this multi-stage process with three weeks of lag time is not so useful. Because of this, I’m really eager to plow through this early stuff as fast as possible. Do I document all the little diversions and side-paths that didn’t work out? If so, then I’ll never get caught up. But if I leave that stuff out then this threatens to become a very dry recounting of features added.

I still don’t know how to handle this.

And now I’m about to make the problem worse. Here is a feature from day three. It took me longer to document in this post than it did to write the feature in the first place:

It was many hours into my first play-through of Plants vs. Zombies before I realized, “Wow. Every single object in this game has a face.” Every plant, every weapon in your arsenal has at least a pair of eyes to give it some personality.


I’m kind of hoping I can use this idea to give the game some character. Descent put eyes on their robots and I have to say it made a huge difference. They would have been much less interesting if they just looked like flying vehicles. I’m hoping that if I give them faces (or at least eyes) then the player will fill in the gaps and ascribe personalities to them based on their behavior and facial “expression”. Ideally, the suicide bomber foes should look kind of crazed while the ones that shoot at a distance should look kind of cunning and maybe slow-moving foes would look kind of derp. That’s the idea, anyway.

To keep the eyes from looking like simple running lights, I hope to make them move around a bit.

So I make a new type of object called “eyes”. To start with, it just renders this little circle of color:


I don’t know if I actually want to use that color banding. We’ll see. In any case, we need an iris (or a pupil, the physiology of cartoon robot eyes is a little sketchy) that moves around in this circle. It’s not enough to just draw a bright dot and call it a day.


The illusion of this being an eye is broken, and it looks like a firefly zipping around in front of a round window. The dot needs to be constrained to the area we’ve already drawn. You can accomplish this with the depth buffer.

How it normally works is that you draw some polygons to the screen and OpenGL (or whatever you’re using for drawing) writes to the depth buffer. This keeps track of how far away from the camera each pixel is. When you draw more polygons later, they only show up if they end up closer to the camera than the stuff that’s already drawn. This is how we can draw a scene and have some objects appear to be behind others.

But we can change this behavior if we want. We can tell it to only draw if the new polygons and the old polygons are at exactly the same depth. Doing it this way, the iris only shows up where it overlaps with the eye:
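To make the comparison concrete, here is a minimal software sketch of the same trick. It is not the game's code: `Canvas`, `DepthFunc`, and `drawDisc` are names invented for this illustration, and the "depth buffer" is just an array. In real OpenGL the equivalent switch would presumably be `glDepthFunc(GL_EQUAL)`, though the post doesn't say which call was used.

```cpp
#include <array>
#include <cassert>

// A tiny software "framebuffer" used only to illustrate the depth test.
constexpr int kSize = 16;

enum class DepthFunc { Less, Equal };

struct Canvas {
    std::array<char, kSize * kSize> color;
    std::array<float, kSize * kSize> depth;

    Canvas() {
        color.fill('.');   // background
        depth.fill(1.0f);  // far plane, like a freshly cleared depth buffer
    }

    // Fill a disc, keeping only the pixels that pass the depth comparison.
    void drawDisc(int cx, int cy, int radius, float z, char c, DepthFunc func) {
        for (int y = 0; y < kSize; ++y) {
            for (int x = 0; x < kSize; ++x) {
                const int dx = x - cx;
                const int dy = y - cy;
                if (dx * dx + dy * dy > radius * radius) continue;
                float& stored = depth[y * kSize + x];
                const bool pass =
                    (func == DepthFunc::Less) ? (z < stored) : (z == stored);
                if (!pass) continue;
                color[y * kSize + x] = c;
                stored = z;
            }
        }
    }

    char at(int x, int y) const {
        assert(x >= 0 && x < kSize && y >= 0 && y < kSize);
        return color[y * kSize + x];
    }
};

// Draw the eye with the normal test, then the iris at the same depth
// using the "equal" test so it is clipped to the eye.
inline Canvas drawEye(int irisX, int irisY) {
    Canvas canvas;
    canvas.drawDisc(8, 8, 5, 0.5f, 'E', DepthFunc::Less);
    canvas.drawDisc(irisX, irisY, 2, 0.5f, 'o', DepthFunc::Equal);
    return canvas;
}
```

With the iris pushed toward the rim, for example `drawEye(12, 8)`, the part of the iris that hangs over the edge of the eye fails the equality test against the untouched background depth and never gets drawn.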


Up until now my bad robots have just been silhouettes with some running lights or simple fixed dots for eyes.


And now we add the eyes:


Some eyes sweep back and forth, like Cylons. Some point the way the robot is going, like Pac-Man ghosts. And some follow the player, like creepy stalkers.
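Those three behaviors boil down to three ways of choosing where the iris sits inside the eye. A hedged sketch of how that choice might look, with every name (`EyeMode`, `irisOffset`, `withLength`) and the sweep speed made up for illustration:

```cpp
#include <cassert>
#include <cmath>

struct Vec2 { float x, y; };

enum class EyeMode { Sweep, Heading, TrackPlayer };

// Scale v to the given length; a zero vector stays centered.
inline Vec2 withLength(Vec2 v, float length) {
    const float mag = std::sqrt(v.x * v.x + v.y * v.y);
    if (mag == 0.0f) return {0.0f, 0.0f};
    return {v.x / mag * length, v.y / mag * length};
}

// Returns the iris offset from the center of the eye.
inline Vec2 irisOffset(EyeMode mode, float seconds, Vec2 robotPos,
                       Vec2 robotVelocity, Vec2 playerPos, float maxOffset) {
    assert(maxOffset >= 0.0f);
    switch (mode) {
    case EyeMode::Sweep:        // side to side, Cylon style
        return {std::sin(seconds * 2.0f) * maxOffset, 0.0f};
    case EyeMode::Heading:      // look where the robot is going
        return withLength(robotVelocity, maxOffset);
    case EyeMode::TrackPlayer:  // stare at the player
        return withLength({playerPos.x - robotPos.x, playerPos.y - robotPos.y},
                          maxOffset);
    }
    return {0.0f, 0.0f};
}
```

The offset would then be added to the eye's center before drawing the iris.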

Hm.

The difference is not nearly as noticeable or attention-grabbing as I’d hoped it would be. In my mind it was going to be this OH WOW effect that would bring the robots to life. In practice it’s kind of subtle. But it’s good. (Enough. (For now.))

Now we need a character for the player.

I went through a lot of designs over the course of a week or so. I didn’t take screenshots of the early versions, but the figure in the lower right is what I settled on:


The player is a robot, and their character is a little bit cute and a little more “human” than the robots they’re fighting. I was aiming for gender-less. Not sure how well I succeeded. There’s nothing about it that screams out “THIS IS A MAN ROBOT” to me, at any rate.

It’s called an “avatar” internally, a habit I picked up at Activeworlds where all human-controlled models were called that. Outside of the source, there’s no official name for it. The avatar is made of several different sprites instead of a single fixed whole. This means that as they zip around the level, the torso can lean into the turns or lag behind ever so slightly when accelerating. This puts a lot of life into the little thing. The avatar has animated eyes like the other robots, although the avatar eyes look whichever way the mouse is pointing / aiming.

The left (from your perspective) arm shoots lasers, and the right arm shoots missiles. And now I realize I added missiles to the game without mentioning it. I also didn’t mention them in the next entry, which is already written but which you won’t see until Friday.

See what I mean about this being confusing?

Gah. Here we are at the end of an entry and I’ve managed to document ONE feature. Considering at this point in the project I was adding a half dozen features a day, I was working 6 days a week, and these blog entries only come out three times a week, this is clearly unsustainable. We will never catch up like this.

So you get a double-sized entry today. Let’s talk about game controllers. The only controller I have is the USB XBox controller for the PC:


I did have another off-brand controller around here, but I seem to have misplaced it in the move. For the purposes of what we’re doing here, it doesn’t really matter.

For the record: I’m talking to the joystick through SDL. SDL is nice, clean, lightweight, and cross-platform. I could also talk to the joysticks through the DirectX library, which I think is called DirectInput. Or maybe I’m remembering wrong. It’s been more than a decade. At any rate, this would marry my project to DirectX, which is massive, complex, and would limit me to platforms supported by Microsoft. It would also give my game a bad case of DirectX dependency. Many of you have no doubt installed a game and run into the situation where it said, “Installing DirectX step 1 of N” even though DirectX was up to date, in which case you’ve seen this terrible sickness.

Basically there are an endless number of different versions of DirectX. There are versions and sub-versions and in-between versions and each of them has its own binaries. Games are usually built against one particular version, and getting the various nearly identical but ever-so-slightly distinct versions to coexist is apparently a major headache.

So screw DirectX, is what I’m getting at.

Here is how the controller looks to the software engineer:


Yes, the trigger buttons are actually a single analog stick. If neither trigger is down, then the stick is in the neutral position. If one trigger is down then this “stick” is pressed one way and if the other trigger is down it’s going the other. If you squeeze both triggers at once they cancel each other out. This is kind of interesting. I didn’t know that about the trigger buttons. That does place limits on how we can use it. I don’t know if this is how it’s “supposed” to work, or if this is a limitation of interfacing with a Microsoft controller using a non-Microsoft interface. It does seem strange to design a controller with trigger buttons that are mutually exclusive.
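A tiny sketch of why a shared axis loses information. The sign convention here (left pushes positive, right pushes negative) is an assumption for illustration, and `combinedTriggerAxis` is not a real API:

```cpp
#include <cassert>

// Each trigger reads 0.0 (released) to 1.0 (fully squeezed).
// Assumption: left pushes the shared axis positive, right pushes it negative.
inline float combinedTriggerAxis(float leftTrigger, float rightTrigger) {
    assert(leftTrigger >= 0.0f && leftTrigger <= 1.0f);
    assert(rightTrigger >= 0.0f && rightTrigger <= 1.0f);
    return leftTrigger - rightTrigger;
}
```

Once the two values are folded together like this, "both triggers fully down" and "neither trigger touched" produce the same reading, so the game can't tell them apart.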

The green X brand button in the middle of the controller is invisible to me. If I was using DirectInput I could probably see it, but since I’m talking to the hardware through SDL I don’t know it’s there and I can’t tell if the user is pressing it. But who cares?

The d-pad is technically a “hat” control. You probably remember hat controls from those mid-90’s joysticks:


I imagine the reason for this distinction is that while the hat can technically be thought of as four direction buttons, in practice it has a limited number of mutually exclusive states. You can’t physically push the hat right and left at the same time. You can’t press both “hat up” and “hat down”.
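SDL reports the hat as a small bitmask, which fits this picture. The sketch below copies the numeric values of SDL's `SDL_HAT_UP`, `SDL_HAT_RIGHT`, `SDL_HAT_DOWN`, and `SDL_HAT_LEFT` into local constants so it compiles without SDL; `decodeHat` and `isPhysicallyPossible` are hypothetical helpers, not SDL functions.

```cpp
#include <cassert>
#include <cstdint>

// Local copies of the SDL_HAT_* bit values.
constexpr std::uint8_t kHatUp = 0x01;
constexpr std::uint8_t kHatRight = 0x02;
constexpr std::uint8_t kHatDown = 0x04;
constexpr std::uint8_t kHatLeft = 0x08;

struct HatButtons { bool up, right, down, left; };

// Split the bitmask into four button-like flags.
inline HatButtons decodeHat(std::uint8_t hat) {
    assert(hat <= 0x0F);  // only the low four bits carry meaning
    return {(hat & kHatUp) != 0, (hat & kHatRight) != 0,
            (hat & kHatDown) != 0, (hat & kHatLeft) != 0};
}

// A physical hat can only be centered, on an edge, or on a corner.
inline bool isPhysicallyPossible(std::uint8_t hat) {
    if (hat > 0x0F) return false;
    const HatButtons b = decodeHat(hat);
    return !(b.up && b.down) && !(b.left && b.right);
}
```

Of the sixteen combinations four bits allow, only nine pass `isPhysicallyPossible`: centered, four edges, and four corners.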

And while all of this is kind of interesting and important to people who design these input devices, it’s all very tedious and beside the point to a would-be game developer. When I’m trying to figure out if the user is pressing a “go right” button, I really don’t care if that button is a joystick button, a hat button, an analog stick, a keyboard button, or a mouse button. All I care about is if they are doing it.

In terms of physical hardware, we have seven distinct forms of input:

- Analog stick.

- Hat control.

- Joystick buttons.

- Mouse movement.

- Mouse buttons.

- Keyboard standard keys.

- Keyboard special keys like shift, control, alt, or numlock.

Each of these input types has a unique way of querying it. Sometimes SDL tells me, but sometimes I have to ask. Sometimes the answer is obvious and easy to read, and sometimes the answer is complex and needs to undergo some sort of scaling and conversion. (Like mouse speed or joystick dead zone, for example.) But in practice, there are only three distinct forms of input:

- Analog stick

- Mouse movement

- Buttons. Any button.
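The "scaling and conversion" step for a joystick dead zone might look something like the sketch below. SDL reports each stick axis as a signed 16-bit value; the dead-zone size of 8000 and the name `applyDeadZone` are assumptions for illustration, not values from this project.

```cpp
#include <cassert>
#include <cmath>

// Convert a raw axis reading (-32768..32767) to -1.0..1.0,
// ignoring small readings near the center of the stick.
inline float applyDeadZone(int raw, int deadZone = 8000) {
    assert(deadZone >= 0 && deadZone < 32767);
    const float maxRaw = 32767.0f;
    float magnitude = std::fabs(static_cast<float>(raw));
    if (magnitude <= static_cast<float>(deadZone)) return 0.0f;
    if (magnitude > maxRaw) magnitude = maxRaw;  // -32768 counts as full deflection
    // Rescale so the output starts at 0 right at the edge of the dead zone.
    const float scaled = (magnitude - static_cast<float>(deadZone)) /
                         (maxRaw - static_cast<float>(deadZone));
    return raw < 0 ? -scaled : scaled;
}
```

Rescaling after the cutoff avoids a sudden jump in speed the moment the stick leaves the dead zone.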

It’s really inconvenient to have to poll all these different input channels, so what we need is a system of virtual buttons. I create a layer of pretend buttons and we feed all these disparate input channels into this one list of inputs. While the user is playing, I can query if they’re pressing button X without concerning myself with where X is physically located or what sort of button it is.
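One possible shape for that layer, as a sketch only. The button names in `VButton` are hypothetical (the post doesn't list its virtual buttons), and the real system may be organized quite differently.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Hypothetical action list for illustration.
enum class VButton : std::size_t {
    MoveLeft, MoveRight, MoveUp, MoveDown, FireLaser, FireMissile, Count
};

class VirtualButtons {
public:
    // Call once per frame before feeding in the hardware state.
    void beginFrame() { down_.fill(false); }

    // Any source (key, mouse button, joystick button, hat) may hold a
    // virtual button down. A released source never cancels another source.
    void feed(VButton button, bool pressed) {
        assert(button != VButton::Count);
        if (pressed) down_[static_cast<std::size_t>(button)] = true;
    }

    // What the game asks, without caring where the input came from.
    bool isDown(VButton button) const {
        assert(button != VButton::Count);
        return down_[static_cast<std::size_t>(button)];
    }

private:
    std::array<bool, static_cast<std::size_t>(VButton::Count)> down_{};
};
```

Each frame the polling code would call `feed` once per physical source, and gameplay code would only ever call `isDown`.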

This also lets me create new abstract or amalgamated buttons. I can create a generic “joystick up” button. If the user nudges the left analog stick up and releases it again, it generates a keypress on this new imaginary button. If they hit up on the D-pad, it generates the same keypress. This means I can ask even more abstract questions, like “Is the user trying to move up?” and not worry about how they are expressing this decision. It’s not terribly useful for gameplay, but it’s a must-have if you’re going to navigate menus.
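Turning a stick nudge into a single keypress means watching for the moment the stick crosses some threshold. A minimal sketch, where the 0.5 threshold and the names `AxisButton` and `menuUpPressed` are assumptions:

```cpp
#include <cassert>

constexpr float kPressThreshold = 0.5f;  // assumed value for this sketch

// Turns a continuous axis into a button that reports press events.
class AxisButton {
public:
    // value is the stick deflection in the direction of interest, 0.0 to 1.0.
    // Returns true only on the frame the stick crosses the threshold.
    bool update(float value) {
        assert(value >= 0.0f && value <= 1.0f);
        const bool nowDown = value > kPressThreshold;
        const bool pressed = nowDown && !down_;
        down_ = nowDown;
        return pressed;
    }

    bool isDown() const { return down_; }

private:
    bool down_ = false;
};

// "Is the user trying to move up?" regardless of which control they used.
inline bool menuUpPressed(bool stickPressed, bool dpadPressed, bool arrowKeyPressed) {
    return stickPressed || dpadPressed || arrowKeyPressed;
}
```

Holding the stick up produces one press, not one per frame, which is what you want when stepping through menu items.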

With all of this done, I end up with a nice clean input system. I shouldn’t need to touch it again unless this turns into an actual game, in which case I’d probably need some kind of way to remap keys, which is a complete pain in the ass to support properly. In order to let the user re-map keys, you need a robust menu system, you need to be aware of all the possible inputs even if they don’t exist on keyboards in your country, and you need to know the proper written names of those buttons so the user can see that the selection they made is the one they wanted. (The piss-easy way to do it is to just jam all those settings in a config file, which hasn’t been tolerated since the 90’s. I should know. I’m one of the people who doesn’t tolerate it.)

One thing to note is that the hardest part of adding controller support was getting it to handle hot-plugging of joysticks. When you start up SDL, it looks around, takes an inventory of the available joysticks, and sets them up for use. But if you plug one in afterward it doesn’t notice or tell you about it. Not even if you ask.

This is made worse by the fact that some genius at Microsoft decided that when the wireless controller loses connection it should yank the device away from you as if someone physically unplugged the joystick. I have no idea why. The receiver is still plugged in.

This is annoyingly common. If the batteries are starting to go, that will lead to a disconnect. If you set the controller down for more than a couple of minutes it goes to sleep, which is also a disconnect. To your game, this doesn’t just mean you stop getting input. It means the device itself vanishes.

A lot of games (indies in particular) don’t take this into account, which means that if the device isn’t awake when you launch the game, you will never, ever get the game to acknowledge the controller. You have to exit the game and re-launch it to use the controller. (Which sucks, since the most likely thing is for the user to launch the game and THEN pick up the controller.) In some cases the game doesn’t handle the re-connect properly, which means that a disconnect will force you to relaunch the game if you want to use the controller again.

It turns out the secret to fix this is to shut down the SDL joystick subsystem and restart it at regular intervals. Like so:

    //No joystick active. See if one showed up.
    SDL_QuitSubSystem(SDL_INIT_JOYSTICK);
    SDL_InitSubSystem(SDL_INIT_JOYSTICK);
    if (!SDL_NumJoysticks())
      return; //Still nothin'

I mention this only because it was really, really hard to track this down. I found this buried deep in a forum somewhere. I didn’t even know you could shut down individual SDL subsystems like this. I have no idea if there’s a performance cost to doing this, so I only do the check every five seconds.
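The "only every five seconds" part could be wrapped up as below. This is a sketch, not the game's code: `JoystickWatcher` and `notifyDisconnected` are hypothetical, and the `rescan` callback stands in for the quit/init pair plus a joystick count so the example runs without SDL.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <utility>

// Throttles an expensive "look for joysticks again" step.
class JoystickWatcher {
public:
    // rescan should restart the joystick subsystem and return how many
    // joysticks it found afterward.
    explicit JoystickWatcher(std::function<int()> rescan,
                             std::uint32_t intervalMs = 5000)
        : rescan_(std::move(rescan)), intervalMs_(intervalMs) {
        assert(rescan_);
    }

    // Call every frame with a millisecond clock (SDL_GetTicks would do).
    void update(std::uint32_t nowMs) {
        if (connected_) return;                         // nothing to look for
        if (nowMs - lastScanMs_ < intervalMs_) return;  // too soon to scan again
        lastScanMs_ = nowMs;
        connected_ = rescan_() > 0;
    }

    // Call when the active joystick vanishes, so scanning resumes.
    void notifyDisconnected() { connected_ = false; }
    bool connected() const { return connected_; }

private:
    std::function<int()> rescan_;
    std::uint32_t intervalMs_;
    std::uint32_t lastScanMs_ = 0;
    bool connected_ = false;
};
```

Because the subtraction is done on unsigned values, the interval check keeps working even when the millisecond counter wraps around.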

With this in place, I can handle the strange comings and goings of Xbox wireless controllers without forcing the user to exit the game.

The game feels very different with the controller. Unlike in an FPS, this is more of a trade-off than a handicap. It’s a little easier to set up really precise long-distance shots with the mouse, while the analog stick just doesn’t have the same ability to make fine micro-adjustments. On the other hand, moving with an analog stick is a lot nicer than with the keyboard. WASD are obviously binary indicators, and the only way to go slow is to flutter the keys. Worse, you can only go in the 8 compass directions with the keyboard, while the controller allows you to fly in nice swooping curves if you want to.
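To see where the eight directions come from, here is a small sketch of turning four on/off keys into a movement vector. `keyboardMove` is a made-up name, and the convention that y grows upward is an assumption.

```cpp
#include <cassert>
#include <cmath>

struct Move { float x, y; };

// WASD as four on/off keys. The result is either zero or a unit vector,
// so speed is all-or-nothing and opposite keys cancel out.
inline Move keyboardMove(bool w, bool a, bool s, bool d) {
    Move m{static_cast<float>(d) - static_cast<float>(a),
           static_cast<float>(w) - static_cast<float>(s)};
    const float len = std::sqrt(m.x * m.x + m.y * m.y);
    if (len > 0.0f) {
        m.x /= len;  // normalize so diagonals aren't faster
        m.y /= len;
    }
    return m;
}
```

Each component can only be -1, 0, or +1 before normalizing, which gives nine combinations: standing still plus eight headings. An analog stick can report any angle and any magnitude in between.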

I don’t think one is significantly easier than the other, but they do feel very different in your hands.

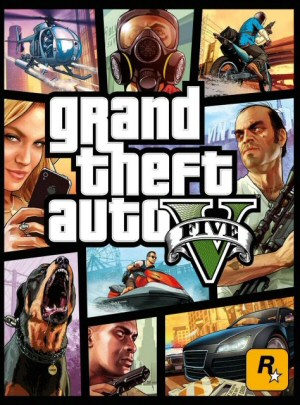

Grand Theft Auto Retrospective

This series began as a cheap little 2D overhead game and grew into the most profitable entertainment product ever made. I have a love / hate relationship with the series.

Autoblography

The story of me. If you're looking for a picture of what it was like growing up in the seventies, then this is for you.

Bethesda NEVER Understood Fallout

Let's count up the ways in which Bethesda has misunderstood and misused the Fallout property.

Fable II

The plot of this game isn't just dumb, it's actively hostile to the player. This game hates you and thinks you are stupid.

Playstation 3

What was the problem with the Playstation 3 hardware and why did Sony build it that way?

T w e n t y S i d e d

T w e n t y S i d e d

You might want to look into SDL2, which had its first stable release a few weeks ago. Its new Joystick API is allegedly better.

It also supports newer versions of OpenGL than 1.2 out of the box without you having to query the function pointers yourself (or using GLEW or something similar), and supports OpenGL ES for mobile devices.

(DISCLAIMER: I personally haven’t used SDL2 yet. Also, they yanked Audio CD support, which sucks.)

SDL2 is a massive improvement in certain areas, but it’s also a massive change in many areas. There’s nothing like switching an engine midproject to introduce bugs like crazy while you redo the work you’ve already done.

Plus I like giving the mailing list a few months to work through a stable release and find the more egregious discrepancies between the docs and reality (SDL 1.2’s SDL_HWSURFACE doesn’t actually do anything on Windows, for example).

SDL has had some good developments in 2.0.0, especially for indie development.

First the license was changed to zlib (from LGPL), meaning that you can modify it or static link it to your binary without making your project open source. This is especially useful for me since I program in Go, which favors static linking to produce binaries not dependent on many DLL’s. With SDL statically linked, I can do window management, 2d graphics, sound, and input with a single binary only dependent on system libraries.

Second the new Controller API allows you to detect XBox 360 and similar controllers (or in my case a PS3 controller pretending to be one) and treat them as such (15 buttons, two sticks, and two triggers) with a (relatively) known layout of buttons and sticks. I believe it resolves the 2-trigger issue, but as I mentioned I only use a PS3 controller masquerading as a 360 controller. It also can get controller mappings from Steam, so if you plug in a joystick to Steam, set up its buttons in big-picture, and then run your app through Steam (I add my Terminal as a Custom “Game”), it shows up as a 360 equivalent “Controller”. The full controller API isn’t fully documented yet on the Wiki, but I was able to tell most of what’s-what from the header file http://hg.libsdl.org/SDL/file/default/include/SDL_gamecontroller.h

Third the 2d drawing API has been split between surfaces, which are nearly identical to 1.2 surfaces, except that there is no concept of HW surfaces (cutting down on surface locking), and textures/renderers which give an interface that makes it easier to do 2d HW accelerated rendering properly (and is much better supported in X11, Quartz, and Windows), but restricts the user because it doesn’t allow direct access to the pixels in a similar way to OpenGL.

BTW: the HW_SURFACE of SDL 1.2 didn’t work anywhere I used it (linux X11, Windows, or OS X). The only time I was able to get it to work was for the DirectFB driver in Linux, which meant shutting down X11.

GAH! No! Statically linking SDL is just wrong!

1) Bloat. So with 5 of those binaries installed, you’ve got SDL 5 times on your HDD. This is even worse than the standard Windows MO of failing library management.

2) Bugfixes and security fixes. So if your standard GNU/Linux package manager installs a new version of SDL, because SDL had a nasty bug, your program will not get that fix. Bad.

3) Did you compile SDL with the obscure sound output I like to use? Maybe I use plain ALSA, maybe PulseAudio, maybe ESD, maybe I’m still using OSS. Can I do that with your binary? Hell if I know

Dammit, system-wide libraries exist for a reason. Statically linking everything is 1000 steps backwards, not something to be proud of.

(And if you ask me, the change to zlib was a huge mistake, but I’m a GPL zealot, so YMMV)

You seem to be suffering from an irrational fear of static linking.

1) File size bloat is not a serious issue here. Any game beyond a toy app (or a heavily procedural one) will have significantly more space wasted in assets than game code, statically linked or not. The libSDL2.a library is 2MB on my system, however in a final linked app, far less of that will actually be added to the binary because the linker will only include symbols the code actually uses. Even ignoring the linker factor, 2MB * 32 games is 64MB, which isn’t a space issue for modern media, and hasn’t been so for quite a while.

2) Security updates in the face of a single game are a wash: update the binary for static linking, or update the library for dynamic linking, same difference. Why? In general, games on Windows and Mac (and many of those ported to Linux) are distributed with local copies of most of the libraries they use. On systems without a system-wide package management system it makes little sense to set up a more complicated installer to install these libraries system-wide (why complicate things?), and it’s also bad form to tell Mac/Windows users to install something else first to get the game working (if you didn’t include dependent libraries). The cost of including the libraries you use in your distributable is tiny compared to your actual product in most cases (#1 again). Also, packaging your libraries with your product (either statically or not) makes it far more difficult to break your app since you have to modify its actual installed files, not some system-wide libraries it depends on.

3) The multiple choice sound API (and other such systems) is a self-created problem only present on Linux. All other platforms have a single or small number of system-wide methods for sound, graphics, and input. Even so building a Linux build of SDL with most/all its supported alternatives for these options takes up little additional space compared to both SDL as a whole, and your final product (see #1 yet again). It may not be as future proof as you may like, but then again OSS, ALSA, and X11 are still around today, and are often emulated by newer systems to maintain compatibility. Finally, documentation, from a README to a simple list of system requirements is simple enough to make and will tell a user if their obscure sound system is supported or not, there is no guessing needed.

Extra Points:

4) Right now most “stable” linux distros won’t have SDL2 in them (it only just recently was considered stable enough for release), and so your linux variant of choice won’t have an option to install it for possibly a year or more (depends on the policies of the distro). You thus have to either rely on your users to manually install it to a location where your dynamically linked app can find it, or package it with the app and thus have a single distributable usable by essentially “all linux users” (barring GLIBC/Kernel incompatibilities). With dynamically linked libraries provided this way, an extra file per library is needed as opposed to simply being present in statically linked binaries.

5) Breaking changes can creep into any library. With a dynamically loaded library you have to hope that the ABI for the sections of the libraries you use never change. Whilst when using statically linked libraries you almost never need to worry about ABI, and your binary can’t break until you try to build it against a version of the library with an incompatible API. This is less likely, doesn’t affect users, and for the most part is completely under the developer’s control.

6) http://en.wikipedia.org/wiki/DLL_Hell

BTW: I originally only meant with my post that statically linking is now possible for SDL thanks to the change in license. For many situations dynamic linking SDL is not possible (popular consoles & handhelds), or provides more problems for near-zero actual benefits in the common case (Mac & Windows). Basically since SDL2 can now be statically linked, and I can think of plenty of cases where this is an advantage, SDL2 benefits from the change.

1) You claim 64M is not an appreciable amount of disk space. I claim death of a thousand cuts.

On the other hand, this isn’t a *huge* problem. It is, however, still a problem.

2) Security updates are *not* a wash. If the developer dynamically links the binary, they have to do *nothing* to get the security fix onto their users’ machines. If they either statically link or override LD_LIBRARY_PATH to find the library locally, then the users are beholden to the game developer to get the security fix actually applied on the system.

How many game developers are going to care about my system’s security? I bet I can count them using zero of my hands.

Same reason statically linking libz.a is a bad idea.

3) True about it only being a problem on Linux, but also, not a problem at all if you just dynamically link against the system libSDL…

4) The “policies of the distro” in my case are “build whatever it needs”, because “the distro” is not some nebulous group of people I may or may not trust, it’s *me*. (Everything possible is built from source.) If your game requires SDL2, I’ll look into what’s required to build SDL2, and probably just build it.

5) Er. That’s the whole reason sonames *exist*. You don’t release a new version of a library that has an ABI incompatibility, without changing its soname. (And you don’t release a new version of a library that has an API incompatibility without changing the -l flag required to the linker.) Any upgrade that *doesn’t* break ABI or API compatibility is applied to the system by changing the target of a symlink.

6) I have no idea what you’re trying to imply with that. I will choose to believe, however, that you’re attempting to imply that installing the library alongside the executable is a bad idea. I will 100% agree with that.

(That is, the problems with Windows are because everyone tries to distribute systemwide libraries, because Windows has no central package database — or independent maintainers who understand how the heck the system is supposed to work — and this causes problems. Yes. The fix is not to statically link against everything, the fix is to provide the central package database, and make the system understandable to others. But since they built the system to be sold, themselves, without the protections of the Linux ecosystem, this is not possible on Windows. Doesn’t mean the correct fixes are inappropriate on other systems.)

Please be aware that most of my arguments are made in the context of (closed source) game development, the primary use of SDL, especially on Mac/Windows.

1) I was more concerned with Bones’ assertion that 2MB of “wasted” space in the binary was important compared to the gigabytes worth of space that the assets of modern games consume. I think you don’t need to worry about the thousand cuts, when you’ve been crushed by a 20t boulder.

2) OS’s with a system wide package manager on the level of a Linux distribution are a serious minority especially in the game space. There, neither users, nor the OS providers can be expected to update SDL (a non-system library) on their own. I agree that a system-wide package manager is a great benefit to system stability and future developments, but it is not prevalent. On other systems the responsibility falls to the program developer to ensure that bug-fixes and security updates to SDL happen. It then makes sense to provide it locally. At that point either updating the binary, or the library is essentially the same effort.

3) Agree, only on Linux is there a problem. Time to weigh the benefit of supporting future theoretical sound system X, years after release, vs. benefits provided now.

4) If you’re rolling your own Linux (is that what you’re saying here?) then I can’t make any assumptions about the state of the libraries on your system, better provide them myself. Real example: lots of people (see distrowatch) use Ubuntu, or Mint (based on Ubuntu). Neither have SDL2 in their stable channels, and I don’t know whether it will be made available in backports later either for slow upgraders or LTS users. I want to release a game now using SDL2 to as many people as possible, I should package it (somehow) with my app… Not strictly a point for static linking, just that to provide a product for Linux that will use SDL2, you can’t rely on users having a version of a distro that has SDL2 for a while.

5) SO names require that the maintainer of the library recognize the introduced incompatibility and update the name (devs aren’t perfect either). Even if the name is updated, both versions of the libraries need to be installed until the app is updated. If I am developing a closed source game, then I have to stay on top of any ABI changes in the libraries I use and push out patches for the applicable distros, otherwise my app will eventually be “left behind”. This management has to be done for every distro I want to support. Side Note: for SDL, the opposite happened, since so many games depend on it, we were stuck with 1.2 under the same soname and ABI for such a long time.

6) The implication was that shared libraries (windows DLLs or not) present a minefield of problems you don’t have with static linking. At that point I realized that much of what I was writing (and going to write) would be re-treads of that article. I probably should have added some context.

I don’t hate Linux, I use it a lot, in fact. The systems that are in place to deal with the problems that can arise using dynamic linking are better than anywhere else. But, it often requires that I have open source (and properly licensed) code so that the wrinkles can be ironed out by distro maintainers or users. If I can’t let others help me (eg: I’m trying to sell a closed source game) then it’s my responsibility to maintain code for several distros or be left behind. I will have a bad user experience if some update or lack thereof outside of my game’s scope causes it to not work (see: Rage). Once I am required to bundle the libraries I use with my app, then static linking vs dynamic linking is no longer an issue of code-sharing, size, or automatic updates. Static linking in this situation can make things easier to build, test, and distribute.

I’m also not against dynamic linking, just the bile-filled reaction against static-linking, as well as some of the touted “advantages” of dynamic linking presented. If I need to do at-runtime plugin loading I’ll need dynamic linking. If I want to make an eventual “fix SDL yourself” release, then I’ll switch the linkage of SDL. If a library that I use is stable and ubiquitous I’ll dynamically link to a system version (eg: libc, libpthread, libm, zlib).

My original post was about how adding static linking support (legally) to SDL brings advantages, not “thank god I don’t have to dynamically link it anymore”.

What Bryan said.

Also, an example: Neverwinter Nights.

NWN’s Linux version was released in 2003-ish, and came bundled with a libSDL.so it dynamically links against. Good thing that it did, because its version has several small bugs I could fix easily by symlinking a system-library in its place.

As recently as last month, I found another small bug that made setting gamma fail on my laptop: https://bugzilla.libsdl.org/show_bug.cgi?id=1979 . BioWare doesn’t care about Neverwinter Nights anymore. nwn.bioware.com has been dead for several years now. If SDL were statically linked, I’d have to resort to trying to patch the NWN binary if I wanted that fix to apply (never fun). Instead, thanks to being able to symlink against a system binary, the fix is trivial.

Shipping the libraries with the game I’m okay with, as a necessary evil, as long as you can easily replace them with system libraries (Ogre, I’m looking at you).

My main problem with not being able to statically link libraries is that once someone wishes to uninstall your app, they end up with an increasing number of unused libraries lingering on their system from days gone by wasting an increasing amount of space. There is also another space issue to consider with DLLs. An individual DLL wastes more hard drive space than a statically linked one. Not only because statically linked will only use what is needed, but even if it did not, a DLL will be stored on the HD separately from the main executable, and due to a minimum hard drive block size on the hard drive, there will be wasted space there, whereas statically linking limits that to just the hard drive blocks used by the executable. When something I created is uninstalled, I want everything that was installed removed, without anything lingering. I also don’t like seeing 10000 files listed in the program directory when I am looking for a certain one. If the library turns out to have a bug, not a problem, as a developer I simply update my game and release a new version/patch for it that reflects these changes.

I also do not like someone dictating to me that I MUST release my source code and that I MUST not statically link because of some concept THEY think is best. I will avoid libraries that restrict my freedoms like the plague they are. Developers that try and impose limits based on what THEY think is right or wrong are a plague in my humble opinion.

You could write the posts while you are programming, but edit and release them later, when you know where the project is headed…

You could commit the posts while you are programming but edit and rebase them later. Just don’t forget where HEAD is.

Hi Shamus, long time reader of your blog here – you inspired me to get into programming and I have turned similarly-minded people onto you as inspiration.

Anyway, maybe you don’t want to hear about it, but there’s a nice 2D C++ OpenGL-based cross-platform “engine” (I’ve used a few times) called Angel. It handles lots of stuff very nicely (textures, fonts, menus, sounds, input, AI, particles, even some kind of garbage collection) for you, and the source is laid out nicely and well documented. It’s designed for fast development, so maybe you or someone reading this would have a use for it.

Thanks for the post!

The “both analog triggers are one axis” thing in the (official!) driver is weird indeed, and you have to use XInput directly if you want to use both at the same time.

This is why in the early development of X Rebirth (it wasn’t even called that yet) you weren’t able to fly and shoot at the same time when you used the controller.

I can only imagine that this is precisely why Resident Evil also did this ;-)

This is something that has been annoying me for years. PC games that are console ports (or have been developed simultaneously for PC and consoles) typically assume that you have the kind of fine control an analog stick gives. But on the PC, with a keyboard, all you can then do is the hardest turns and fastest accelerations, and only in 8 directions. This is what makes driving in games like GTA much more difficult on the PC than on a console (for me, at least).

But on the other hand, I utterly suck at aiming a reticule with a gamepad; I need a mouse for that. I even own an old off-brand gamepad with an analog stick from many years ago, and it is collecting dust. The only things I use it for are the SNES and N64 emulators, which I only rarely fire up these days. (Actually, I bought the thing for the sole purpose of playing Secret Of Mana on the PC with an actual gamepad.)

I suppose there are peripherals for PCs to accommodate that, like stand-alone analog USB thumbsticks or something along these lines. But using such a device would likely rob me of the sea of keys surrounding WASD, for which my muscle memory is now wired.

Le sigh.

Weeell, and I was really really annoyed when I tried to drive a rallye car for the first time on a console with a thumbstick after having become used to proper analog joysticks… these thumbsticks offer soo little control!

I still have my old joystick with z-axis, throttle control and a dozen or so buttons, but nobody wants to make games these days that would use it… (and honestly the quality is not very good. Shouldn’t there be optical joysticks these days that don’t need calibration anymore?)

I’d tend to think that Shamus’ robot would be best steered with a proper joystick in one hand and a mouse in the other. But then maybe that is something only possible for people who, like me, are left-handed but still used to operating a joystick with the right hand and a mouse with either…

Yeah, what I’ve always felt PC needs to truly get the best of both worlds, is the left half of a joystick to hold in your left hand (or right half, for lefties) and your other hand on the mouse. That way you have the precision of a mouse for long shots, while the analog stick for slow/fast movements or gentle curves. Make a comfortable handheld stick that has a thumbstick, triggers, and a half a dozen buttons (like 4 face buttons) and we’re gold.

I use a 360 controller in the left hand and a mouse in the right for L4D and L4D2. Those are the most recent FPS I have played extensively. In many games this isn’t possible, you have keyboard + mouse OR joypad and enabling either locks out the other. Incredibly annoying.

That was the promise of the Nostromo… which failed because Belkin/Razer implemented a hat instead of proper analogue, since it was easier to map.

To speed the writing process up, I suggest just bullet pointing new features in a daily (or twice-daily) post. If you really want to, you could setup a poll for readers to pick interesting ones for you to expand on (up to a couple of paragraphs only!) in the next post – but that introduces another 48h delay in the writing.

I wonder if a live stream of you programming would be interesting – maybe then you could hand the footage off to someone else to edit & narrate into highlights.

This seems like a good tradeoff. Regular updates on the little things, with bigger updates on important things.

So, when is the game coming out?

:-)

The poll is an interesting idea. Although, I would never vote because I’m not competent to assess what would be interesting and what wouldn’t. Fortunately for me, 97.5% of what Shamus does write he makes interesting, so I win in any event.

(I’ve docked 2.5% for the same reason a Philosophy or English teacher would never score an essay at 100%.)

Strange, I would vote precisely because I’m not competent enough to know what’s interesting.

I guess it should be possible to play with a gamepad in one hand and a mouse in the other as long as the game recognizes both inputs.

This is exactly what I was thinking :D

The eyes do add some personality to the drones, don’t they? I can see what you mean with that it’s not incredible, but it’s definitely an improvement. Is Square-y Red-eye in the game too?

I wonder how many people looking into disabling SDL subsystems are going to find their final answer here. Given that your site is fairly high-profile and you dug the original answer out of a forum somewhere…

Also, I’m glad to see I’m not the only one who works dumb comedy into his comments that nobody else might ever read.

You mean in the image caption? I’m not seeing anything there other than the picture file name…

Real fact: I get disappointed every time I hover over an image on the internet and don’t see a witty comment. BSoA has ruined the internet for me.

“(The piss-easy way to do it is to just jam all those settings in a config file, which hasn't been tolerated since the 90's. I should know. I'm one of the people who doesn't tolerate it.)”

Let me introduce you to Company of Heroes: To edit the hotkeys you need to use the modding tools because the controls aren’t simply in a config file but instead in a simple config file that is packed (and encrypted?) in an archive file. The modding tools can unpack them but they can never ever repackage them, making modifying hotkeys not only hard (like in CoH1) but completely impossible!

What is the problem with the config file? Is it that it becomes bloated?

Nope, I unpacked it a while ago and edited it. It is pretty simple and not much different compared to CoH1.

However, to use the edited hotkeys you need to repackage them and the modding tools cannot do that (yet?).

Overall, if it worked it would still be incredibly complex compared to just changing the hotkeys in the options like it should be possible in every proper game.

This matches my own experience with a gamepad. Harder to aim, but much easier to move. That’s a very good thing, IMO. More options for the player to experience your game the way they like it. Then again, I didn’t bother to make the controls configurable. >_>

Are you sure those triggers are mutually exclusive? I’m sure I’ve played FPS games on the XBox where you could dual-wield guns, with each trigger button controlling one of the guns, and pushing both triggers fired both guns simultaneously. (Did I explain that right?)

They are. I use my USB xbox controller to work my N64 emulator, and if you’re pressing L1 (say, z-targeting) and want Link to raise his shield (which is R1), it doesn’t work. It cancels out the initial press. L2 and R2 are actual buttons and don’t have this problem.

It’s really dumb. I know what you’re talking about, but I don’t know how the console FPSs do it.

The controller itself has no such limitation. The axis limitation is purely a driver issue and can be sidestepped by using a third-party driver.

If I had to guess, I’d say that the rationale behind it is simply backwards compatibility. I think the triggers are mapped to what most games use as the throttle by default, most likely so that it would work with older games. I’m not sure if you get full access to the controller by using XInput.

For what it’s worth, there are a number of other controllers that bump into the same limitations. I recall using a few wheels that had this issue (i.e. you couldn’t press the accelerator pedal and brake pedal simultaneously as they would “cancel each other out”).

Another fun little fact is that the d-pad buttons are also mapped individually by the hardware, so if you had a semi-broken controller (or a DDR mat) it could send out left+right and up+down. Again, the Xbox 360 controller driver in Windows prevents software from actually seeing that, as it maps it as a hat.

It’s not a hardware thing, it’s a software/driver thing. And it’s not even a Windows driver thing (Rage, for example, has no problem using the two triggers simultaneously.) I mean, Microsoft’s Microsoft, but they wouldn’t sabotage their own gaming controller in that way.

I think Shamus hit upon a bug/deficiency in SDL.

Yes, this is definitely not a restriction on the controller. Almost every shooter on the Xbox has aim mapped to the left trigger and shoot mapped to the right trigger. It would be awful if those were mutually exclusive. :)

I assume it works as you’d expect with Direct Input.

Here’s a really good video on making 2D stuff more interesting and alive. It covers eyes and stuff, so clearly the presenters know their stuff.

That is a good video. I’ve been thinking a lot about this since the project began. My thinking on this goes all the way back to the Errant Signal episode on kinesthetics, which in turn goes back to Steve Swink’s book “Game Feel”. (I actually tried to buy a copy of the book this morning, and found out the KINDLE VERSION(!) is forty dollars. I’m curious, but not $40 curious.)

This will probably get a great big post of its own when I get to week 2 of the project. Which, at the rate this series is going, ought to show up around 2018 or so.

This should go on your amazon wishlist!

Have you checked your local library?

Interesting. When I Google “Game Feel”, like fourth link is a link to the PDF of the book (pirated probably). It probably says something about my browsing ways.

Funny, that exact Errant Signal video led me to buy Game Feel for myself. It’s sitting on my table right now, with a bookmark a few chapters in…

Very cool video! Thanks for sharing.

It’s amazing how much more fun that game gets with “juice”. I wasn’t even playing and I still smiled when they turned on all the elements.

Ooo. I consulted a book on how to make games juicy (or was that just a chapter?) during a school game project. So I’m familiar with the concept. But this video is an excellent demonstration. Shared!

Seems to me like it isn’t terribly important that this series stay in chronological order, so I wouldn’t worry too much about covering everything before letting yourself talk about what’s currently going on. If you have a lot to say about a feature, we’d love to hear it — and similarly if you want to just list a change real quick then a single bullet point is enough.

(Really enjoying this series by the way)

Agree definitely that terribly important chronology isn’t.

Maybe I’m getting too old for all this, but it came as a bit of a shock reading this part and realising you’re using new-fangled 3D drawing techniques to create an old-fashioned 2D game.

To create the moving eyes, the first thing that came in to my head was drawing the iris masked off at the boundaries rather than using the graphics card to track the depth of the scene.

I think some aspect of the 2D/3D thing was covered in an earlier Good Robot post. Or maybe it wasn’t – I’m completely out of my depth here! Aaargh! *glug, glug*

Speaking as someone who spent some time working on code to fetch controller state directly, there shouldn’t be a reason for the two triggers to be opposed: they’re reported as a pair of adjacent bytes in the input stream.

I can’t confirm this with my own stuff quite yet, since my code is in the middle of some ~~over-engineering~~ perfectly reasonable architectural restructures, but similar code written using the same unofficial specs is perfectly capable of reading each trigger separately.

They don’t even have the same resolution as the other axes; it’s really weird that they’d be treated “the same”. (The sticks have a signed short for each axis, and the triggers have an unsigned byte.)

It varies by controller setting.

I don’t use an Xbox controller, I use Dualshock 3 controllers(I actually owned 2 of these for over a year before I bought a PS3) and run them through Motioninjoy which is slightly fantastic.

It has 4 built-in modes(PS1, all digital, no analog sticks, PS2 all digital, with analog sticks, PS2 with lots of analog support, PS3 with motion, Xbox 360) plus the option to redefine keys any way you want(I have a setup to play League of Legends), and if you have a supported Bluetooth device, you can pair the controllers to your computer.

Well, I’m specifically talking about writing code to grab USB communication from an XBox 360 USB controller on a Mac, which gets me:

A device that totally could be a straightforward gamepad, except it doesn’t feel like it.

An unofficial driver that’s fragile enough to reliably get me to OS X’s tastefully gradiented, multilingual BSoD.

No official support except for, of all things, Chrome build 29, which support I can’t figure out how to turn off. (See, it grabs the controller for itself, which means my code can’t touch it.)

I can’t figure out how to get the bizarre, multi-party edifice of USB code the OS gives me to handle straightforward situations gracefully, so I’m jumping through weird hoops in my design process to get it to work.

Oh. A Mac.

Well bummer. I don’t know anything about controller support on a Mac. Motioninjoy is Windows-only.

Sorry about that.

I really liked the control scheme of the first Metroid game that came out for the Wii. Your left hand gets an analog stick for moving around and your right hand gets a very good (it used the IR camera, not the accelerometers) pointer. It would necessitate sitting farther from the screen, but you might want to consider trying out a Wiimote for controls. I don’t know anything about Windows drivers for them, but I tinkered with one in Linux and they show up as regular old Bluetooth HCI.

Somewhat alternatively, there’s the nunchuck/mouse approach. It would still require wiimote drivers, but you don’t need to stick IR LEDs on your screen and sit far away from it.

Personally, I can never get Wii FPS controls to work. I don’t know if it’s the overhead lights and/or the window behind the TV interfering or what, but if I hold the pointer in place for a couple seconds, it seems to fall asleep, locking my direction until I shake the remote a bit to wake it up. That particular problem only ever occurred in Metroid Prime 3, but I did have plenty of issues with the cursor position getting unaligned in The Conduit and Skyward Sword.

Cursor unalignment (totally a word) is a common issue with Skyward Sword, which I assume is the reason they enable you to realign the cursor in game without turning off the controller. I think it was an issue with the Wii Motion Plus system. Not sure about The Conduit, but I definitely never had that problem with Metroid Prime 3. Having played FPS games using Xbox, keyboard & mouse, and Wii controllers, I have to say the one that felt the most natural and precise for me was the Wii. Like Nathon said above, the Nunchuk controller with an analog stick and two triggers plus the IR Wiimote was perfect for me.

“WASD are obviously binary indicators, and the only way to go slow is to flutter the keys.”

You’re well aware of just how many buttons a keyboard has. Especially for a twin-stick type game, there are plenty of spare buttons that can function as a velocity limiter or a brake. :) Doesn’t give quite the fine control of the analog sticks, but it’s a big improvement to go from tapping D to simply holding Shift-D for slower movement, in my opinion. Whether this is a useful feature is of course better answered by someone who’s played the game.

It’s a small improvement, but it’s actually much, MUCH worse for what you would call “beginning gamers.” There was a certain elegance and simplicity to the NES. If you wanted to do something, you had 8 possible buttons to choose from, at least 2 were usually foregone, and you could typically guess from 2 or 3 by process of elimination.(commonly-used actions aren’t going to be on the Select or Start button, jumping is going to be up, A, or B)

As games have developed, game controllers and control systems have gotten exponentially more complex, to the point that if you stuck the kid you were when you first picked up an NES or SNES controller in front of Halo, he would probably be fairly confused and not have much fun. To appeal to casual gamers, you MUST design a control scheme with a low button count.

I’m curious why config files are no longer tolerated in this context? I still see a lot of games with various types of config files. I find them extremely convenient as an end-user.

In the old days I didn’t mind so much. But now every game has their own place to hide their settings.

Is it under user/SavedGames? Or user/Gamename/settings? user/files/publisher/company/game/settings? Or under the folder where the game is installed? Or one of the folders under it? The permutations are endless, and doing a * search on your hard drive(s) just to find a stupid text file is cruel.

And then there’s the problem of making the file make sense. If the file says:

[Keys]

FireMissile=E

Okay. I want to change that to use the shift key, for whatever reason. Should that be:

FireMissile=Shift

FireMissile=Left Shift

FireMissile=LShift

FireMissile=Left_Shift

FireMissile=Left-Shift

And you try each of them and none of them work. So you google it, and then after twenty minutes you discover it’s actually

FireMissile=LfShift

So config files involve a scavenger hunt and a lot of guesswork on the part of the user. And of course, not everyone playing the game has the technical savvy to make use of them.

This issue, however, could easily be mitigated by a bit of commenting in the original config file. And personally, I don’t mind manually looking through a game’s directory for config files. It’s much better than manually looking through Windows’ maze of appdata/documents/user/public/roaming/local/whatever directory mess.

Edit: Woah, this comment got into the moderation queue. Is “Windows” one of those bad words now?

It’s probably all of the forward slashes. Makes it look like you’re some kind of robot.

I was assuming it was the phrase “And personally”; that has a tendency to be followed with bad things.

Hah! I came here to post my config frustration story related to exactly that reason AND with a very similar example.

Except, ridiculously, the person who had made the game (a small indie) had been VERY fond of shortening stuff. So left shift? “ltshft” and “rtshft” for the right one.

For the controller triggers? Which, and I can’t recall why but they could be remapped; “rtrg” and “ltrg”.

Other highlights included “rtrn”, “spcbr”, “arwlt”, “arwrt”. My personal theory was that the fellow had a strong aversion to vowels.

M(y)b vwls klld thr prnts.

The avatar indeed looks a little like the silhouette of the non-gendered offspring of WALL-E and EVE.

Oh great, thanks. You just undid some of my brain bleaching from a couple of years ago.

I happened to see a cartoon depiction of said characters engaged in the early stages of production of such offspring – mammal style, with organic bits drawn on both. I lose no sleep over Rule 34 itself, but that thing just looked so stupid I had to erase it from my memory. And now it’s back again. :C

(Had the artist drawn the.. eh, interlocking mechanisms with non-biological components, I’d just have thought “meh” and moved on unperturbed. :/ Do I score a nerd point with this?)

Here’s an idea: DOUBLE MOUSE SUPPORT! Make the trickiest shots while maneuvering through a maze of bullets like it’s no thing.

That’d probably be kinda cool. And realistically, who doesn’t have a spare mouse floating around the house somewhere? I swear those things are breeding in the bottom of my cable drawer. But you’d almost certainly have to write a new mouse driver to get it to cope with multiple simultaneous inputs separately.

“And realistically, who doesn't have a spare mouse floating around the house somewhere?”

I count three on my fiance’s desk alone (four if you count the laptop’s trackpad). Adding my mouse, that’s four (or five) in just this room. We probably have at LEAST three more hiding in the Room O’ Computer Bits.

BUT WHAT WILL I DO WITH MY WASD HAND!?!?! I NEED THREE ARMS!

Used to love Cybersleds, which was a dual-joystick set-up. Each stick controlled a “tread,” so if you pushed them forward, you went forward, but you could also slide left and right, or spin on an axis.

Favorite control system ever.

Alas, fitting 2 sticks and a keyboard on the desk is a challenge, and most games don’t support the input system.

Mouse,Keyboard,Joystick,Gamepad,Pedals,Whatever.

I created an abstraction for these in a project of mine (not a game) where, whatever the raw values of the device, they were translated to a 0.0 to 1.0 range.

Where 0.0 is not press/inactive and 1.0 is fully pressed/fully active.

This means a pedal would be at 0.5 if pressed halfway down.

While a keyboard button would obviously be either 0.0 or 1.0 depending on whether it’s pressed or not.

And the neat thing is that the addition of deadzones is easier this way too.

It also makes it possible to treat analog as if it was digital.

For example, as you push a trigger from none to max, it could read 0.0 for the first half and then switch to 1.0 once it goes past the halfway mark. (0.0 to 0.49 = 0.0 and 0.5 to 1.0 = 1.0)

This even works nicely with a hat switch though in that case instead of values from 0 to 359 (or 1 to 360, or -180 to +180), it’s just 0.something to 1.0 (0.0 being hatswitch center) Although I guess you could also do a x and y on the hatswitch, in which case x=0.0 and y=0.0 would be center, myself I prefer doing it the latter way, WASD mapping and DPAD would be similar to that as well.
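For anyone who wants to see the idea in code, here is a minimal C++ sketch of that kind of abstraction. It is only an illustration of the 0.0 to 1.0 scheme described above, not the actual project code; every name in it (`Normalize`, `NormalizeKey`, `ApplyDeadzone`, `AsDigital`) is made up for the example, and the deadzone is assumed to be smaller than 1.0.

```cpp
#include <algorithm>

// Hypothetical helpers: none of these names come from a real library.

// Map a raw device reading in [raw_min, raw_max] onto
// 0.0 (not pressed / inactive) .. 1.0 (fully pressed / fully active).
inline float Normalize(int raw, int raw_min, int raw_max) {
    float t = static_cast<float>(raw - raw_min) /
              static_cast<float>(raw_max - raw_min);
    return std::min(1.0f, std::max(0.0f, t));
}

// A keyboard key is the degenerate case: it is only ever 0.0 or 1.0.
inline float NormalizeKey(bool pressed) {
    return pressed ? 1.0f : 0.0f;
}

// Ignore readings at or below `deadzone`, then stretch what is left
// back onto 0.0 .. 1.0. Assumes deadzone < 1.0.
inline float ApplyDeadzone(float value, float deadzone) {
    if (value <= deadzone) return 0.0f;
    return (value - deadzone) / (1.0f - deadzone);
}

// Treat an analog input as if it were digital: it counts as "pressed"
// once it reaches the threshold (the halfway mark by default).
inline float AsDigital(float value, float threshold = 0.5f) {
    return value >= threshold ? 1.0f : 0.0f;
}
```

With this, a pedal reporting 128 on a 0 to 256 scale comes out as 0.5, and the game code never needs to know whether the value came from a pedal, a trigger, or a key.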

I wrote a thin shim on top of the Linux joystick “API” (I call it an API, because it kinda was, but actually it was a stream of frames on a special file, at some sort of rate) and ended up using a similar method, although I treated each axis as being from -1 to 1 (from “fully left” to “fully right”).

I did have the concept of “translation functions” you could tell the interface I wrote to use, though. With at least the stick I had, it was glorious using a “cube the value” translator. Gave you a somewhat unresponsive around-dead, a good precision middle and a nice comfortable “fully engaged although you are not quite at the extremes of the stick physical range”.

I don’t recall what I did for buttons, I think they may have just been bitmasks.

I do remember the horror of having a dedicated thread reading /dev/jsX through, with the locking required to get the ever-changing “real” state into something that was sanely usable from code.
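A rough C++ sketch of the "translation function" idea, for illustration only. The names (`Translator`, `Linear`, `Cubed`, `Axis`) are invented for this example and are not from the shim described above; the only thing taken from the comment is that an axis runs from -1 to 1 and that cubing the value is one useful curve.

```cpp
#include <functional>

// Hypothetical names throughout; this is a sketch, not the original shim.

// A translator takes an axis value in -1.0 .. 1.0 and returns a reshaped
// value in the same range.
using Translator = std::function<float(float)>;

// Pass the stick position through unchanged.
inline float Linear(float axis) { return axis; }

// Cube the value. The sign survives (odd power), small deflections are
// flattened toward zero, and the ends still reach exactly -1.0 and 1.0.
inline float Cubed(float axis) { return axis * axis * axis; }

// One axis with a pluggable response curve.
struct Axis {
    Translator translate = Linear;

    // `raw` is the already-normalized stick position in -1.0 .. 1.0.
    float Read(float raw) const { return translate(raw); }
};
```

Pushing the stick 10% of the way only yields 0.001 with the cubed curve, which is where the soft feel around the center comes from, while half deflection still gives a usable 0.125.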

I’m totally okay with the double-sized entry.

And it was my understanding that both triggers register as a single “stick”, one being X and the other Y.

They register as a single axis in DirectInput, so pulling both at the same time makes them cancel out. XInput fixes it.
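To make the cancelling concrete, here is a tiny C++ model of two triggers folded into one axis. This is a sketch of the arithmetic only: the function name `CombinedAxis` and the exact sign and scaling are assumptions, not what the driver literally does. As far as I know, XInput avoids the problem by reporting the triggers as two separate byte values (`bLeftTrigger` and `bRightTrigger` in `XINPUT_GAMEPAD`).

```cpp
// Hypothetical model, not real driver code.
// Each trigger reads 0 (released) .. 255 (fully pulled).

// Fold both triggers into one axis: -255 .. 255, with 0 as neutral.
// The sign convention here is an assumption made for the example.
inline int CombinedAxis(int left, int right) {
    return right - left;
}

// With separate values nothing is lost: the pair is reported as-is.
struct Triggers {
    int left;
    int right;
};
```

`CombinedAxis(0, 0)` and `CombinedAxis(255, 255)` both come out as 0, so a game reading only that axis cannot tell "both released" from "both fully pulled".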

I really like the effect adding the eyes made. I had been looking at the running lights as the “face” of the robots, so I saw the middle one as pointing down and the right-most one pointing up. Adding the eyes made a big difference in how my brain interpreted the scene.

While you may have covered this in the time between when this post was in progress of being programmed till now, I am kind of curious what kind of direction you are thinking with sound design. You are kinda borrowing on the art design from games that tend to have more serious, sweeping melodies. But as gameplay you are kinda touching on descent and scrolling shooters.

Since sound design can have a major effect on people, I am kinda curious to hear (both through you and the game) how you are establishing the tone. Descent style kinda semi-electronic music designed to pump the beat up, more serious music to make the player feel tense? Or just say screw it and have legendary guitar solos… and explosions.

In any case, looking better with every post, really enjoying this series, thanks Shamus.

Dubstep. Calling it now.

Polka.

With record scratch sound effects every time you kill an enemy.

“A lot of games (indies in particular) don't take this into account”

Possibly because we only have “off brand” controllers attached with wires and have never encountered that stupid feature? Because we stopped after we got the first XBox (ALMOST WROTE XBOX ONE DAMN YOU MICROSOFT) and don’t have a 360 controller? I think those are acceptable excuses for failing to adequately serve the consoletarded hegemony with our game(s) designed for personal computers.

While I’m a little late to the party, I have this to say with respect to how you’re laying out this series:

– What made your previous series so compelling was a combination of your sense of humor, your ability to create similes that everyone can understand, and your immense fixation with the little side-roads that didn’t pan out… and WHY you did things the way you did, including why you threw away X amount of code. It’s the journey.

– To me, the current series isn’t as interesting and I’m not reading them the day you post them. They’re still very well written, but it feels a lot more like you’re writing down things that are required in order to get to the stuff you DO want to write about. (This would have been a more impressive prediction if I’d said it before you said the same thing, but here we are.)

My advice is, spend 100 words summarizing what you’ve done. Then get on to the stuff that you’re thinking about instead of falling asleep. That’s your crack, and it’s our crack too. :)

I really love the idea of virtual inputs. It also makes it possible to lump several keyboard keys into one “input” for people who are not so precise at key presses (like children, for instance).

I’ve wanted, for quite a while now, the ability to map a whole bunch of keys to the same action. Say the whole left side of the keyboard to “move left” and the whole right side of the keyboard to “move right”. It would make teaching computer interface to children so much easier! Their fingers are small, but those keys are small too.
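Something like the following C++ sketch would do it. It is a guess at one way to structure "many keys, one action", with an invented class name (`VirtualInputs`) and plain integers standing in for whatever key codes the input library hands you.

```cpp
#include <string>
#include <unordered_map>
#include <unordered_set>

// Hypothetical virtual-input table: many physical keys feed one named
// action. Key codes are plain ints here purely for illustration.
class VirtualInputs {
public:
    // Bind one more physical key to an action. Any number of keys may
    // share the same action name.
    void Bind(int key, const std::string& action) {
        key_to_action_[key] = action;
    }

    // Call these from the key-down / key-up event handlers.
    void KeyDown(int key) { held_.insert(key); }
    void KeyUp(int key) { held_.erase(key); }

    // An action is active while at least one of its keys is held.
    bool Active(const std::string& action) const {
        for (int key : held_) {
            auto it = key_to_action_.find(key);
            if (it != key_to_action_.end() && it->second == action) {
                return true;
            }
        }
        return false;
    }

private:
    std::unordered_map<int, std::string> key_to_action_;
    std::unordered_set<int> held_;
};
```

Bind every key on the left half of the keyboard to "move left" and it no longer matters which one a small finger lands on. Because held keys are tracked individually, releasing one of two held keys does not cancel the action.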

As far as re-mapping inputs, yeah, that’s a difficult problem. It seems like Unicode should help with this, but as you say, there are lots of devices that use all kinds of different formats for communicating data (analog for instance). I think the most straightforward way (from a user’s perspective) might be some kind of “training” window. Instead of filling out a config file, or looking through a menu list, just have a visual of the thing you’re controlling, and then have it respond to inputs exactly as it would when you are playing the game. In essence a “control test sandbox” right there in the button re-map. It’s really just a menu with feedback, but the feedback is super helpful, especially when messing with controls.

I remember reading once (I think it was in Game Informer) that creating a control scheme really depended on what the inputs were. The controls for a computer FPS were different, emphasizing the quick changes possible with the WASD keys, and that made them fun. Computer FPSs end up encouraging dodging and jumping and snap-shots at fast-moving targets. Console controls, though, don’t allow that, so console shooters settled into a system of circle-strafing and using cover.

So, if these entries are 3 weeks behind your current progress, don’t you fear the time when someone posts a comment “…but did you try this?…” and you have one of those head-slap-V8 moments, and wish you had made the post the day of that trouble you were having, instead of 3 weeks later???

More likely is the scenario:

“Hey why don’t you try this?”

(four months of posts later)

“Yeah, I tried that two weeks before you made the suggestion, and it didn’t work because of X”

XInput does indeed pick up each shoulder trigger individually.

From memory it was first set up as one axis for generic joystick drivers so that the default value for them was neutral instead of fully in one direction.

Or at least the justification was something like that.

XInput is actually a very simple API for supporting the xbox controller on pc, but of course a nice generic solution is better if you can be bothered with the extra effort (which clearly you could).

Mono-eyed floating enemies that shoot rays and beams?

If this game doesn’t have a Beholder boss fight, I’ll be crestfallen.

Since this post is also about controls, I’ll quote from Shamus’ earlier comment:

Yesterday I tried a demo for a 2D shooter (done in 3D, but gameplay takes place on a strict 2D plane) where the controls are the same. Mouse pointer aims the reticule, WASD control relative to the screen. I forgot how awkward that feels.

My brain can’t wrap itself around this. When trying to back away from an enemy, I automatically want to go backwards relative to the character, pressing W, not the screen direction my character’s back is facing. I am probably weird like that, but please consider an option to switch the controls to character-relative WASD. Otherwise I don’t think I’d ever be able to beat your game on easy.

Two things, one good, one bad.

I think the addition of eyes looks fantastic. To me at least, the switch between those screenshots WAS an “oh wow, these have come to life” moment. I think the eyes you made do a great job of instilling personality in the enemies.

The criticism is that I really don’t care for the avatar model. All the enemies look like they would be floating, while the player model looks like it would be rolling along the ground, and that makes it just not look right to me.

I am also being bugged by the asymmetry of it (especially in the eyes). I understand the idea of two different types of weapons, but having them clearly separated to a side each like this would make me feel like I was going backwards half of the time I think.

Obviously it is completely up to you, but I think I would like something less human(ish) more. Maybe it could be some kind of modification of the more basic enemy designs. More detail and bits, but like they all came from the same manufacturer or something. Could maybe fit into some kind of story of the player being a robot that was constructed improperly, and is now fleeing to avoid being destroyed. (they would be out to destroy you because you are a defective model.) Just an idea to throw out there.

In any case, this whole project looks awesome, I hope I get to play it at some point!

Pretty serious 1.5 year thread necro here, but looking through the archives made me realise something; doesn’t the PC version of Dragon Age: Inquisition have terrible trouble with controllers? As in, people were complaining about how the game only performed a controller check at startup and wouldn’t be able to pick the controller up again if it was disconnected and reconnected during gameplay?

Well here’s Shamus Young spotting and fixing that problem more than a year before Bioware launched their game :)

On the topic of button configs – isn’t it easiest to have a user config option be like:

“Go Up”

“Go Down”

“Fire”

etc.

And then highlight each one and let the user hit whatever key/button/stick direction they want, read what happened, and map it to your “virtual buttons”? You could even accept multiple inputs by having a dedicated “toggle through the list” button be the first thing selected.

Since you’re only on that screen if you want to customize your button configuration, having user instructions seems like it would be OK.
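In rough C++ terms, that flow could look like the sketch below. The types and names (`InputEvent`, `RebindMenu`) are invented for the example, and a real game would fill `InputEvent` from whatever its input library reports.

```cpp
#include <map>
#include <optional>
#include <string>

// Hypothetical rebind screen state; all names here are illustrative.

// One raw input as the game sees it, e.g. {"keyboard", 87}.
struct InputEvent {
    std::string device;
    int code;
};

class RebindMenu {
public:
    // The user highlights an action ("Go Up", "Fire", ...). The next
    // input event that arrives will be bound to it.
    void Select(const std::string& action) { waiting_for_ = action; }

    // Feed every raw input event here while the menu is open.
    // Returns true if the event was consumed as a new binding.
    bool OnInput(const InputEvent& ev) {
        if (!waiting_for_) return false;
        bindings_[*waiting_for_] = ev;
        waiting_for_.reset();
        return true;
    }

    const std::map<std::string, InputEvent>& Bindings() const {
        return bindings_;
    }

private:
    std::optional<std::string> waiting_for_;
    std::map<std::string, InputEvent> bindings_;
};
```

Because the menu stores whatever the device actually sent rather than a key name typed by the user, nobody has to guess how a key is spelled.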

That would be easy. It’s basically how most games do it anyway. The problem, however, is that you want a screen that you can check the controls on, not rebind them on. That depends on keymap. Have you ever been told to push ~ only to find out that it’s actually ` for you or vice-versa?